I have seen these cases too. For the open tabs, don’t ask, but I have tested.

03.03.2026 01:05 —

👍 0

🔁 0

💬 0

📌 0

This is my understanding.

03.03.2026 00:57 —

👍 1

🔁 0

💬 1

📌 0

they are also tired and feel the pain

27.02.2026 23:23 —

👍 1

🔁 0

💬 0

📌 0

How Meta Executives Talked About Child Safety Behind the Scenes

For years, employees acknowledged a problem with potential child groomers, but prioritized growth over fixes.

Behind the scenes, “Meta was divided on whether protecting kids should take precedence over user growth and engagement. For years, the company only incrementally rolled out restrictive safety features, even as its own staff detailed the risks its platforms posed to children.”

27.02.2026 22:50 —

👍 91

🔁 45

💬 5

📌 4

Social media bans of kids and teens are NOT a solution. We need real, enforced governance and regulation of AI tech including recommenders, to protect people at every stage of life. We do NOT need to alienate a generation from (among other things) governance and regulations. 2/2

27.02.2026 06:35 —

👍 9

🔁 1

💬 1

📌 0

Embedding unreliable AI deep into the source code of the military without human oversight is the single stupidest thing we could do as a species.

And that is *exactly* what the United States is about to do.

26.02.2026 20:53 —

👍 232

🔁 88

💬 18

📌 11

"AI summary" tab of the article "Rethinking open source generative AI: open-washing and the EU AI Act". The tab has a disclaimer "AI-Generated Summary (Experimental)". Below the disclaimer it says "AI generated summary not available."

Partial screenshot of email to ACM. Full text of email:

Dear ACM Digital Library Board,

As an ACM author and as a professor and domain expert in AI, Language and Communication I write to you protesting the generation of "AI summaries" for ACM papers.

For one paper we have authored (link) the "summary" is mediocre and contains both basic factual problems and a dumbing down of the point of the article. I have submitted "feedback" on this but to summarise it, the AI text frequently misses the point and reads like an undergrad grasping at straws. It makes our work (which IS on generative AI, ironically) look mediocre, bland, and unprofessional.

More generally, I would argue this holds by nature of all generative AI summaries. These are textual artefacts designed (by means of reinforcement learning with human feedback) to be maximally plausible and palatable, but they cannot fundamentally serve as trustworthy summaries because they are not produced by a process in which truth or reliability plays a role. Hallucinations, as even work from OpenAI itself has shown, are an inevitable feature of the GPT technology, and therefore of the "summaries" produced by it.

That ACM should do this is especially worrying because scientific work shows us that (1) both scientists and the general public accord AI-generated content more credibility than it is owed; (2) AI text generation is resource-intensive and wasteful; (3) AI text generation is only possible because of the legally fraught practices of OpenAI, Meta and other model providers, who take human-produced texts without regard for consent or copyright and give us back slop; (4) the rising tide of AI slop, or synthetic text, is a major problem for our information ecology and scientific publishers should be working to solve it, not exacerbate it.

Update on ACM #genai: looks like they do act on requests to remove the dreadful ✨AI summary for individual papers: ours now says "AI generated summary not available".

FWIW, I objected to "basic factual problems" & said it "makes our work (...) look mediocre, bland, and unprofessional"

23.02.2026 10:43 —

👍 24

🔁 7

💬 2

📌 1

which would be hypothetically possible if their objections stemmed from knowing this particular boyfriend. why do they share both the objection and the tone?

23.02.2026 12:28 —

👍 1

🔁 0

💬 1

📌 0

I think it is in principle ok for an academic senior to point out practical and even hypothetical implications of a dedication. Regardless, everything else in both mails is way out of line. Still, why do these »senior academics« behave in almost exactly the same idiotic way?

23.02.2026 00:21 —

👍 0

🔁 0

💬 1

📌 0

Many are appropriately outraged by Altman’s comments here implying that raising a human child is akin to “training” an AI model.

This is part of a broader pattern where AI industry leaders use language that collapses the boundary between human and machine.

🧵/

22.02.2026 19:29 —

👍 490

🔁 200

💬 28

📌 22

Apologies, the previous post only refers to the book. This is the article that I think might be relevant:

Eric Gordon and Stephen Walter. Meaningful inefficiencies: Resisting the logic of technological efficiency in the design of civic systems.

22.02.2026 20:07 —

👍 1

🔁 0

💬 0

📌 0

Whilst I don’t understand the »create knowledge for themselves«, this may be somewhat relevant:

Glas, R., S. Lammes, M. de Lange, J. Raessens, and I. de Vries, eds. 2019. The Playful Citizen. Civic Engagement in a Mediatized Culture. Amsterdam: Amsterdam University Press. 10.5117/9789462984523/ch16

22.02.2026 19:37 —

👍 2

🔁 0

💬 1

📌 0

congratulations rümeysa

If you haven’t, please read this beautiful, horrible op-ed by her on the conditions in which she finished her PhD

www.theguardian.com/us-news/ng-i...

19.02.2026 20:34 —

👍 31

🔁 12

💬 0

📌 0

Towns that question the value of an unsafe, costly, environmentally unsound, poorly governed monorail could be ‘left behind’, says Monorail Salesman

18.02.2026 18:46 —

👍 102

🔁 32

💬 2

📌 2

It's Time to Rage Against the AI Music Machine

If AI music takes over "humans will begin to echo the machines, and there will be a downward spiral into slop."

"In 1999, I interviewed Prince for TIME and he told me to leave my tape recorder off because he didn’t trust what future technology might do with unauthorized recordings of his voice.

At the time, I thought Prince was being paranoid..."

time.com/7338205/rage...

11.02.2026 03:07 —

👍 2301

🔁 537

💬 17

📌 24

Beat me to it, felt very far off

17.02.2026 21:47 —

👍 1

🔁 0

💬 0

📌 0

I'm not changing the way I write for the sake of weak thinkers. I don't care

16.02.2026 21:00 —

👍 409

🔁 68

💬 12

📌 5

Haven't heard from you in a long time

15.02.2026 22:39 —

👍 2

🔁 0

💬 0

📌 0

Every academic article has a corresponding author whose email address is listed on the first page. If an article is paywalled, just send a short, polite email requesting a PDF from the author. 95% of the time you’ll receive it within a day. People like knowing their work is being read!

18.06.2024 09:11 —

👍 766

🔁 258

💬 20

📌 21

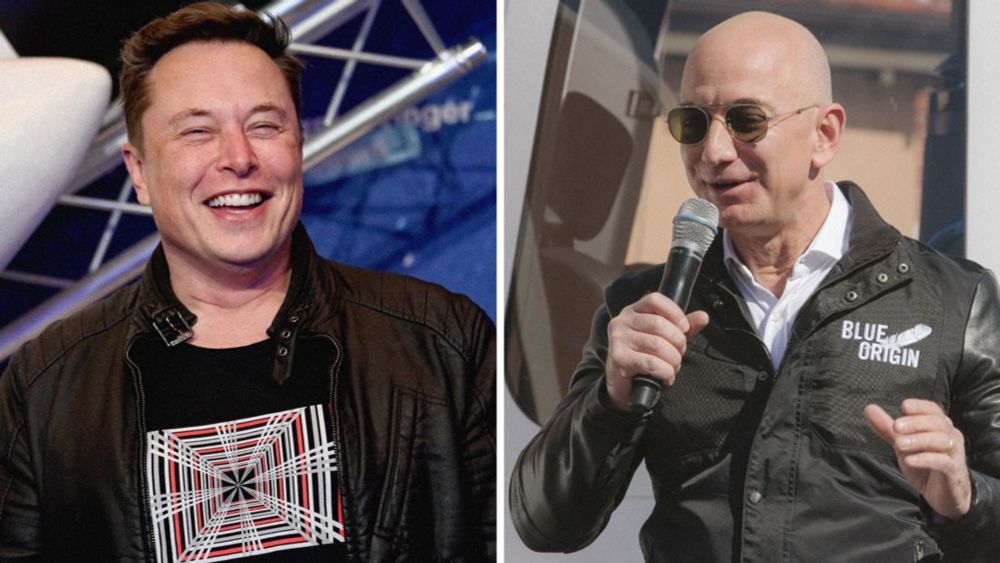

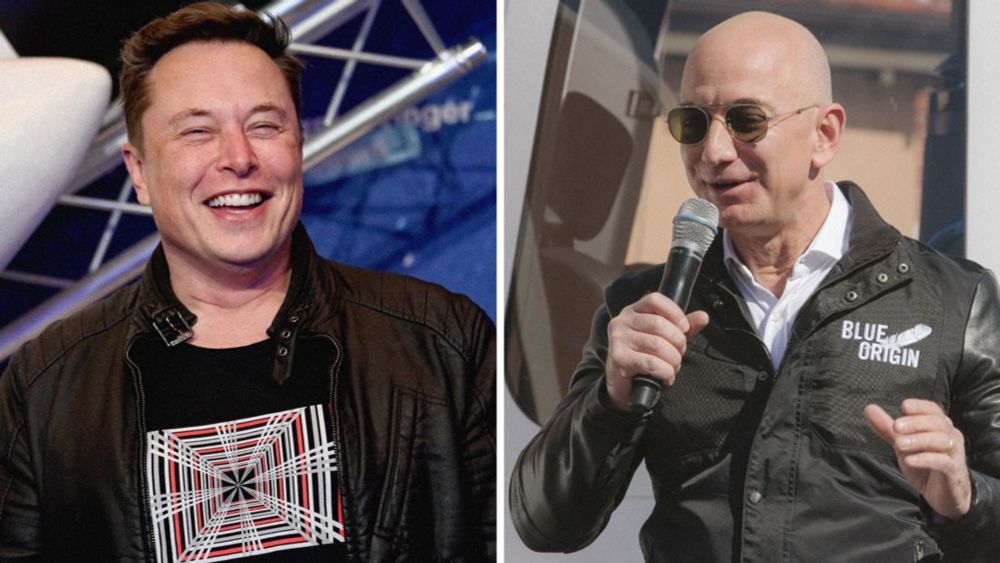

Billionaires Should Not Exist

Yes, every billionaire really is a policy failure.

When a single billionaire can accumulate more money in 10 seconds than their employees make in one year, while workers struggle to meet the basic cost of rent and medicine, then yes, every billionaire really is a policy failure.

Read our op-ed here ⤵️

23.01.2025 22:19 —

👍 28645

🔁 8496

💬 395

📌 1002

Eastern bluebird framed by berries and leaves

Eastern Bluebird.

This was taken 5.5 years ago, I'm not sure I'll ever take a better bird photo.

#birdoftheday

11.02.2026 16:21 —

👍 41

🔁 5

💬 3

📌 0

I think it's best for everyone to understand that the unified class project of billionaires right now is to do to white collar workers what globalization and neoliberalism did to blue collar workers.

04.02.2026 19:41 —

👍 12949

🔁 3434

💬 235

📌 242

it's ok if you want to mock them. the wider implication is that there are more researchers just like them, and they shouldn't feel embarrassed coming back from their delusional excursions.

23.01.2026 15:29 —

👍 0

🔁 0

💬 0

📌 1

Since this is making the rounds.

Acknowledging one’s own stupidity in public is brave and should be credited and not mocked.

What really gets me is that editors at Nature think it is a cautionary tale worth publishing.

23.01.2026 08:24 —

👍 4

🔁 0

💬 0

📌 2

Design Research: I cite what I (or my co-authors) can comprehend; but I also cite artworks sometimes.

21.01.2026 21:13 —

👍 1

🔁 0

💬 0

📌 0

the eating artist made international news and the police were involved, sounds like a fabulous master project

18.01.2026 23:59 —

👍 1

🔁 0

💬 0

📌 0

@acm.org, are you seriously requesting authors to fact-check the unasked-for AI-slop summaries of their work and then email individual revision requests? Apart from more free labour in your service, I fail to see benefits for authors.

Surely, there must be some kind of misunderstanding.

24.12.2025 21:38 —

👍 4

🔁 1

💬 0

📌 0