me trying to cut my ICML rebuttal down to <5000 characters

31.03.2025 12:17 — 👍 4 🔁 1 💬 0 📌 1

couldn’t agree more!

23.11.2024 17:05 — 👍 3 🔁 0 💬 0 📌 0

😂😂😂😂😂😂

20.11.2024 22:03 — 👍 1 🔁 0 💬 0 📌 0

If people knew how much of my PhD has consisted of reading about something new, referencing back to Elements of Statistical Learning, and simply writing down what I learned…

It feels like a cheat code!

Part 2: Why do boosted trees outperform deep learning on tabular data??

@alanjeffares.bsky.social & I suspected that answers to this are obfuscated by the 2 being considered very different algs🤔

Instead we show they are more similar than you’d think — making their diffs smaller but predictive!🧵1/n

language is always evolving but if we ever reach a definition of “cool” that includes regression smoothers we will know our species has lost its way

20.11.2024 08:40 — 👍 1 🔁 0 💬 1 📌 0

i’m too lazy to make a thinly-veiled self-promotion “starter pack”, so if you could all add me anyway that would be great…

19.11.2024 16:27 — 👍 7 🔁 0 💬 0 📌 0

i’m starting a meta grumpy list for people that are grumpy about this list

18.11.2024 22:12 — 👍 4 🔁 0 💬 1 📌 0

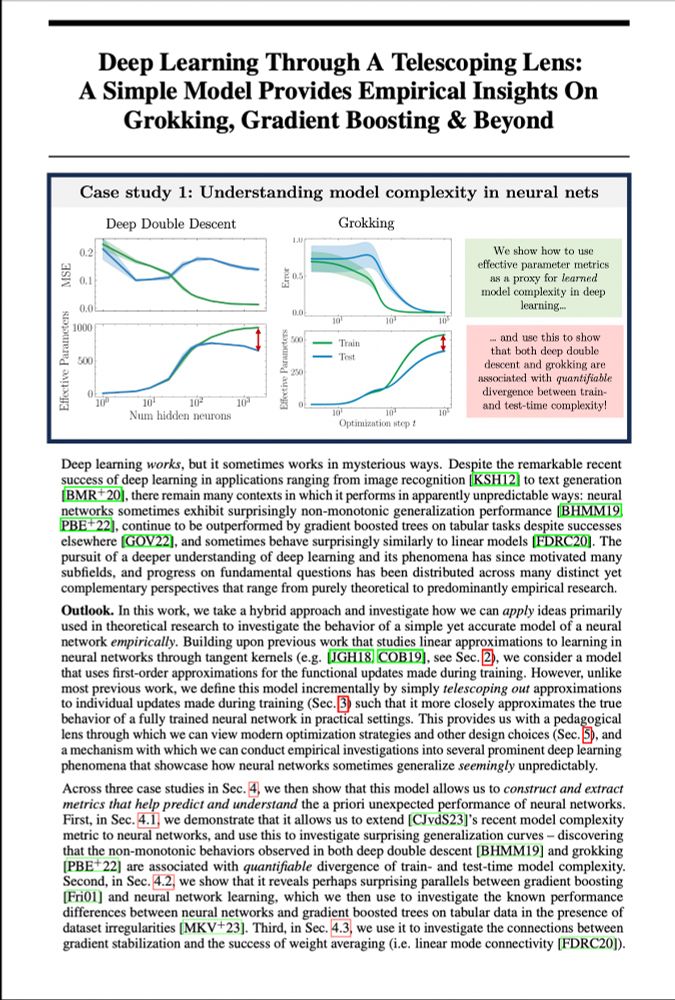

From double descent to grokking, deep learning sometimes works in unpredictable ways.. or does it?

For NeurIPS (my final PhD paper!), @alanjeffares.bsky.social & I explored if & how smart linearisation can help us better understand & predict numerous odd deep learning phenomena — and learned a lot..🧵1/n

and all of a sudden, my feed changed from musk and outrage to matrices and optimisers…

17.11.2024 17:27 — 👍 41 🔁 1 💬 0 📌 0