New paper! The Linear Representation Hypothesis is a powerful intuition for how language models work, but lacks formalization. We give a mathematical framework in which we can ask and answer a basic question: how many features can be stored under the hypothesis? 🧵 arxiv.org/abs/2602.11246

17.02.2026 16:37 —

👍 43

🔁 14

💬 1

📌 2

Title + abstract of the preprint

Excited to share a new preprint with @nkgarg.bsky.social, presenting usage statistics and observational findings from Paper Skygest's first six months of deployment! 🎉📜

arxiv.org/abs/2601.04253

14.01.2026 19:48 —

👍 147

🔁 45

💬 4

📌 4

New #NeurIPS2025 paper: how should we evaluate machine learning models without a large, labeled dataset? We introduce Semi-Supervised Model Evaluation (SSME), which uses labeled and unlabeled data to estimate performance! We find SSME is far more accurate than standard methods.

17.10.2025 16:29 —

👍 21

🔁 7

💬 1

📌 4

selfishly i wish we could keep divya in our lab forever but i guess it would be a disservice to the rest of the world 😅 she’s been such a wonderful mentor to me—i’ve learned a lot from how thoughtful, creative, and knowledgeable she is about everything. she’s also super funny and amazing at baking 🤭

14.10.2025 17:14 —

👍 6

🔁 1

💬 1

📌 0

CONGRATS this is so exciting!!!

04.07.2025 19:56 —

👍 1

🔁 0

💬 0

📌 0

aww thank you!!! you too for your best paper 😌🫶🏼

03.07.2025 13:14 —

👍 1

🔁 0

💬 0

📌 0

Ahh thank you! ☺️

27.06.2025 17:44 —

👍 1

🔁 0

💬 0

📌 0

I can’t believe I’m saying this: our work received a Best Paper Award at #CHIL2025!! So so excited and grateful 🥰 Looking forward to day 2 of the conference with these awesome people :)

27.06.2025 17:04 —

👍 17

🔁 2

💬 1

📌 1

Science and immigration cuts · Nikhil Garg

I wrote about science cuts and my family's immigration story as part of The McClintock Letters organized by @cornellasap.bsky.social. Haven't yet placed it in a Houston-based newspaper but hopefully it's useful here

gargnikhil.com/posts/202506...

16.06.2025 11:09 —

👍 26

🔁 6

💬 2

📌 0

A gif explaining the value of test-time augmentation (TTA) to conformal classification. The video begins with an illustration of TTA reducing the size of the predicted set of classes for a dog image, and goes on to explain that this is because TTA raises the true class's predicted probability, even when the classifier predicts that class to be unlikely.

New work 🎉: conformal classifiers return sets of classes for each example, with a probabilistic guarantee the true class is included. But these sets can be too large to be useful.

In our #CVPR2025 paper, we propose a method to make them more compact without sacrificing coverage.

14.06.2025 15:00 —

👍 22

🔁 6

💬 3

📌 1
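
To make the #CVPR2025 post above concrete: a minimal sketch of how a standard split-conformal classifier builds its prediction sets, assuming softmax probabilities and the common "one minus true-class probability" score. This is the textbook construction, not the test-time-augmentation method from the paper, and the names `conformal_threshold`, `prediction_set`, `cal_probs`, and `cal_labels` are hypothetical, as is the toy calibration data.

```python
import math

def conformal_threshold(cal_probs, cal_labels, alpha):
    """Split-conformal score threshold for target miscoverage level alpha."""
    # Nonconformity score: one minus the probability given to the true class.
    scores = sorted(1.0 - probs[label] for probs, label in zip(cal_probs, cal_labels))
    n = len(scores)
    # Finite-sample correction: take the ceil((n + 1) * (1 - alpha))-th smallest score.
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        # Too few calibration points for this alpha: include every class.
        return float("inf")
    return scores[k - 1]

def prediction_set(probs, threshold):
    """Indices of all classes whose score does not exceed the threshold."""
    return [c for c, p in enumerate(probs) if 1.0 - p <= threshold]

# Toy calibration set (illustrative values only): 3 classes, 7 labeled examples.
cal_probs = [
    [0.75, 0.125, 0.125],
    [0.5, 0.25, 0.25],
    [0.125, 0.75, 0.125],
    [0.25, 0.25, 0.5],
    [0.25, 0.5, 0.25],
    [0.875, 0.0625, 0.0625],
    [0.125, 0.125, 0.75],
]
cal_labels = [0, 0, 1, 2, 0, 0, 1]

threshold = conformal_threshold(cal_probs, cal_labels, alpha=0.25)
confident = prediction_set([0.75, 0.125, 0.125], threshold)  # sharp prediction, small set
uncertain = prediction_set([0.5, 0.25, 0.25], threshold)     # flat prediction, larger set
```

In this sketch, a flatter probability vector yields a larger set, which is the "too large to be useful" problem the post describes. Raising the probability assigned to the true class is what lets the sets shrink while keeping coverage.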

yay!! 🤩

06.05.2025 00:12 —

👍 2

🔁 0

💬 0

📌 0

I really enjoyed (and learned a LOT from) working on this project with these wonderful co-authors:

@dmshanmugam.bsky.social

Ashley Beecy

Gabriel Sayer

@destrin.bsky.social

@nkgarg.bsky.social

@emmapierson.bsky.social

7/7

01.05.2025 12:57 —

👍 5

🔁 1

💬 0

📌 0

Our work underscores the importance of accounting for health disparities; we lay a foundation for doing so with a method to (1) estimate disease severity in the presence of health disparities and (2) identify disparity patterns that can inform public health interventions. 6/

01.05.2025 12:57 —

👍 4

🔁 0

💬 1

📌 0

The interpretability and identifiability of our model also allow us to learn fine-grained descriptions of disparities. When fit on heart failure patient data from NewYork-Presbyterian, our model identifies groups that face each type of health disparity. 5/

01.05.2025 12:57 —

👍 3

🔁 0

💬 1

📌 0

We prove that *failing to* account for these disparities biases severity estimates. By jointly accounting for all three, our model more accurately recovers severity. Indeed, accounting for these disparities in real heart failure data does meaningfully shift severity estimates. 4/

01.05.2025 12:57 —

👍 3

🔁 0

💬 1

📌 0

We propose an interpretable disease progression model that captures 3 key disparities: certain patient groups may (1) start receiving care at higher disease severity levels, (2) experience faster disease progression, or (3) receive less frequent care conditional on severity. 3/

01.05.2025 12:57 —

👍 3

🔁 0

💬 1

📌 0

Disease progression models are often used to help healthcare providers diagnose and treat chronic diseases. But these models have historically failed to account for health disparities that bias the data they are trained on. 2/

01.05.2025 12:57 —

👍 3

🔁 0

💬 1

📌 0

I’m really excited to share the first paper of my PhD, “Learning Disease Progression Models That Capture Health Disparities” (accepted at #CHIL2025)! ✨ 1/

📄: arxiv.org/abs/2412.16406

01.05.2025 12:57 —

👍 36

🔁 10

💬 3

📌 7

My ‘woke DEI’ grant has been flagged for scrutiny. Where do I go from here?

My work in making artificial intelligence fair has been noticed by US officials intent on ending ‘class warfare propaganda’.

The US government recently flagged my scientific grant in its "woke DEI database". Many people have asked me what I will do.

My answer today in Nature.

We will not be cowed. We will keep using AI to build a fairer, healthier world.

www.nature.com/articles/d41...

25.04.2025 17:19 —

👍 40

🔁 13

💬 1

📌 1

check out the findings from our #dogathon 😍🐶 !!

02.04.2025 14:23 —

👍 7

🔁 0

💬 0

📌 0

Migration data lets us study responses to environmental disasters, social change patterns, policy impacts, etc. But public data is too coarse, obscuring these important phenomena!

We build MIGRATE: a dataset of yearly flows between 47 billion pairs of US Census Block Groups. 1/5

28.03.2025 15:25 —

👍 42

🔁 18

💬 5

📌 1

A screenshot of the abstract of the paper, detailing our findings that several multi-agent frameworks can be hijacked to enable a complete security breach.

Excited to announce a new preprint from my lab (with @rishi-jha.bsky.social and Vitaly Shmatikov; my first as a first author!) about severe security vulnerabilities in LLM-based multi-agent systems:

“Multi-Agent Systems Execute Arbitrary Malicious Code”

arxiv.org/abs/2503.12188

1/12

18.03.2025 15:23 —

👍 8

🔁 2

💬 1

📌 0

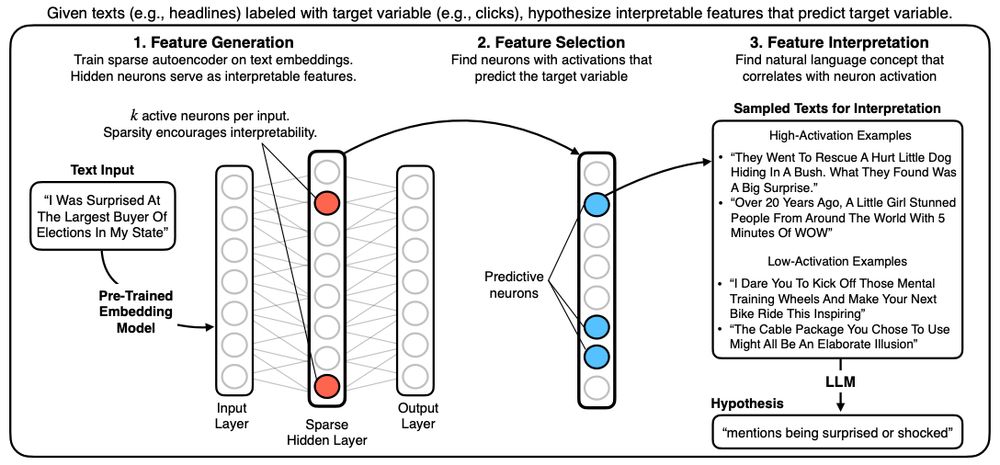

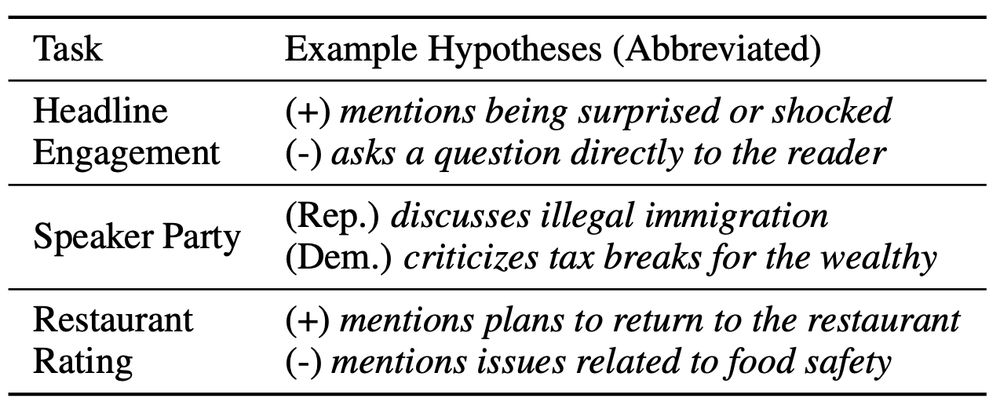

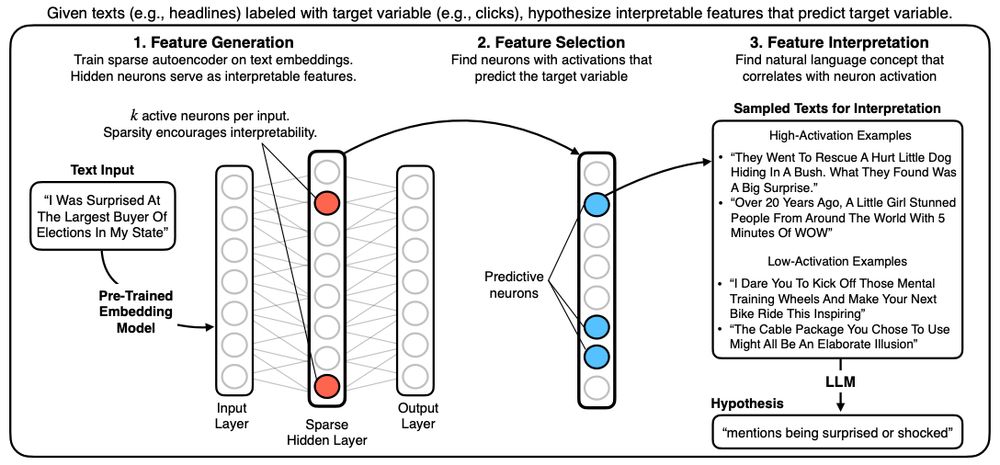

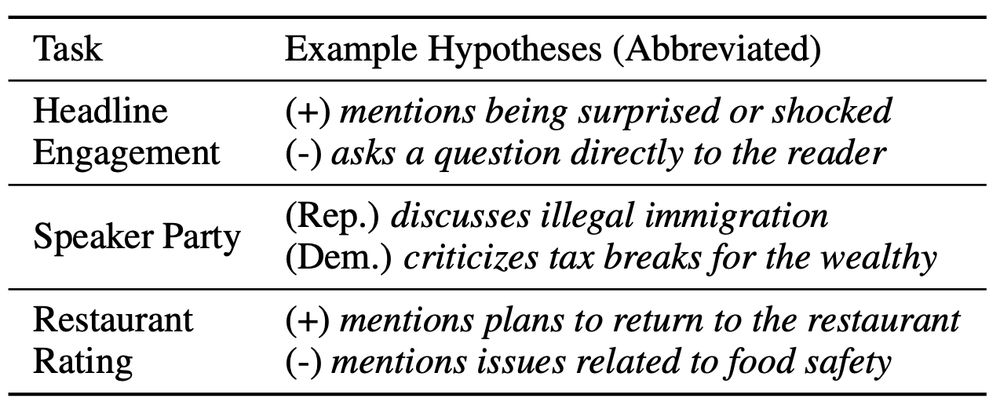

(1/n) New paper/code! Sparse Autoencoders for Hypothesis Generation

HypotheSAEs generates interpretable features of text data that predict a target variable: What features predict clicks from headlines / party from congressional speech / rating from Yelp review?

arxiv.org/abs/2502.04382

18.03.2025 15:29 —

👍 14

🔁 5

💬 1

📌 1

💡New preprint & Python package: We use sparse autoencoders to generate hypotheses from large text datasets.

Our method, HypotheSAEs, produces interpretable text features that predict a target variable, e.g. features in news headlines that predict engagement. 🧵1/

18.03.2025 15:17 —

👍 41

🔁 13

💬 1

📌 3

Please repost to get the word out! @nkgarg.bsky.social and I are excited to present a personalized feed for academics! It shows posts about papers from accounts you’re following: bsky.app/profile/pape...

10.03.2025 15:12 —

👍 164

🔁 117

💬 8

📌 13

I'm excited to use my first post here to introduce the first paper of my PhD, "User-item fairness tradeoffs in recommendations" (NeurIPS 2024)!

This is joint work with Sudalakshmee Chiniah and my advisor @nkgarg.bsky.social

Description/links below: 1/

11.12.2024 05:22 —

👍 14

🔁 2

💬 1

📌 1