Glad to see this work led by the talented @hannahmech.bsky.social (and also with @emilymyers.bsky.social and @reilly-coglab.com) is out in the world. It's been fun to be thinking in units beyond the sentence.

22.12.2025 14:14 —

👍 10

🔁 2

💬 0

📌 0

We liked your interview so much we have used it in a variety of studies including brain imaging and a method where we measure how pupils change over time (pupillometry). Thank you for doing such a good job altering people's brains!

22.12.2025 16:25 —

👍 0

🔁 0

💬 0

📌 0

Scientists scanned people in an fMRI as they listened to me talk about my book She Has Her Mother's Laugh. I altered their brains! (Or at least my semantics did...)

22.12.2025 15:55 —

👍 31

🔁 3

💬 2

📌 0

Self five!!

03.11.2025 13:45 —

👍 1

🔁 0

💬 0

📌 0

Why are we so good at adapting to "sub-optimal" speech? I argue, in this review paper, that adaptation may be driven by the combined action of dopamine and norepinephrine via prediction error signaling. It's early days with this idea, but I'm excited to have it out there and to keep exploring more!

06.10.2025 13:21 —

👍 9

🔁 3

💬 0

📌 0

Is there a difference? hahaha

02.09.2025 17:32 —

👍 1

🔁 0

💬 0

📌 0

Utterly - I never know what's going to happen next

02.09.2025 15:57 —

👍 1

🔁 0

💬 1

📌 0

So exciting!!!!

02.09.2025 14:47 —

👍 1

🔁 0

💬 1

📌 0

Awwwww Yeah -- it's official

01.09.2025 17:10 —

👍 49

🔁 7

💬 3

📌 0

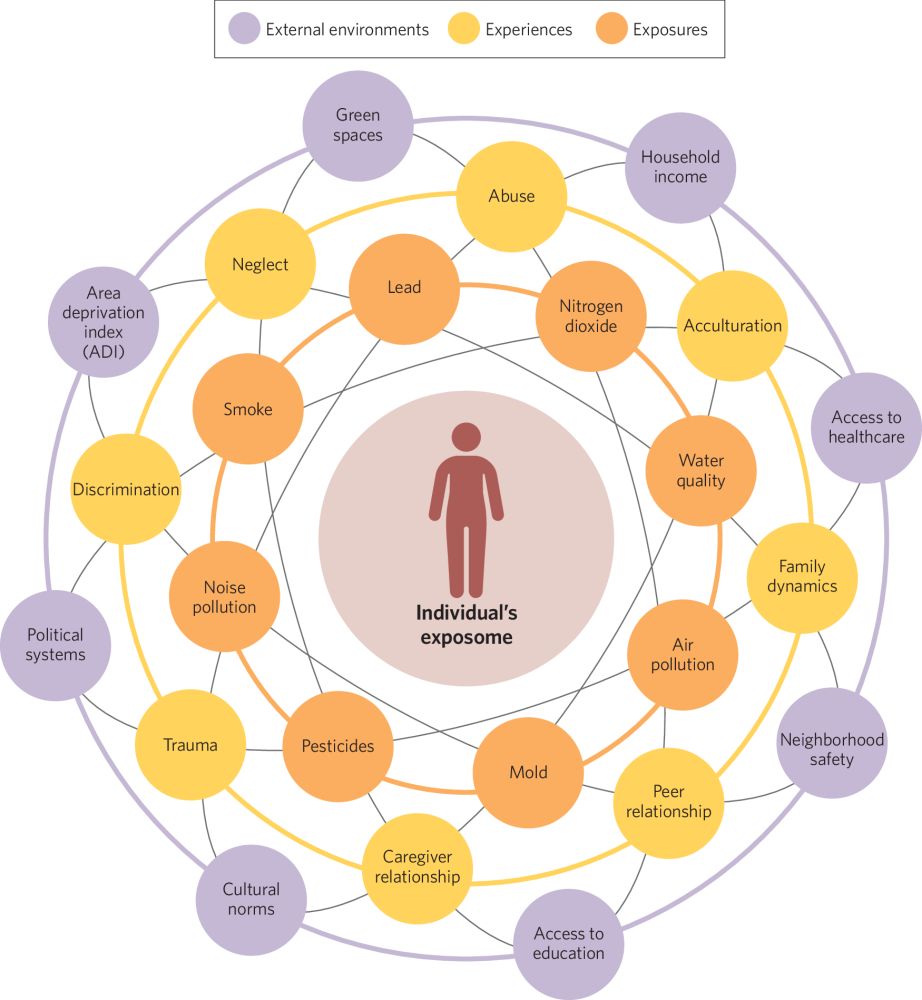

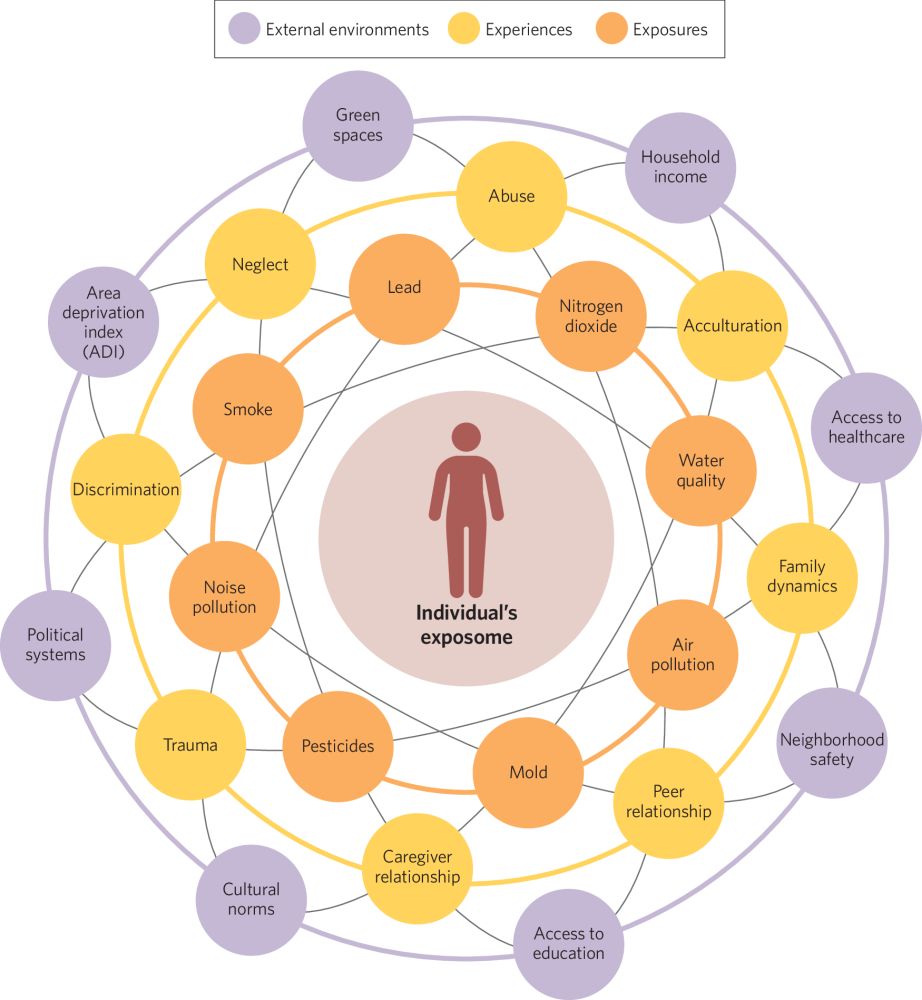

Neuropsychopharmacology - The effect of the “exposome” on developmental brain health and cognitive outcomes

Absolutely thrilled to share the 🌟 FIRST PAPER 🌟 from ACORN Lab! 🐿️ I’m beyond proud of all-star grad student @heatherarobinson.bsky.social for this review of “exposome” effects on neurodevelopment & cognition! Paper here rdcu.be/ezUqx & thread below 👇 /1

#neuroskyence #PsychSciSky #devsky #cogdev

08.08.2025 16:22 —

👍 70

🔁 33

💬 4

📌 1

Such a fun experience to travel down to Georgia and have our team of talented students show the summit attendees what the @langscistation.bsky.social is all about! It's been a few summers since I've had the pleasure of running studies with the LSS and it was lovely to reprise my role :)

23.07.2025 23:10 —

👍 4

🔁 0

💬 0

📌 0

Our conversation analysis software is now on CRAN! Reach out with any questions. We hope it’s useful for all you language and social interaction folks out there.

22.07.2025 18:44 —

👍 38

🔁 14

💬 1

📌 0

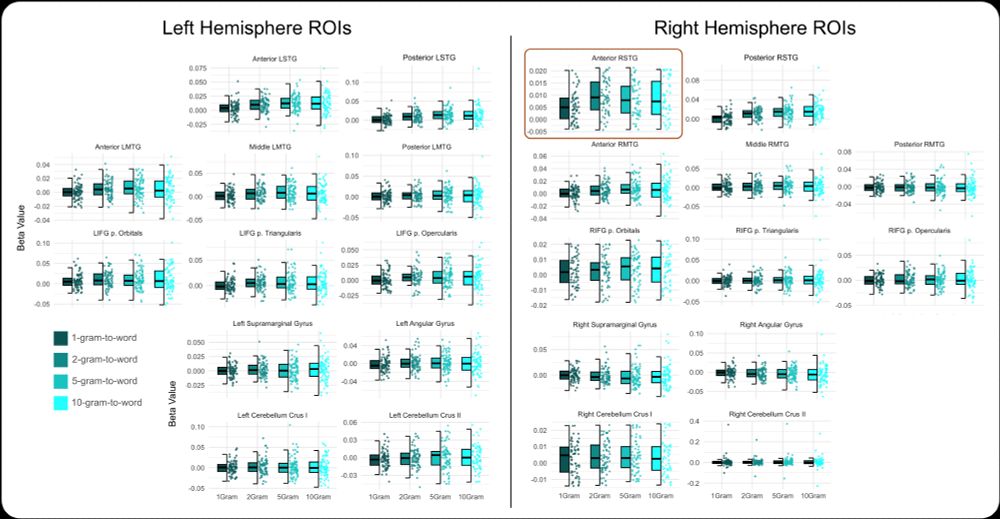

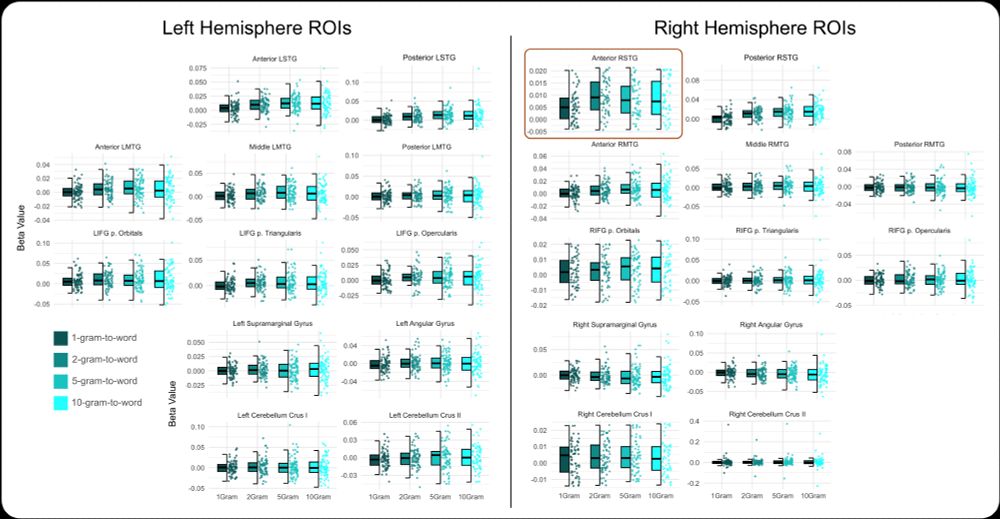

As you listen to a story, the meaning of each word you hear relates to the meaning of prior words. But how? We operationalized semantic distance, and identified brain regions that correspond to this rolling summary measure during naturalistic listening. #neuroscienceoflanguage

13.06.2025 21:48 —

👍 26

🔁 7

💬 0

📌 0

Thanks @ariellekeller.bsky.social, it was a true team effort!

13.06.2025 20:34 —

👍 0

🔁 0

💬 0

📌 0

HUGE props to @jamiereillycog.bsky.social (check out his super cool SemanticDistance package in R too: osf.io/preprints/ps...), @jpeelle.bsky.social, and @emilymyers.bsky.social for getting this project out into the world. It's neat stuff.

13.06.2025 20:33 —

👍 4

🔁 0

💬 0

📌 0

Plots showing activation patterns within right- and left-hemisphere ROIs for changes in the context window size of semantic distance. The right anterior STG is highlighted because it showed a statistically significant difference across the window sizes.

The results are interesting (if I am so biased as to say), maybe especially so because the anterior right temporal lobe was particularly sensitive to changes in context window size (so, from 2-gram to 10-gram) - perhaps suggesting a specialized role in semantic integration during listening.

13.06.2025 20:33 —

👍 1

🔁 0

💬 1

📌 0

Check out our preprint (linked again here: osf.io/preprints/ps...) where we measured neural sensitivity to changes in semantic space while listening to a podcast. We look not only at word-to-word changes but also at larger chunks (2-gram, 5-gram, 10-gram) to examine meaning construction at multiple levels.

13.06.2025 20:33 —

👍 33

🔁 6

💬 1

📌 2
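For readers who want a concrete picture of the windowed measure described in the preprint post above, here is a minimal sketch, not the authors' pipeline and not the API of the SemanticDistance R package. It assumes each word has an embedding vector and takes "semantic distance" to be the cosine distance between a word and the average of the `window` words before it. The toy three-dimensional vectors and the function names (`cosine_distance`, `mean_vector`, `rolling_semantic_distance`) are made up for illustration.

```python
import math


def cosine_distance(u, v):
    """Return 1 minus the cosine similarity of two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def mean_vector(vectors):
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def rolling_semantic_distance(vectors, window):
    """Distance between each word and the mean of the `window` words before it.

    Positions that do not yet have a full window of prior words get None.
    """
    distances = []
    for i, vec in enumerate(vectors):
        if i < window:
            distances.append(None)
        else:
            context = mean_vector(vectors[i - window:i])
            distances.append(cosine_distance(vec, context))
    return distances


# Hypothetical toy embeddings; real word vectors have hundreds of dimensions.
toy_embeddings = {
    "dog": [1.0, 0.0, 0.0],
    "cat": [0.9, 0.1, 0.0],
    "puppy": [0.95, 0.05, 0.0],
    "stock": [0.0, 0.0, 1.0],
}

words = ["dog", "cat", "puppy", "stock"]
vectors = [toy_embeddings[w] for w in words]

# A window of 2 stands in for the 2-gram context; 5 or 10 would work the same way
# on a longer transcript.
distances = rolling_semantic_distance(vectors, window=2)
for word, dist in zip(words, distances):
    print(word, dist)
```

In this toy case "puppy" sits close to the running context of "dog" and "cat", while "stock" lands far from it. Changing `window` is the knob that corresponds to the 2-gram, 5-gram, and 10-gram contexts mentioned in the thread.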

I can't believe we didn't think about that! Maybe we can get Starburst to sponsor??

06.05.2025 15:52 —

👍 1

🔁 0

💬 1

📌 0

In this new pub we ask whether small rewards (cents) might get people to learn the phonetic details of a talker more quickly (e.g., an s--sh shift). Answer: probably not. The fact that we got to title the paper "Cents and Shenshibility" was just a bonus. Explainer below!

06.05.2025 15:08 —

👍 29

🔁 6

💬 3

📌 0

Alt: A woman with curly hair is sitting in a chair and saying "money please."

This work is reasonably exciting (to us) as it suggests that this process is relatively insulated from external influence – which raises the question: why? Food for future study, and we hope this work inspires renewed interest in the role of attention and reward in talker-specific phonetic learning.

06.05.2025 13:52 —

👍 3

🔁 0

💬 2

📌 0

Plots of the pooled results for three post hoc analyses summarizing over phonetic categorization data from multiple experiments reported here. For all, the x-axis depicts the acoustic energy as the proportion of /s/ energy, proceeding from more /ʃ/-like responses on the left to more /s/-like responses on the right-hand side. The y-axis plots the mean of participant /s/ responses. Error bars depict the standard error of the mean. Bias direction is indicated by the color of the line, with red indicating the /s/-biased talker and blue indicating the /ʃ/-biased talker. Panel A) Post hoc analysis comparing all experiments with a high- and low-reward talker to assess whether the magnitude of the bias effect differs with the value of the talker. Panel B) Post hoc analysis comparing experiments with and without reward components to test whether tasks with reward make the bias effect smaller. Panel C) Post hoc analysis comparing the magnitude of the bias effect after either the talker-decision cover task or the phoneme-monitoring cover task during exposure to examine any task-related differences in phonetic recalibration.

(5/) We then followed up with several alterations: what if we reward at the level of the phoneme instead of at the level of the talker? What if listeners know the value of the reward before they hear the ambiguous word? Results converge on the possibility that reward does not influence phonetic recalibration.

06.05.2025 13:52 —

👍 2

🔁 0

💬 2

📌 0

Results from the phonetic categorization task from Experiment 1A (left column) and Experiment 1B (right column). The red lines indicate categorization for the /s/-biased talker and the blue lines indicate categorization for the /ʃ/-biased talker. On the x-axis, we plot the acoustic energy as the proportion of /s/ energy, proceeding from more /ʃ/-like responses on the left to more /s/-like responses on the right-hand side. Shaded gray regions indicate the range depicted in Fig. 5. Rewarded Talker is indicated by line type: solid for high-reward talker and dashed for low-reward talker.

(4/) In the first experiment, using a probabilistic reward schedule, we found that listeners learned just as much about the high-reward talker as they did about the low-reward talker, suggesting that perhaps external value may not factor into talker-specific phonetic recalibration. Curious.

06.05.2025 13:52 —

👍 2

🔁 0

💬 1

📌 0

Schematic of the experiment task structure. Panel A) All experiments had the same task progression. In the exposure phase, participants heard tokens from both talkers, presented in an interleaved fashion. The exposure task varied slightly for each experimental question (Panel B). After the exposure task, participants immediately completed a phonetic categorization task which was blocked by talker; talker order was counterbalanced across participants. The phonetic categorization task was a 2AFC decision where participants indicated by button press whether a given token on a 7-point continuum from “sign” to “shine” was more like “sign” or like “shine.” Panel B) Variants on the exposure task by experimental question. Experiments 1A and 1B used a talker decision task where participants indicated whether the talker was male or female and received feedback and a reward (points that corresponded to a small monetary payout) for correct answers on a subset of the trials. Experiments 2A, 2B, and 3 transitioned to a phoneme monitoring task where participants indicated whether the auditory word contained the “n” phoneme or not. Again, they received feedback and a reward for a subset of correct responses. Experiment 3 used the same phoneme monitoring task but added a reward cue prior to the auditory stimulus that indicated the value of the talker. After the phoneme monitoring response, participants also received feedback.

(3/) In our adaptation of a multitalker LGPL paradigm (randomly intermixed exposure then talker-specific test), we added a small monetary reward following correct trials more often for one talker vs the other. We then measured the amount of learning via a test for each talker. So, what did we find?

06.05.2025 13:52 —

👍 2

🔁 0

💬 1

📌 0

Alt: A close-up of Tyrion Lannister from Game of Thrones with the words "I don't care about your life."

(2/) We wanted to know if listeners might prioritize learning for one talker that was literally rewarded more often than another: something we might experience in real life. Might we learn more about the speech for those we value? And learn less about the speech for talkers that we value less?

06.05.2025 13:52 —

👍 2

🔁 0

💬 1

📌 0

Annual meeting of the Society for the Neurobiology of Language

September 12-14 2025

Gallaudet University

Abstract submission through April 29

neurolang.org

The Society for the Neurobiology of Language meeting will be at Gallaudet University, September 12-14th. We have 4 outstanding keynotes (Fumiko Hoeft, Duane Watson, Carol Padden, Fatemeh Geranmayeh).

Abstract submissions are open! Hope to see you there! #SNL2025

2025.neurolang.org/abstract-sub...

15.04.2025 18:25 —

👍 33

🔁 16

💬 2

📌 1

Thank you for the shout-out! We have an open-source model (osf.io/db79q/) for anyone who wants to start a lab digest -- it leads your writers through all the skills they need to learn about public science communication! Stay tuned for our 10th issue that will be released soon!

08.04.2025 12:01 —

👍 7

🔁 2

💬 1

📌 0