arxiv.org/abs/2602.07519

14.02.2026 10:30 —

👍 5

🔁 3

💬 0

📌 0

Also, including me.

10.02.2026 19:14 —

👍 3

🔁 2

💬 1

📌 0

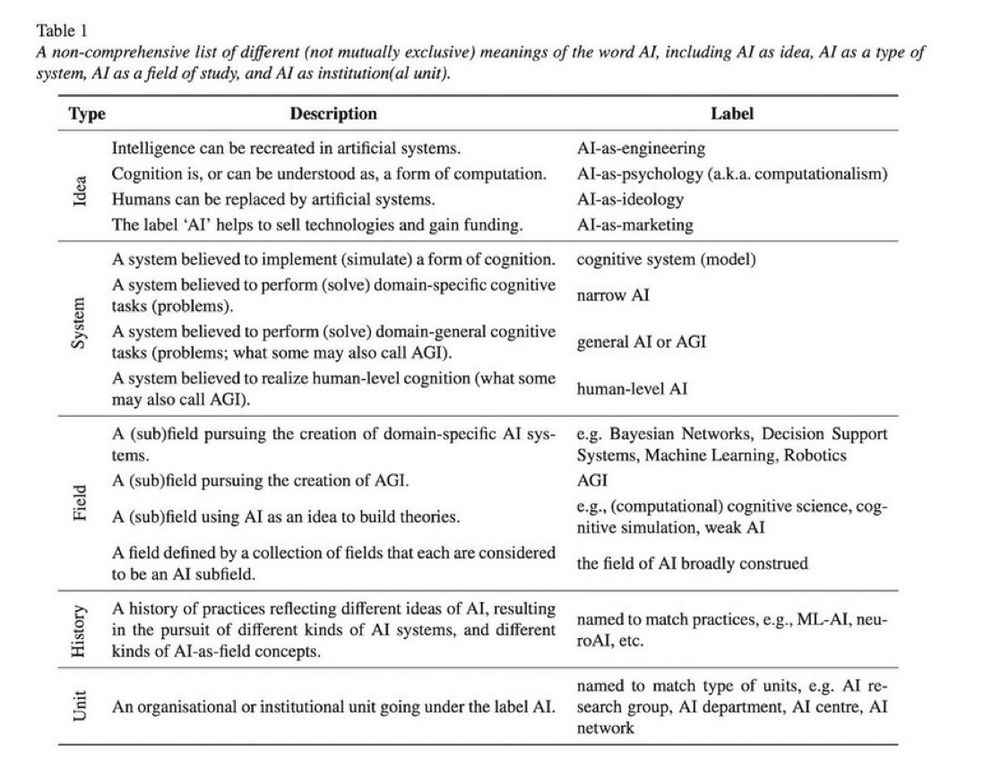

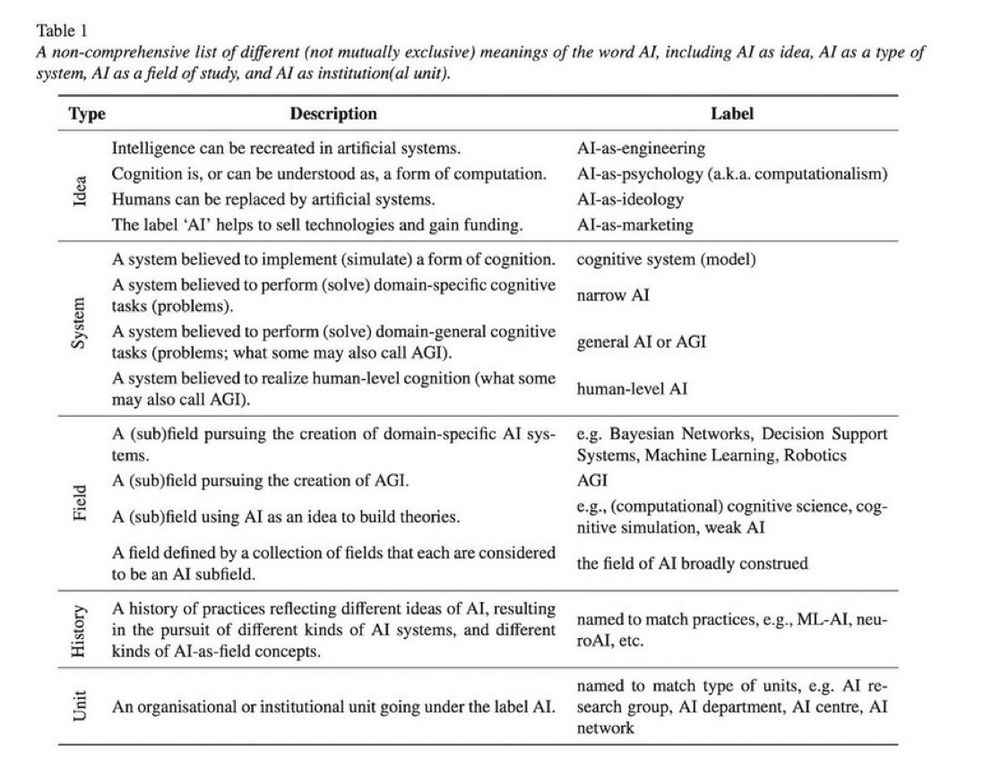

Table 1

A non-comprehensive list of different (not mutually exclusive) meanings of the word AI, including AI as idea, AI as a type of system, AI as a field of study, and AI as institution(al unit).

"The term ‘Artificial Intelligence’ (AI) means many things to many people (see Table 1). (...) One meaning of ‘AI’ that seems often forgotten these days is one that played a crucial role in the birth of cognitive science as an interdiscipline in the 1970s and ’80s." 2/n

16.08.2024 19:42 —

👍 190

🔁 47

💬 7

📌 12

This is NOT what AI was about

"What can researchers do if they suspect that their manuscripts have been peer reviewed using artificial intelligence (AI)?"

02.12.2025 19:43 —

👍 2

🔁 1

💬 0

📌 0

This -> "We have deprived the youth of the joys of discovery, the thrill to feel yourself close to an answer, and the wonderful, rewarding feeling of a moment of lucidity after hard work."

01.12.2025 09:15 —

👍 11

🔁 4

💬 0

📌 0

Scripto_Asinine or sound minds?

Perhaps of interest to some of you.

"Quick societal adoption of tools often reflects a demand driven by business interests or culture. Reflecting on generative AI, especially Large Language Models, I believe there is a strong lifestyle generation component that nurtures..

cal-r.org/mondragon/S8...

30.11.2025 15:44 —

👍 7

🔁 5

💬 0

📌 2

EU set to water down landmark AI act after Big Tech pressure

Commission proposes pauses to provisions in digital rule book

Surprise, surprise. Here we go.

“The European Commission is proposing a pause to parts of its landmark artificial intelligence laws amid intense pressure from Big Tech companies and the US government.”

www.ft.com/content/af6c...

07.11.2025 14:03 —

👍 21

🔁 16

💬 4

📌 4

YouTube video by librebel

Against the Uncritical Adoption of AI Technologies in Academia by Guest et al. (2025)

✨ This is wonderful 🎬 🍿

Librebel on Youtube reads out our position paper:

Guest, O., Suarez, … & van Rooij, I. (2025). Against the Uncritical Adoption of 'AI' Technologies in Academia. Zenodo. doi.org/10.5281/zeno...

www.youtube.com/watch?v=cJNO... @olivia.science @marentierra.bsky.social

08.11.2025 00:22 —

👍 39

🔁 15

💬 2

📌 1

AI Is Hollowing Out Higher Education

Olivia Guest & Iris van Rooij urge teachers and scholars to reject tools that commodify learning, deskill students, and promote illiteracy.

“Ultimately, the collective strategy of AI companies threatens to deskill precisely those people who are essential for society to function(…) automation of knowledge and culture by private companies is a worrying prospect – conjuring dystopian and outright fascistic scenarios.” — @olivia.science

17.10.2025 22:51 —

👍 438

🔁 223

💬 6

📌 26

Abstract: Under the banner of progress, products have been uncritically adopted or even imposed on users — in past centuries with tobacco and combustion engines, and in the 21st with social media. For these collective blunders, we now regret our involvement or apathy as scientists, and society struggles to put the genie back in the bottle. Currently, we are similarly entangled with artificial intelligence (AI) technology. For example, software updates are rolled out seamlessly and non-consensually, Microsoft Office is bundled with chatbots, and we, our students, and our employers have had no say, as it is not considered a valid position to reject AI technologies in our teaching and research. This is why in June 2025, we co-authored an Open Letter calling on our employers to reverse and rethink their stance on uncritically adopting AI technologies. In this position piece, we expound on why universities must take their role seriously to a) counter the technology industry’s marketing, hype, and harm; and to b) safeguard higher education, critical thinking, expertise, academic freedom, and scientific integrity. We include pointers to relevant work to further inform our colleagues.

Figure 1. A cartoon set theoretic view on various terms (see Table 1) used when discussing the superset AI (black outline, hatched background): LLMs are in orange; ANNs are in magenta; generative models are in blue; and finally, chatbots are in green. Where these intersect, the colours reflect that, e.g. generative adversarial network (GAN) and Boltzmann machine (BM) models are in the purple subset because they are both generative and ANNs. In the case of proprietary closed source models, e.g. OpenAI’s ChatGPT and Apple’s Siri, we cannot verify their implementation and so academics can only make educated guesses (cf. Dingemanse 2025). Undefined terms used above: BERT (Devlin et al. 2019); AlexNet (Krizhevsky et al. 2017); A.L.I.C.E. (Wallace 2009); ELIZA (Weizenbaum 1966); Jabberwacky (Twist 2003); linear discriminant analysis (LDA); quadratic discriminant analysis (QDA).

Table 1. Below some of the typical terminological disarray is untangled. Importantly, none of these terms are orthogonal nor do they exclusively pick out the types of products we may wish to critique or proscribe.

Protecting the Ecosystem of Human Knowledge: Five Principles

Finally! 🤩 Our position piece: Against the Uncritical Adoption of 'AI' Technologies in Academia:

doi.org/10.5281/zeno...

We unpick the tech industry’s marketing, hype, & harm; and we argue for safeguarding higher education, critical thinking, expertise, academic freedom, & scientific integrity.

1/n

06.09.2025 08:13 —

👍 3776

🔁 1889

💬 110

📌 389

Some pictures of this enlightening event organised by @hcid.city and @citai.bsky.social

05.09.2025 19:14 —

👍 3

🔁 1

💬 1

📌 0

I am pleased to announce that this paper has been accepted for publication in Artificial Intelligence (AIJ)! 😊

arxiv.org/abs/2310.01536

11.08.2025 13:43 —

👍 8

🔁 4

💬 1

📌 1

YouTube video by CSER Cambridge

Professor Margaret Boden - Human-level AI: Is it Looming or Illusory?

Did you all know that Margaret Boden founded the first School of Cognitive and Computing Sciences in 1987?

No?

Well, now you do 😌

Btw, listen to this great talk by her. I'll be adding this to the resources for our 1st year students in Intro to AI.

www.youtube.com/watch?v=wPRA...

01.09.2024 19:21 —

👍 86

🔁 26

💬 9

📌 3

YouTube video by Future of Intelligence

The Margaret Boden Lecture - Lecture One by Professor Margaret Boden (Sussex)

Inaugural Lecture of the Margaret Boden Lecture series at the Leverhulme Centre for the Future of Intelligence

www.youtube.com/watch?v=zNr2...

28.07.2025 16:18 —

👍 4

🔁 4

💬 0

📌 0

Those who were lucky to know her personally can only share her defence of the university as the place for fundamental and speculative research and debate and her commitment to challenge powers-that-be.

🌸

2/2

28.07.2025 15:58 —

👍 1

🔁 0

💬 0

📌 0

Obituary: Professor Maggie Boden

The University of Sussex mourns the loss of Professor Margaret (Maggie) Boden, a pioneering figure in cognitive science and artificial intelligence.

Sad news. RIP Maggie Boden.

Maggie was an AI pioneer and a truly interdisciplinary scholar, integrating in her work philosophy, psychology, and computer science - worth emphasising in an era of massive, data-hungry GenAI architectures.

1/n

staff.sussex.ac.uk/news/article...

28.07.2025 15:57 —

👍 1

🔁 0

💬 1

📌 0

24.07.2025 14:51 —

👍 2

🔁 2

💬 0

📌 0

Comics & AI: Critical Prompts

A multidisciplinary conference on the future of comics, technology, and creativity

You are invited to a one-day multidisciplinary conference on the future of comics, technology, and creativity. Abstracts (200 words) + bios (100 words) due: 10 July 2025. Don’t miss this opportunity to rethink comics and AI with a multidisciplinary community! #CFP: comicsandai.org #ComicsStudies

15.05.2025 06:07 —

👍 17

🔁 13

💬 2

📌 4

12.05.2025 17:29 —

👍 2

🔁 0

💬 0

📌 0

CitAI-Seminars

Website of the Artificial Research Centre, CitAI. Seminars.

#CitAI_Seminars

Tuesday, 22-04 (Zoom, 16:00 BST) Marin Lujak Artificial Intelligence Research Group on

'Scalable, efficient and distributed multi-agent coordination of automated agricultural vehicle fleets'

If interested, pls get in touch with the organisers.

More info cit-ai.net/seminars.html

11.04.2025 14:23 —

👍 0

🔁 0

💬 0

📌 0

The AI Continent Action Plan

The European Union is committed and determined to become a global leader in Artificial Intelligence, a leading AI continent.

The EU has just announced a plan to establish Europe’s leadership in AI.

digital-strategy.ec.europa.eu/en/library/a...

Five key points:

1. Creating a solid computing infrastructure: strengthening the network of AI Factories and establishing resource-efficient Gigafactories

1/n

09.04.2025 13:50 —

👍 8

🔁 2

💬 1

📌 0