I have never wanted to align my professional growth-hacking roadmap with an institutional 360 mission statement for accelerated personal abundance as much as I do now.

11.09.2025 16:00 —

Civil Servants On Trains, Running LLMs

22.07.2025 17:13 —

“GovGPT, please book me a return train from Leeds to London, with tube travel to Canary Wharf for next Wednesday. Make sure the booking adheres to civil service travel cost policy.”

<New Mexico data centre bursts into flames>

22.07.2025 16:48 —

That's pretty much exactly what I would expect a card-carrying member of the Campaign for Real Liquid Knowledge to say.

22.07.2025 08:47 —

Of course, it's only "Liquid Knowledge" if it's from the Connaissance-Liquide region of France. Otherwise it's just sparkling public sector transformation.

21.07.2025 12:03 —

🔥🔥🔥

04.06.2025 10:22 —

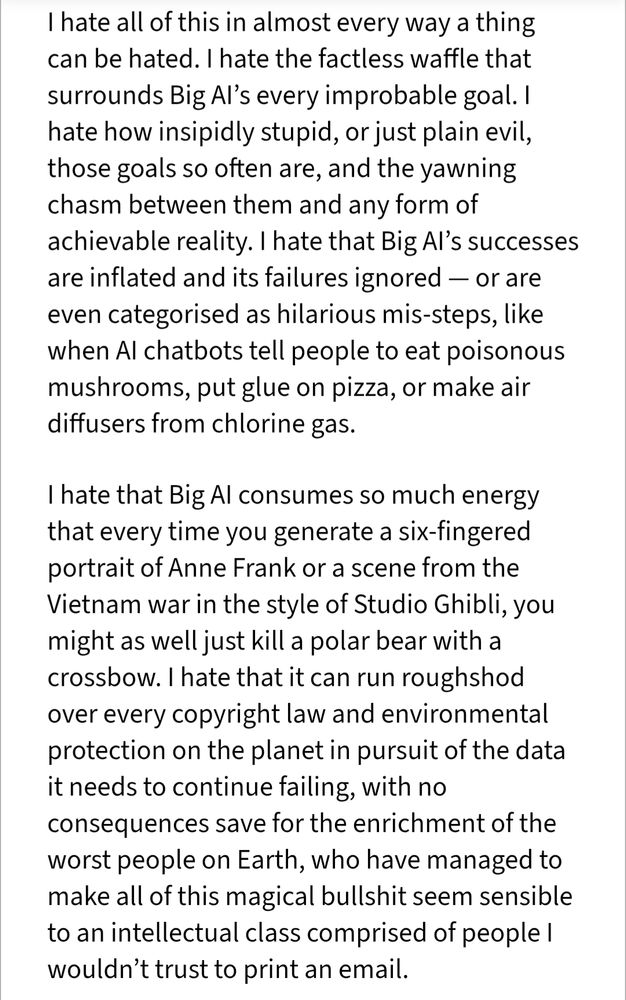

I hate all of this in almost every way a thing can be hated. I hate the factless waffle that surrounds Big AI’s every improbable goal. I hate how insipidly stupid, or just plain evil, those goals so often are, and the yawning chasm between them and any form of achievable reality. I hate that Big AI’s successes are inflated and its failures ignored — or are even categorised as hilarious mis-steps, like when AI chatbots tell people to eat poisonous mushrooms, put glue on pizza, or make air diffusers from chlorine gas.

I hate that Big AI consumes so much energy that every time you generate a six-fingered portrait of Anne Frank or a scene from the Vietnam war in the style of Studio Ghibli, you might as well just kill a polar bear with a crossbow. I hate that it can run roughshod over every copyright law and environmental protection on the planet in pursuit of the data it needs to continue failing, with no consequences save for the enrichment of the worst people on Earth, who have managed to make all of this magical bullshit seem sensible to an intellectual class comprised of people I wouldn’t trust to print an email.

On hatred.

05.04.2025 12:11 —

This week I have been marking my five-year COVID anniversary by... testing positive for COVID. Lightest dose I've had so far, but it's still knocked me for six.

It's not so much the immediate impact of course, but the long-term & cumulative effects that concern me.

Worst anniversary re-issue ever.

21.03.2025 10:40 —

Back in 2020 we were using your paper on algorithmic injustices in our cross-government data ethics courses at the Data Science Campus at the ONS in the UK (basically training up UK government data scientists). I'll see if I can find some refs.

15.03.2025 14:49 —

A giant redwood tree viewed from the base of the trunk looking straight upwards.

They even have Californian redwoods! I have many fond memories of playing in sequoia forests as a child. Even now, so many years later, when I close my eyes and think of trees, this is what I picture.

14.03.2025 11:46 —

An open gate in the middle of a huge stone wall, with a red tree to the left of the gate and a bush to the right. Through the gate you can see a pathway lined with plants and a stone building in the distance.

I think I found my new Happy Place this week. It's Green Week here at TCD, and the Trinity College Botanic Garden celebrated National Tree Week with a tree trail. The Botanic Garden is off-campus and I'd never been before, but it's amazing.

So peaceful. Much trees.

14.03.2025 11:41 —

Labour’s escalating claims around the potential use of AI in government are born out of relatable constraints and welcome ambition for Britain but

We are deeply concerned that in an effort to increase efficiency and find savings, the UK could end up wasting £100s of millions of public money in failed projects. This risks damaging public services - and ultimately holding Britain back - rather than taking the opportunity to make them fit for the future.

It might sound like a silver bullet but we can’t just shoehorn AI into existing services.

Here are 10 questions that we believe the Government needs to demonstrate it has answers to before signing away millions of pounds of taxpayers’ money with no break clause on the contracts if things go wrong.

Yesterday a group of us put our heads together to think about the questions that should be asked of Starmer's plans for AI in government - you might have seen this nod to it in Politico this morning. These are for journalists, politicians, and anyone with an interest in pro-democracy tech (1/3)

14.03.2025 09:35 —

Out for a group dinner in Dublin seated beside a work colleague from the US who had just arrived that morning.

Me: how was your flight?

Him: Not too bad, I'm a pretty good pilot.

Me:

Me:

My brain: I have nothing more I can add to this conversation, you're on your own.

Me: That's nice

10.03.2025 12:20 —

Reminder: if you haven’t yet read “The Mythical Man Month,” buy two copies so you can read it faster.

06.03.2025 06:57 —

7 Conclusion

We surveyed 319 knowledge workers who use GenAI tools (e.g., ChatGPT, Copilot) at work at least once per week, to model how they enact critical thinking when using GenAI tools, and how GenAI affects their perceived effort of thinking critically. Analysing 936 real-world GenAI tool use examples our participants shared, we find that knowledge workers engage in critical thinking primarily to ensure the quality of their work, e.g. by verifying outputs against external sources. Moreover, while GenAI can improve worker efficiency, it can inhibit critical engagement with work and can potentially lead to long-term overreliance on the tool and diminished skill for independent problem-solving. Higher confidence in GenAI’s ability to perform a task is related to less critical thinking effort. When using GenAI tools, the effort invested in critical thinking shifts from information gathering to information verification; from problem-solving to AI response integration; and from task execution to task stewardship. Knowledge workers face new challenges in critical thinking as they incorporate GenAI into their knowledge workflows. To that end, our work suggests that GenAI tools need to be designed to support knowledge workers’ critical thinking by addressing their awareness, motivation, and ability barriers.

The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers www.microsoft.com/en-us/resear...

using genAI reduces a person’s cognitive efforts and critical thinking, findings from Microsoft Research

05.03.2025 20:06 —

Memorandum of Understanding between UK and Anthropic on AI opportunities

Well this is 🍿 for anyone trying to work out what is happening in UK AI policy www.gov.uk/government/p...

14.02.2025 08:41 —

Of course it's only a Vibes-Based Estimate if it's from the Vibrac region of France, otherwise it's just sparkling anecdotal science.

11.02.2025 09:55 —

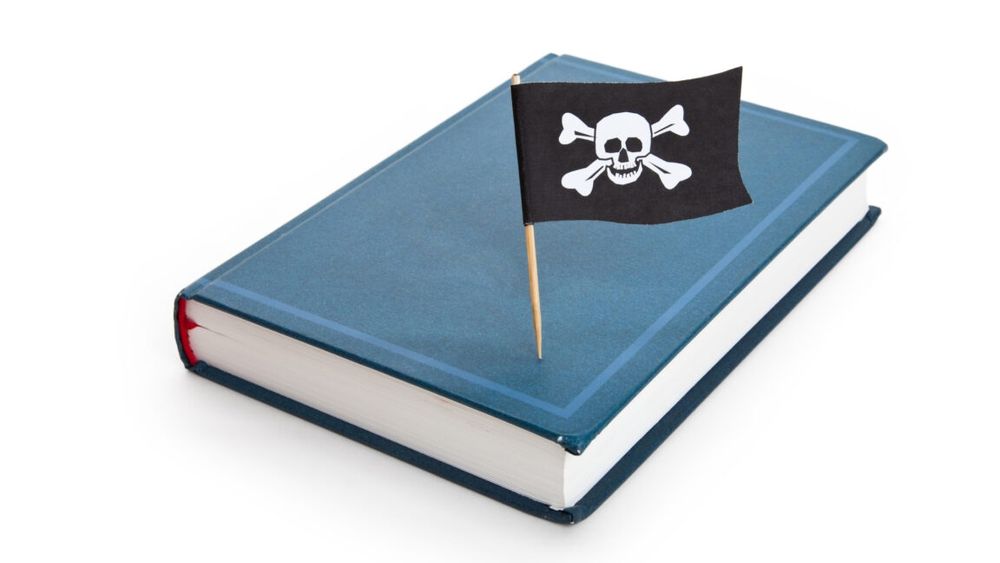

“Torrenting from a corporate laptop doesn’t feel right”: Meta emails unsealed

Meta’s alleged torrenting and seeding of pirated books complicates copyright case.

Meta torrented "at least 81.7 terabytes of data across multiple shadow libraries through the site Anna’s Archive, including at least 35.7 terabytes of data from Z-Library and LibGen" arstechnica.com/tech-policy/...

06.02.2025 22:35 —

Like it or not, this absolutely changes the security and threat prevention model for digital government. Every worst-case scenario conjured by civil society orgs and rights advocates - the things people say will never happen - is unfolding at pace. Relying on lawful benevolence is not enough.

05.02.2025 21:01 —

With Google removing their Responsible AI Principles, they no longer state that they will *not* engage in "Technologies whose purpose contravenes widely accepted principles of international law and human rights".

Concerns about surveillance and injury are also erased.

ai.google/responsibili...

04.02.2025 19:58 —

![Screenshot of a Financial Times article: OpenAI says it has found evidence that DeepSeek used its proprietary models to train an open-source competitor through “distillation”.](https://cdn.bsky.app/img/feed_thumbnail/plain/did:plc:26hkfmtr7jrchexamh7hk6rz/bafkreicsgga7q6qtz6xcrc4xl4sucq4wgcyrlojh4roufwiyj3tcon5wka@jpeg)

OpenAI says it has found evidence that Chinese artificial intelligence start-up DeepSeek used the US company’s proprietary models to train its own open-source competitor, as concerns grow over a potential breach of intellectual property.

The San Francisco-based ChatGPT maker told the Financial Times it had seen some evidence of “distillation”, which it suspects to be from DeepSeek.

The technique is used by developers to obtain better performance on smaller models by using outputs from larger, more capable ones, allowing them to achieve similar results on specific tasks at a much lower cost.

Distillation is a common practice in the industry but the concern was that DeepSeek may be doing it to build its own rival model, which is a breach of OpenAI’s terms of service.

“The issue is when you [take it out of the platform and] are doing it to create your own model for your own purposes,” said one person close to OpenAI.

beyond parody www.ft.com/content/a0df...

29.01.2025 11:15 —

Addendum: whether DeepSeek or OpenAI, there's no such thing as AGI and attempting to build it results in harm.

See our paper.

27.01.2025 19:44 —

This whole thread is worth reading, but just to note that DeepSeek should prompt the UK government to revisit the AI Opportunities Action Plan strategy of building out a huge domestic compute capability, especially where it comes at the cost of Net Zero and housing priorities.

27.01.2025 18:33 —

Screenshot of the Google Docs generative AI agent offering me the options Rephrase, Shorten, Elaborate, and More formal.

Holy moly. I'm trying to write an academic paper, and nearly every application I'm using is not only offering Generative AI as an option for writing, but *pushing it* -- pervading the design to the point where a simple misclick would make my content AI-generated. Here's why that's a problem. 🧵

27.01.2025 18:38 —

Understanding DOGE as Procurement Capture - Anil Dash

A blog about making culture. Since 1999.

Missed this when it was posted. Worth considering in the UK Gov AI push - always ask yourself who is impacted by all the "AI efficiencies", and who benefits financially. anildash.com/2025/01/04/D...

26.01.2025 19:13 —

The structural reason big tech and major VCs are funding AI to such a degree is to undermine labor, period. It is no accident that the single most effective task genAI does is coding — the highest paid, most powerful job in tech. It *is* possible to build worker-owned/centric, consensual AI, though.

26.01.2025 18:39 —

![Screenshot of a Financial Times article: OpenAI says it has found evidence that DeepSeek used its proprietary models to train an open-source competitor through “distillation”.](https://cdn.bsky.app/img/feed_thumbnail/plain/did:plc:26hkfmtr7jrchexamh7hk6rz/bafkreicsgga7q6qtz6xcrc4xl4sucq4wgcyrlojh4roufwiyj3tcon5wka@jpeg)