Out now in @nathumbehav.nature.com! We applied graph-theoretic analyses to fMRI data from participants watching movies or listening to stories. Integration across large-scale functional networks mediates the arousal-dependent enhancement of narrative memories. Open access link: rdcu.be/eKKAw

13.10.2025 15:55 —
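
Graph-theoretic work often quantifies "integration across large-scale functional networks" with the participation coefficient, a standard node-level integration measure. The sketch below computes it as a rough illustration. It is an assumption for illustration only: the thread does not say which graph metric, thresholding, or network parcellation the paper used, and the function name and toy graph are hypothetical.

```python
import numpy as np


def participation_coefficient(adj: np.ndarray, modules: np.ndarray) -> np.ndarray:
    """Participation coefficient of each node in a weighted, undirected graph.

    P_i = 1 - sum_s (k_is / k_i)^2, where k_is is node i's summed edge weight to
    nodes in module s (e.g., a large-scale network) and k_i is its total strength.
    P_i is 0 when all of a node's edges stay in one module and grows as its edges
    spread across modules. Isolated nodes are assigned 0.
    """
    adj = np.asarray(adj, dtype=float)
    modules = np.asarray(modules)
    strength = adj.sum(axis=1)
    safe = np.where(strength > 0, strength, 1.0)  # avoid dividing by zero for isolated nodes
    within_sq = np.zeros(adj.shape[0])
    for m in np.unique(modules):
        k_is = adj[:, modules == m].sum(axis=1)  # each node's edge weight into module m
        within_sq += (k_is / safe) ** 2
    return np.where(strength > 0, 1.0 - within_sq, 0.0)


if __name__ == "__main__":
    # Hypothetical 4-node chain 0-1-2-3, with nodes {0, 1} and {2, 3} in two modules.
    toy_adj = np.array([[0, 1, 0, 0], [1, 0, 1, 0], [0, 1, 0, 1], [0, 0, 1, 0]], dtype=float)
    print(participation_coefficient(toy_adj, np.array([0, 0, 1, 1])))
```

In the toy graph, the two middle nodes split their edges evenly between modules, so they score higher than the end nodes, whose edges stay within their own module. A whole-brain "integration" summary could average such values over nodes, but that choice is likewise an assumption here.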

I'm also deeply grateful to everyone who offered insightful feedback along the way: the BLRB community at UChicago, the joint CogNeuro meeting at Yale, the lab of @esfinn.bsky.social, and many others. I'd also like to thank Emma Megla and Wilma Bainbridge for sharing the aphantasia data! (10/10)

20.08.2025 13:53 —

HUGE thank you to everyone whose incredible efforts over the past three years made this project possible! @tchamberlain.bsky.social, @hayoungsong.bsky.social, @annacorriveau.bsky.social, @zz112.bsky.social, Taysha Martinez, Laura Sams, Marvin Chun, @ycleong.bsky.social, and @monicarosenb.bsky.social (9/10)

20.08.2025 13:53 —

Together, our findings show that ongoing thoughts at rest are reflected in brain dynamics and that these network patterns predict everyday cognition and experiences.

Our work underscores the crucial role of subjective in-scanner experiences in understanding functional brain organization and behavior. (8/10)

20.08.2025 13:53 —

Neuromarkers of these thoughts further generalized to Human Connectome Project (HCP) data (N=908), where decoded thought patterns predicted positive vs. negative trait-level measures of individual differences. This suggests that links between rsFC and behavior may partly reflect differences in ongoing thoughts. (7/10)

20.08.2025 13:53 —

Moreover, the model predicting whether people are thinking in images distinguished an aphantasic individual (who lacks visual imagery) from their otherwise identical twin. Data from academic.oup.com/cercor/artic.... (6/10)

20.08.2025 13:53 —

Thought models generalized beyond self-report, predicting non-introspective markers such as pupil size, the linguistic sentiment of speech, and the strength of a sustained attention network (Rosenberg et al., 2016, 2020). (5/10)

20.08.2025 13:53 —

How are these thoughts related to resting-state functional connectivity (rsFC) patterns? We found that similarity in ongoing thoughts tracks similarity in rsFC patterns within and across individuals, and that both thought ratings and topics could be reliably decoded from rsFC. (4/10)

20.08.2025 13:53 —

We observed remarkable idiosyncrasy in ongoing thoughts across individuals and over time, both in self-reported ratings and in the content and topics of thoughts. (3/10)

20.08.2025 13:53 —

In our “annotated rest” task, 60 individuals rested and, after each 30-s rest period, verbally described and rated their ongoing thoughts. (2/10)

20.08.2025 13:53 —

Ongoing thoughts at rest reflect functional brain organization and behavior

Resting-state functional connectivity (rsFC), brain connectivity observed when people rest with no external tasks, predicts individual differences in behavior. Yet, rest is not idle; it involves streams...

New preprint! 🧠

Our minds wander at rest. By periodically probing ongoing thoughts during resting-state fMRI, we show that these thoughts are reflected in brain network dynamics and contribute to pervasive links between functional brain architecture and everyday behavior. (1/10)

doi.org/10.1101/2025...

20.08.2025 13:53 —

I’m thrilled to announce that I will start as a Presidential Assistant Professor in Neuroscience at City University of Hong Kong in January 2026!

I have RA, PhD, and postdoc positions available! Come work with me on neural network models + experiments on human memory!

RT appreciated!

(1/5)

08.05.2025 01:16 —

Feeling fortunate that #SANS2025 was in Chicago and that so much of the lab could be part of the meeting! It's crazy how much the lab has grown over the past 3 years and 10 months, and I'm so proud of the work we are doing together! Happy that we could host the lab and alums (and surprise guests)!

#CASNL@SANS

27.04.2025 05:02 —

To learn more about this dataset and the neural dynamics of narrative insight, check out our recent work (preprint below) led by the amazing @hayoungsong.bsky.social, and chat with her on Saturday, 1:50–3:00 PM! Poster ID: P3-B-30.

www.biorxiv.org/content/10.1...

24.04.2025 21:00 —

Curious how the human brain updates social impressions in a naturalistic setting? We scanned participants watching This Is Us and found that sudden shifts in neural patterns at moments of comprehension insight reflect impression updating. Come and chat Friday 4:15–5:15pm at #SANS2025, Poster P2-G-69.

24.04.2025 21:00 —

Accelerated learning of a noninvasive human brain-computer interface via manifold geometry

Brain-computer interfaces (BCIs) promise to restore and enhance a wide range of human capabilities. However, a barrier to the adoption of BCIs is how long it can take users to learn to control them. W...

New preprint! Excited to share our latest work, “Accelerated learning of a noninvasive human brain-computer interface via manifold geometry,” featuring outstanding former undergraduate Chandra Fincke, @glajoie.bsky.social, @krishnaswamylab.bsky.social, and @wutsaiyale.bsky.social's Nick Turk-Browne (1/8)

03.04.2025 23:04 —

Many thanks to Janice Chen, @lukejchang.bsky.social, and @asieh.bsky.social for open-sourcing the movie datasets! We also want to give a shoutout to the UChicago MRIRC for helping us collect the North by Northwest data. (9/9)

17.04.2025 15:41 —

GitHub - jinke828/AffectPrediction

We have made our model and analysis scripts publicly available so that other researchers can decode moment-to-moment emotional arousal in novel datasets, providing a new tool for probing affective experience with fMRI. (8/9)

github.com/jinke828/Aff...

17.04.2025 15:41 —

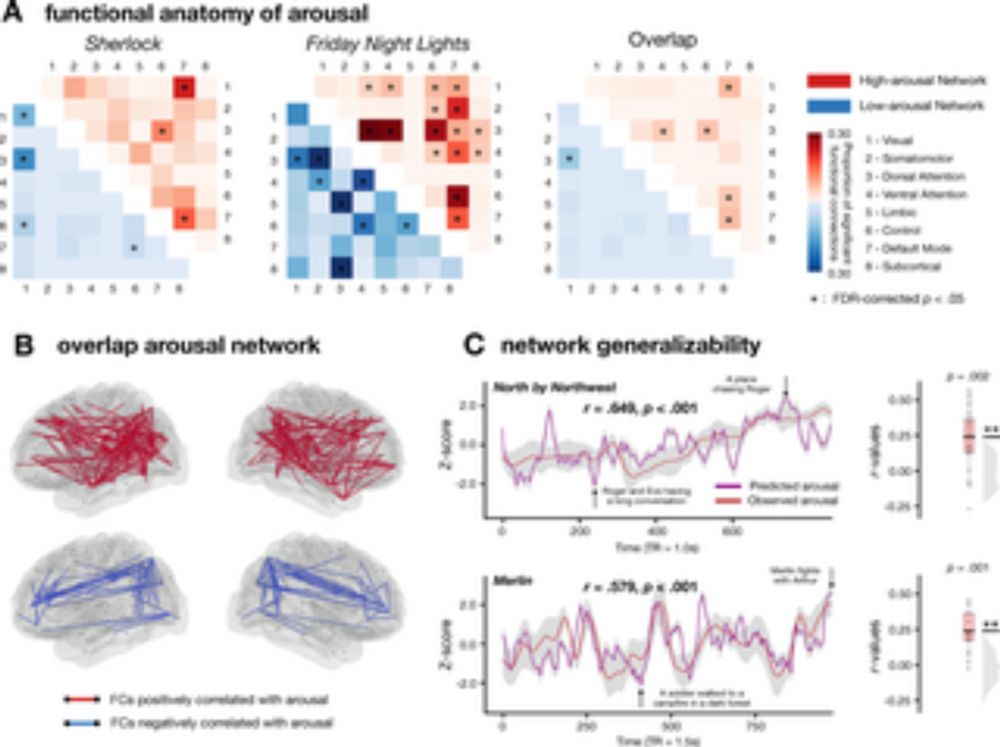

In conclusion, our findings reveal a generalizable representation of emotional arousal embedded in patterns of dynamic functional connectivity, suggesting a common underlying neural signature of emotional arousal across individuals and situational contexts. (7/9)

17.04.2025 15:41 —

In contrast, using the same computational modeling approach, we could not find a generalizable neural representation of valence in functional connectivity. Null results are inherently difficult to interpret, but we offer several possible explanations in our paper. (6/9)

17.04.2025 15:41 —

The network generalized to two additional, novel movies, where model-predicted arousal time courses tracked the plot of each movie. This suggests a methodological tool for researchers who want continuous measures of arousal without collecting additional human ratings. (5/9)

17.04.2025 15:41 —

This generalizable arousal network is encoded in interactions among multiple large-scale functional networks, including the default mode, dorsal attention, ventral attention, and frontoparietal networks. (4/9)

17.04.2025 15:41 —

We observed robust out-of-sample generalization of the arousal models across movie datasets that differed in low-level features, characters, narratives, and genre, suggesting a situation-general neural representation of arousal. (3/9)

17.04.2025 15:41 —

One possibility is that there are neural patterns associated with valence or arousal that generalize across contexts and individuals. We used open movie-watching fMRI datasets to build predictive models of moment-to-moment valence and arousal from functional correlations in brain activity. (2/9)

17.04.2025 15:41 —
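
Sliding-window Pearson correlation between regional time series is one widely used way to get moment-to-moment "functional correlations in brain activity" (dynamic functional connectivity). The minimal sketch below shows it. The window length, step size, function name, and toy data are illustrative assumptions. They are not the parameters or code used in the paper, whose actual pipeline is in the authors' repository.

```python
import numpy as np


def sliding_window_fc(ts: np.ndarray, window: int, step: int = 1) -> np.ndarray:
    """Sliding-window (dynamic) functional connectivity.

    ts: array of shape (n_timepoints, n_regions), one column per brain region.
    Returns shape (n_windows, n_edges): the Pearson correlation of every region
    pair (upper triangle, diagonal excluded) within each window.
    """
    n_t, n_r = ts.shape
    if not 2 <= window <= n_t:
        raise ValueError("window must be between 2 and the number of timepoints")
    edges = np.triu_indices(n_r, k=1)  # each unique region pair once
    starts = range(0, n_t - window + 1, step)
    # np.corrcoef treats rows as variables, so transpose each window to (regions, time).
    return np.array([np.corrcoef(ts[s:s + window].T)[edges] for s in starts])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    toy_ts = rng.standard_normal((100, 5))  # hypothetical: 100 TRs, 5 regions
    dfc = sliding_window_fc(toy_ts, window=20, step=5)
    print(dfc.shape)  # (17, 10): 17 windows x 10 region pairs
```

Each output row is one window's connectivity pattern. In a predictive-modeling setup, those rows would serve as features regressed against the valence or arousal ratings for the same windows, with the model then tested on held-out movies. The choice of regression model is left open here.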

From the excitement of reuniting with a long-lost friend to the anger of being treated unfairly, our daily lives are colored by diverse affective experiences. Despite this remarkable diversity, is there an intrinsic similarity in how the brain represents these experiences? (1/9)

17.04.2025 15:41 —

Remember what your partner said during a heated argument? Or the rush of getting your first job offer? Why do these emotionally arousing moments stick? Across 3 studies and 3 arousal measures, we found that emotional arousal enhances memory encoding by promoting functional integration in the 🧠 1/🧵

14.03.2025 17:05 —

Attention here! We found individual-differences and fMRI evidence that sustained attention is more closely related to long-term memory than to attentional control. With the best team: @monicarosenb.bsky.social, @edvogel.bsky.social, @annacorriveau.bsky.social, @jinke.bsky.social

14.03.2025 16:12 —
