[Qualitative Researchers]

We are researching how qualitative researchers are using and/or thinking about AI in their research pipeline.

If you are a qualitative researcher and can spare some minutes, please answer this survey: cornell.ca1.qualtrics.com/jfe/form/SV_...

#AI #QualitativeResearch

27.10.2025 19:50 —

👍 0

🔁 2

💬 0

📌 0

I think it is more broadly applicable! The question is whether the ratio of interpretation effort to predictive payoff still makes sense. I think it would take judicious application of the characteristics, as it may be a little harder to reason about them for different system classes.

03.02.2026 01:33 —

👍 1

🔁 0

💬 0

📌 0

babe wake up, lindsay popowski just dropped a new paper 🔥

02.02.2026 19:01 —

👍 1

🔁 1

💬 0

📌 0

and yeah, data is a huge piece of the puzzle! We’re using “algorithm” in the colloquial sense to describe the whole sociotechnical system that users experience and form theories around, so data is a critical part of that

30.01.2026 20:12 —

👍 1

🔁 0

💬 1

📌 0

Definitely agree! Alignment was a tricky choice of terminology because of how overloaded the word is but we wanted to make the point about individual priors/perspectives on different concepts (in contrast to accuracy).

30.01.2026 20:11 —

👍 1

🔁 0

💬 1

📌 0

Empirical evidence that people can build highly predictive mental models of even complex AIs, if and only if the algorithm fulfills three criteria:

30.01.2026 02:20 —

👍 12

🔁 3

💬 0

📌 0

This work was done with an amazing team! Thanks to my collaborators and advisors: @helenasresearch.bsky.social, Ignacio Javier Fernandez, Chijioke Chinaza Mgbahurike, Ralf Herbrich, Jeff Hancock, @mbernst.bsky.social

It'll get presented this fall at #CSCW2026 but should be out sooner in PACM HCI

29.01.2026 18:49 —

👍 5

🔁 0

💬 0

📌 0

Alignment is the factor with the greatest flexibility, perhaps because people are used to errors in their algorithms.

Users are willing to tolerate relatively high error rates if a single obvious feature carries more signal than noise, e.g., if the like count is highly correlated with the algorithm's ranking.

29.01.2026 18:49 —

👍 5

🔁 0

💬 1

📌 0

When users cannot explain an algorithm with one clear idea, they often juggle multiple (incorrect) mental models.

Compactness becomes a kind of crumple zone: by vaguely combining additional factors, users avoid being clearly proven wrong.

29.01.2026 18:49 —

👍 4

🔁 0

💬 1

📌 0

These properties also shape which mental models users deploy when reasoning about algorithm behavior.

Users default to incorporating highly available engagement signals into their mental models regardless of whether they are present in the underlying algorithm.

29.01.2026 18:49 —

👍 3

🔁 0

💬 1

📌 0

A bar plot of prediction accuracy by number of ACA criteria met. The bar for fully ACA algorithms is around 85%, while the bars for all other configurations range from about 45% to 55%. There is no apparent difference between algorithms meeting 2/3 ACA criteria and those meeting 1/3 or 0/3.

We find that users predict algorithm behavior accurately if and only if all three properties hold, regardless of algorithmic complexity.

For non-compliant algorithms, prediction accuracy falls to around chance.

29.01.2026 18:49 —

👍 3

🔁 0

💬 1

📌 0

We test this theory in a large pre-registered study (N=1250) where participants predicted the behavior of 25 social media feed-ranking algorithms systematically varying the ACA properties, ranging from single-feature rankings to complex, LLM-based systems.

29.01.2026 18:49 —

👍 4

🔁 0

💬 1

📌 0

The core proposition: If an algorithm satisfies all three conditions (Available, Compact, Aligned), then a person will be able to accurately predict its behavior, even if the algorithm is complex. Factors that improve or worsen each characteristic are shown. For available: visible features and concepts users are predisposed to notice help; hidden and hard-to-extract features hurt. For compact: a single concept, or many that can be united into one, helps; combining multiple divergent concepts hurts. For aligned: high accuracy of the underlying algorithm and unambiguous concepts help; low accuracy or concepts subject to disagreement hurt.

Three factors matter here: (cognitive) availability means the algorithm is built on concepts users already reason with, (conceptual) compactness means those concepts cohere to a single construct, and alignment means people and the algorithm apply the concept in similar ways.

29.01.2026 18:49 —

👍 6

🔁 1

💬 1

📌 0

It is tempting to say that unpredictability is inevitable as algorithms grow more complex, in our social media feeds and beyond.

But that intuition fails. Predictability hinges instead on whether users can faithfully translate an algorithm’s behavior into a usable mental model.

29.01.2026 18:49 —

👍 3

🔁 0

💬 1

📌 0

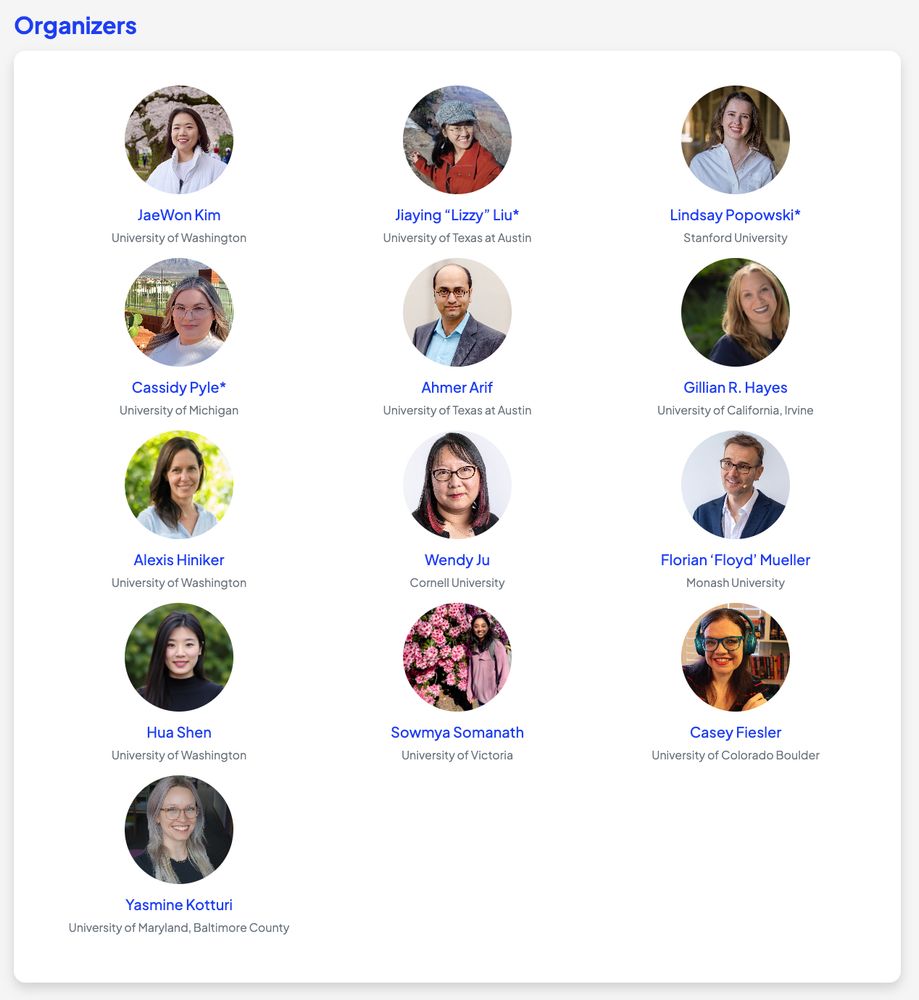

A search for factors for algorithm understanding results in multiple terms displayed as documents, including available, compact, and aligned. These are shown to be necessary and sufficient. Other, similar terms are shown in the background faded, like intuitive, rule-based, grounded, modular, linear, decomposable, accurate, symbolic, causal, and personalized.

Is the only way we can create algorithms that people understand to make them trivially simple? We argue, no.

People can predict the behavior of algorithms that are arbitrarily complex, if and only if they are available, compact and aligned.

arxiv.org/abs/2601.18966

29.01.2026 18:49 —

👍 38

🔁 10

💬 2

📌 3

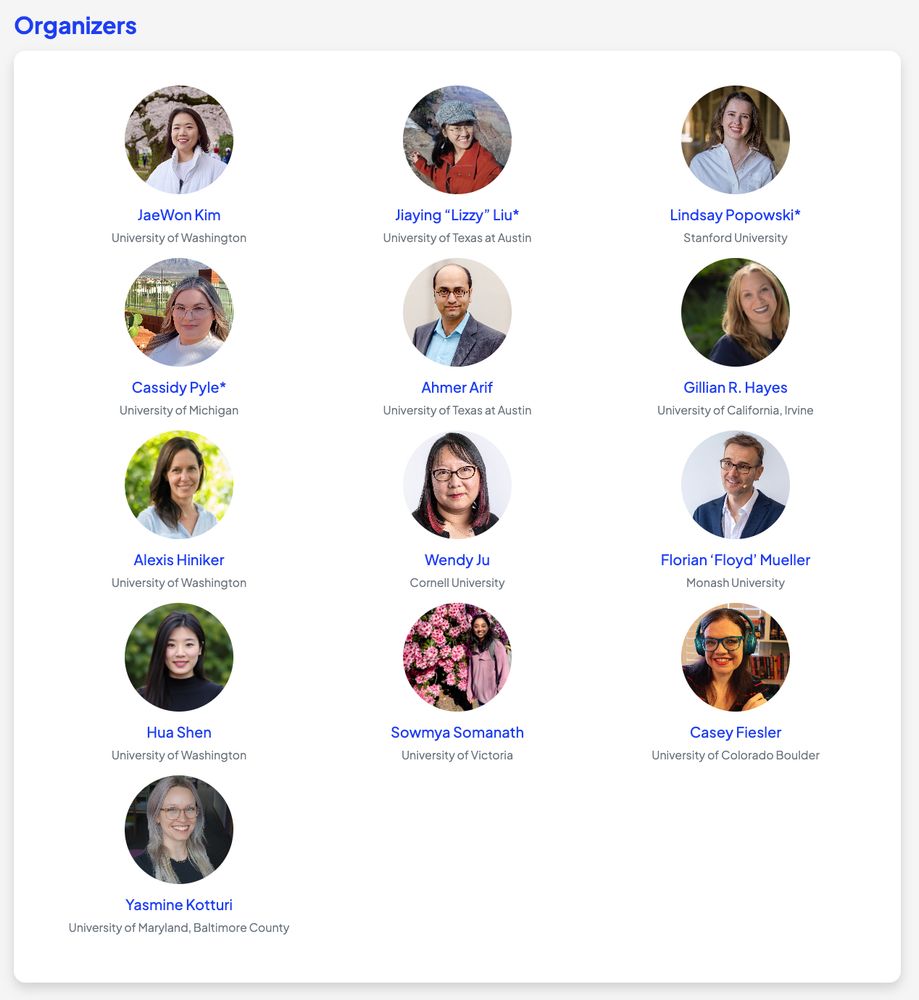

screenshot of the title and authors of the Science paper that are linked in the next post

Our new article in @science.org enables social media reranking outside of platforms' walled gardens.

We add an LLM-powered reranking of highly polarizing political content into N=1256 participants' feeds. Downranking cools tensions with the opposite party—but upranking inflames them.

01.12.2025 19:33 —

👍 46

🔁 13

💬 1

📌 2

The results: reducing exposure to divisive content improved feelings toward the other party by about 2 points on a 100-point scale. On the other hand, boosting that content cooled feelings by about 2.5 points. The researchers say the shift is equivalent to about three years of rising partisan animosity in America, created (or reversed) in just a week.

Remarkably, researchers found this result without adding or removing hyper-partisan material — it simply changed the order in which the posts appear. (For people who saw less polarizing content, for example, it was still in their feeds — just way lower, and thus less likely to be seen.)

“I think the general intuition is that there's a little bit of a failure of imagination going on with a lot of the platforms,” Michael S. Bernstein, a researcher at Stanford and co-author of the paper, told me in an interview. “We think they were sort of painted into a box where they felt like they had to optimize for certain engagement criteria, like that's the only thing they could do. It just felt like that didn't necessarily have to be the case anymore."

A new study finds you can reduce polarization on X with a simple change to the algorithm. I spoke with the researchers: www.platformer.news/stanford-pol...

02.12.2025 00:58 —

👍 137

🔁 33

💬 9

📌 6
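A minimal Python sketch of the kind of reordering described in the Platformer excerpt: flagged posts are moved, never added or removed. The `Post` class, the `polarizing` flag (assumed to come from some upstream classifier, such as the LLM the study mentions), and the `rerank` function are hypothetical illustrations, not the authors' code. The rule here simply moves every flagged post below (or above) all unflagged ones, which is cruder than "just way lower" and may not match the study's actual ranking.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    engagement_rank: int  # position in the original engagement-ranked feed (0 = top)
    polarizing: bool      # hypothetical label from an upstream classifier


def rerank(feed: list[Post], mode: str = "down") -> list[Post]:
    """Reorder a feed without adding or removing any posts.

    mode="down" moves flagged posts below all unflagged ones;
    mode="up" moves them above. Illustrative only.
    """
    if mode not in ("down", "up"):
        raise ValueError("mode must be 'down' or 'up'")
    # Start from the platform's original order.
    ordered = sorted(feed, key=lambda p: p.engagement_rank)
    flagged_last = mode == "down"
    # Stable sort on a boolean key: False sorts before True, and ties keep
    # their original relative order.
    return sorted(ordered, key=lambda p: p.polarizing if flagged_last else not p.polarizing)


feed = [
    Post("a", 0, True),
    Post("b", 1, False),
    Post("c", 2, True),
    Post("d", 3, False),
]
print([p.post_id for p in rerank(feed, "down")])  # ['b', 'd', 'a', 'c']
print([p.post_id for p in rerank(feed, "up")])    # ['a', 'c', 'b', 'd']
```

Because Python's `sorted` is stable, posts keep their original relative order within the flagged and unflagged groups, so the only thing that changes is where the flagged posts sit.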

No, but literally! (I also love the Parasol Protectorate, btw)

08.09.2025 23:44 —

👍 2

🔁 0

💬 1

📌 0

Screenshot of the workshop website. Title: Design for Hope: Cultivating Deliberate Hope in the Face of Complex Societal Challenges. Date: Saturday, October 18th, 2025, 9am-5pm (TBU). Overview: Societal challenges, such as those at the heart of CSCW research, are often complex, and responses to them frequently prioritize harm reduction and prevention as immediate goals. While these efforts are essential, they can unintentionally narrow our sense of what is possible, centering our attention on mitigating risks rather than expanding possibilities.

We're doing a follow-up (in-person) workshop @ CSCW! Positech 2: Design for Hope will explore how design methodologies can help us envision and achieve positive social futures AND how these processes cultivate hope in the face of otherwise overwhelming challenges. More: positech-cscw-2025.github.io

14.07.2025 23:01 —

👍 7

🔁 0

💬 1

📌 0

workshop website screenshots

headshots of workshop organizers

Following up on last year’s Positech workshop, thrilled to announce our workshop @acm-cscw.bsky.social 2025 on how design might help us cultivate deliberate hope in the face of complex societal challenges, whether in our everyday or broader aspirations 🌱

cfp 👉 positech-cscw-2025.github.io

14.07.2025 20:04 —

👍 9

🔁 3

💬 1

📌 2

a couple of hours before my keynote, I went through an intense negotiation with the organisers (for over an hour) where we went through my slides and had to remove anything that mentions 'Palestine' 'Israel' and replace 'genocide' with 'war crimes'

1/

08.07.2025 09:58 —

👍 1417

🔁 675

💬 38

📌 64

i think it is a sign of low character to post something controversial knowing it will get angry comments and then insult the people who responded to your ragebait

30.06.2025 12:03 —

👍 7347

🔁 631

💬 398

📌 83

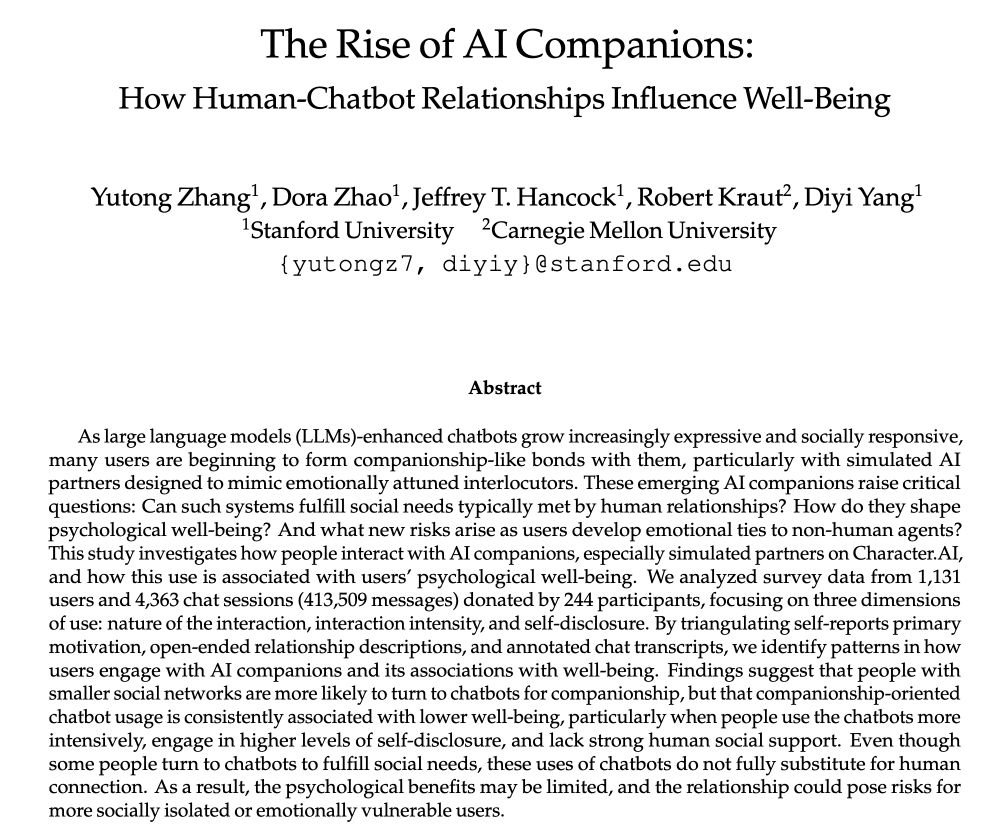

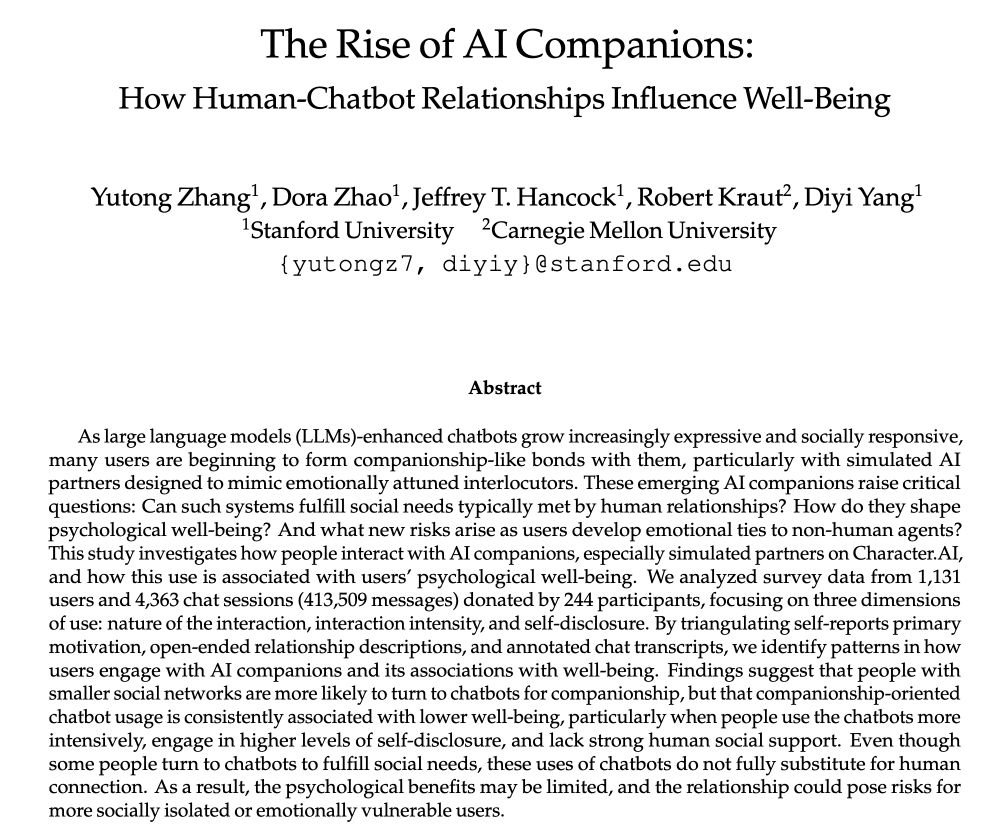

AI companions aren’t science fiction anymore 🤖💬❤️

Thousands are turning to AI chatbots for emotional connection – finding comfort, sharing secrets, and even falling in love. But as AI companionship grows, the line between real and artificial relationships blurs.

18.06.2025 16:27 —

👍 6

🔁 3

💬 1

📌 0

As infuriating as the conservative SCOTUS is, never forget that America’s genocide against its trans population is the child of “moderate” liberal outlets like the Atlantic and the New York Times, and especially Jesse Singal and Katie Herzog, who made healthy careers out of polishing bigotry.

18.06.2025 15:35 —

👍 2734

🔁 827

💬 24

📌 23

also an incredible researcher/mentor/collaborator/colleague but like, whatever. we know what's really important here.

02.06.2025 19:40 —

👍 2

🔁 0

💬 0

📌 0

what is stanford hci going to do without our funniest member next year?

02.06.2025 19:39 —

👍 8

🔁 0

💬 2

📌 1

I'm so excited to join the CS department at Johns Hopkins University (@jhu.edu) as an Assistant Professor! I'm looking for students interested in social computing, HCI, and AI—especially around designing better online systems in the age of LLMs. Come work with me! piccardi.me

02.06.2025 17:16 —

👍 19

🔁 2

💬 1

📌 2

They’re so lucky to land you!!!!!!!! Congrats congrats congrats!

30.05.2025 23:47 —

👍 1

🔁 0

💬 1

📌 0

I'm excited to announce that I’ll be joining the Computer Science department at Johns Hopkins as an Assistant Professor this Fall! I’ll be working on large language models, computational social science, and AI & society—and will be recruiting PhD students. Apply to work with me!

30.05.2025 15:56 —

👍 91

🔁 4

💬 14

📌 1

The Tainted Cup

Ancillary Justice

Jade City

The Ninth Rain

Godkiller

A Memory Called Empire

Blood Over Bright Haven

I’ve heard very good things about The Raven Scholar and The Incandescent but I can’t yet vouch for either

25.05.2025 05:58 —

👍 2

🔁 0

💬 1

📌 0