One to go! Thanks to everyone who agreed to review so far! 🫶

If you have the capacity for one emergency review on explainability of NLP models, please reach out via DMs/chat or by replying here. #ACL2026NLP

bsky.app/profile/nfel...

Hello #NLProc #ACL2026NLP community, I'm looking for an emergency reviewer for an ARR submission on LLM interpretability.

If you're available to complete a review before Feb 15, please reply or DM 🙏

Hello #NLProc #ACL2026NLP people. I am looking for **two emergency reviewers** in the Safety and Alignment in LLMs track for ACL/ARR.

Reviews are due Feb 15th. Please DM if interested and available.

Happy to offer drinks/food if you live in/pass by Lisbon ☀️

I'm looking for two emergency reviewers 🧑‍🚒👩‍🚒 for the ARR January Generalizability and Transfer track.

Please reach out if you have time & qualify for review or RT for visibility🙏🙏

Seems to be a common situation for ACs this round, but I'm also looking for two emergency reviewers for the January #ARR Evaluation and Resources track. I'd appreciate any help (reposts, encouragement, black magic...)

10.02.2026 11:15 — 👍 3 🔁 6 💬 0 📌 0

I am looking for 2 emergency reviewers for the ARR Ethics, Bias & Fairness track. Please DM me if you are available 🙏

10.02.2026 09:27 — 👍 6 🔁 6 💬 0 📌 0

Thanks a lot! Sent you a DM!

09.02.2026 17:48 — 👍 1 🔁 0 💬 0 📌 0

Looking for emergency reviewers for ARR Special Track "Explainability of NLP Models". Topics: Faithfulness, mechanistic interpretability, surveys and position papers. Deadline Feb 14 AoE. #ACL2026NLP

09.02.2026 17:33 — 👍 8 🔁 7 💬 1 📌 1

It was a real pleasure to visit the Health NLP Lab in Tübingen and present my research at BIFOLD and TU Berlin in collaboration with Charité and University of Augsburg among others. We had some exciting discussions. Thanks for having me!

21.01.2026 14:42 — 👍 10 🔁 0 💬 0 📌 0

Last week, Dr. Nils Feldhus @nfel.bsky.social, postdoctoral researcher at @tuberlin.bsky.social and @bifold.berlin, visited our lab and presented his research during our weekly lab meeting.

21.01.2026 14:00 — 👍 10 🔁 4 💬 1 📌 1

Sharing my favorite papers I read in 2025 from human-centric XAI, mechanistic interpretability, NLG evaluation, and related fields, covering conferences I've attended (ACL in Austria, EMNLP in China), but also journals, ML and HCI conferences:

nfelnlp.github.io/recommended/...

I’m at #NeurIPS in San Diego this week! Come see our poster on feature interpretability. Find @eberleoliver.bsky.social and me at:

🪧Poster Session 1 @ Exhibit Hall C,D,E #1015

Wed 3 Dec, 11 am - 2 pm

🪧Poster @ Mech Interp Workshop

Upper Level Room 30A-E

Sun 7 Dec, 8 am - 5 pm

*Urgently* looking for emergency reviewers for the ARR October Interpretability track 🙏🙏

ReSkies much appreciated

Heading to the EMNLP BlackboxNLP Workshop this Sunday? Don’t miss @nfel.bsky.social and @lkopf.bsky.social's poster on “Interpreting Language Models Through Concept Descriptions: A Survey”

aclanthology.org/2025.blackbo...

#EMNLP #BlackboxNLP #XAI #Interpretability

Nov 9, CODI-CRAC Workshop, 14:20-15:30 @ Hall C – Human and LLM-based Assessment of Teaching Acts in Expert-led Explanatory Dialogues (Anagnostopoulou et al.)

🗞️ aclanthology.org/2025.codi-1....

Nov 9, @blackboxnlp.bsky.social, 11:00-12:00 @ Hall C – Interpreting Language Models Through Concept Descriptions: A Survey (Feldhus & Kopf) @lkopf.bsky.social

🗞️ aclanthology.org/2025.blackbo...

bsky.app/profile/nfel...

I'm at #EMNLP2025 in Suzhou🇨🇳 to present these papers in the coming days:

Nov 7, Session 14, 12:30-13:30 @ Hall C – Multilingual Datasets for Custom Input Extraction and Explanation Requests Parsing in Conversational XAI Systems (Wang et al.) @qiaw99.bsky.social

🗞️ aclanthology.org/2025.finding...

🙏 Many thanks to the institutions that supported this research:

@tuberlin.bsky.social

@bifold.berlin

Looking forward to presenting this in 🇨🇳 Suzhou early November!

Concept description evaluation techniques categorized by metric, study, and the underlying quality being measured. Metrics are grouped into conceptual families: predictive simulation, input-based evaluation, output-based evaluation, semantic similarity, and human judgment.

Our synthesis reveals a growing demand for more rigorous, causal evaluation. By outlining the state of the art and identifying key challenges, this survey provides a roadmap for future research toward making models more transparent.

This survey has been accepted at @blackboxnlp.bsky.social at EMNLP

We consider concept descriptions in open-vocabulary settings, the evolving landscape of automated and human metrics for evaluating them, and the datasets that underpin this research.

This is a companion paper to our PRISM paper that was accepted at NeurIPS last week: bsky.app/profile/lkop...

Overview of descriptions for model components (neurons, attention heads) and model abstractions (SAE features, circuits).

🔍 Are you curious about uncovering the underlying mechanisms and identifying the roles of model components (neurons, …) and abstractions (SAEs, …)?

We provide the first survey of concept description generation and evaluation methods.

Joint effort w/ @lkopf.bsky.social

📄 arxiv.org/abs/2510.01048

Happy to share that our PRISM paper has been accepted at #NeurIPS2025 🎉

In this work, we introduce a multi-concept feature description framework that can identify and score polysemantic features.

📄 Paper: arxiv.org/abs/2506.15538

#NeurIPS #MechInterp #XAI

The submission deadline of the inaugural Young Researchers workshop at INLG 2025 has been extended by 5 days.

We're excited to receive your two-page position papers showcasing your NLG-related research until August 31, 2025! @siggen.bsky.social

ynlg-workshop.github.io

bsky.app/profile/nfel...

Excited to announce the first-ever Workshop for Young Researchers in Natural Language Generation (YNLG), supported by @siggen.bsky.social, taking place on October 29, 2025 in Hanoi, Vietnam, co-located with INLG 2025.

Call for Submissions is out now!

ynlg-workshop.github.io

We welcome position papers on ongoing research in Natural Language Generation.

Let’s build a vibrant, collaborative community of the next generation of NLG researchers!

Stoked to organize it together with @patuchen.bsky.social, Adarsa, Rudali, Michela, and Alyssa! 🤩

📍 Venue: Vietnam Institute for Advanced Study in Mathematics, Hanoi, Vietnam — during INLG 2025.

💡 If you'll be in the region for INLG or EMNLP 2025, this is a great opportunity to connect and share your work!

📅 Submission deadline: August 26, 2025

YNLG aims to foster a welcoming community of early-career researchers in NLG. This is your chance to:

📌 Present your work-in-progress research

📌 Receive constructive feedback from senior researchers and peers

📌 Discuss current trends and future directions in NLG

Thoroughly enjoying the range of topics at the first #ACL2025NLP poster session!

Our FitCF poster presentation on counterfactual example generation at #ACL2025 has been moved to Tuesday, July 29, at 16:00-17:30.

bsky.app/profile/nfel...

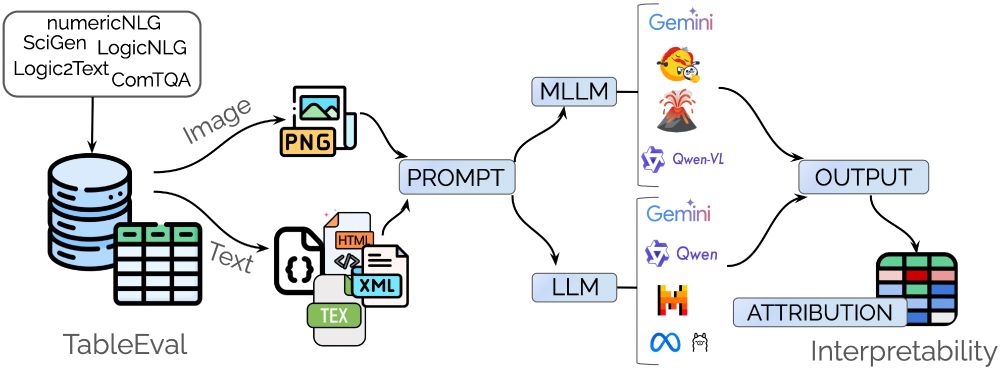

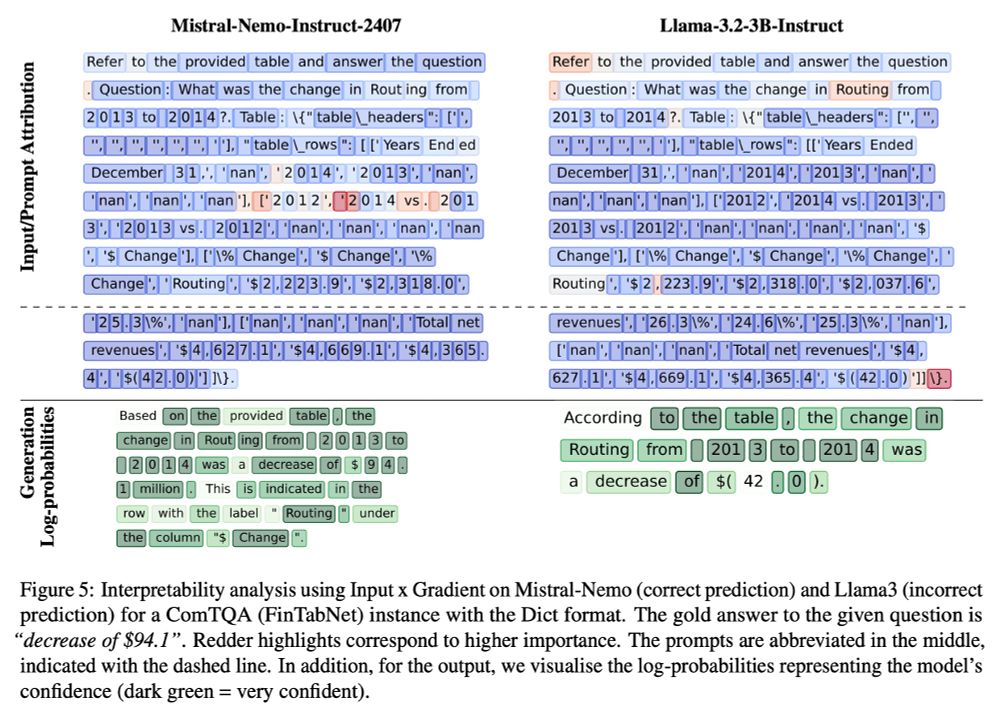

We introduce the TableEval benchmark and investigate the effectiveness and robustness of text-based and multimodal LLMs on table understanding through a cross-domain & cross-modality evaluation.

Joint work by DFKI SLT incl. Fabio Barth, Raia Abu Ahmad, @malteos.bsky.social @pjox.bsky.social