So excited that our paper got accepted to ICLR!! Check it out 👇

27.01.2026 18:33 — 👍 10 🔁 1 💬 0 📌 0

Super excited about our new paper!! Check this out 👇🧵

26.11.2025 19:55 — 👍 9 🔁 0 💬 0 📌 0

ICoN Center alumna @nogazs.bsky.social offers a fresh take on how the brain compresses visual information to guide intelligent behavior in @thetransmitter.bsky.social article: “The visual system’s lingering mystery: Connecting neural activity and perception”.

www.thetransmitter.org/the-big-pict...

Figuring out how the brain uses information from visual neurons may require new tools, writes @neurograce.bsky.social. Hear from 10 experts in the field.

#neuroskyence

www.thetransmitter.org/the-big-pict...

![Comic. [long message with unreadable text that includes lots of punctuation marks like semi colons and em dashes as well as citations.] Highlighted line at the bottom zoomed in reads: Not ChatGPT output—I’m just like this. [caption] I’ve had to start adding this disclaimer to my messages.](https://cdn.bsky.app/img/feed_thumbnail/plain/did:plc:cz73r7iyiqn26upot4jtjdhk/bafkreifbqjlfnleuu4jrpvc7q6e43ckn2lqvuv7vdgtuf7e67dw5acy4di@jpeg)

Disclaimer

xkcd.com/3126/

If you've reached so far and find this research exciting: my lab is recruiting a postdoc and PhD students!

➡️Postdoc applications: apply.interfolio.com/170656

➡️PhD applications: as.nyu.edu/psychology/g...

10/10

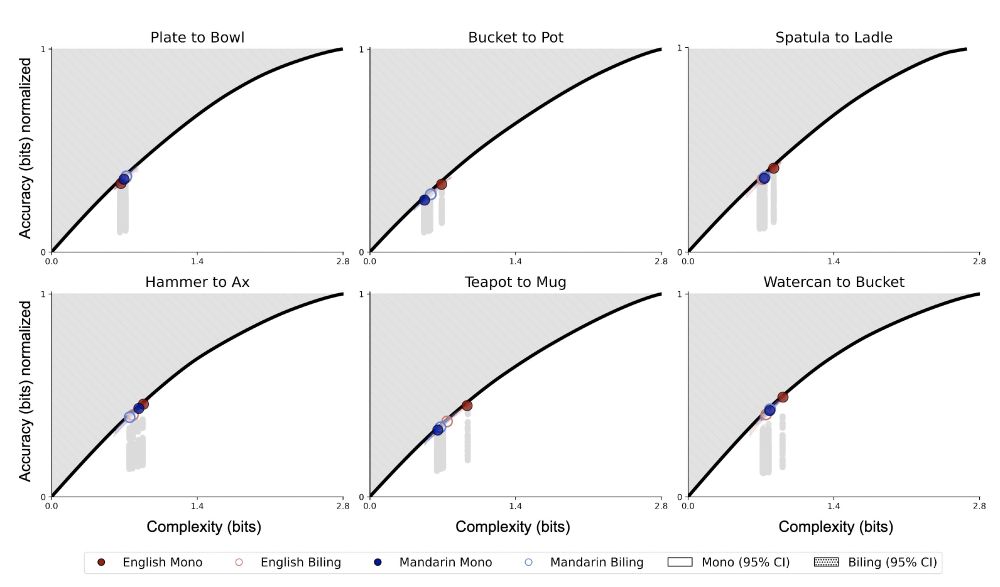

Taken together, these studies further support the hypothesis that efficient compression is a fundamental principle underlying language, cognition, and intelligence more generally!

9/n

This further supports the generality of the IB principle and its applicability across the lexicon, and shows that action abstractions in language may be highly efficient

escholarship.org/uc/item/48w0...

w/ Thomas Langlois @rplevy.bsky.social @nidhise.bsky.social

8/n

We test this by considering a cross-linguistic dataset of locomotion naming 🏃‍♀️💃🚶

We find that even in this challenging dynamical multi-modal domain, systems of semantic categories across languages are significantly efficient

7/n

3️⃣ Our evidence supporting the IB framework for semantics has so far been based on static inputs like adjectives (e.g., color), nouns (e.g., objects), or function words (e.g., pronouns). Can this theory also apply to action verbs, referring to dynamic multi-modal inputs?

6/n

This suggests that bilinguals must satisfy additional constraints while operating under the same pressure for efficiency as monolinguals

escholarship.org/uc/item/4128...

w/ Maya Taliaferro, Nathaniel Imel, Esti Blanco-Elorrieta

5/n

2️⃣ Bilinguals employ two different category systems, but in practice they converge on systems that differ from monolinguals. Is this a sign bilinguals depart from efficiency to satisfy other (e.g., learnability) constraints? No, bilinguals still maintain optimality!

4/n

individual learners seem to have an inductive bias toward maintaining optimally compressed representations, even without an explicit need to communicate

escholarship.org/uc/item/63d7...

w/ Nathaniel Imel, Jennifer Culbertson, @simonkirby.bsky.social

3/n

1️⃣ We've previously shown converging evidence that semantic categories across languages achieve near-optimal compression via the Information-Bottleneck principle. But how do languages become near-optimal?

By revisiting human iterated language learning data, we find that

2/n

If you missed us at #cogsci2025, my lab presented 3 new studies showing how efficient (lossy) compression shapes individual learners, bilinguals, and action abstractions in language, further demonstrating the extraordinary applicability of this principle to human cognition! 🧵

1/n

w/ @alisongopnik.bsky.social @norijacoby.bsky.social

22.07.2025 19:18 — 👍 1 🔁 0 💬 0 📌 0

Super excited to have the #InfoCog workshop this year at #CogSci2025! Join us in SF for an exciting lineup of speakers and panelists, and check out the workshop's website for more info and the detailed schedule

sites.google.com/view/infocog...

Promotional image for a #CogSci2025 workshop titled “Information Theory and Cognitive Science.” Organized and presented by Noga Zaslavsky, Thomas A Langlois, Nathaniel Imel, Clara Meister, Eleonora Gualdoni, and Daniel Polani. Scheduled for July 30 at 8:30 AM in room Pacifica C. The top of the image features the conference theme, “Theories of the Past / Theories of the Future,” and the dates: July 30–August 2 in San Francisco.

#Workshop at #CogSci2025

Information Theory and Cognitive Science

🗓️ Wednesday, July 30

📍 Pacifica C - 8:30-10:00

🗣️ Noga Zaslavsky, Thomas A Langlois, Nathaniel Imel, Clara Meister, Eleonora Gualdoni, and Daniel Polani

🧑‍💻 underline.io/events/489/s...

📣 I'm looking for a postdoc to join my lab at NYU! Come work with me on a principled, theory-driven approach to studying language, learning, and reasoning, in humans and AI agents.

Apply here: apply.interfolio.com/170656

And come chat with me at #CogSci2025 if interested!

Nathaniel Imel, Jennifer Culbertson, @simonkirby.bsky.social & @nogazs.bsky.social:

Iterated language learning is shaped by a drive for optimizing lossy compression (Talks 37: Language and Computation 3, 1 August @ 16:22; blurb below) (2/)

This month I'm celebrating a decade (!!) since my first paper was published, which now has over 2,000 citations 🥹

"Deep learning and the information bottleneck principle" with the late, great Tali Tishby

ieeexplore.ieee.org/document/713...

🔆 I'm hiring! 🔆

There are two open positions:

1. Summer research position (best for master's or graduate student); focus on computational social cognition.

2. Postdoc (currently interviewing!); focus on computational social cognition and AI safety.

sites.google.com/corp/site/sy...

Because we must build good things while we scream about the bad, I have started a "Data for Good" team @data-for-good-team.bsky.social that partners with organizations needing short-term data science help. We have three projects ongoing & will add more as our capacity grows.

data-for-good-team.org

Excited to share our new paper "Towards Human-Like Emergent Communication via Utility, Informativeness, and Complexity"

direct.mit.edu/opmi/article...

@rplevy.bsky.social

And looking forward to speaking about this line of work tomorrow at @nyudatascience.bsky.social!

Congratulations to Rich Sutton and Andrew Barto on receiving the Turing Award in recognition of their significant contributions to ML. I also stand with them: Releasing models to the public without the right technical and societal safeguards is irresponsible.

www.ft.com/content/d8f8...

New preprint! In arxiv.org/abs/2502.20349 “Naturalistic Computational Cognitive Science: Towards generalizable models and theories that capture the full range of natural behavior” we synthesize work from AI & cognitive science into a perspective on seeking a generalizable understanding of cognition. Thread:

28.02.2025 17:14 — 👍 78 🔁 20 💬 1 📌 0

hi @xuanalogue.bsky.social, thanks so much for creating this starter pack! I'd love to be added too 😀

14.01.2025 05:41 — 👍 1 🔁 0 💬 1 📌 0

Why do diverse ANNs resemble brain representations? Check out our new paper with Colton Casto, @nogazs.bsky.social, Colin Conwell, Mark Richardson, & @evfedorenko.bsky.social on “Universality of representation in biological and artificial neural networks.” 🧠🤖

tinyurl.com/yckndmjt