w/ James Cohan, @jacobeisenstein.bsky.social, and Kristina Toutanova

Paper link: arxiv.org/abs/2509.22445

w/ James Cohan, @jacobeisenstein.bsky.social, and Kristina Toutanova

Paper link: arxiv.org/abs/2509.22445

We hope this work adds some conceptual clarity around how Kolmogorov complexity relates to neural networks, and provides a path towards identifying new complexity measures that enable greater compression and generalization.

01.10.2025 14:11 — 👍 1 🔁 0 💬 1 📌 0

We prove that asymptotically optimal objectives exist for Transformers, building on a new demonstration of their computational universality. We also highlight potential challenges related to effectively optimizing such objectives.

01.10.2025 14:11 — 👍 0 🔁 0 💬 1 📌 0To address this question, we define the notion of asymptotically optimal description length objectives. We establish that a minimizer of such an objective achieves optimal compression, for any dataset, up to an additive constant, in the limit as model resource bounds increase.

01.10.2025 14:11 — 👍 0 🔁 0 💬 1 📌 0The Kolmogorov complexity of an object is the length of the shortest program that prints that object. Combining Kolmogorov complexity with the MDL principle provides an elegant foundation for formalizing Occam’s razor. But how can these ideas be applied to neural networks?

01.10.2025 14:11 — 👍 0 🔁 0 💬 1 📌 0

Bridging Kolmogorov Complexity and Deep Learning: Asymptotically Optimal Description Length Objectives for Transformers

Excited to share a new paper that aims to narrow the conceptual gap between the idealized notion of Kolmogorov complexity and practical complexity measures for neural networks.

01.10.2025 14:11 — 👍 9 🔁 5 💬 1 📌 0

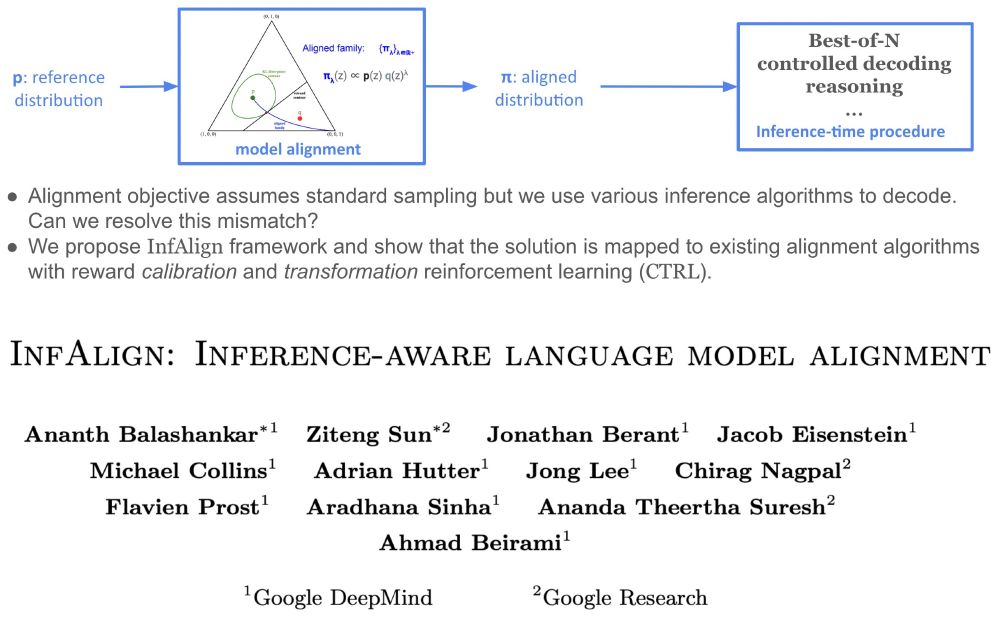

InfAlign: Inference-aware language model alignment Ananth Balashankar, Ziteng Sun, Jonathan Berant, Jacob Eisenstein, Michael Collins, Adrian Hutter, Jong Lee, Chirag Nagpal, Flavien Prost, Aradhana Sinha, Ananda Theertha Suresh, Ahmad Beirami

Excited to share 𝐈𝐧𝐟𝐀𝐥𝐢𝐠𝐧!

Alignment optimization objective implicitly assumes 𝘴𝘢𝘮𝘱𝘭𝘪𝘯𝘨 from the resulting aligned model. But we are increasingly using different and sometimes sophisticated inference-time compute algorithms.

How to resolve this discrepancy?🧵

I'll be at NeurIPS this week. Please reach out if you would like to chat!

09.12.2024 21:51 — 👍 6 🔁 0 💬 1 📌 0New starter pack! go.bsky.app/GZ4hZzu

28.10.2024 09:43 — 👍 42 🔁 17 💬 6 📌 5

Two BioML starter packs now:

Pack 1: go.bsky.app/2VWBcCd

Pack 2: go.bsky.app/Bw84Hmc

DM if you want to be included (or nominate people who should be!)

Hi Marc, thanks for putting this together, mind adding me?

19.11.2024 18:54 — 👍 1 🔁 0 💬 0 📌 0

Wanted to share that Varun Godbole recently released a prompting playbook. The title says prompt tuning, but this is text prompts, not soft prompts.

github.com/varungodbole...

New here? Interested in AI/ML? Check out these great starter packs!

AI: go.bsky.app/SipA7it

RL: go.bsky.app/3WPHcHg

Women in AI: go.bsky.app/LaGDpqg

NLP: go.bsky.app/SngwGeS

AI and news: go.bsky.app/5sFqVNS

You can also search all starter packs here: blueskydirectory.com/starter-pack...

Getting set up on Bluesky today!

11.11.2024 00:50 — 👍 5 🔁 0 💬 0 📌 0

I’m pretty excited about this one!

ALTA is A Language for Transformer Analysis.

Because ALTA programs can be compiled to transformer weights, it provides constructive proofs of transformer expressivity. It also offers new analytic tools for *learnability*.

arxiv.org/abs/2410.18077