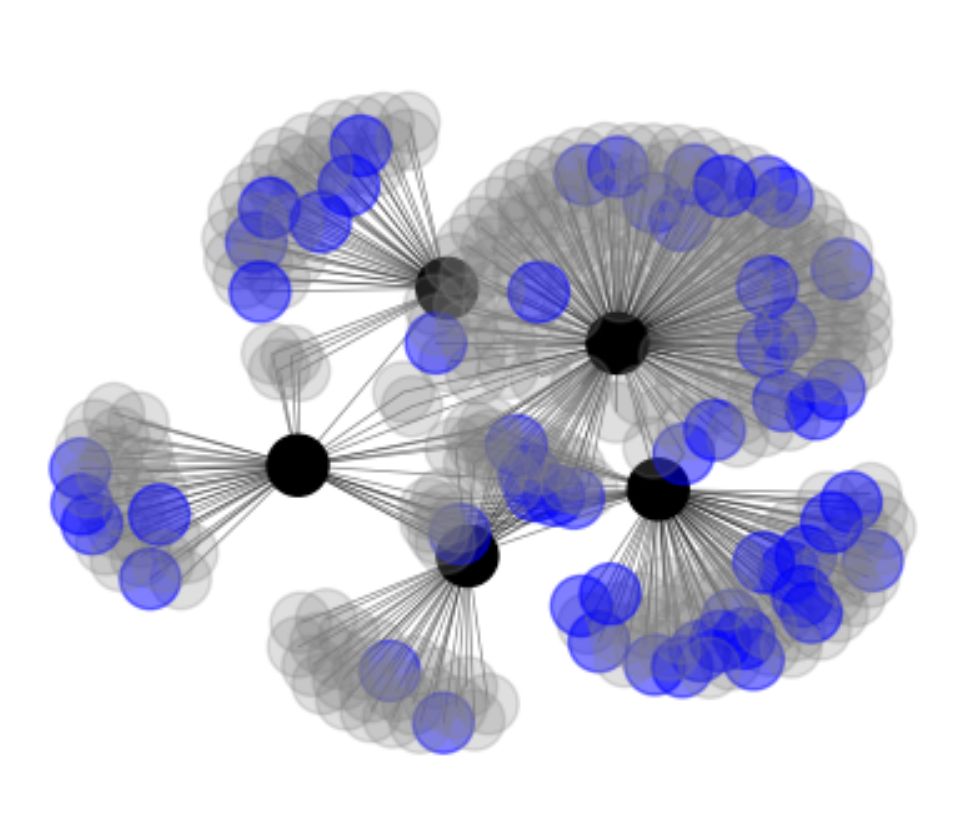

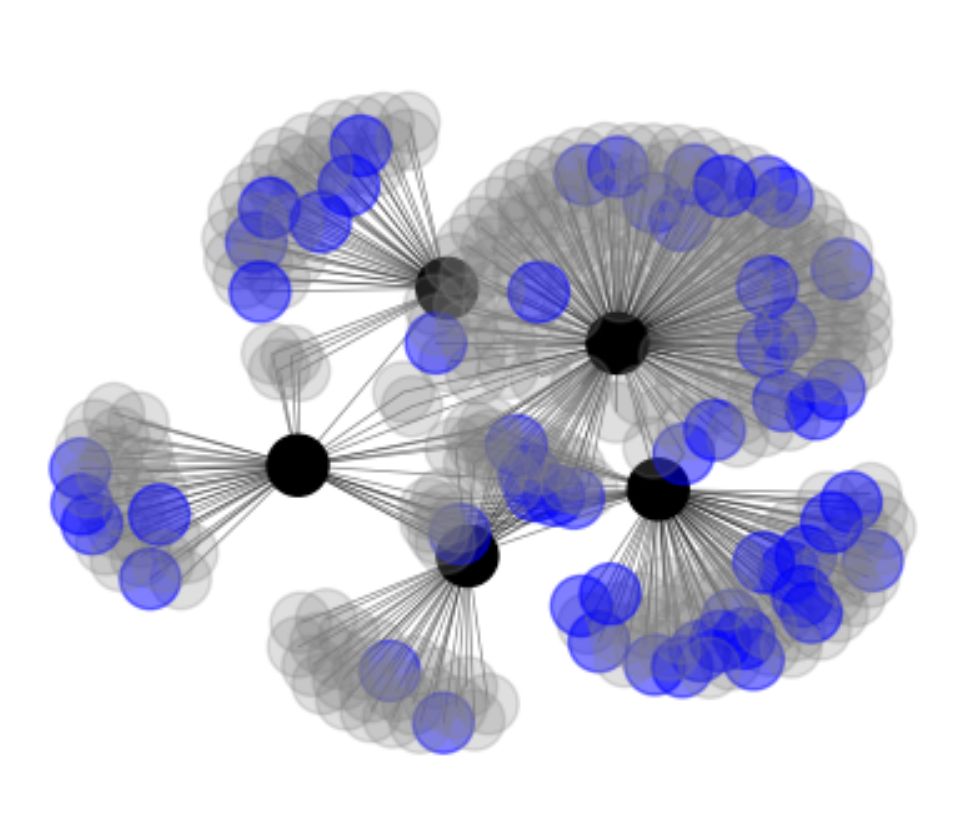

The transformer was invented at Google. RLHF was not invented in industry labs, but came to prominence at OpenAI and DeepMind. I took 5 of the most influential papers (black dots) and visualized their references. Blue dots are papers that acknowledge federal funding (DARPA, NSF).

12.04.2025 02:35 —

👍 109

🔁 24

💬 2

📌 0

New Preprint! Interested in learning about how working memory is subserved by both compositional and generative mechanisms? Read on!

14.04.2025 02:24 —

👍 31

🔁 8

💬 1

📌 0

To be clear, I believe the interesting areas of modularity that warrant investigation are world modeling, reasoning, planning, and memory, generally. Bring back fuzzy memory modules! [6]

[6] arxiv.org/abs/1410.5401

14.04.2025 05:12 —

👍 0

🔁 0

💬 0

📌 0

Ugh, sorry for the gross LinkedIn links on that cross post. I'll do better next time. 🫠

14.04.2025 05:05 —

👍 0

🔁 0

💬 0

📌 0

After a short era in which people questioned the value of academia in ML, its value is more obvious than ever. Big labs stopped publishing the minute commercial incentives showed up and are relentlessly focused on a singular vision of scaling. Academia is a meaningful complement, bringing...

1/2

14.04.2025 01:04 —

👍 214

🔁 41

💬 2

📌 2

Full disclosure, I'm a bit biased as I've developed a similar proprietary set of protocols recently. Kudos to the communities for recognizing the need for standardization for further scaling!

[1] lnkd.in/eUqVV5SQ

[2] lnkd.in/gf6e6XH6

[3] lnkd.in/gx4EbSFR

[4] lnkd.in/g_42revZ

14.04.2025 05:00 —

👍 0

🔁 0

💬 2

📌 0

My intuition is that standardization of message passing protocols, like MCP[3] and A2A[4], will further enable both research and engineering for these kinds of approaches.

14.04.2025 05:00 —

👍 0

🔁 0

💬 1

📌 0

One of the reasons I'm happy to see a disaggregation effect in the departure from "one massive model to rule them all" is the modularity and, frankly, the accessibility it brings to those without much compute available. I hope to see more Socratic-type [2] approaches in the coming years!

14.04.2025 05:00 —

👍 0

🔁 0

💬 1

📌 0

The AI research scene is dealing with a second-order hardware lottery[1] effect right now (GPUs being the first): many of the papers being published build on pretrained models that were trained on large research clusters available to only a few labs.

14.04.2025 05:00 —

👍 0

🔁 0

💬 1

📌 0

“Philosophy would render us entirely skeptics, were not nature too strong for it.”

— David Hume, An Enquiry Concerning Human Understanding

#philosophy #philsky

21.03.2025 03:06 —

👍 37

🔁 4

💬 0

📌 1

How it started / how it's going.....

18.03.2025 02:44 —

👍 145

🔁 24

💬 9

📌 7

we released olmo 32b today! ☺️

🐟our largest & best fully open model to-date

🐠right up there w similar size weights-only models from big companies on popular benchmarks

🐡but we used way less compute & all our data, ckpts, code, recipe are free & open

made a nice plot of our post-trained results!✌️

13.03.2025 20:42 —

👍 40

🔁 7

💬 2

📌 1

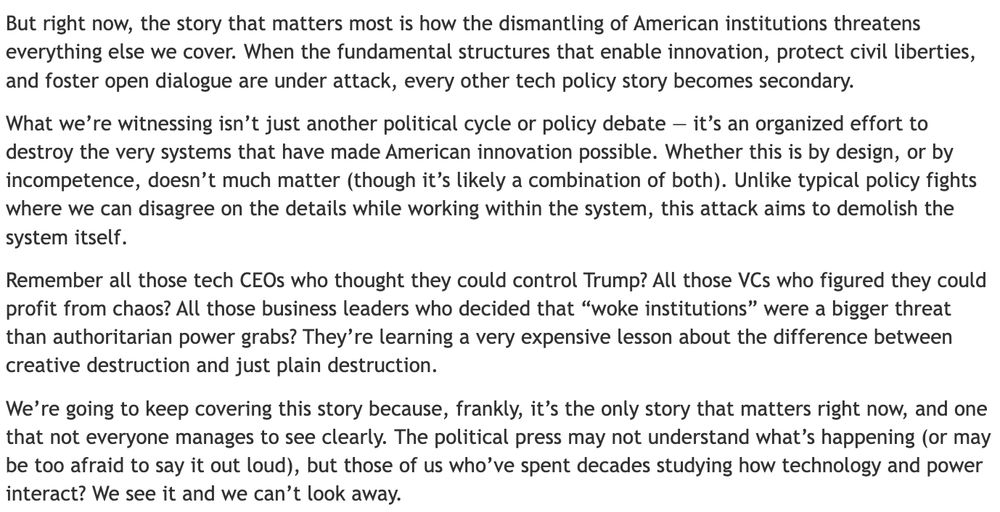

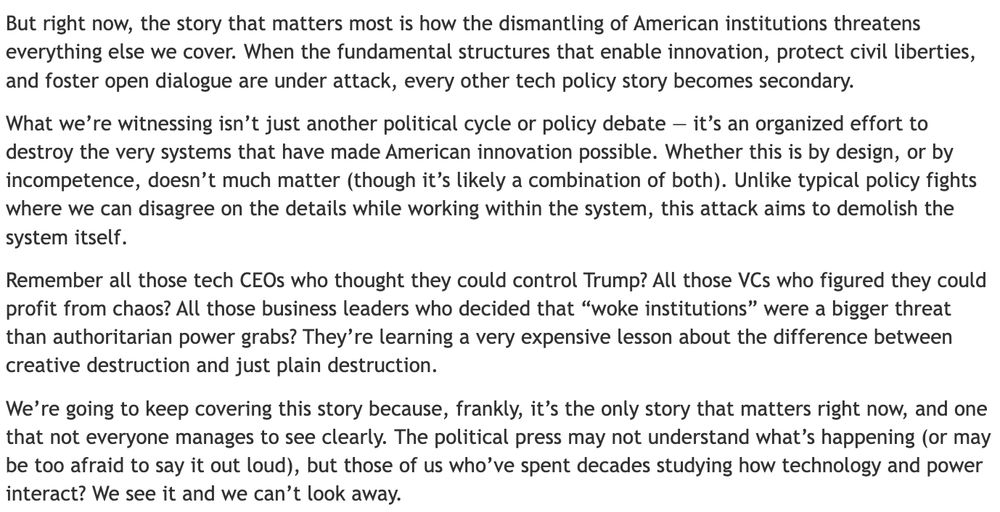

Some of his readers have asked Mike Masnick @mmasnick.bsky.social why his technology news site, Techdirt, has been covering politics so intensely lately. www.techdirt.com/2025/03/04/w...

I cannot recommend Mike's reply enough. It's exactly what readers need to hear, what journalists need to do.

07.03.2025 00:09 —

👍 4564

🔁 1820

💬 86

📌 114

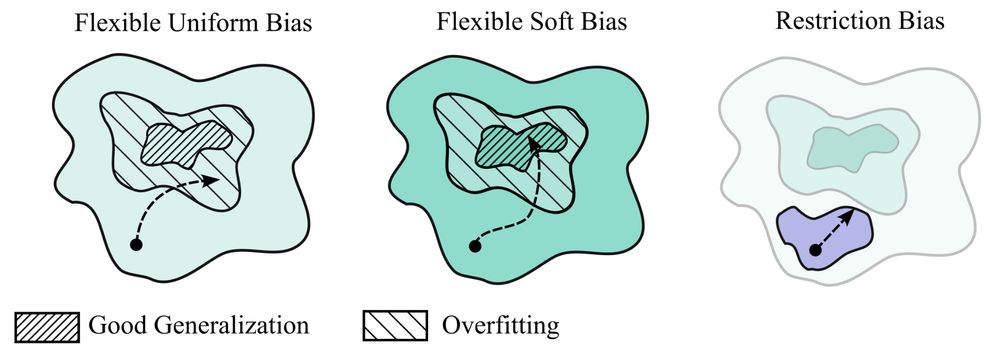

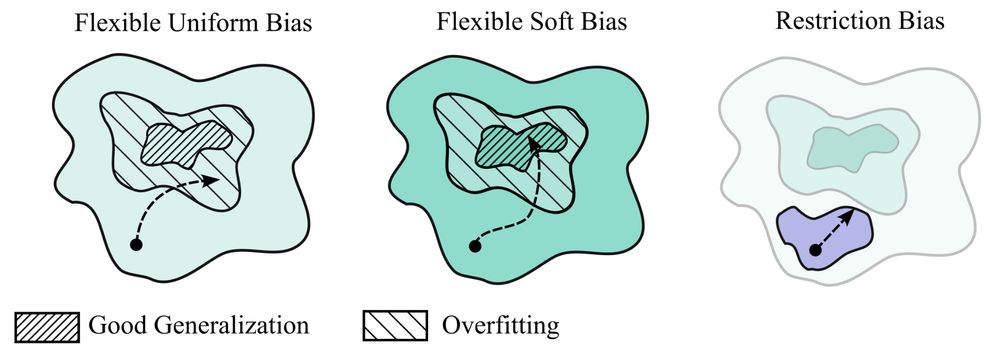

My new paper "Deep Learning is Not So Mysterious or Different": arxiv.org/abs/2503.02113. Generalization behaviours in deep learning can be intuitively understood through a notion of soft inductive biases, and formally characterized with countable hypothesis bounds! 1/12

05.03.2025 15:37 —

👍 209

🔁 49

💬 6

📌 9

Awesome LLM Post-training

This repository is a curated collection of the most influential papers, code implementations, benchmarks, and resources related to Large Language Models (LLMs) Post-Training Methodologies.

github.com/mbzuai-oryx/...

04.03.2025 00:03 —

👍 44

🔁 10

💬 1

📌 0

Starlink embedded in the FAA.

Grok used by the OPM.

Tesla contracts from the DoD.

SpaceX taking over NASA tasks.

We are "NeuraLink requirements for Social Security payments" away from a complete governmental parasitic symbiosis.

25.02.2025 15:43 —

👍 3

🔁 5

💬 1

📌 0

GitHub - ARBORproject/arborproject.github.io

Today we're launching a multi-lab open collaboration, the ARBOR project, to accelerate AI interpretability research for reasoning models. Please join us!

github.com/ARBORproject...

(ARBOR = Analysis of Reasoning Behavior through Open Research)

20.02.2025 19:55 —

👍 44

🔁 9

💬 1

📌 0

JUST IN: NASA says there's now a 3.1% chance an asteroid will hit Earth in 2032, up from 2.6% yesterday.

This is the highest risk assessment an asteroid has ever received, surpassing 2.7% in 2004

18.02.2025 19:24 —

👍 799

🔁 165

💬 168

📌 683

Forget “tapestry” or “delve”; these are the actual unique giveaway words for each model, relative to each other. arxiv.org/pdf/2502.12150

19.02.2025 03:04 —

👍 102

🔁 17

💬 6

📌 9

perplexity-ai/r1-1776 · Hugging Face

An uncensored version of R1 is released 🔥

“R1 1776 is a DeepSeek-R1 reasoning model that has been post-trained by Perplexity AI to remove CCP censorship. The model provides unbiased, accurate, and factual information while maintaining high reasoning capabilities.”

huggingface.co/perplexity-a...

19.02.2025 03:22 —

👍 58

🔁 11

💬 2

📌 7

Why reasoning models will generalize

People underestimate the long-term potential of “reasoning.”

DeepSeek R1 is just the tip of the iceberg of rapid progress.

28.01.2025 21:04 —

👍 51

🔁 8

💬 5

📌 1

Current me: It's only one more project/talk/paper/review...

Future me: Don't do this, I beg you.

Current me: Super interesting, could find a way to fit it in...

Future me: C'mon, remember the rule, just say no!

Current me: & loads of time before the deadline...

Future me: Wait, can you even hear me?

17.01.2025 16:01 —

👍 58

🔁 10

💬 1

📌 3

The state of post-training in 2025: a tutorial on modern post-training

A re-record of my NeurIPS tutorial on language modeling (plus some added content on the high-level state of things).

Blog + extra context: https://buff.ly/424VvLm

YouTube: https://buff.ly/40808l5

Slides: https://buff.ly/404jGa9

08.01.2025 15:38 —

👍 80

🔁 17

💬 4

📌 0

In Solidarity with Ann Telnaes. ✊

“Democracy Dies in Darkness.”

anntelnaes.substack.com/p/why-im-qui...

@anntelnaes.bsky.social

05.01.2025 16:36 —

👍 123

🔁 32

💬 0

📌 2