@anaezquerro.bsky.social presenting our work on graph parsing as tagging at #EMNLP2025!

05.11.2025 08:57 — 👍 2 🔁 0 💬 0 📌 0

🌱 1st Symposium on Green AI 🌱

📅 November 25 and 26 | 📍 CITIC, A Coruña

💻 Hybrid format | Free registration: forms.cloud.microsoft/r/KW0MrUES0L...

👉 More info: catedrainditexalgoritmosverdes.com/es/simposio-...

Today, @anaezquerro.bsky.social will be presenting our paper 'Hierarchical Bracketing Encodings for Dependency Parsing as Tagging' at #ACL2025NLP 🇦🇹

Room 1.86 (Session from 14:00 to 15:30).

Paper: aclanthology.org/2025.acl-lon...

Happy to share that our paper 'Document-level event extraction from Italian crime news using minimal data' has been published: www.sciencedirect.com/science/arti... #nlp #nlproc

21.04.2025 14:36 — 👍 1 🔁 0 💬 0 📌 0

Exactly, that is the story of many Galicians. It takes real merit to learn the language that way, and I didn't know you could watch TVG from there. If you ever visit Caión, it would be great to take the opportunity and organize an invited talk.

24.11.2024 16:20 — 👍 1 🔁 0 💬 1 📌 0

Being from Vigo myself, I'm curious to hear about the Celta part 🩵

24.11.2024 10:27 — 👍 1 🔁 0 💬 1 📌 0

Could you add me, too? aclanthology.org/people/d/dav... Thanks!

19.11.2024 08:58 — 👍 1 🔁 0 💬 0 📌 0

All the ACL chapters are here now: @aaclmeeting.bsky.social @emnlpmeeting.bsky.social @eaclmeeting.bsky.social @naaclmeeting.bsky.social #NLProc

19.11.2024 03:48 — 👍 107 🔁 37 💬 1 📌 3

Hello, Computational linguistics/NLP world on Bluesky! We're creating on Bluesky the same accounts we have on other social media platforms! #NLProc

14.11.2024 00:17 — 👍 132 🔁 31 💬 4 📌 5

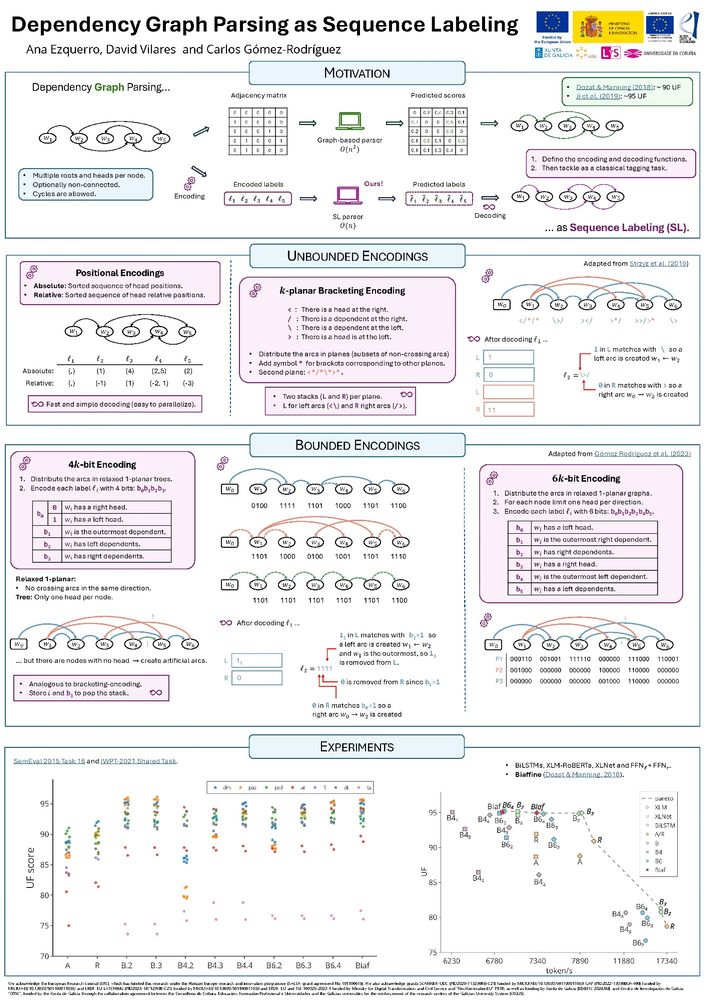

Today at 14:00, @anaezquerro.bsky.social and @carlosg.bsky.social will be presenting our paper "Dependency Graph Parsing as Sequence Labeling" at #EMNLP2024! Join them at the poster session in Riverfront Hall.

Paper: aclanthology.org/2024.emnlp-m...

Our paper, "Dependency Graph Parsing as Sequence Labeling" (with @anaezquerro.bsky.social, myself, and @carlosg.bsky.social), will be presented this Tuesday at #EMNLP2024 in Miami!

The paper is already in the ACL Anthology: aclanthology.org/2024.emnlp-m...

Our paper "From Partial to Strictly Incremental Constituent Parsing" (w/ Ana Ezquerro and @carlosg.bsky.social) is now available on arXiv and will be presented at #EACL2024. We explore the capacities of various incremental encoders and decoders for this classical task: arxiv.org/abs/2402.02782

07.02.2024 08:03 — 👍 0 🔁 0 💬 0 📌 0

We just submitted to arXiv our paper (w/ @carlosg.bsky.social and Diego Roca): "4 and 7-bit Labeling for Projective and Non-Projective Dependency Trees", which we will be presenting at #EMNLP2023, in Singapore!

arxiv.org/abs/2310.14319

New paper, out in Open Mind

We find that in reading, the brain uses 2 strategies to control the eyes

Reading times (fixation durations) depend on linguistic processing, but skipping (fix. location) mostly depends on low-level oculomotor processing

#CogSci 🧠🤖 🧠📈

direct.mit.edu/opmi/article...

Happy to have this paper accepted at IJCNLP-AACL 2023: "On the Challenges of Fully Incremental Neural Dependency Parsing", led by Ana Ezquerro. The preprint is now available here: arxiv.org/abs/2309.16254

29.09.2023 07:11 — 👍 1 🔁 0 💬 0 📌 0

Happy to have this paper accepted at IJCNLP-AACL 2023, led by Alberto Muñoz-Ortiz: "Assessment of Pre-Trained Models Across Languages and Grammars" arxiv.org/abs/2309.11165

25.09.2023 14:11 — 👍 1 🔁 0 💬 0 📌 0

Have you ever written a paper and looked at it and realized it should have been three papers and possibly even more? I felt that with our latest work about phase transitions in masked language model training, led by Angie Chen. We found so many cool things that deserve so much followup work!

15.09.2023 05:00 — 👍 16 🔁 6 💬 0 📌 1

Found this interesting Q&A on human language and its evolution: bmcbiol.biomedcentral.com/articles/10....

14.09.2023 15:19 — 👍 1 🔁 1 💬 0 📌 0

Hello, Bluesky!

13.09.2023 07:59 — 👍 6 🔁 0 💬 0 📌 0