#EMNLP2025 submission deadline in 3 days!

16.05.2025 15:05 — 👍 0 🔁 0 💬 0 📌 0

Our paper "Misattribution Matters: Quantifying Unfairness in Authorship Attribution" got accepted to #ACL2025!

@niranjanb.bsky.social @ajayp95.bsky.social

Arxiv link coming hopefully soon!

I'll do the advisory advertising: @ykl7.bsky.social is a fantastic researcher and is passionate about being in academia. He has this amazing ability to simply get things done! Happy to say more in a letter or over a chat but if you are going to @naaclmeeting.bsky.social (#NAACL2025) ping him.

29.04.2025 18:40 — 👍 3 🔁 1 💬 1 📌 0

I'm headed to #NAACL2025 ✈️ in Albuquerque 🏜️ Looking for postdoc positions in the US, so if you're hiring (or know someone who is), let's chat at the conference! Also organizing #WNU2025, so make sure to swing by the workshop on May 4.

29.04.2025 11:13 — 👍 4 🔁 0 💬 0 📌 1

Heads up for anyone who missed this: ARR has moved to 5 cycles per year and the EMNLP deadline will be in May.

15.02.2025 15:17 — 👍 24 🔁 4 💬 0 📌 1

The first CfP for #EMNLP2025 is live now. Submissions go through the May ARR cycle!

Excited to be part of organizing the conference as publicity chair w/ @amuuueller.bsky.social @dallascard.bsky.social so watch out for more updates esp by following the official conf account @emnlpmeeting.bsky.social

🚨 The submission deadline (Feb 17) for the Workshop on Narrative Understanding at #NAACL2025 is approaching! Excited to see diverse work studying different aspects of narratives 🤩

Submit here: www.softconf.com/naacl2025/WN... #NLProc #wnu2025

📢 The 7th Workshop on Narrative Understanding (WNU) will happen with #NAACL2025 and is open for submissions.

🌐: tinyurl.com/wnu25

Direct Submission: February 17

Pre-Reviewed (ARR) papers: March 10

Excited to organize this again and hope to see you in Albuquerque 🌵 early this May! #wnu2025 #NLProc

I've read one-off anecdotes of such rings across conferences. Has there been a study by chairs/organizers about how frequent this is?

06.01.2025 14:39 — 👍 0 🔁 0 💬 1 📌 0

In the early days of CS, reviewing loads were typically 20 papers. This required a much greater commitment from the reviewers, but results were much more consistent. For FOCS and STOC, reviewers read EVERY submission.

13.12.2024 18:50 — 👍 12 🔁 2 💬 3 📌 0

Thanks @mohitbansal.bsky.social for the wonderful Distinguished Lecture on agents and multimodal generation. This got so many of us here at Stony Brook excited for the potential in these areas. Also, thanks for spending time with our students & sharing your wisdom. It was a pleasure hosting you!

09.12.2024 12:49 — 👍 10 🔁 3 💬 1 📌 0

Why did @duolingoverde.bsky.social go with the villain vibe for the year in review this year? 🤔

05.12.2024 19:19 — 👍 5 🔁 0 💬 0 📌 0

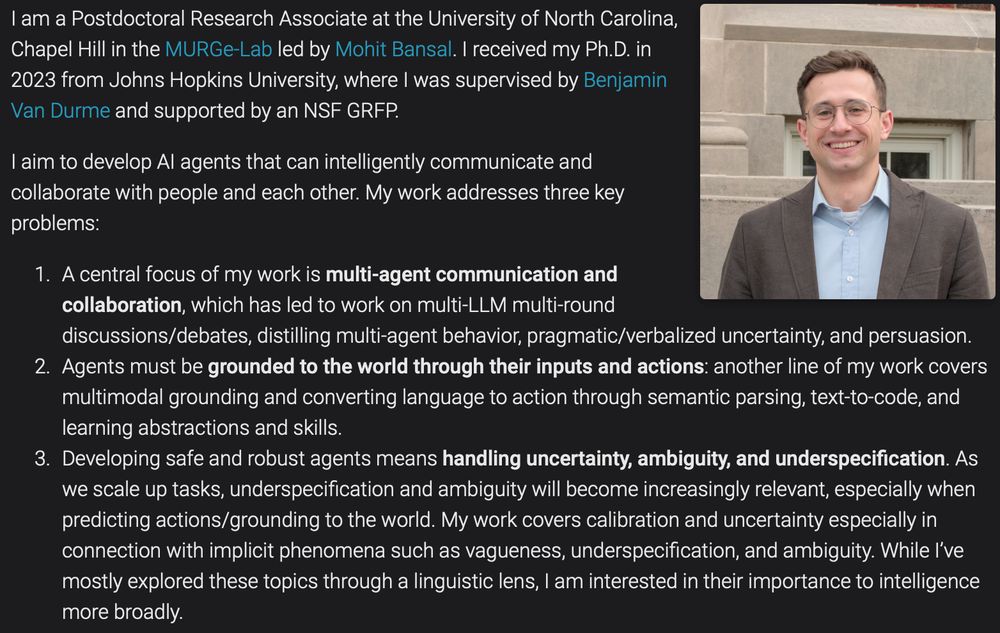

🚨 I am on the faculty job market this year 🚨

I will be presenting at #NeurIPS2024 and am happy to chat in-person or digitally!

I work on developing AI agents that can collaborate and communicate robustly with us and each other.

More at: esteng.github.io and in thread below

🧵👇

Looking forward to giving this Distinguished Lecture at StonyBrook next week & meeting the several awesome NLP + CV folks there - thanks Niranjan + all for the kind invitation 🙂

PS. Excited to give a new talk on "Planning Agents for Collaborative Reasoning and Multimodal Generation" ➡️➡️

🧵👇

Lots of posts about LLMs trivially generating websites. How do I prompt them to generate a full (static) website with CSS and JS too? The HTML is fine but I can't fix (a lot of) issues in the generated CSS and JS. I feel like we still need domain expertise to make sure these things work 😅

30.11.2024 21:57 — 👍 4 🔁 0 💬 1 📌 0

The question that a reviewer should ask themselves is:

Does this paper take a gradient step in a promising direction? Is the community better off with this paper published? If the answer is yes, then the recommendation should be to accept.

Releasing SmolVLM, a small 2-billion-parameter Vision+Language Model (VLM) built for on-device/in-browser inference with images/videos.

Outperforms all models at similar GPU RAM usage and token throughput.

Blog post: huggingface.co/blog/smolvlm

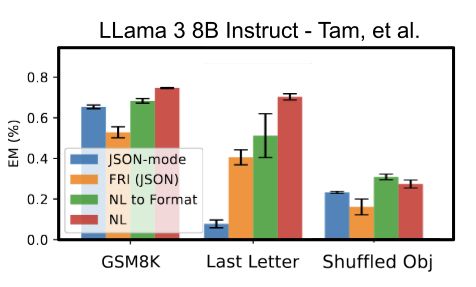

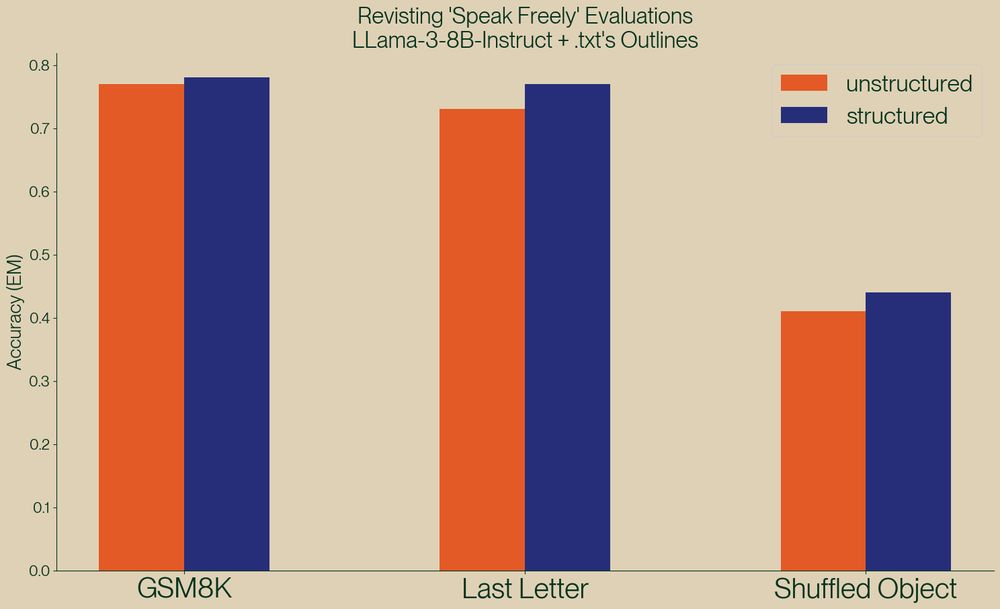

A new paper, "Let Me Speak Freely", has been spreading rumors that structured generation hurts LLM evaluation performance.

Well, we've taken a look and found serious issues in this paper, and shown, once again, that structured generation *improves* evaluation performance!

Free food might motivate grad students to do more 😂

24.11.2024 23:05 — 👍 2 🔁 0 💬 0 📌 0

The criteria to get accepted to ARR should be similar to TMLR. The presentation slots in conferences and workshops should be left to PCs to decide. Decouple the two completely.

24.11.2024 17:27 — 👍 2 🔁 1 💬 0 📌 0

One of my gripes with ARR (others have also raised this) is that reviews and scores are not tied to accept/reject decisions. But coupling them together brings it closer to the old review system again 😅

24.11.2024 17:11 — 👍 0 🔁 0 💬 1 📌 0

I noticed a lot of starter packs skewed towards faculty/industry, so I made one of just NLP & ML students: go.bsky.app/vju2ux

Students do different research, go on the job market, and recruit other students. Ping me and I'll add you!

An agent would be an LLM that does one specific task (or function, as you refer to it), such as plan (decide to delegate), summarize long context, or summarize short context.

23.11.2024 01:02 — 👍 0 🔁 0 💬 1 📌 0

Yes, a single system can delegate to multiple different 'agent' LLMs. So a multi-agent system is still just one system but has differing components inside (here, an agent that can summarize novels vs another that can summarize a news article).

23.11.2024 00:59 — 👍 0 🔁 0 💬 1 📌 0

I guess the system can be multi-agent because each prompt goes to an LLM and the final prompt can go to a different LLM altogether. E.g., get Claude to plan, get GPT-3.5 to do easy (sub)tasks, all within 1 'system'. Maybe if we use the LLM as a parameter to a function, we can call it a multi-function agent.

23.11.2024 00:49 — 👍 1 🔁 0 💬 1 📌 0

Without more specifics, two different agents (built on different models) might be better at using specific tools (B and D respectively) than a single agent equipped with both B and D.

23.11.2024 00:33 — 👍 1 🔁 0 💬 1 📌 0

Context length is one thing. But each model also has different 'strengths' that you can harness. Plus, combining outputs from different models means different 'perspectives' of agents you can leverage.

23.11.2024 00:27 — 👍 3 🔁 0 💬 2 📌 0

You can use different LLMs to 'act' as different role-based agents in 'multi-agent' systems, which is different from using the same agent equipped with different operations.

23.11.2024 00:21 — 👍 5 🔁 0 💬 3 📌 0

📢 NAACL reviews have been released!

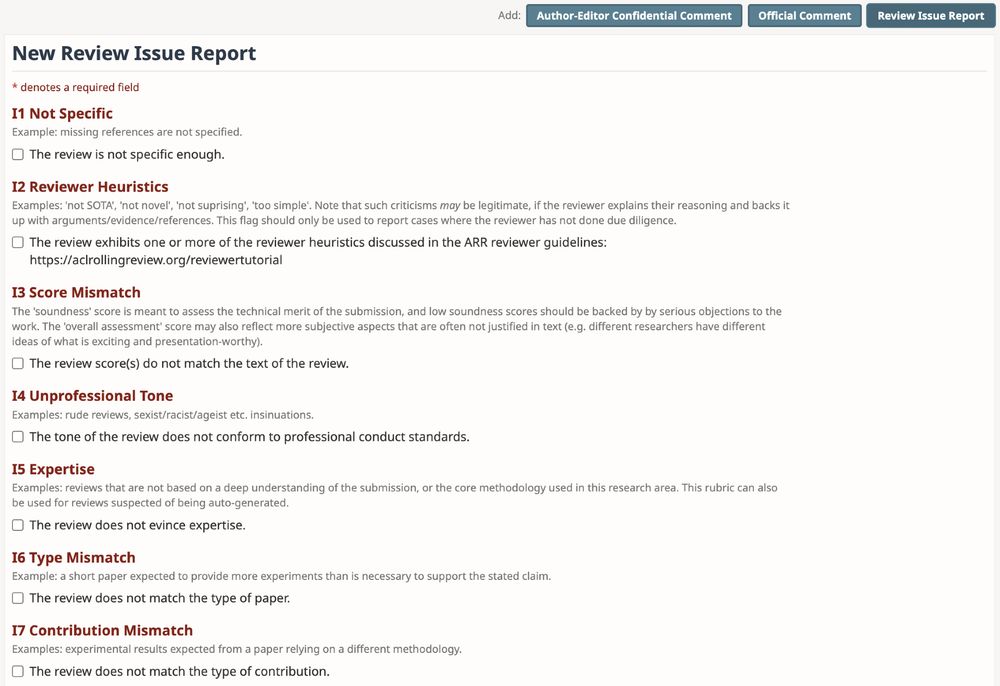

🆕 feature alert: ARR now has review issue flagging! Thanks @jkkummerfeld.bsky.social & OR team for help with implementation, and other EiCs for supporting the idea! It'll be live after author response. More details here: aclrollingreview.org/authors#step...

/1