🔗 Check it out:

👉 Project: m2svid.github.io

📄 Paper: arxiv.org/abs/2505.16565

💻 Code: (coming soon!)

@3dvconf.bsky.social

@ninashv.bsky.social

PhD student at the University of Tuebingen. Computer vision, video understanding, multimodal learning. https://ninatu.github.io/

📊 Results:

✅Higher Quality: Our approach outperforms previous state-of-the-art methods, being ranked best 2.6x more often than the second-place method in user studies.

✅Faster: Runs 6x faster than state-of-the-art competitors.

⚡ Moreover, unlike other methods, we generate a new view without iterative diffusion steps, by training end-to-end and minimizing image space losses.

16.12.2025 09:57 — 👍 0 🔁 0 💬 1 📌 0

💡 Our Solution: We extend Stable Video Diffusion to take the input video, the warped view (computed with an off-the-shelf depth model), and the disocclusion masks as inputs, and generate the view from the perspective of the other eye, fixing depth errors and inpainting gaps seamlessly.

16.12.2025 09:57 — 👍 0 🔁 0 💬 1 📌 0

🛑 The Problem: Warping a standard video to the perspective of the other eye is tricky. It creates empty "holes" (disocclusions) and messy depth artifacts where the depth model is inaccurate.

16.12.2025 09:57 — 👍 1 🔁 0 💬 1 📌 0
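
To make the "holes" concrete, here is a minimal sketch of depth-based forward warping on a single scanline. It is an illustration only, not the M2SVid code: the function name `forward_warp_row`, the integer non-negative disparities, and the "shift left for the other eye" convention are all assumptions made for this example.

```python
def forward_warp_row(row, disparity, fill=None):
    """Forward-warp one scanline by per-pixel disparity (illustrative only).

    Assumptions (not from the paper): disparities are non-negative integers,
    and the target view shifts content to the left by the disparity.

    Returns (warped_row, hole_mask). hole_mask[x] is True where no source
    pixel landed, i.e. a disocclusion that would need inpainting.
    """
    width = len(row)
    warped = [fill] * width
    best_disp = [-1] * width  # largest disparity written to each target so far

    for x in range(width):
        d = disparity[x]
        tx = x - d  # target column in the other view
        # When two source pixels land on the same target, keep the nearer
        # one (larger disparity), a simple stand-in for z-buffering.
        if 0 <= tx < width and d > best_disp[tx]:
            warped[tx] = row[x]
            best_disp[tx] = d

    hole_mask = [b < 0 for b in best_disp]
    return warped, hole_mask


if __name__ == "__main__":
    # A foreground object ("c", "d") with disparity 2 over a flat background.
    row = list("abcdef")
    disparity = [0, 0, 2, 2, 0, 0]
    warped, holes = forward_warp_row(row, disparity)
    print(warped)  # ['c', 'd', None, None, 'e', 'f']
    print(holes)   # [False, False, True, True, False, False]
```

The foreground pixels move left and cover the background, while the columns they vacate receive no source pixel at all. Those columns are the disocclusions. A wrong disparity value would also move a pixel to the wrong column, which is the kind of depth artifact described above.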

Do you want to watch monocular videos in a headset with an immersive 3D experience? We propose M2SVid, a novel architecture that converts standard videos into high-quality, temporally consistent stereo.

16.12.2025 09:57 — 👍 0 🔁 0 💬 1 📌 0

Excited to share our new paper: M2SVid: End-to-End Inpainting and Refinement for Monocular-to-Stereo Video Conversion! ACCEPTED by 3DV 2026!🎬

👉 Project: m2svid.github.io

📄 Paper: arxiv.org/abs/2505.16565

Done with Goutam Bhat, Prune Truong, @hildekuehne.bsky.social, and Federico Tombari 🧵👇

ICCV 2025 🌺 Aloha from Hawaii! MPI-INF (D2) is presenting 4 papers this year (one Highlight). Thread 👇

19.10.2025 07:48 — 👍 13 🔁 6 💬 1 📌 0

Super interesting insights!

16.09.2025 14:42 — 👍 2 🔁 0 💬 0 📌 0

🌍 11 ELLIS Members and Scholars from five countries have received ERC Starting Grants! Congratulations to all awardees! 👏

Last week @erc.europa.eu awarded 478 grants totaling €761M to support early-career researchers across Europe.

🔗 Learn more: ellis.eu/news/erc-awa...

🚀 UTD is now fully released!

Code ✅ Models ✅ 2M video descriptions ✅ Debiased splits for 12 datasets ✅

Everything you need to benchmark video models more fairly is now public:

🔗 github.com/ninatu/utd-p...

🎥 Let’s make video understanding actually about video understanding.

Everybody misses the 1-page rebuttal.

These lengthy forum style comments are a nightmare: a nightmare for the authors who spend way too much time writing them, a nightmare for the reviewers who spend too much time understanding them, a nightmare for the ACs who will have to summarize all. Stop it!

Finishing your PhD or just defended? Apply to the #ICCV2025 Doctoral Consortium. Get feedback and mentorship from leading researchers in computer vision.

Doctoral consortium info: iccv.thecvf.com/Conferences/...

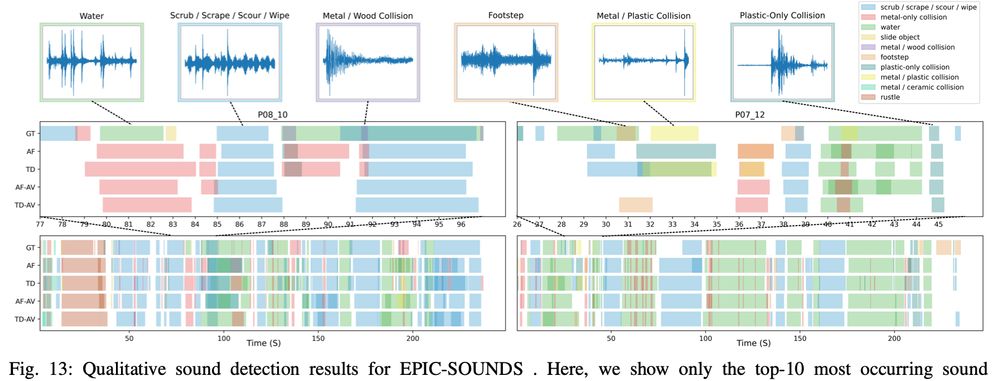

Extended EPIC-SOUND paper was accepted at TPAMI

arxiv.org/abs/2302.006...

This follows ICASSP 2023 oral, extended for detection and further analysis

epic-kitchens.github.io/epic-sounds/

work by @jaesunghuh.bsky.social Jacob Chalk @ekazakos.bsky.social

@oxford-vgg.bsky.social @bristoluni.bsky.social

Update on hidden prompts in papers targeting LLM reviews: ICML 2025 PCs react.

icml.cc/Conferences/...

Today, we release Franca, a new vision Foundation Model that matches and often outperforms DINOv2.

The data, the training code and the model weights are open-source.

This is the result of a close and fun collaboration between @valeoai.bsky.social (in France) and @funailab.bsky.social (in Franconia) 🚀

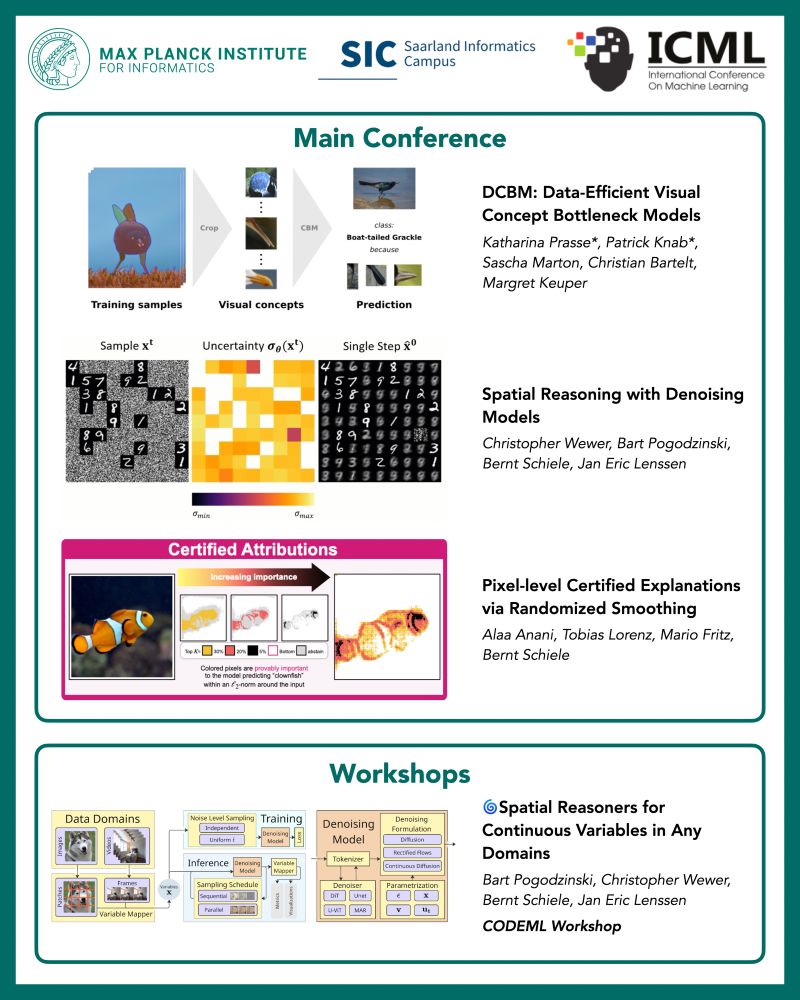

Papers accepted at ICML 2025 from the Computer Vision and Machine Learning Department at the Max Planck Institute for Informatics.

Papers being presented from our group at #ICML2025!

Congratulations to all the authors! To know more, visit us in the poster sessions!

A 🧵with more details:

@icmlconf.bsky.social @mpi-inf.mpg.de

Happening now! Check out the great work from Felix and Co. We improve video action grounding by >=10% on V-HICO and DALY (hope we didn't miss anyone)!

Fri 13 Jun 10:30 a.m. CDT — 12:30 p.m. CDT

ExHall D Poster #306

Paper: openaccess.thecvf.com/content/CVPR...

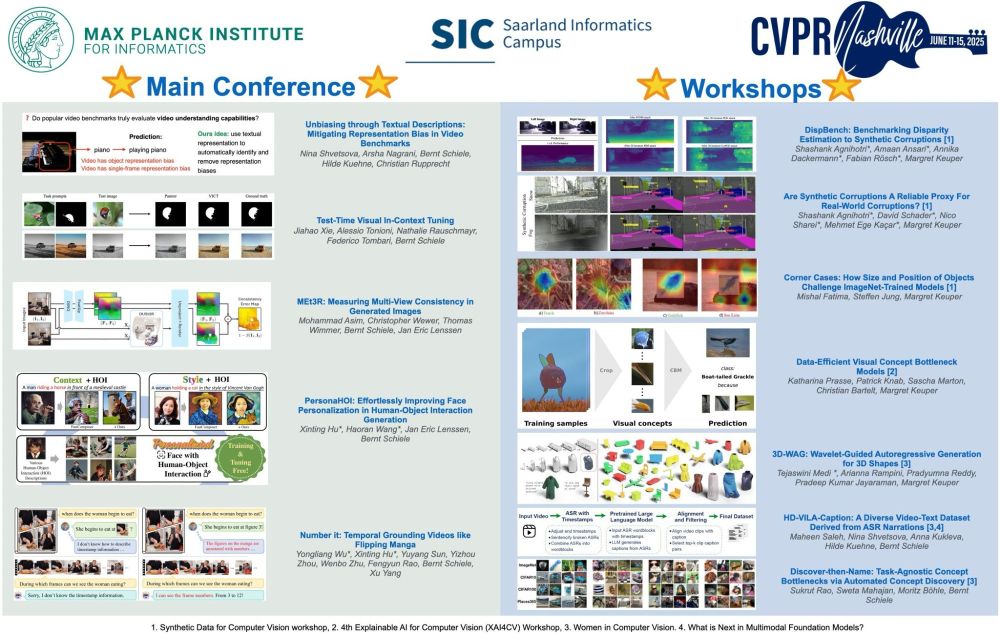

Thread: Workshop Papers from Our Lab at CVPR 2025! 🚀

👏 Huge congrats to our members on these workshop paper acceptances! Excited to see their work at #CVPR2025 🌟

#MPI-INF #D2 #Workshop #AI #ComputerVision #PhD

@mpi-inf.mpg.de

Thread: Main Conference Papers from Our Lab at CVPR 2025! 🚀

👏 Big congrats to everyone! Keep an eye out at #CVPR2025 🌟

#MPI-INF #D2 #ComputerVision #AI #PhD #ML

@mpi-inf.mpg.de

🎉 Exciting News #CVPR2025!

We’re proud to announce that we have 5 papers accepted to the main conference and 7 papers accepted at various CVPR workshops this year!

We’re looking forward to sharing our research with the community in Nashville!

Stay tuned for more details! @mpi-inf.mpg.de

Do you want to present your recently accepted or ongoing work @cvprconference.bsky.social #CVPR2025 EgoVis workshop?

Submit your abstract before the deadline of Fri 2 May:

egovis.github.io/cvpr25/#cfp

🚀Excited to announce our CVPR 2025 paper: Unbiasing through Textual Descriptions!

We release new descriptions for 1.9M(!) videos and object-debiased splits for 12 datasets!

🔗Project: utd-project.github.io

by @ninashv.bsky.social et al 🧵👇

@cvprconference.bsky.social