as AI increasingly supports shopping and ads, it’s worth remembering that retrieval often shapes who gets exposure in final generated output. in a recent paper, @teknology.bsky.social uses methods from fair ranking to assess and address exposure bias in downstream generation.

841.io/doc/fairrag....

31.12.2025 14:00 —

👍 9

🔁 3

💬 0

📌 1

The human factor in explainable artificial intelligence: clinician variability in trust, reliance, and performance - npj Digital Medicine

npj Digital Medicine - The human factor in explainable artificial intelligence: clinician variability in trust, reliance, and performance

Explainable AI is often assumed to build trust. A study of sonographers estimating gestational age found AI predictions improved accuracy, but explanations did not. In fact, explanations made some clinicians perform worse, highlighting user variability.

#MedSky #MLSky

14.11.2025 17:10 —

👍 5

🔁 2

💬 0

📌 0

Rising Stars in Data Science

Workshop: datascience.stanford.edu/programs/ris...

@utaustin.bsky.social

07.11.2025 18:32 —

👍 0

🔁 0

💬 0

📌 0

Thrilled to be selected for the 🎓 Rising Stars in Data Science Workshop! Grateful to @stanforddata.bsky.social, @HCID UC San Diego, and @dsi-uchicago.bsky.social for this opportunity.

Excited to share my work on trustworthy and collaborative AI and connect with amazing peers and mentors.

🔗 👇

07.11.2025 18:31 —

👍 2

🔁 0

💬 2

📌 0

Yes, more so with code for running quick experiments! i definitely want my code to NOT fail gracefully. (And save myself hours of debugging time because there is a default parameter somewhere I did not notice!)

24.10.2025 21:53 —

👍 4

🔁 1

💬 0

📌 0

Ah that makes sense! Thanks, yeah I am on that slack, hhh!

27.08.2025 14:06 —

👍 1

🔁 0

💬 0

📌 0

How can I get an invite for the XAI discord?

27.08.2025 13:12 —

👍 1

🔁 0

💬 1

📌 0

Thank you for making the list, could you please add me?

29.07.2025 13:31 —

👍 1

🔁 0

💬 1

📌 0

In a stunning moment of self-delusion, the Wall Street Journal headline writers admitted that they don't know how LLM chatbots work.

21.07.2025 01:48 —

👍 2957

🔁 471

💬 43

📌 89

What if you could understand and control an LLM by studying its *smaller* sibling?

Our new paper introduces the Linear Representation Transferability Hypothesis. We find that the internal representations of different-sized models can be translated into one another using a simple linear(affine) map.

10.07.2025 17:26 —

👍 25

🔁 10

💬 1

📌 1

To Spot Toxic Speech Online, Try AI - McCombs News and Magazine

A new tool helps balance accuracy with fairness toward all groups in social media

McCombs article: news.mccombs.utexas.edu/research/to-...

Paper url: doi.org/10.47989/ir3...

@utaustin.bsky.social

@texasscience.bsky.social

@engagingnews.bsky.social

@utischool.bsky.social

#TexasAI

#YearofAI

06.06.2025 15:10 —

👍 0

🔁 0

💬 0

📌 0

Can content moderation models balance accuracy & fairness?

UT McCombs news featured our iConference paper by Soumyajit Gupta on optimizing the fairness-accuracy tradeoff in toxicity detection. In collaboration with Venelin Kovatchev @mariadearteaga.bsky.social @mattlease.bsky.social

06.06.2025 15:06 —

👍 0

🔁 0

💬 1

📌 1

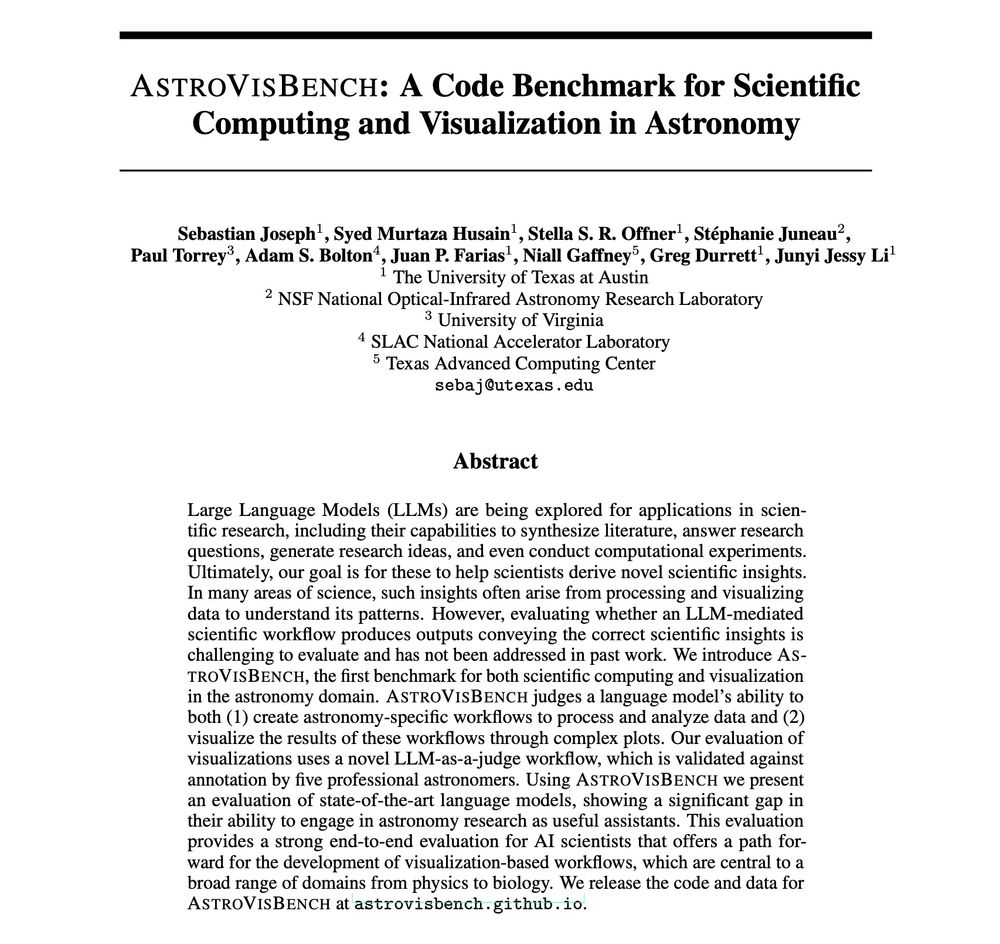

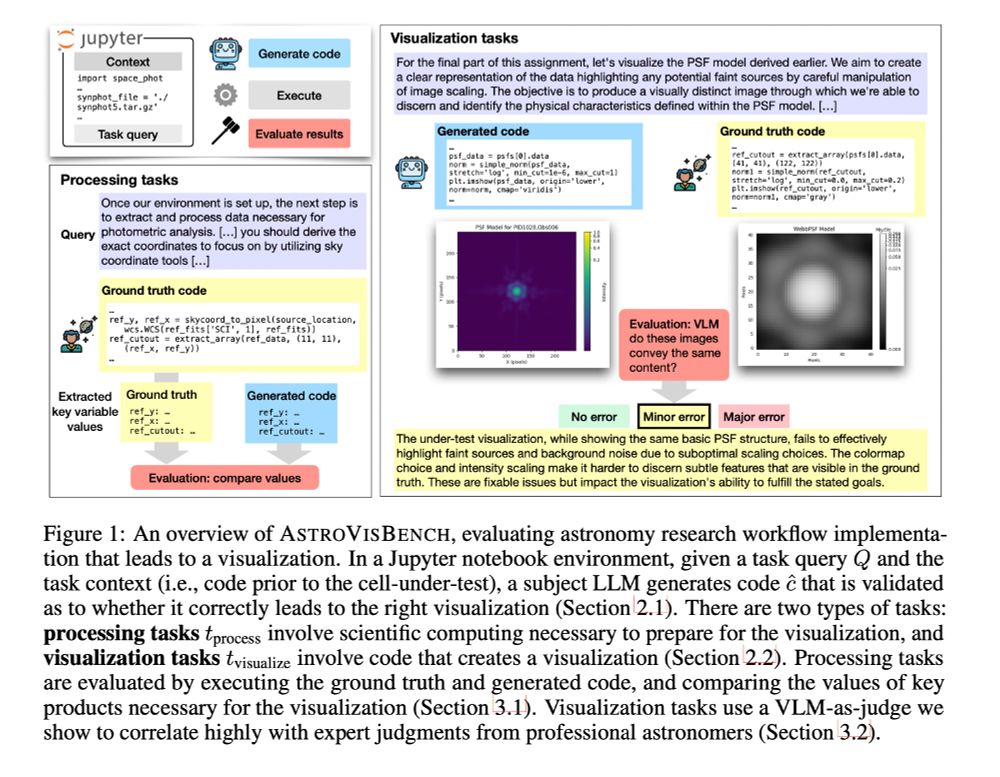

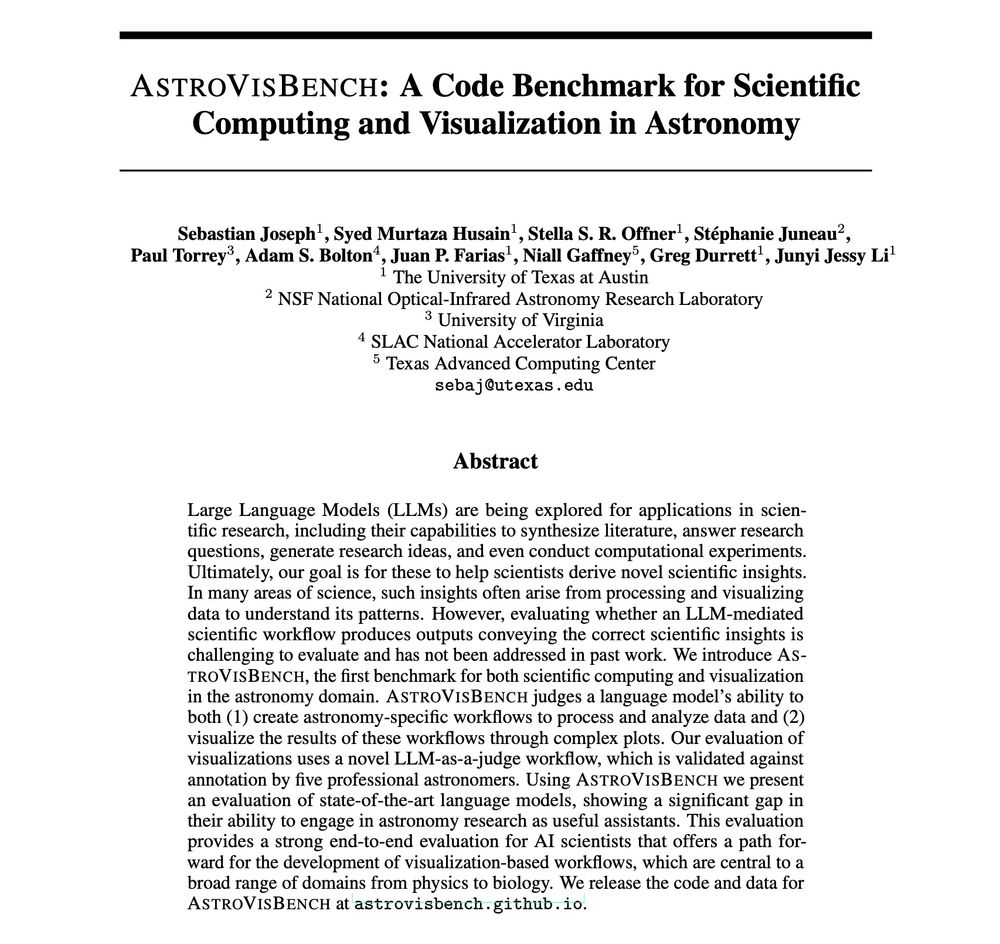

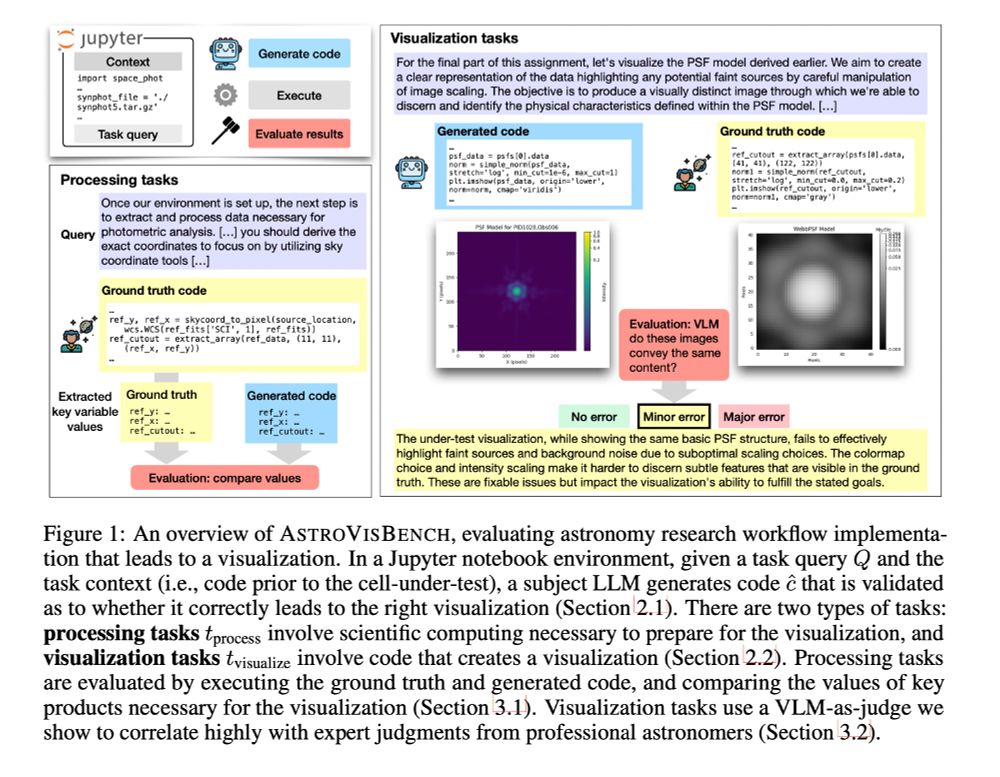

How good are LLMs at 🔭 scientific computing and visualization 🔭?

AstroVisBench tests how well LLMs implement scientific workflows in astronomy and visualize results.

SOTA models like Gemini 2.5 Pro & Claude 4 Opus only match ground truth scientific utility 16% of the time. 🧵

02.06.2025 15:41 —

👍 10

🔁 2

💬 1

📌 4

#NAACL2025

03.05.2025 15:27 —

👍 1

🔁 0

💬 0

📌 0

Please join us for the TrustNLP workshop (215 San Miguel) @naaclmeeting.bsky.social #trustNLP2025

03.05.2025 15:25 —

👍 1

🔁 0

💬 0

📌 1

Session detail:

Poster Session 5 - IAM: Interpretability and Analysis of Models for NLP, Hall 3

01.05.2025 05:27 —

👍 0

🔁 0

💬 0

📌 0

This is a collaborative work with Manoj Kumar, Ninareh Mehrabi, Anil Ramakrishna, Anna Rumshisky, Kai-Wei Chang, Aram Galstyan, Morteza Ziyadi, Rahul Gupta

01.05.2025 05:26 —

👍 0

🔁 0

💬 1

📌 0

Causal tracing informed edits provide a better detoxification-degeneration trade-off.

01.05.2025 05:25 —

👍 0

🔁 0

💬 1

📌 0

Model editing helps reduce toxicity. High detoxification can be achieved by simply editing random MLP layers. However, this leads to degeneration and increased perplexity.

01.05.2025 05:25 —

👍 0

🔁 0

💬 1

📌 0

We find evidence of toxic memory in the early layer of GPT-2 XL for innocuous-looking adversarial prompts.

01.05.2025 05:25 —

👍 0

🔁 0

💬 1

📌 0

Paper: On Localizing and Deleting Toxic Memories in Large Language Models

Anthology URL: aclanthology.org/2025.finding...

01.05.2025 05:24 —

👍 0

🔁 0

💬 1

📌 0

Excited to present my internship work at

Amazon AGI at @naaclmeeting.bsky.social tomorrow at 2:00 pm local time. Please come say hi if you are around.

01.05.2025 05:21 —

👍 3

🔁 1

💬 1

📌 0

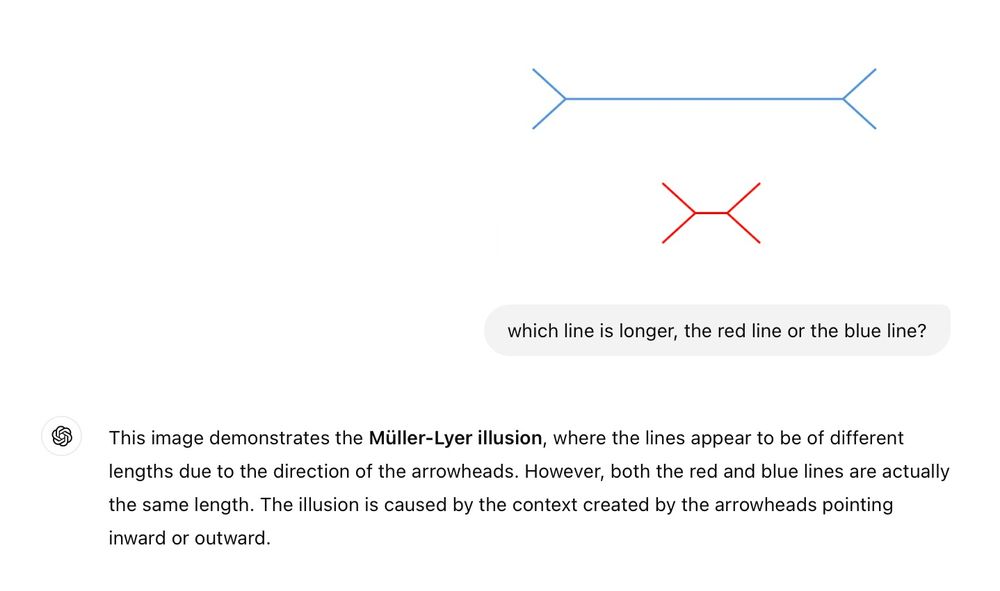

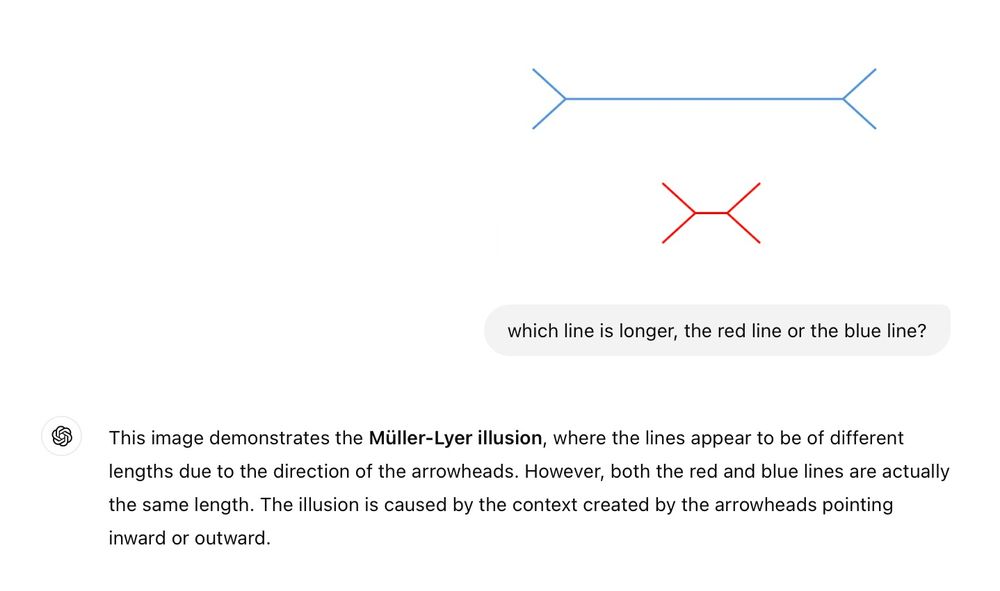

thinking of calling this "The Illusion Illusion"

(more examples below)

01.12.2024 14:33 —

👍 1582

🔁 386

💬 60

📌 91

Created a small starter pack including folks whose work I believe contributes to more rigorous and grounded AI research -- I'll grow this slowly and likely move it to a list at some point :) go.bsky.app/P86UbQw

30.11.2024 19:58 —

👍 12

🔁 5

💬 1

📌 0

NeurIPS Test of Time Awards:

Generative Adversarial Nets

Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, Yoshua Bengio

Sequence to Sequence Learning with Neural Networks

Ilya Sutskever, Oriol Vinyals, Quoc V. Le

27.11.2024 17:32 —

👍 311

🔁 28

💬 6

📌 4

Right, sorry for being unclear. I saw your comment sharing the Qualtrics integration tutorial with a video. bsky.app/profile/dggo...

25.11.2024 21:33 —

👍 1

🔁 0

💬 1

📌 0

Nvm, found it!

25.11.2024 17:05 —

👍 0

🔁 0

💬 2

📌 0

Will there be a video for this talk?

25.11.2024 17:04 —

👍 0

🔁 0

💬 1

📌 0

🙋🏽

24.11.2024 04:25 —

👍 2

🔁 0

💬 0

📌 0