A favorite recent image of some busy microglia, lovingly named the Rat King (yellow CD68, pink Iba1, blue DAPI) for my first #FluorescenceFriday

12.12.2025 14:38 — 👍 42 🔁 7 💬 2 📌 1

This is a glimpse of the cytoskeletal structures we found doing U-ExM in more than 200 species, now in Cell🤩: shorturl.at/6oHvi

Amazing collaboration between @centriolelab.bsky.social , @dudinlab.bsky.social and @gautamdey.bsky.social labs.

I believe last one is a 🕷️

#FluorescenceFriday

#Microscopy

Congratulations to all the Democratic candidates who won tonight. It’s a reminder that when we come together around strong, forward-looking leaders who care about the issues that matter, we can win. We’ve still got plenty of work to do, but the future looks a little bit brighter.

05.11.2025 02:47 — 👍 61767 🔁 11148 💬 1183 📌 516

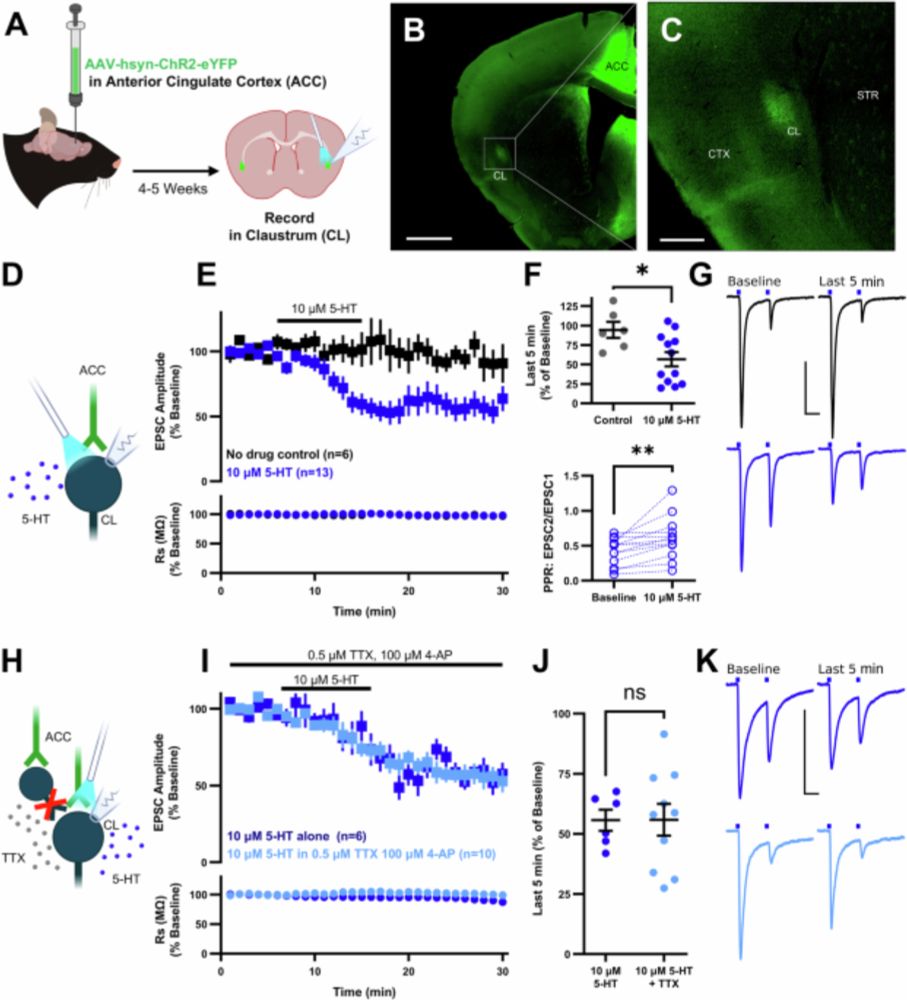

Check out my most recent paper published in #eNeuro

A pre and post spiking protocol depresses claustrocortical EPSPs, unless you perform the same protocol in the presence of DOI, then the polarity reverses into potentiation! We also see some DOI effects on spike kinetics.

Just out in Nature! “The astrocytic ensemble acts as a multiday trace to stabilize memory.” We identified astrocytic ensembles that link experiences across days to stabilize memory nature.com/articles/s41.... New astrocyte tools are openly available at Addgene: addgene.org/Jun_Nagai/. 1/8

15.10.2025 16:11 — 👍 82 🔁 27 💬 4 📌 3

This is really helpful! Thank you.

How high/low should I be looking for with those numbers? What numbers would you say are acceptable?

The rig will be used for fluorescence-guided patch, but I have a secret hope to combine it with 1P-calcium sensor imaging for a maybe experiment.

29.10.2025 18:44 — 👍 1 🔁 0 💬 1 📌 0

Currently building a patch ephys rig and agonizing over camera selection. Do any patchers have any advice on what specifications I should be prioritizing? Also, has anyone used the Thorlabs Kiralux sCMOS cameras? 🧪

29.10.2025 18:44 — 👍 3 🔁 3 💬 1 📌 0

The rig will be used for fluorescence-guided patch, but I have a secret hope to combine it with 1P-calcium sensor imaging for an experiment.

29.10.2025 18:40 — 👍 0 🔁 0 💬 0 📌 0

While ChatGPT use has been linked to suicides and mental-health hospitalizations among heavy users, this appears to be the first documented murder involving a troubled person who had been engaging extensively with an AI chatbot. Soelberg posted hours of videos of himself scrolling through his conversations with ChatGPT on social media in the months before he died. The tone and language of the conversations are strikingly similar to the delusional chats many other people have been reporting in recent months. A key feature of AI chatbots is that, generally, the bot “doesn’t push back,” said Dr. Keith Sakata, a psychiatrist at the University of California, San Francisco who has treated 12 patients over the past year who were hospitalized for mental-health emergencies involving AI use. “Psychosis thrives when reality stops pushing back, and AI can really just soften that wall.” OpenAI said ChatGPT encouraged Soelberg to contact outside professionals. The Wall Street Journal’s review of his publicly available chats showed the bot suggesting he reach out to emergency services in the context of his allegation that he’d been poisoned.

"While ChatGPT use has been linked to suicides and mental-health hospitalizations among heavy users, this appears to be the first documented murder involving a troubled person who had been engaging extensively with an AI chatbot."

29.08.2025 01:12 — 👍 581 🔁 144 💬 7 📌 22

Abstract: Under the banner of progress, products have been uncritically adopted or even imposed on users — in past centuries with tobacco and combustion engines, and in the 21st with social media. For these collective blunders, we now regret our involvement or apathy as scientists, and society struggles to put the genie back in the bottle. Currently, we are similarly entangled with artificial intelligence (AI) technology. For example, software updates are rolled out seamlessly and non-consensually, Microsoft Office is bundled with chatbots, and we, our students, and our employers have had no say, as it is not considered a valid position to reject AI technologies in our teaching and research. This is why in June 2025, we co-authored an Open Letter calling on our employers to reverse and rethink their stance on uncritically adopting AI technologies. In this position piece, we expound on why universities must take their role seriously to a) counter the technology industry’s marketing, hype, and harm; and to b) safeguard higher education, critical thinking, expertise, academic freedom, and scientific integrity. We include pointers to relevant work to further inform our colleagues.

Figure 1. A cartoon set theoretic view on various terms (see Table 1) used when discussing the superset AI (black outline, hatched background): LLMs are in orange; ANNs are in magenta; generative models are in blue; and finally, chatbots are in green. Where these intersect, the colours reflect that, e.g. generative adversarial network (GAN) and Boltzmann machine (BM) models are in the purple subset because they are both generative and ANNs. In the case of proprietary closed source models, e.g. OpenAI’s ChatGPT and Apple’s Siri, we cannot verify their implementation and so academics can only make educated guesses (cf. Dingemanse 2025). Undefined terms used above: BERT (Devlin et al. 2019); AlexNet (Krizhevsky et al. 2017); A.L.I.C.E. (Wallace 2009); ELIZA (Weizenbaum 1966); Jabberwacky (Twist 2003); linear discriminant analysis (LDA); quadratic discriminant analysis (QDA).

Table 1. Below some of the typical terminological disarray is untangled. Importantly, none of these terms are orthogonal nor do they exclusively pick out the types of products we may wish to critique or proscribe.

Protecting the Ecosystem of Human Knowledge: Five Principles

Finally! 🤩 Our position piece: Against the Uncritical Adoption of 'AI' Technologies in Academia:

doi.org/10.5281/zeno...

We unpick the tech industry’s marketing, hype, & harm; and we argue for safeguarding higher education, critical thinking, expertise, academic freedom, & scientific integrity.

1/n

Here's a look at a field of striatal astrocytes expressing GCaMP6f-cyto from the Huda Lab for #FluorescenceFriday !

05.09.2025 18:39 — 👍 63 🔁 13 💬 0 📌 1

Thanks! We gotta catch up sometime soon.

26.08.2025 12:02 — 👍 1 🔁 0 💬 0 📌 0

Thank you!

21.08.2025 13:31 — 👍 0 🔁 0 💬 0 📌 0

Hello, I would like to be added to the science feed please!

www.nature.com/articles/s41...

Excited my PhD work in @brianmathur.bsky.social’s lab is now published and available!

21.08.2025 02:40 — 👍 22 🔁 8 💬 1 📌 2