London #neuroscience people, you may like this. We're hosting a series of talks at Imperial & Crick on how to get experiment and theory working together better. Each session will have a talk on this theme, followed by extended networking and group discussion of the questions raised. Plus, free food!

🤖🧠🧪

26.02.2026 14:27

PhD opportunities

The School of Convergence Science at Imperial College London is inviting applications for fully funded PhD studentships on research projects that sup...

PhD opportunity in "The role of neural development in multimodal intelligence" with me, @marcusghosh.bsky.social and @flor-iacaruso.bsky.social. Read details below 👇.

Note there's a very short window for applying (deadline Feb 27).

🤖🧠🧪

www.imperial.ac.uk/school-of-co...

11.02.2026 18:41

Toy models, just in time for Christmas!

Excited to share my first article for @thetransmitter.bsky.social

#neuroskyence

22.12.2025 15:39

Brains have many pathways / subnetworks, but which principles underlie their formation?

In our #NeurIPS paper led by Jack Cook we identify biologically relevant inductive biases that create pathways in brain-like Mixture-of-Experts models🧵

#neuroskyence #compneuro #neuroAI

arxiv.org/abs/2506.02813
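For readers new to Mixture-of-Experts models, here is a minimal, generic sketch of top-k routing in plain Python: a gating network scores each expert, and only the highest-scoring experts process the input, so different inputs flow through different "pathways". This is a textbook MoE layer, not the model or the inductive biases from the paper; the function names and toy weights are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]

def moe_forward(x, gate_weights, experts, top_k=1):
    """Generic Mixture-of-Experts forward pass with top-k routing.

    gate_weights: one row per expert; each row produces that expert's routing score.
    experts: list of weight matrices, each a linear 'pathway'.
    Only the top_k experts (by gate probability) are evaluated, and their
    probabilities are renormalised to sum to 1 before mixing their outputs.
    """
    probs = softmax(matvec(gate_weights, x))
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    out = [0.0] * len(experts[0])
    for i in chosen:
        y = matvec(experts[i], x)
        out = [o + (probs[i] / norm) * yi for o, yi in zip(out, y)]
    return out, chosen

# Toy example (hypothetical weights): expert 0 scores on x[0], expert 1 on x[1].
gate = [[1.0, 0.0], [0.0, 1.0]]
experts = [[[2.0, 0.0], [0.0, 2.0]],    # expert 0 doubles its input
           [[-1.0, 0.0], [0.0, -1.0]]]  # expert 1 negates it
out, chosen = moe_forward([3.0, 1.0], gate, experts)
print(chosen, out)  # [0] [6.0, 2.0]
```

With `top_k=1` each input takes exactly one pathway; raising `top_k` blends several experts, which is one knob that controls how separated the pathways are.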

21.11.2025 12:01

thanks! will check and follow this.

14.11.2025 12:58

Thanks for this insightful comment. I expect that low-precision weights and Gaussian noise could play a similar role as sources of imprecision, and that delays (or other parameters) offer additional degrees of freedom that can compensate for it. It's a good direction to explore further.
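A standard result makes the comparison between low-precision weights and Gaussian noise quantitative: rounding to a grid with spacing Δ produces an error that is roughly uniform on [-Δ/2, Δ/2], with variance Δ²/12, so Gaussian noise with σ = Δ/√12 injects the same average amount of imprecision. The sketch checks this numerically in plain Python. It assumes the rounded values are spread over many grid steps (otherwise the uniform-error approximation breaks down); the step size and sample count are arbitrary choices.

```python
import math

def rounding_mse(step, n_samples=100_000):
    """Mean squared error from rounding evenly spread values to a grid of spacing `step`.

    Samples are deterministic midpoints spread over 100 grid cells,
    so the rounding error is close to uniform on [-step/2, step/2].
    """
    total = 0.0
    for i in range(n_samples):
        x = (i + 0.5) / n_samples * 100 * step
        q = round(x / step) * step          # nearest grid point
        total += (x - q) ** 2
    return total / n_samples

step = 0.25                                 # hypothetical quantisation step
mse = rounding_mse(step)
theory = step ** 2 / 12                     # variance of uniform rounding error
matched_sigma = math.sqrt(theory)           # Gaussian std with the same variance
print(mse, theory, matched_sigma)
```

Matching variances is only a first-order comparison: quantisation error is bounded and deterministic given the weight, whereas Gaussian noise is unbounded and resampled, so the two may still affect learning differently.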

14.11.2025 12:20

This might suggest that a large fraction of computational power may actually live in the temporal parameters, not just in the weights.

13.11.2025 20:52

With my great advisors and colleagues, @achterbrain.bsky.social @zhe @danakarca.bsky.social @neural-reckoning.org, we show that if heterogeneous axonal delays, even imprecise ones, can capture the essential temporal structure of a task, spiking networks do not need precise synaptic weights to perform well.
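To make the intuition concrete, here is a toy coincidence detector in plain Python: each input channel has its own axonal delay, and the neuron fires only when enough delayed spikes arrive together. With every weight set to 1 (effectively one-bit weights), the delays alone make it respond to one temporal order of inputs but not the reverse. This is a hypothetical illustration, not the spiking model from the preprint; the function names, window and threshold are assumptions.

```python
def delayed_arrivals(input_spikes, delays):
    """Shift each input channel's spike times by that channel's axonal delay."""
    return [[t + d for t in spikes] for spikes, d in zip(input_spikes, delays)]

def coincidence_neuron(input_spikes, delays, weights, threshold, window=1.0):
    """Return output spike times of a crude coincidence detector.

    The neuron fires at time t0 when the weighted count of delayed spikes
    in [t0, t0 + window) reaches `threshold`; after firing it stays silent
    for one window. This is not a full leaky integrate-and-fire model.
    """
    events = sorted(
        (t, w)
        for spikes, w in zip(delayed_arrivals(input_spikes, delays), weights)
        for t in spikes
    )
    out = []
    for t0, _ in events:
        drive = sum(w for t, w in events if t0 <= t < t0 + window)
        if drive >= threshold and (not out or t0 - out[-1] >= window):
            out.append(t0)
    return out

# Inputs fire in sequence at t = 0, 2, 4; delays 4, 2, 0 align them at t = 4.
print(coincidence_neuron([[0.0], [2.0], [4.0]], [4.0, 2.0, 0.0], [1.0, 1.0, 1.0], threshold=3.0))  # [4.0]
# Same delays, reversed input order: arrivals are spread out, so no output spike.
print(coincidence_neuron([[4.0], [2.0], [0.0]], [4.0, 2.0, 0.0], [1.0, 1.0, 1.0], threshold=3.0))  # []
```

Here the temporal selectivity lives entirely in the delays; the weights only need to be large enough in total to cross threshold.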

13.11.2025 20:51

Exploiting heterogeneous delays for efficient computation in low-bit neural networks

Neural networks rely on learning synaptic weights. However, this overlooks other neural parameters that can also be learned and may be utilized by the brain. One such parameter is the delay: the brain...

Psst - neuromorphic folks. Did you know that you can solve the SHD dataset with 90% accuracy using only 22 kb of parameter memory by quantising weights and delays? Check out our preprint with @pengfei-sun.bsky.social and @danakarca.bsky.social, or read the TLDR below. 👇🤖🧠🧪 arxiv.org/abs/2510.27434
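For a sense of the arithmetic behind a parameter-memory figure like this, here is a hedged sketch of uniform n-bit quantisation plus a memory count, in plain Python. The function names, bit widths and parameter counts are hypothetical and are not the network or the quantisation scheme from the preprint. The post's "kb" does not say whether it means kilobits or kilobytes; the sketch reports kilobytes (1 kB = 1000 bytes).

```python
def quantise_uniform(values, n_bits, lo, hi):
    """Round each value to the nearest of 2**n_bits evenly spaced levels in [lo, hi].

    Values outside [lo, hi] are clipped first.
    """
    steps = 2 ** n_bits - 1                  # gaps between adjacent levels
    step = (hi - lo) / steps
    out = []
    for v in values:
        v = min(max(v, lo), hi)
        out.append(lo + round((v - lo) / step) * step)
    return out

def parameter_memory_kb(n_weights, weight_bits, n_delays, delay_bits):
    """Parameter memory in kilobytes for quantised weights and delays."""
    total_bits = n_weights * weight_bits + n_delays * delay_bits
    return total_bits / 8 / 1000

# Hypothetical network: 10,000 weights and 10,000 delays, 4 bits each.
print(parameter_memory_kb(10_000, 4, 10_000, 4))  # 10.0
print(quantise_uniform([0.0, 0.26, 1.0, 2.0], n_bits=2, lo=0.0, hi=1.0))
```

Storing a delay costs bits just like a weight does, so the memory budget depends on how far both can be quantised without losing accuracy.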

13.11.2025 17:40

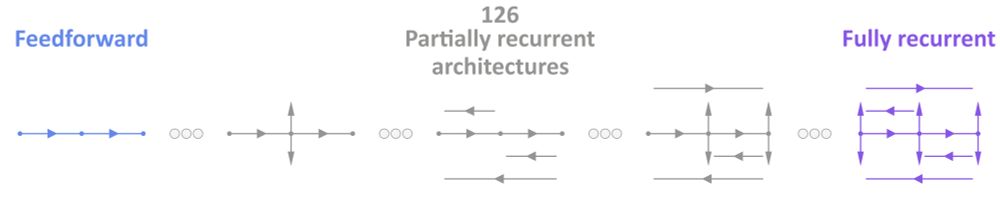

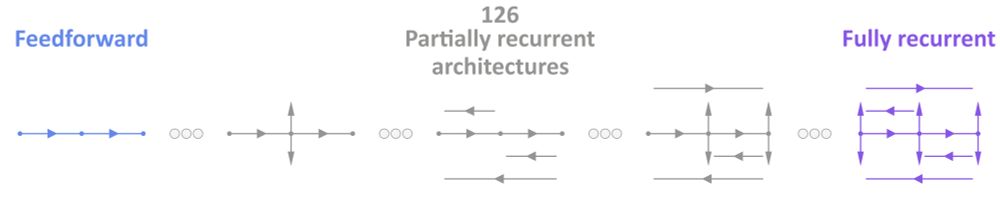

A figure showing a range of neural network architectures.

From Ghosh & Goodman, 2025, bioRxiv.

Interested in #neuroscience + #AI and looking for a PhD position?

I can support your application @imperialcollegeldn.bsky.social

✅ Check your eligibility (below)

✅ Contact me (DM or email)

UK nationals: www.imperial.ac.uk/life-science...

Otherwise: www.imperial.ac.uk/study/fees-a...

04.11.2025 09:47

SNUFA 2025

Spiking Neural networks as Universal Function Approximators

Spiking NN fans - the #SNUFA workshop (Nov 5-6) agenda is finalised and online now. Make sure to register (free) soon. (Note you can register for either day and come to both.)

Agenda: snufa.net/2025/

Registration: www.eventbrite.co.uk/e/snufa-2025...

Thanks to all who voted on abstracts!

🤖🧠🧪

23.10.2025 16:17

Message for participants of the #SNUFA 2025 spiking neural network workshop. We got almost 60 awesome abstract submissions, and we'd now like your help to select which ones should be offered talks. Follow the "abstract voting" link at snufa.net/2025/ to take part. It should take <15m. Thanks! ❤️

01.10.2025 19:16

Submissions (short!) due for SNUFA spiking neural networks conference in <2 weeks! 🤖🧠🧪

forms.cloud.microsoft/e/XkZLavhaJe

More info at snufa.net/2025/

Note that we normally get around 700 participants and recordings go on YouTube and get 100s-1000s views.

Please repost.

16.09.2025 09:33

Diagram of how the "collaborative modelling of the brain" (COMOB) project started. Starting material led to group research or solo research, coming together in monthly online workshops in an iterative cycle, finishing with writing up together. The diagram is illustrated with colourful cartoon blob characters.

Is anarchist science possible? As an experiment, we got together a large group of computational neuroscientists from around the world to work on a single project without top down direction. Read on to find out what happened. 🤖🧠🧪

04.09.2025 14:59

Yeah robots are definitely not taking over the world.

Not the red one, at least. 🤣

01.09.2025 11:54

Spiking neural networks people, this message is for you!

The annual SNUFA workshop is now open for abstract submission (deadline Sept 26) and (free) registration. This year's speakers include Elisabetta Chicca, Jason Eshraghian, Tomoki Fukai, Chengcheng Huang, and... you?

snufa.net/2025/

🤖🧠🧪

07.08.2025 11:56

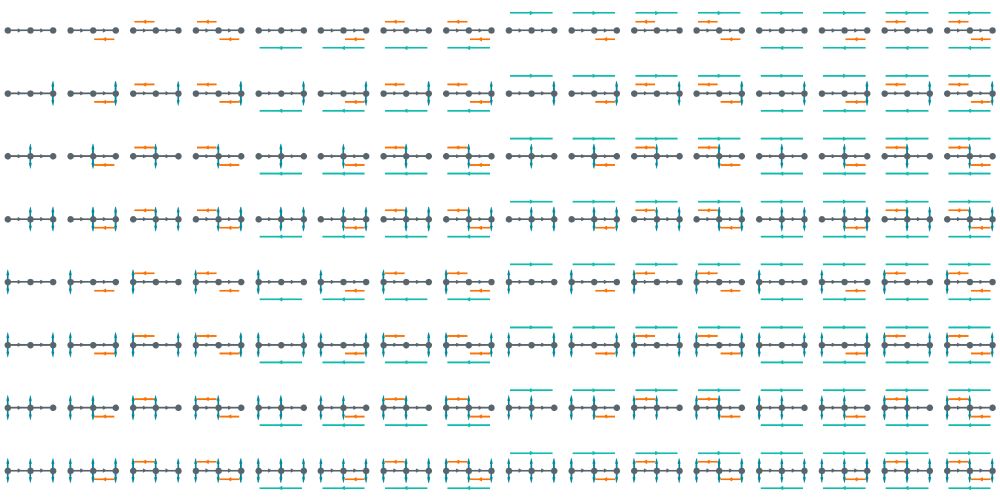

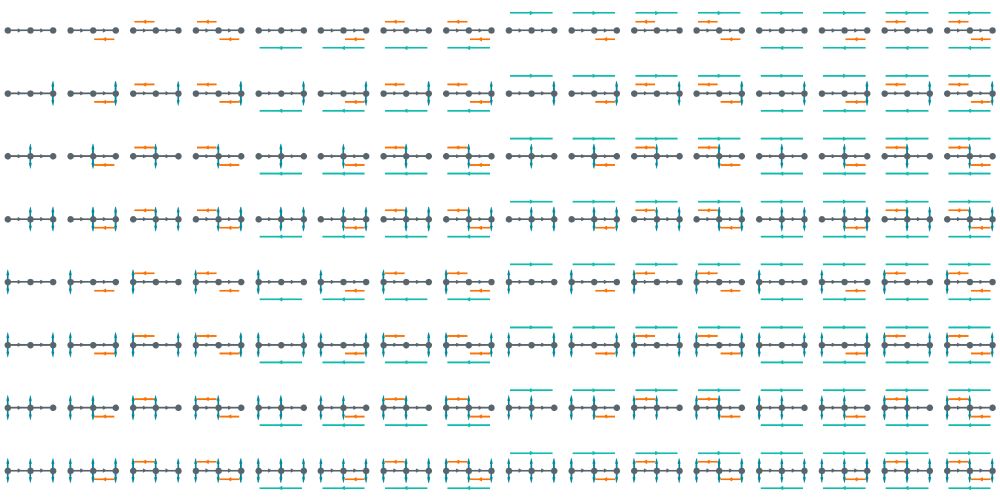

A diagram showing 128 neural network architectures.

How does the structure of a neural circuit shape its function?

@neuralreckoning.bsky.social & I explore this in our new preprint:

doi.org/10.1101/2025...

🤖🧠🧪

🧵1/9

01.08.2025 08:26

Hiring a post-doc at Imperial in EEE. Broad in scope + flexible on topics: neural networks & new AI accelerators from a HW/SW co-design perspective!

w/ @neuralreckoning.bsky.social @achterbrain.bsky.social in Intelligent Systems and Networks group.

Plz share! 🚀: www.imperial.ac.uk/jobs/search-...

25.07.2025 13:27

Together with my supervisor and the Neural Reckoning team (@neuralreckoning.bsky.social), we've developed a new SHD/SSC variant that strips out rate information but keeps spike-timing information, designed to really challenge SNNs. Your feedback is welcome: let us know how it performs in your models!

24.07.2025 17:47

Fusing multisensory signals across channels and time.

Now published at PLOS Comp Biol! 🎉 With @swathianil.bsky.social and @marcusghosh.bsky.social.

journals.plos.org/ploscompbiol...

TLDR: when multisensory signals vary over time, neural architecture becomes important. Biggest is not always best.

10.06.2025 15:21

Abstract submissions are now open for the UK Neural Computation Conference! (Deadline 12th May).

We also have ARIA sponsored travel funds for ECRs! 💥

Submit here: neuralcomputation.uk/submission.html

General info: neuralcomputation.uk

Registration opens in May. Any qs, get in touch + plz share!

06.03.2025 15:21

For our next UCL #NeuroAI online seminar, we are happy to be hosting Dr Dan Goodman @neuralreckoning.bsky.social (@imperialcollegeldn.bsky.social).

🗓️Wed 12 Feb 2025

⏰2-3pm GMT

Talk title: Neural architectures: what are they good for anyway?

04.02.2025 10:49