🦸🏻#17: What is A2A and why is it – still! – underappreciated?

A Blog post by Ksenia Se on Hugging Face

If you're building with agents, or planning to, this is the protocol to watch.

In our deep dive into A2A, you'll learn how it works, how to get started with it, whether MCP and A2A are competitors, and whether Google might use it to index every agent on the internet 👇

Enjoy and leave your feedback!

10.05.2025 08:45 —


• Specialist agents working together like modular teams

• Easy cross-enterprise workflows

• Standardized human-in-the-loop collaboration between AI and people

• And even a searchable, internet-scale agents directory

10.05.2025 08:45 —


Why is A2A important?

Most AI agents today live in silos. @Google’s A2A protocol aims to be the “common language” that lets them collaborate.

A2A could unlock many possibilities:

10.05.2025 08:45 —


People want to understand agentic infrastructure protocols better. The strong response to our MCP article shows there’s real demand for clarity around the standardization of AI ecosystems.

Since so many people asked, we are making our article on Agent2Agent (A2A) free to read on @hf.co

🧵

10.05.2025 08:45 —


Can Liquid Models Beat Transformers? Meet Hyena Edge – the Newest Member of the LFM Family

We discuss a new wave of architectures from Liquid AI: built from first principles, optimized for real hardware, and challenging the Transformer playbook with smarter, leaner models.

Hyena Edge is an experimental convolutional multi-hybrid model that runs efficiently on smaller devices like your phone. At its core, it replaces 2/3 of attention with fast convolutions and gating.

And Liquid AI are working on something even more interesting.

How can their models beat Transformers? 👇

05.05.2025 08:33 —


The most important features of LFMs (Liquid Foundation Models) from Liquid AI?

Memory efficiency and inference speed, without compromising model quality.

LFMs have been benchmarked on real hardware, proving that they can beat Transformers.

Liquid AI have also just released Hyena Edge👇

05.05.2025 08:33 —


YouTube video by Turing Post

When Will We Stop Coding? A conversation with Amjad Masad, CEO and co-founder @ Replit

What happens when the biggest advocate for coding literacy starts telling people not to learn to code?

In the new Inference episode, I sat down with Amjad Masad, CEO and co-founder of Replit, to discuss the evolution of coding.

Are we entering a post-coding world?

www.youtube.com/watch?v=PlDe...

04.05.2025 23:13 —


7. Values in the Wild: Discovering and Analyzing Values in Real-World Language Model Interactions, @anthropicai.bsky.social

Maps AI value expressions across real-world interactions to inform grounded AI value alignment

arxiv.org/abs/2504.15236

30.04.2025 23:44 —


6. Roll the dice & look before you leap: Going beyond the creative limits of next-token prediction

Highlights limitations of next-token prediction and proposes noise-injection strategies for open-ended creativity

arxiv.org/abs/2504.15266

GitHub: github.com/chenwu98/alg...

30.04.2025 23:44 —


5. The Sparse Frontier: Sparse Attention Trade-offs in Transformer LLMs

Investigates sparse attention trade-offs and proposes scaling laws for long-context LLMs

arxiv.org/abs/2504.17768

30.04.2025 23:44 —


4. Efficient Pretraining Length Scaling

Presents PHD-Transformer to enable efficient long-context pretraining without inflating memory costs

arxiv.org/abs/2504.14992

30.04.2025 23:44 —


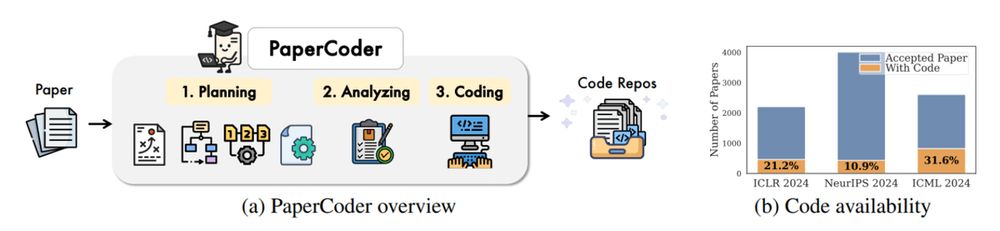

3. Paper2Code

Automates end-to-end ML paper-to-code translation with a multi-agent framework

arxiv.org/abs/2504.17192

Code: github.com/going-doer/P...

30.04.2025 23:44 —


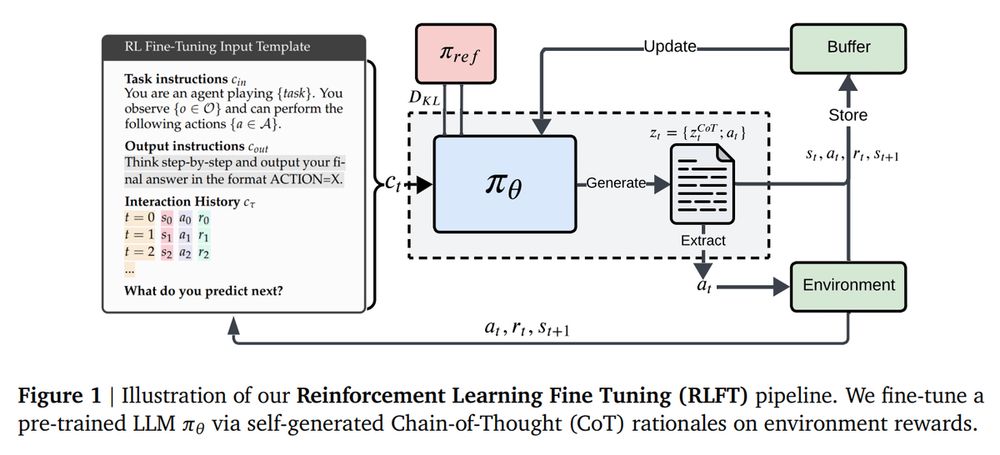

2. LLMs are Greedy Agents: Effects of RL Fine-tuning on Decision-Making Abilities

Analyzes how RL fine-tuning improves exploration and decision-making abilities of LLMs

arxiv.org/abs/2504.16078

30.04.2025 23:44 —


1. TTRL: Test-Time Reinforcement Learning

Introduces a method for self-evolving LLMs at test-time using reward signals without labeled data

arxiv.org/abs/2504.16084

GitHub: github.com/PRIME-RL/TTRL

30.04.2025 23:44 —


9. Skywork R1V2: Multimodal Hybrid Reinforcement Learning for Reasoning

Advances multimodal reasoning with a hybrid RL paradigm balancing reward guidance and rule-based strategies.

arxiv.org/abs/2504.16656

Model: huggingface.co/Skywork/Skyw...

29.04.2025 11:12 —


8. Process Reward Models That Think

Introduces ThinkPRM, a generative verifier that scales step-wise reward modeling with minimal supervision.

arxiv.org/abs/2504.16828

GitHub: github.com/mukhal/think...

29.04.2025 11:12 —


7. Surya OCR

Releases an open-source, high-speed OCR model supporting 90+ languages, with LaTeX formatting and structured output for real-world document processing.

x.com/VikParuchuri...

29.04.2025 11:12 —


Paper page - Trillion 7B Technical Report

6. Trillion-7B

Develops a highly token-efficient multilingual LLM using specialized cross-lingual techniques for Korean, Japanese, and more.

huggingface.co/papers/2504....

Model: huggingface.co/trillionlabs...

29.04.2025 11:12 —


5. Eagle 2.5 by NVIDIA

Expands vision-language models to handle long-context video and image comprehension with specialized training tricks and efficient scaling.

arxiv.org/abs/2504.15271

Project page: nvlabs.github.io/EAGLE/

29.04.2025 11:12 —


4. AIMO-2 winning solution by NVIDIA

Builds state-of-the-art mathematical reasoning models with the OpenMathReasoning dataset.

arxiv.org/abs/2504.16891

29.04.2025 11:12 —


3. Kimi-Audio

Builds a universal audio foundation model for understanding, generating, and conversing in audio and text, achieving SOTA across diverse benchmarks.

arxiv.org/abs/2504.18425

Code, model checkpoints, and evaluation toolkit: github.com/MoonshotAI/K...

29.04.2025 11:12 —


2. Tina: Tiny Reasoning Models via LoRA

Achieves strong reasoning capabilities with tiny models by applying cost-efficient low-rank adaptation and reinforcement learning.

arxiv.org/abs/2504.15777

29.04.2025 11:12 —


🦸🏻#17: What is A2A and why is it – still! – underappreciated?

Everything you need to know about Google’s Agent2Agent protocol (and if Google builds the world’s first agent directory, A2A will be the language it speaks).

If you want to learn how A2A works, why we need it, what it unlocks, and whether it might rival MCP, our new article is a good starting guide. It's also useful for those who've already explored A2A: www.turingpost.com/p/a2a

26.04.2025 20:42 —
