On November 1st we're running it again! Come join over 300 students in learning about what makes distributed training tick, common bottlenecks, and more. maven.com/walk-with-c...

05.10.2025 12:02 — 👍 0 🔁 0 💬 0 📌 0

On November 1st we're running it again! Come join over 300 students in learning about what makes distributed training tick, common bottlenecks, and more. maven.com/walk-with-c...

05.10.2025 12:02 — 👍 0 🔁 0 💬 0 📌 0Dispatching works by keeping the dataset on one process and then sending the batches to the other workers throughout training. This incurs a memory cost since this is a GPU -> GPU transfer, however many find this to be more appealing than other alternatives.

05.10.2025 12:02 — 👍 0 🔁 0 💬 1 📌 0

DataLoader Dispatching

When constrained by a variety of reasons to where you can't include multiple copies (or mmaps) of datasets in memory, be it too many concurrent streams, low resource availability, or a slow CPU, dispatching is here to help.

Also get access to the prior cohort's guest speakers too! (And get in for 35% off) maven.com/walk-with-c...

03.10.2025 11:24 — 👍 0 🔁 0 💬 0 📌 0On November 1st starts the second cohort of Scratch to Scale! Come learn the major tricks and algorithms used when single-GPU training hits failure points.

03.10.2025 11:24 — 👍 0 🔁 0 💬 1 📌 0This ensures consistent randomness and avoids redundant computation, making it well-suited for more complex sampling strategies.

03.10.2025 11:24 — 👍 0 🔁 0 💬 1 📌 0Batch sampler sharding is especially efficient when using non-trivial sampling methods (weighted, balanced, temperature-based, etc.), since the sampling logic runs once globally rather than being duplicated per worker.

03.10.2025 11:24 — 👍 0 🔁 0 💬 1 📌 0Unlike iterable dataset sharding, where each worker directly pulls raw data in its __iter__, batch sampler sharding first generates indices that specify which items to fetch. These indices are grouped into batches, which are then collated into tensors and moved to CUDA for training.

03.10.2025 11:24 — 👍 0 🔁 0 💬 1 📌 0

Batch Sampler Sharding

03.10.2025 11:24 — 👍 3 🔁 0 💬 1 📌 0

On November 1st starts the second cohort of Scratch to Scale! Come learn the major tricks and algorithms used when single-GPU training hits failure points. Also get access to the prior cohort's guest speakers too! (And get in for 35% off) maven.com/walk-with-c...

02.10.2025 11:05 — 👍 0 🔁 0 💬 0 📌 0The main benefit to doing things this way is it's extremely fast (there are no communications involved since each process can figure out its own slice of the data), however it's more RAM heavy since the entire dataset needs to live in memory.

02.10.2025 11:05 — 👍 0 🔁 0 💬 1 📌 0Afterwards, all that's needed is to move the data to the device and you're ready to perform distributed data parallelism!

02.10.2025 11:05 — 👍 0 🔁 0 💬 1 📌 0For this to work, every process contains the entire dataset (so uses more RAM, but RAM is cheap). During the Dataset's __iter__() call each process will grab a pre-determined "chunk" of a global batch of items from the dataset in the __getitem__().

02.10.2025 11:05 — 👍 0 🔁 0 💬 1 📌 0

Dataset Sharding

When performing distributed data parallelism, we split the dataset every batch so every device sees a different chunk of the data. There are different methods for doing so. One example is sharding at the *dataset* level, shown here.

Reach out to me if you need financial assistance/cost is too much. Happy to try and work within budgets (or company education stipends): scratchtoscale@gmail.com

22.06.2025 23:19 — 👍 2 🔁 0 💬 0 📌 0

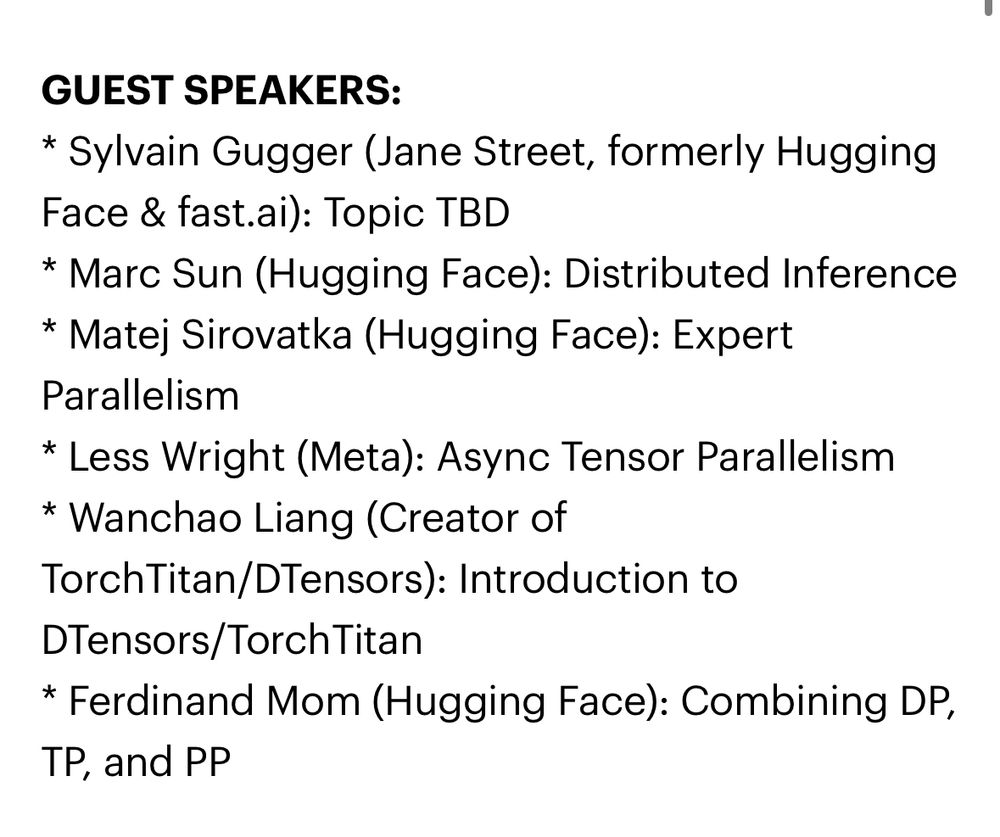

Hi all 👋

Back very briefly to mention I’m working on a new course, and there’s a star-studded set of guest speakers 🎉

From Scratch to Scale: Distributed Training (from the ground up).

From now until I’m done writing the course material, it’s 25% off :)

maven.com/walk-with-co...

AAAHHHHHHHHH BE NICE TO OPEN SOURCE MAINTAINERS OH MY GOD. SOME OF YOU ARE SO RUDE, WHO RAISED YOU

10.03.2025 17:32 — 👍 557 🔁 86 💬 15 📌 4

JK STRONG is still awesome. Solution (which they were already aware of this) was literally logout -> login!

11/10 customer support

I specifically mean my gym tracking app, STRONG. I’ve used it for 5 years, have a lifetime membership, and they decided that there needs to be internet access to check for premium to add warmup sets. Which is a bit insane. (It is a premium feature, but there’s better ways to do this)

11.03.2025 11:49 — 👍 1 🔁 0 💬 1 📌 0Enshittification has come to my gym app. Lord help us all 😭

11.03.2025 11:28 — 👍 2 🔁 0 💬 1 📌 1And I’ll try wearing it not sleeping only :)

08.03.2025 18:05 — 👍 1 🔁 0 💬 0 📌 0(As a result I no longer wear mine and track my sleep using other methods that don’t have that psychological affect)

08.03.2025 17:37 — 👍 2 🔁 0 💬 1 📌 0As someone who did Oura for 2 years, I can say it got to a point where my sleep depended on the Oura score, not the Oura score providing insight. So be weary of that long-term otherwise full agree 💯

08.03.2025 17:37 — 👍 2 🔁 0 💬 1 📌 0 02.03.2025 12:22 —

👍 100

🔁 16

💬 0

📌 0

02.03.2025 12:22 —

👍 100

🔁 16

💬 0

📌 0

03.03.2025 03:06 —

👍 6

🔁 0

💬 0

📌 0

03.03.2025 03:06 —

👍 6

🔁 0

💬 0

📌 0

👀

03.03.2025 03:01 — 👍 1 🔁 0 💬 0 📌 0Thanks Chris! 🫡

02.03.2025 16:58 — 👍 0 🔁 0 💬 0 📌 0

Recently did my first lifting competition, overall quite happy where things ended up (bar bench) and was a ton of fun. Might wait a year and give it another go, but I did get first in the weight class!

145kg squat

180kg deadlift

90kg bench