Self-supervised perception for tactile skin covered dexterous hands

We present Sparsh-skin, a pre-trained encoder for magnetic skin sensors distributed across the fingertips, phalanges, and palm of a dexterous robot hand. Magnetic tactile skins offer a flexible form f...

This was an amazing collaboration at @aiatmeta.bsky.social & @cmurobotics.bsky.social: @carohiguera.bsky.social, @mukadammh, @francois_hogan and many others

Code coming soon! Check out our paper: arxiv.org/abs/2505.11420 & website: akashsharma02.github.io/sparsh-skin-... for more details

6/6

27.05.2025 14:48 —

👍 0

🔁 0

💬 0

📌 0

And we also show improvement in many tactile perception tasks such as force estimation, pose estimation and full-hand joystick state estimation.

5/6

27.05.2025 14:47 —

👍 1

🔁 0

💬 1

📌 0

With this we see a 75% improvement in real-world tactile plug insertion over end-to-end using vision and tactile:

4/6

27.05.2025 14:46 —

👍 0

🔁 0

💬 1

📌 0

We pretrain Sparsh-skin with 4 hours of unlabeled data via self-distillation, and make several changes to get highly performant reps:

Decorrelate signals by tokenizing a 1 s window of tactile data.

Condition the encoder on the robot hand configuration by feeding sensor positions as input.
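
A rough sketch of what these two changes could look like, not the released Sparsh-skin code. It assumes a 100 Hz sample rate, 3-axis readings per sensor, and 3D sensor positions; the function name `tokenize_window` and all of its parameters are hypothetical. Each sensor's last second of readings is flattened into one token, and that sensor's position is appended so an encoder can see the hand configuration.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[float]]  # one time step: n_sensors x 3 readings


def tokenize_window(
    frames: Sequence[Frame],
    positions: Sequence[Sequence[float]],
    rate_hz: int = 100,      # assumed sample rate, not from the thread
    window_s: float = 1.0,   # the 1 s window mentioned in the thread
) -> List[List[float]]:
    """Build one token per sensor from the most recent window.

    Each token is the sensor's readings over the window, flattened in
    time order, followed by that sensor's position.
    """
    win = int(round(rate_hz * window_s))
    if len(frames) < win:
        raise ValueError("need at least one full window of samples")

    recent = frames[-win:]  # keep only the last `win` time steps
    tokens: List[List[float]] = []
    for sensor_idx, pos in enumerate(positions):
        # Flatten (time, axis) readings for this sensor into one vector.
        feats = [v for frame in recent for v in frame[sensor_idx]]
        # Append the sensor position as conditioning input.
        tokens.append(feats + list(pos))
    return tokens
```

With 100 samples of 3-axis data and a 3D position, every token has 303 values; a real pipeline would then project these tokens before passing them to the encoder.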

3/6

27.05.2025 14:45 —

👍 0

🔁 0

💬 1

📌 0

Sparsh-skin is an approach to pretrain encoders for magnetic skin sensors on a dexterous robot hand.

It improves tactile tasks by over 56% compared to end-to-end methods and by over 41% compared to prior work.

It is trained via self-supervision for the Xela sensor, so no labeled data needed!

2/6

27.05.2025 14:44 —

👍 1

🔁 0

💬 1

📌 0

Robots need touch for human-like hands to reach the goal of general manipulation. However, approaches today don’t use tactile sensing or use specific architectures per tactile task.

Can 1 model improve many tactile tasks?

🌟Introducing Sparsh-skin: tinyurl.com/y935wz5c

1/6

27.05.2025 14:44 —

👍 4

🔁 0

💬 1

📌 0

I might sound salty, but I never got how 'outstanding reviewers' are chosen. Til then, part of the 'mediocre reviewers' gang it is 🤣

12.05.2025 23:15 —

👍 2

🔁 0

💬 1

📌 0

Sparsh | Self-supervised touch representations for vision-based tactile sensing


Check out my work to know more:

1. Sparsh: tactile reps for vision-based sensors sparsh-ssl.github.io

2. [Releasing soon] Sparsh-skin: Tactile reps for full hand magnetic skins

3. [Coming soon] Reps for multimodal touch fusing tactile-images, audio, motion and pressure

11.05.2025 13:31 —

👍 0

🔁 0

💬 0

📌 0

We took a matter-of-fact approach for a robotics conference, and it backfired too.

11.05.2025 12:53 —

👍 0

🔁 0

💬 0

📌 0

I asked "on the other platform" what were the most important improvements to the original 2017 transformer.

That was quite popular and here is a synthesis of the responses:

28.04.2025 06:47 —

👍 204

🔁 43

💬 4

📌 3

⏰ Heads up! The deadline for two #CVPR2025 Autonomous Grand Challenge tracks is May 10th, 2025:

1️⃣ NAVSIM v2 Challenge: huggingface.co/spaces/AGC20...

2️⃣ World Model Challenge by 1X: huggingface.co/spaces/1x-te...

28.04.2025 09:41 —

👍 9

🔁 6

💬 1

📌 0

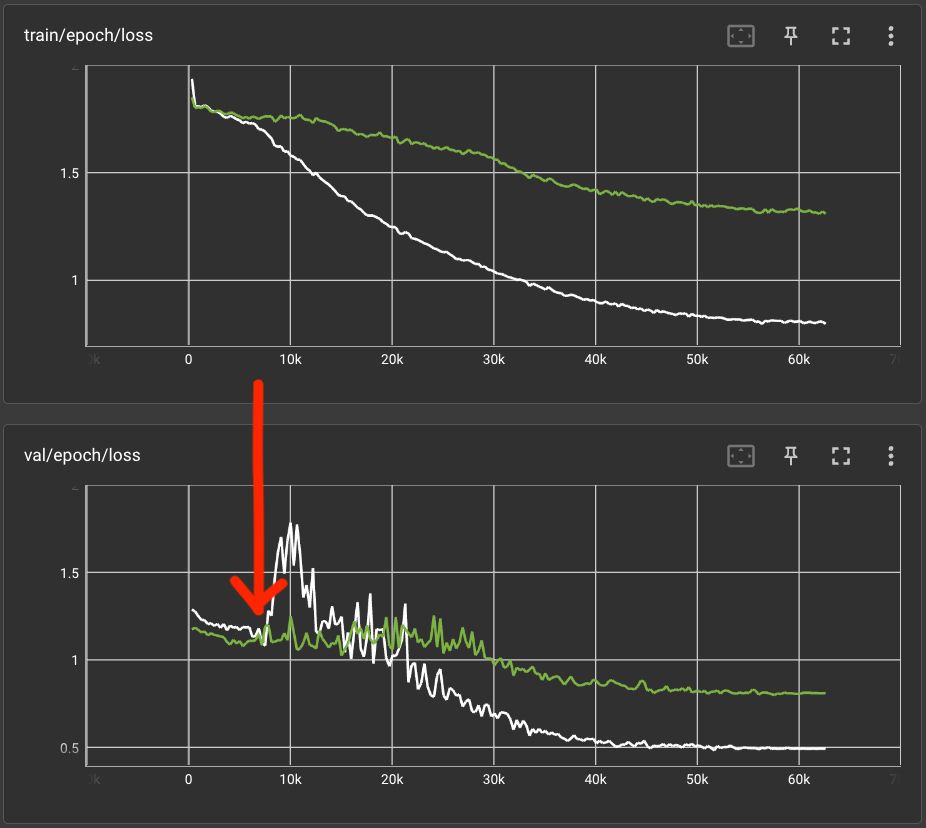

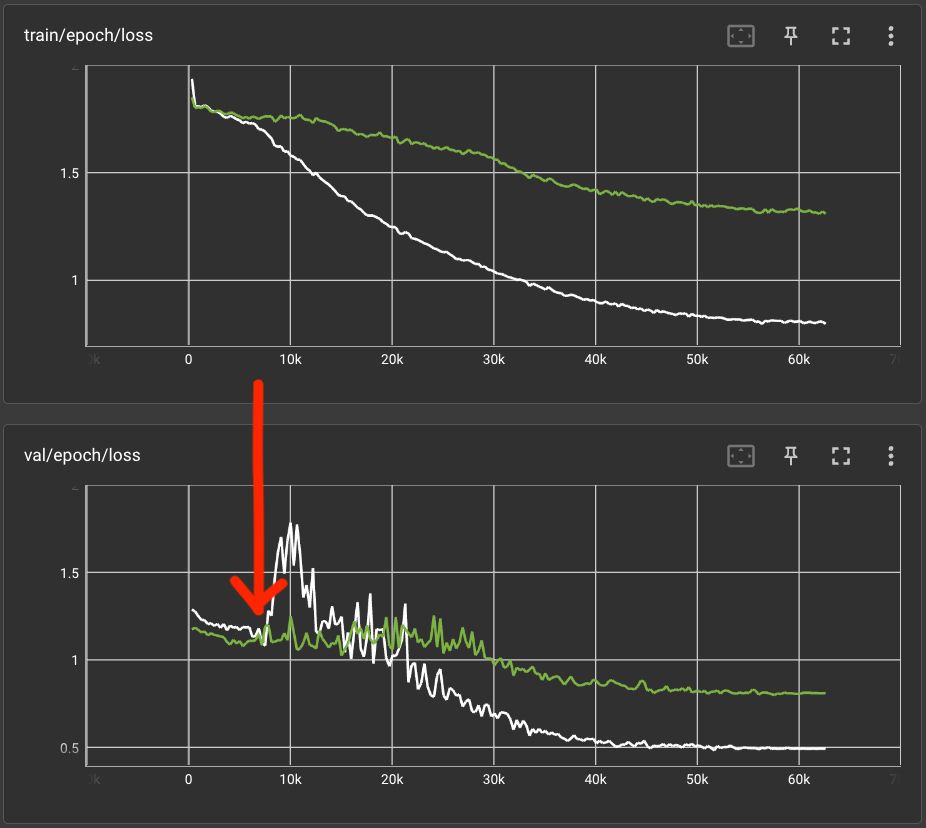

I love situations like this: in the pre-deep era (and following classical learning theory), people would have stopped training the white model at the red arrow, as the validation error increases. But, no, the model first seems to learn unwanted shortcuts (overfitting wildly) but finds a way out.

27.03.2025 15:30 —

👍 52

🔁 9

💬 6

📌 0

First page of the Instagram post

A new #CosmicDistanceLadder post on why lunar and solar eclipses tend to come in pairs (for instance, the solar eclipse next week is paired with the lunar eclipse from last week). www.instagram.com/p/DHkS3EcA40L

24.03.2025 04:42 —

👍 30

🔁 5

💬 2

📌 0

A photo of Lerrel looking happy.

What would you love to know about #robot learning and decision making?

Later this season, I'll be chatting to Prof. Lerrel Pinto (@lerrelpinto.com) from NYU about using machine learning to train robots to adapt to new environments.

Send me your questions for Lerrel: robottalk.org/ask-a-question/

18.03.2025 10:11 —

👍 13

🔁 7

💬 0

📌 1

We are looking for a student researcher to work on video understanding plus 3D, in Google DeepMind London. DM/Email me or pass it to someone if you feel it may be a good fit!

05.03.2025 20:43 —

👍 20

🔁 6

💬 0

📌 0

The first measles death in the US in a decade -- the tragic, preventable death of a child whose parents chose not to protect them with vaccination -- should spark an immediate nation-wide campaign to ensure all children are protected against preventable diseases. Anything less is unconscionable.

26.02.2025 19:36 —

👍 39026

🔁 9469

💬 1135

📌 450

Congrats Eric, really cool stuff!

26.02.2025 02:07 —

👍 1

🔁 0

💬 0

📌 0

Gearing up for our workshop on 4D Vision at @CVPR this June! Check out our lineup of speakers and submit your work by Mar 28. Spread the word!

12.02.2025 13:35 —

👍 5

🔁 1

💬 0

📌 0

Gumbel distribution - Wikipedia

At first I thought Poisson or log-normal, but after a bit of searching, maybe Gumbel distribution: en.m.wikipedia.org/wiki/Gumbel_...

09.02.2025 14:38 —

👍 2

🔁 0

💬 1

📌 0

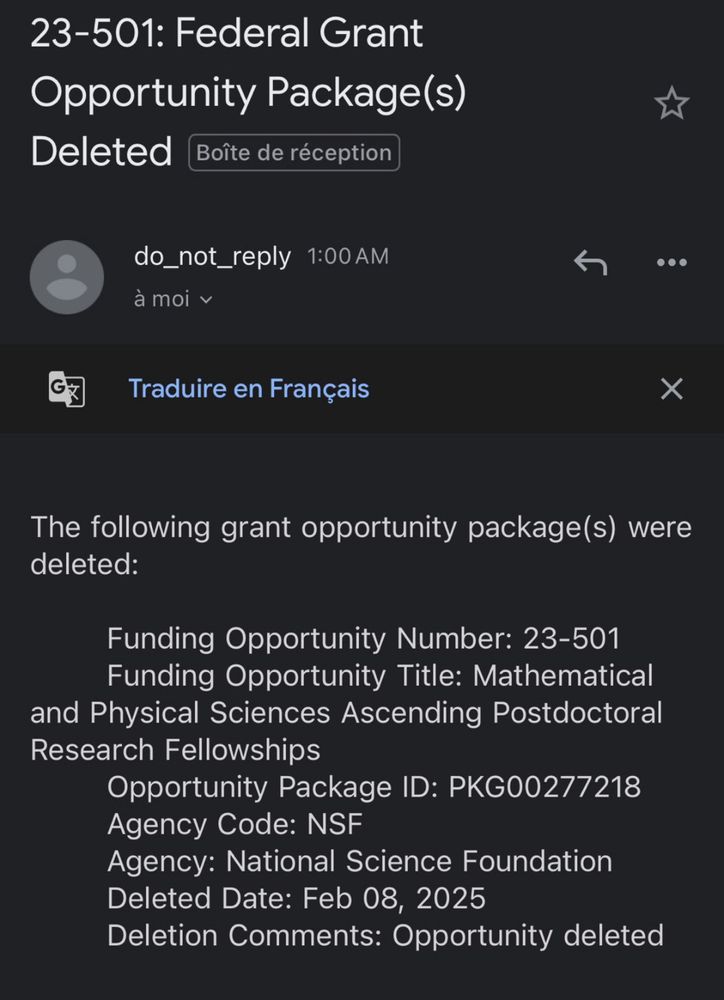

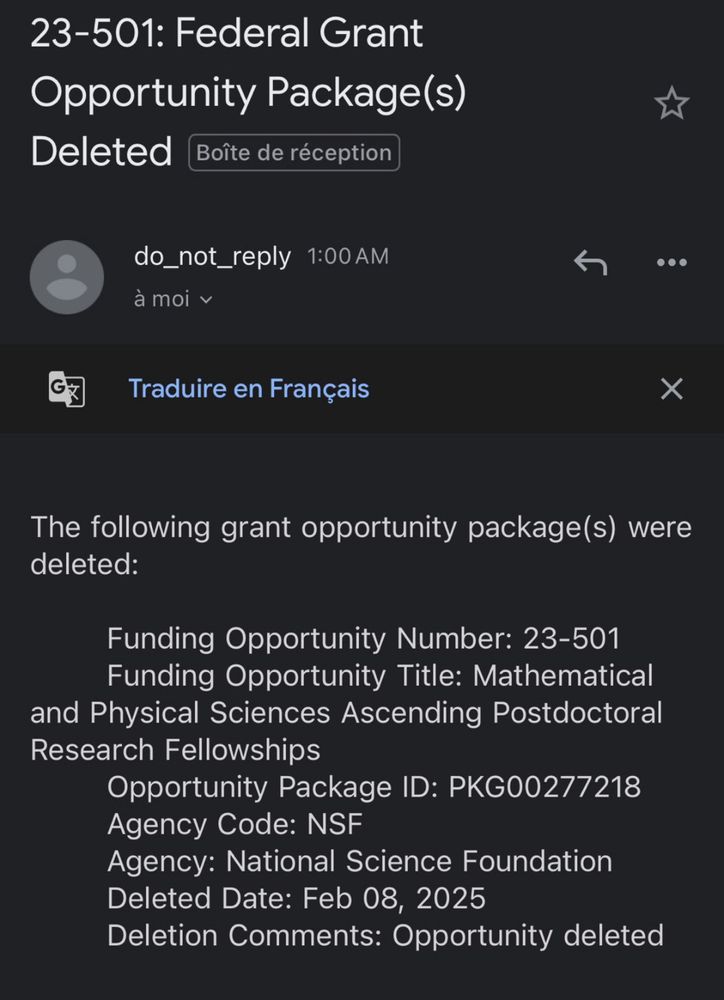

Last night I found out that the NSF math postdoctoral fellowship I applied for is being deleted because it does not comply with Trump’s executive orders on DEI in the federal government. I’m going to answer some FAQs and share some thoughts about this ordeal in this thread 1/n

08.02.2025 18:42 —

👍 1329

🔁 493

💬 50

📌 53

Now, see how life changes when you swap control and caps lock! 😆

08.02.2025 04:56 —

👍 2

🔁 0

💬 0

📌 0

DexGen

Seeing some of the early results from DexterityGen was definitely a wow moment for me!

It doesn't take a lot to realize all the new opportunities a strong teleop system like this enables! 🚀

X thread: x.com/zhaohengyin/...

Link: zhaohengyin.github.io/dexteritygen/

08.02.2025 03:02 —

👍 2

🔁 1

💬 0

📌 0

Not one VC would ever fund a startup to do the kind of hardcore optimization work that DeepSeek did.

Every VC firm should be asking themselves why.

28.01.2025 05:00 —

👍 105

🔁 11

💬 5

📌 2

Are those gulab jamuns? 👀👀

25.01.2025 03:21 —

👍 3

🔁 0

💬 1

📌 0

just warms my heart to see how they're citing my stuff --

"some people have done some thing [7]"

"most work is inadequate [8]"

"unlike prior work [7,8,9], we don't suck"

7. Bigham

8. Bigham

9. Bigham

them increasing my h-index is the joke on them! 😂

17.01.2025 20:32 —

👍 21

🔁 3

💬 1

📌 0

A new dawn, a golden era of boot licking before us. Unheralded, unimaginable forms of boot licking to be discovered

07.01.2025 14:20 —

👍 27

🔁 3

💬 0

📌 0

It’s kinda wild how much of ML is tradition. Not always in a bad way, just that there’s so damn much that you’re forced to rely on others’ recommendations for models, hyperparameters, training sets, loss metrics, architectures, and quirky practices.

18.12.2024 17:27 —

👍 14

🔁 1

💬 4

📌 0