Join us on Wednesday, April 23 at 12 PM ET for Metagov Seminar: A rule-based approach to mitigating AI-risk (Evan Miyazono). For more details and a link to join, register here: lu.ma/m0lc3280

22.04.2025 10:09 — 👍 2 🔁 1 💬 0 📌 0

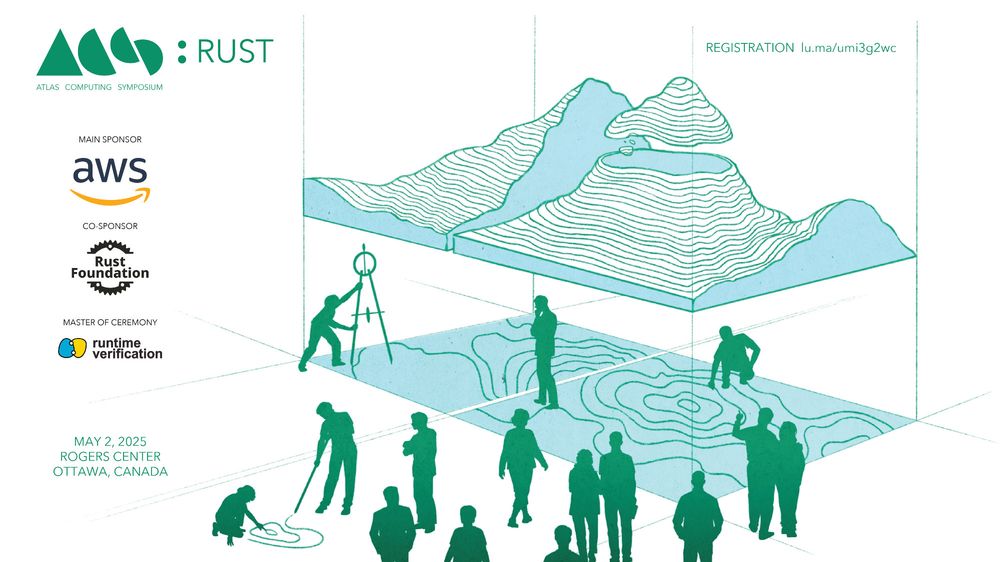

Attending #ICSE2025 in Ottawa? Join us at the Rust Symposium (Friday, May 2, 9am-5pm) for a full day of cutting-edge tools and research. Featuring speakers from AWS, ETH Zurich, Formal Land, Inria, Microsoft, and Runtime Verification. #RustLang

Registration here: lu.ma/umi3g2wc

(may I never stop pointing technologists to the Federalist Papers)

27.02.2025 04:56 — 👍 1 🔁 0 💬 0 📌 0

I was recently asked "has there ever been a technology (other than weapons) where there was as much debate about whether it would be good for society as there is for AI?"

I'm unusually pleased with my answer: "the creation of the US government"

totally agree! Other books I consider pleasant surprises in this category are

Foundations of Decision Analysis by Howard and Abbas,

quantum.country, and

arxiv.org/abs/1803.05316 for Category Theory

So I went longer between finishing the first and starting the second than I did between starting the second and finishing the fourth. I'd definitely recommend starting the second if you haven't, to see if the momentum catches you.

27.12.2024 20:09 — 👍 1 🔁 0 💬 1 📌 0

Also reminds me of the Utopian Oath from @adapalmer.bsky.social's Terra Ignota series (what such a monastic sacrifice might look like today). I don't know if you've read the series, but @benreinhardt.bsky.social, @divya.bsky.social and I all love it.

27.12.2024 19:45 — 👍 5 🔁 0 💬 1 📌 0

reminds me of

en.wikipedia.org/wiki/Handica... (non-choice sacrifice of resources)

en.wikipedia.org/wiki/Altruis... (sacrificing for the good of the family/tribe)

but both kinda advance fitness of shared genes, due to natural selection + selection bias (is "natural selection bias" a term?)

I want to be able to mash on my keyboard to select the next most likely autocomplete/autocorrect option. I think I'd tolerate much more aggressive autocorrect if I had this affordance.

The voice command "ugh" would also be sufficient.

These systems are inhuman intelligences, and will continue dramatically outperforming humans in more domains and (for now) underperforming in others.

If LLMs were growing more capable like a human child, I'd be a lot less worried.

It's easy to find opinions+evidence that "o3 is a superhuman problem solver." Also easy to find takes+evidence that it's way dumber than a child.

The surprising takes are the ones that assume only one of these is true.

participant reviews:

"My favorite part was hearing someone else's rec - you learn something about the person giving the gift, the person receiving it, and yourself"

"it's like watching someone open someone else's gift thinking 'yeah, nice, I'm getting myself that'"

Step 3: The person who posed the question reveals themself and offers a recommendation.

Our recs included South Asian snacks, a Ted Chiang short story, some Voyager eps, a bunch of great books, and a few movies. Plus it felt like a nice hangout and close-out.

Step 2: randomly order participants, who choose which elicitation question they want to answer in as much detail as they want.

(e.g. the person who picks "what do you like in a story?" could say "fantasy that turns out to be scifi" or "nonfiction that's stranger than fiction")

example preference elicitation questions:

What are your top 3 "little treats" and favorite flavor profiles?

What's one of the best holiday gifts you ever received, and why?

What do you like in a story?

How to do it?

Step 1: everyone submits a "preference elicitation question" directly to a coordinator to anonymize (or you could make an anonymous form).

So, think of a category of rec (e.g. book, film, snack food, etc.) and ask a question that will inform that rec.

Why is this a problem? It feels dumb + carbon-intensive to ship white-elephant gifts to each other. I've tried sending books, but sorting out everyone's preference between e-book, audiobook, physical book, etc. is kind of a hassle.

20.12.2024 22:46 — 👍 0 🔁 0 💬 1 📌 0

I have a solution I love for the remote-first company holiday gift exchange. A recommendation exchange! Everyone gives and gets a personalized recommendation, no shipping involved - here's how we did it:

20.12.2024 22:46 — 👍 1 🔁 0 💬 1 📌 0
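The exchange in this thread (anonymous questions, a random answering order, then each question's author revealing themself with a rec) can be sketched as a small script. This is a hypothetical illustration, not a tool we actually used: `run_exchange` and its rules are assumptions. Random choice stands in for each person picking the question they like best, and the swap on the last turn is one way to make sure nobody ends up answering their own question.

```python
import random


def run_exchange(questions, seed=None):
    """Hypothetical sketch of the recommendation exchange.

    questions maps each participant to the preference elicitation question
    they submitted (Step 1); only the coordinator sees who wrote what.
    Returns (answerer, question, recommender) tuples in answering order.
    """
    if len(questions) < 2:
        raise ValueError("need at least two participants")
    rng = random.Random(seed)
    pool = list(questions.items())  # (author, question); authors stay hidden
    order = list(questions)
    rng.shuffle(order)  # Step 2: random answering order

    rounds = []
    for answerer in order:
        # Random choice stands in for picking a favorite question;
        # nobody may answer their own.
        options = [item for item in pool if item[0] != answerer]
        if options:
            author, question = rng.choice(options)
            pool.remove((author, question))
            rounds.append((answerer, question, author))
        else:
            # Only the answerer's own question is left (can only happen on
            # the last turn): trade questions with the previous answerer.
            own_author, own_question = pool.pop()
            prev_answerer, prev_question, prev_author = rounds[-1]
            rounds[-1] = (prev_answerer, own_question, own_author)
            rounds.append((answerer, prev_question, prev_author))
    # Step 3: each question's author reveals themself and recommends
    # something to the person who answered it.
    return rounds


example = {
    "Ana": "What are your top 3 little treats?",
    "Ben": "What's one of the best holiday gifts you ever received, and why?",
    "Cy": "What do you like in a story?",
}
for answerer, question, recommender in run_exchange(example, seed=1):
    print(f"{answerer} answers {question!r}; {recommender} gives the rec")
```

Because every question is used exactly once, everyone gives one rec and gets one, which matches the "everyone gives and gets" goal.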

"a comprehensive and incomprehensible deep dive"

*chef kiss*

(stolen from youtu.be/qwiLnLsuqx8?...)

I'm confused - I thought they all had wheels at this size. www.cryofab.com/wp-content/u...

at least the ones I used did...

The Goodhart-Hanlon razor:

Any sufficiently large group or entity appearing to act maliciously likely includes, somewhere, at least one unaware and/or unwilling person nonetheless making things worse by just following orders and maximizing some metric.

I knew FutureHouse generated a mind-numbing number of Wikipedia pages, but I was very surprised to learn that human peer review found 2x the errors in human-generated articles compared to the AI-generated ones

07.12.2024 23:27 — 👍 0 🔁 0 💬 0 📌 0

Makes me wonder if there are intuitive ways to quantify this. Especially if you have models of different people for something like a recommendation engine.

07.12.2024 06:28 — 👍 0 🔁 0 💬 0 📌 0

I love that Cosmos Ventures includes "supporting individual preferences and abilities" in its definition of human flourishing. "Would a different person make the same choice?" seems to be a really interesting indicator of freedom/agency.

07.12.2024 06:28 — 👍 4 🔁 0 💬 1 📌 0

"generative adversarial proving"

One agent tries to prove the theorem; one tries to find counterexamples

I was surprised to learn that this isn't apparent, and that it might not be common?
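A toy sketch of the two roles, under heavy assumptions of my own: the "theorem" is a conjectured upper bound on a function over a finite domain, so proof by exhaustion is a valid proof, and the prover generates new conjectures from the counterexamples it is handed. The loop resembles counterexample-guided refinement; `refuter`, `prover`, and `adversarial_bound` are hypothetical names, not an existing system.

```python
import random


def refuter(claim, domain, rng, samples):
    """Adversary: cheap random search for a counterexample to `claim`."""
    for x in rng.sample(domain, min(samples, len(domain))):
        if not claim(x):
            return x
    return None


def prover(claim, domain):
    """Prover: proof by exhaustion, valid only because `domain` is finite.
    Returns None if the claim holds everywhere, else a failing input."""
    for x in domain:
        if not claim(x):
            return x
    return None


def adversarial_bound(f, domain, guess=0, samples=20, seed=0):
    """Find a proven upper bound on f over a finite domain: the refuter
    attacks each conjecture before the prover tries to prove it."""
    rng = random.Random(seed)
    bound = guess
    while True:
        def claim(x):
            return f(x) <= bound  # current conjecture: f never exceeds bound

        cex = refuter(claim, domain, rng, samples)
        if cex is None:
            cex = prover(claim, domain)
            if cex is None:
                return bound  # no counterexample exists: conjecture proven
        bound = f(cex)  # weaken the conjecture just enough to cover cex


print(adversarial_bound(lambda n: (7 * n) % 13, list(range(100))))  # prints 12
```

Each counterexample strictly raises the bound, so on a finite domain the loop always terminates; if the initial guess is already above the maximum, it comes back proven but loose.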

05.12.2024 04:58 — 👍 0 🔁 0 💬 0 📌 0

I realized I support people because I believe in them - I want to amplify/empower them as an embodiment of their preferences and values. Not because I like the values themselves.

05.12.2024 04:58 — 👍 0 🔁 0 💬 1 📌 0

I think I'll just start posting screenshots of tweets I like that aren't on bsky

05.12.2024 04:54 — 👍 0 🔁 0 💬 0 📌 0

05.12.2024 04:54 — 👍 0 🔁 0 💬 1 📌 0

05.12.2024 04:54 — 👍 0 🔁 0 💬 1 📌 0

Totally agree. Flashy LLM applications are NLP, but the interesting directions seem ~ NP-complete problems.

Interesting continuation: in this framing, getting enough information from your NP solution could efficiently validate the AI output, which ends up looking ~ like property-based testing.
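One way to picture that, assuming Boolean satisfiability as the NP problem (my choice of example): finding a satisfying assignment may be hard, but checking a claimed one is cheap, so an untrusted system's answers can be validated like property-based testing, by checking a property of the output across many generated inputs. `untrusted_solver` is a hypothetical stand-in for the AI system (a majority-polarity heuristic that always claims success); all names here are illustrative.

```python
import random

# A CNF formula is a list of clauses; each clause is a list of DIMACS-style
# literals (3 means x3, -3 means NOT x3).


def verify(cnf, assignment):
    """Cheap certificate check: every clause contains at least one true
    literal. Runs in time linear in the size of the formula."""
    return all(any(assignment[abs(lit)] == (lit > 0) for lit in clause)
               for clause in cnf)


def untrusted_solver(cnf, n_vars):
    """Hypothetical stand-in for an AI system: sets each variable to its more
    common polarity and claims success whether or not that is right."""
    score = {v: 0 for v in range(1, n_vars + 1)}
    for clause in cnf:
        for lit in clause:
            score[abs(lit)] += 1 if lit > 0 else -1
    return {v: s >= 0 for v, s in score.items()}


def random_cnf(rng, n_vars, n_clauses, width=3):
    """Random formula: distinct variables per clause, random signs."""
    return [[v if rng.random() < 0.5 else -v
             for v in rng.sample(range(1, n_vars + 1), width)]
            for _ in range(n_clauses)]


def property_test(trials=500, n_vars=8, n_clauses=30, seed=0):
    """Generate many instances; accept the solver's output only when it passes
    verify(), and count how often its claims get caught."""
    rng = random.Random(seed)
    accepted = rejected = 0
    for _ in range(trials):
        cnf = random_cnf(rng, n_vars, n_clauses)
        if verify(cnf, untrusted_solver(cnf, n_vars)):
            accepted += 1
        else:
            rejected += 1
    return accepted, rejected


print(property_test())
```

One caveat: only "yes" answers come with a cheap certificate. A claim that a formula is unsatisfiable can't be checked this way.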