Takeaway: reasoning LLMs are getting better and better on math and code—deterministic reasoning tasks. But we should also evaluate them on open-ended, inherently uncertain everyday reasoning! (9/10)

04.03.2026 16:13 — 👍 8 🔁 2 💬 1 📌 0

🚨New Paper!🚨 How do reasoning LLMs handle inferences that have no deterministic answer? We find that they diverge from humans in some significant ways, and fail to reflect human uncertainty… 🧵(1/10)

04.03.2026 16:13 — 👍 52 🔁 18 💬 3 📌 1

Beyond our controlled setup, we also show that LatentLens works much better than baselines on the off-the-shelf Qwen2-VL-7B-Instruct.

11.02.2026 14:12 — 👍 3 🔁 1 💬 1 📌 0

Building a VLM can be surprisingly simple: you keep both the LLM and the vision encoder frozen, and you only train a small MLP that projects the visual features into the LLM embedding space, where they are fed in as prefix tokens. That’s it 😮

But how and why does that work? How do visual tokens relate to language, i.e. do they have interpretable nearest neighbors (NNs)?
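A minimal NumPy sketch of the frozen-encoder + trainable-projector setup described in the post. It assumes a two-layer GELU MLP as the projector and uses random stand-in weights for the frozen vision encoder and the LLM embedding table. All sizes and names (`D_VISION`, `D_LLM`, `project`, `nearest_vocab`, etc.) are hypothetical and are not taken from the paper's code. The `nearest_vocab` helper is only a naive cosine nearest-neighbor lookup against the input embedding table, included to illustrate what "interpretable NNs" means. It is not LatentLens.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
D_VISION = 64    # width of the (frozen) vision encoder output
D_LLM = 128      # width of the (frozen) LLM token embeddings
N_PATCHES = 16   # visual tokens produced per image
PATCH_DIM = 3 * 8 * 8  # flattened 8x8 RGB patch
VOCAB = 1000


def gelu(x):
    # tanh approximation of GELU
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


# Frozen components: random stand-ins for a pretrained vision encoder and LLM embedding table.
frozen = {
    "vision_proj": rng.standard_normal((PATCH_DIM, D_VISION)) * 0.02,
    "token_embedding": rng.standard_normal((VOCAB, D_LLM)) * 0.02,
}

# Trainable component: in this setup, only these parameters would receive gradients.
projector = {
    "W1": rng.standard_normal((D_VISION, D_LLM)) * 0.02,
    "b1": np.zeros(D_LLM),
    "W2": rng.standard_normal((D_LLM, D_LLM)) * 0.02,
    "b2": np.zeros(D_LLM),
}


def encode_image(patches):
    # (N_PATCHES, PATCH_DIM) -> (N_PATCHES, D_VISION), using the frozen stand-in encoder
    return patches @ frozen["vision_proj"]


def project(visual_feats):
    # Two-layer MLP mapping vision features into the LLM embedding space.
    h = gelu(visual_feats @ projector["W1"] + projector["b1"])
    return h @ projector["W2"] + projector["b2"]


def build_llm_input(patches, token_ids):
    # Projected visual tokens are prepended as a prefix to the text token embeddings.
    visual_tokens = project(encode_image(patches))
    text_tokens = frozen["token_embedding"][token_ids]
    return np.concatenate([visual_tokens, text_tokens], axis=0)


def nearest_vocab(vectors, k=3):
    # Naive check: top-k vocabulary ids by cosine similarity to the input embedding table.
    emb = frozen["token_embedding"]
    emb_n = emb / np.linalg.norm(emb, axis=1, keepdims=True)
    vec_n = vectors / np.linalg.norm(vectors, axis=1, keepdims=True)
    sims = vec_n @ emb_n.T  # (n_vectors, VOCAB)
    return np.argsort(-sims, axis=1)[:, :k]


patches = rng.standard_normal((N_PATCHES, PATCH_DIM))
token_ids = np.array([5, 42, 7])
seq = build_llm_input(patches, token_ids)
print(seq.shape)  # (19, 128)

n_trainable = sum(p.size for p in projector.values())
print(n_trainable)  # 24832

visual_nn = nearest_vocab(seq[:N_PATCHES], k=3)  # top-3 vocab ids per visual token
```

The training loop is omitted. The design point the sketch tries to show is that the frozen parts never change, so the only learned mapping between vision and language is the small `projector`.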

Paper: arxiv.org/abs/2602.00462

Code: github.com/McGill-NLP/...

Demo: tinyurl.com/ce57mn4v

Couldn't have imagined better collaborators to wrap up the PhD: Shravan Nayak @oscmansan.bsky.social @vaibhavadlakha.bsky.social

@delliott.bsky.social @sivareddyg.bsky.social @mariusmosbach.bsky.social

🚨New paper

Are visual tokens going into an LLM interpretable? 🤔

Existing methods (e.g. logit lens) and assumptions would lead you to think “not much”...

We propose LatentLens and show that most visual tokens are interpretable across *all* layers 💡

Details 🧵

"Not only is the ratio of AI’s resource rapacity to its productive utility indefensibly and irremediably skewed, AI-made material is itself a waste product: flimsy, shoddy, disposable, a single-use plastic of the mind."

>>

enshittification | noun | when a digital platform is made worse for users, in order to increase profits

03.09.2025 20:22 — 👍 29152 🔁 8574 💬 507 📌 649

Windows Notepad, the native simple text editor, now has formatting options and a Copilot button.

Look what they did to Notepad. Shut the fuck up. This is Notepad. You are not welcome here. Oh yeah "Let me use Copilot for Notepad". "I'm going to sign into my account for Notepad". What the fuck are you talking about. It's Notepad.

27.08.2025 01:41 — 👍 17427 🔁 4577 💬 446 📌 495

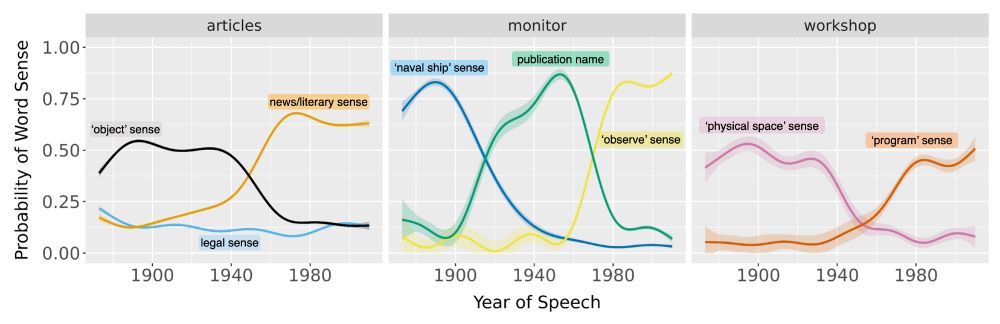

Our new paper in #PNAS (bit.ly/4fcWfma) presents a surprising finding—when words change meaning, older speakers rapidly adopt the new usage; inter-generational differences are often minor.

w/ Michelle Yang, @sivareddyg.bsky.social, @msonderegger.bsky.social and @dallascard.bsky.social 👇 (1/12)

Thrilled to announce our new survey that explores the exciting possibilities and troubling risks of computational persuasion in the era of LLMs 🤖💬

📄Arxiv: arxiv.org/pdf/2505.07775

💻 GitHub: github.com/beyzabozdag/...

Started a new podcast with @tomvergara.bsky.social!

Behind the Research of AI:

We look behind the scenes, beyond the polished papers 🧐🧪

If this sounds fun, check out our first "official" episode with the awesome Gauthier Gidel from @mila-quebec.bsky.social:

open.spotify.com/episode/7oTc...

Zohran Mamdani, a 33-year-old state assemblyman, declared victory in New York City’s Democratic mayoral primary after Andrew Cuomo conceded the race.

“Tonight we made history,” Mamdani said, addressing his supporters. wapo.st/44yMVoI

Mahmoud Khalil is finally home with his beautiful wife and newborn son.

Each one of the 104 days he spent detained was a grave injustice.

From the moment of his detention, @ccrjustice.org + @aclu.org engaged my office as we worked closely to help secure his release. They did remarkable work here.

The facts:

We release MVPBench, with around 55K videos (grouped into *minimal video pairs*) from diverse physical understanding sources.

Arxiv: arxiv.org/abs/2506.09987

Huggingface: huggingface.co/datasets/fac...

GitHub: github.com/facebookrese...

Leaderboard: huggingface.co/spaces/faceb...

Excited to share the results of my recent internship!

We ask 🤔

What subtle shortcuts are VideoLLMs taking on spatio-temporal questions?

And how can we instead curate shortcut-robust examples at large scale?

We release: MVPBench

Details 👇🔬

Congrats!

30.05.2025 18:20 — 👍 2 🔁 0 💬 0 📌 0

Today, I was denied access to see my constituent, Mr. Kilmar Abrego Garcia. If there is nothing to hide, cut the crap. Let his lawyer and me check on him.

26.05.2025 19:32 — 👍 38952 🔁 10739 💬 732 📌 353

Breaking news: The Trump administration revoked Harvard’s ability to enroll foreign students, saying it allowed anti-American agitators.

Existing foreign students must transfer or risk losing their legal status, DHS said.

when in albuquerque…

07.05.2025 06:00 — 👍 4 🔁 0 💬 0 📌 0

We won a Senior Area Chair Award at NAACL!! Many thanks again to my amazing coauthors Gaurav Kamath and @sivareddyg.bsky.social :-)

03.05.2025 15:50 — 👍 13 🔁 2 💬 0 📌 0

Check out Gaurav's video on their #NAACL paper and find @adadtur.bsky.social at the conference 👇

02.05.2025 01:41 — 👍 11 🔁 1 💬 0 📌 0

Great work from labmates on LLMs vs. humans regarding linguistic preferences: you know when a sentence kind of feels off, e.g. "I met at the park the man"? In what ways do LLMs follow these human intuitions?

01.05.2025 15:04 — 👍 7 🔁 3 💬 0 📌 0

Ada is an undergrad and will soon be looking for PhDs. Gaurav is a PhD student looking for intellectually stimulating internships/visiting positions. They did most of the work without much of my help. Highly recommend them. Please reach out to them if you have any positions.

01.05.2025 15:14 — 👍 6 🔁 2 💬 1 📌 0

Incredibly proud of my students @adadtur.bsky.social and Gaurav Kamath for winning a SAC award at #NAACL2025 for their work on assessing how LLMs model constituent shifts.

01.05.2025 15:11 — 👍 17 🔁 5 💬 1 📌 0

Congratulations to Mila members @adadtur.bsky.social, Gaurav Kamath and @sivareddyg.bsky.social for their SAC award at NAACL! Check out Ada's talk in Session I: Oral/Poster 6. Paper: arxiv.org/abs/2502.05670

01.05.2025 14:30 — 👍 13 🔁 7 💬 0 📌 3

I filmed this yesterday on my way to Louisiana, where my constituent Rümeysa Öztürk is being wrongfully held by ICE. I’m there now demanding her release. More to come.

22.04.2025 21:36 — 👍 30269 🔁 6193 💬 933 📌 443

A circular diagram with a blue whale icon at the center. The diagram shows 8 interconnected research areas around LLM reasoning represented as colored rectangular boxes arranged in a circular pattern. The areas include: §3 Analysis of Reasoning Chains (central cloud), §4 Scaling of Thoughts (discussing thought length and performance metrics), §5 Long Context Evaluation (focusing on information recall), §6 Faithfulness to Context (examining question answering accuracy), §7 Safety Evaluation (assessing harmful content generation and jailbreak resistance), §8 Language & Culture (exploring moral reasoning and language effects), §9 Relation to Human Processing (comparing cognitive processes), §10 Visual Reasoning (covering ASCII generation capabilities), and §11 Following Token Budget (investigating direct prompting techniques). Arrows connect the sections in a clockwise flow, suggesting an iterative research methodology.

Models like DeepSeek-R1 🐋 mark a fundamental shift in how LLMs approach complex problems. In our preprint on R1 Thoughtology, we study R1’s reasoning chains across a variety of tasks; investigating its capabilities, limitations, and behaviour.

🔗: mcgill-nlp.github.io/thoughtology/

Not sure if this has been shared here yet, but this is a video of Rumeysa Ozturk's arrest posted by WCVB. It's terrifying.

26.03.2025 16:43 — 👍 4596 🔁 2300 💬 49 📌 1168