In this amazing multidisciplinary collaboration, we report our early experience with the @openclaw-x.bsky.social ->

23.02.2026 23:32 — 👍 40 🔁 21 💬 1 📌 9

Good question! We measure CoT generalizability: can Model A's reasoning guide Model B to the same conclusion? Whether real reasoning or shared hallucination, both appear "consistent." We test if explanations generalize across models (good explainer), regardless of internal faithfulness.

23.01.2026 04:04 — 👍 0 🔁 0 💬 0 📌 0

What does this mean for RLHF and post-training?

Cross-model consistency could be a valuable training signal for developing more interpretable reasoning models.

See more:

📄 arXiv: arxiv.org/abs/2601.11517

🌐 Website: genex.baulab.info

Thanks to CBAI for pairing me with Chandan! 🙏

🧵(7/7)

What do humans actually prefer?

Participants rated CoTs on clarity, ease of following, and confidence. We find that Consistency >> Accuracy for predicting preferences. Humans trust explanations that multiple models agree on!

🧵(6/7)

Testing on MedCalc-Bench & Instruction-Induction (we added new tasks):

📈 Transfer & ensemble → much higher consistency than baselines

⚠️ Model choice matters! The same transfer approach works great with Model A's CoT but poorly with Model B's.

Some models are better "explainers" than others.

🧵(5/7)

Practical implication: CoT Monitorability

Cross-model consistency provides a complementary signal: explanations that generalize across models may be more monitorable and trustworthy for oversight.

bsky.app/profile/ai-f...

🧵(4/7)

We evaluate 4 approaches:

🔹 Empty CoT: No/empty reasoning

🔹 Default: Model's own CoT

🔹 Transfer CoT: Model A's CoT → Model B

🔹 Ensemble CoT: Combine multiple models' thoughts

We measure cross-model consistency: How often do model pairs reach the same answer (including same wrong answers)?

🧵(3/7)
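A minimal sketch of the pairwise agreement measure described above, assuming each model's final answers are already extracted into lists aligned by question. The function name, model names, and answers are hypothetical, and the preprint's exact metric may differ.

```python
from itertools import combinations


def cross_model_consistency(answers):
    """Fraction of (model pair, question) combinations with identical answers.

    `answers` maps a model name to its list of final answers, aligned by
    question index. Correctness is never consulted, so two models that give
    the same wrong answer still count as agreeing.
    """
    models = sorted(answers)
    if len(models) < 2:
        raise ValueError("need at least two models to form a pair")
    n_questions = len(answers[models[0]])
    if n_questions == 0 or any(len(answers[m]) != n_questions for m in models):
        raise ValueError("answer lists must be non-empty and equally long")

    pairs = list(combinations(models, 2))
    agreements = sum(
        answers[m1][q] == answers[m2][q]
        for m1, m2 in pairs
        for q in range(n_questions)
    )
    return agreements / (len(pairs) * n_questions)


# Hypothetical answers from three models on three questions.
answers = {
    "model_a": ["4", "7", "10"],
    "model_b": ["4", "7", "12"],
    "model_c": ["4", "9", "12"],
}
score = cross_model_consistency(answers)
```

Here the three pairs agree on 5 of 9 pair-question combinations. Comparing this score across the four CoT conditions would require rerunning each model with the corresponding reasoning in its prompt.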

Why does this matter? Faithfulness research shows CoT doesn't always reflect internal reasoning.

What if explanations serve a different purpose?

If Model B follows Model A's reasoning to the same conclusion, maybe these explanations capture something generalizable.

🧵(2/7)

Can models understand each other's reasoning? 🤔

When Model A explains its Chain-of-Thought (CoT), do Models B, C, and D interpret it the same way?

Our new preprint with @davidbau.bsky.social and @csinva.bsky.social explores CoT generalizability 🧵👇

(1/7)

Can you solve this algebra puzzle? 🧩

cb=c, ac=b, ab=?

A small transformer can learn to solve problems like this!

And since the letters don't have inherent meaning, this lets us study how context alone imparts meaning. Here's what we found:🧵⬇️

How can a language model find the veggies in a menu?

New pre-print where we investigate the internal mechanisms of LLMs when filtering on a list of options.

Spoiler: turns out LLMs use strategies surprisingly similar to functional programming (think "filter" from python)! 🧵
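For readers unfamiliar with the reference, here is a small illustration of Python's built-in `filter` applied to a menu. It shows only the programming idiom the post alludes to, not the model's internal mechanism. The menu, the vegetable set, and the predicate are made up for the example.

```python
# Hypothetical menu and vegetable vocabulary, for illustration only.
menu = ["carrot soup", "beef burger", "spinach salad", "chicken wrap"]
VEGGIES = {"carrot", "spinach"}


def has_veggie(item):
    """Predicate: True if any word in the menu item names a vegetable."""
    return any(word in VEGGIES for word in item.split())


# filter() applies the predicate to each element and keeps the ones that pass.
veggie_items = list(filter(has_veggie, menu))
```

The key property is that the predicate is defined separately from the list it is applied to, so the same `has_veggie` can be reused on any other menu.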

What's the right unit of analysis for understanding LLM internals? We explore in our mech interp survey (a major update from our 2024 ms).

We’ve added more recent work and more immediately actionable directions for future work. Now published in Computational Linguistics!

NEMI 2024 (Last Year)

🚨 Registration is live! 🚨

The New England Mechanistic Interpretability (NEMI) Workshop is happening Aug 22nd 2025 at Northeastern University!

A chance for the mech interp community to nerd out on how models really work 🧠🤖

🌐 Info: nemiconf.github.io/summer25/

📝 Register: forms.gle/v4kJCweE3UUH...

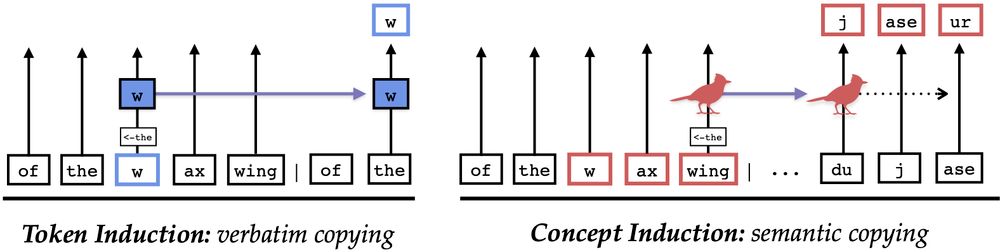

[📄] Are LLMs mindless token-shifters, or do they build meaningful representations of language? We study how LLMs copy text in-context, and physically separate out two types of induction heads: token heads, which copy literal tokens, and concept heads, which copy word meanings.

07.04.2025 13:54 — 👍 76 🔁 19 💬 1 📌 6

The other key tasks are model search and benchmarking, with important applications like document generation and auditing.

Read more in our paper (with @davidbau.bsky.social and Renée Miller) here: arxiv.org/abs/2403.02327

Excited to share that this is accepted to #EDBT2025! 🎉

🧵5/5

The second one is model versioning — where the aim is to map a model’s position within a lake of models, capturing these relationships using directed model graphs.

Other tasks, like model tree heritage recovery and differentiating outputs from various LLMs, are part of model versioning.

🧵4/5
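One way to picture a directed model graph is as a set of child-to-parent edges. The sketch below assumes each model has at most one parent and that the edges are already known. The model names and helper functions are hypothetical and are not taken from the paper. Recovering the edges themselves, which is the hard part of heritage recovery, is out of scope here.

```python
# Child -> parent edges in a hypothetical model lake (single parent per model).
parents = {
    "base-ft-chat": "base",
    "base-ft-chat-quant": "base-ft-chat",
    "base-ft-code": "base",
}


def lineage(model, parents):
    """Walk parent edges from `model` up to its root ancestor."""
    chain = [model]
    seen = {model}
    while chain[-1] in parents:
        nxt = parents[chain[-1]]
        if nxt in seen:
            raise ValueError("cycle detected in model graph")
        chain.append(nxt)
        seen.add(nxt)
    return chain


def descendants(root, parents):
    """Return every model whose lineage passes through `root`, sorted by name."""
    return sorted(
        m for m in parents if root in lineage(m, parents) and m != root
    )


chat_quant_lineage = lineage("base-ft-chat-quant", parents)
base_descendants = descendants("base", parents)
```

Storing edges child-to-parent keeps lineage lookup to a simple upward walk. A model derived from several parents, such as a merge, would need a list of parents per node instead.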

We see four major tasks for Model Lakes.

The first is model attribution — tracing & understanding a model's output through attack techniques like model inversion (recovering user inputs) and interpretability methods like reverse engineering to analyze model behavior.

bsky.app/profile/srus...

🧵3/5

A Model Lake is a system containing numerous heterogeneous pre-trained models and related data in their natural formats. The concept is inspired by data lakes, which collect raw, unstructured data at scale.

By addressing shared challenges across research, we can unlock meaningful solutions. 👇

🧵2/5

Model Lakes Design. A model lake stores models and processes them using techniques like inference, interpretability, weight-space modeling, and indexing to support various user interactions. It generates outputs like version graphs, model cards, and ranked models, refining them into human-readable results, as shown on the figure's right side.

🚀 How would you know what model to use? 🤗

With millions of models emerging rapidly, how do we verify, track, and find the right one?

We survey and formalize Model Lakes 🌊🤖 — a framework to structure, navigate, and make sense of this landscape.

Website: lakes.baulab.info

#AI #Database

🧵1/5

PhD Applicants: remember that the Northeastern Computer Science PhD application deadline is Dec 15.

It's a terrific time to do a PhD, with so many interesting things happening in AI.

Apply here:

www.khoury.northeastern.edu/apply/phd-ap...

More big news! Applications are open for the NDIF Summer Engineering Fellowship—an opportunity to work on cutting-edge AI research infrastructure this summer in Boston! 🚀

10.12.2024 21:59 — 👍 9 🔁 6 💬 1 📌 2