if you’re at ICLR and also left your poster printing to the last minute

Colour Connect Pte Ltd is goated

large format

1hr

$28

crispy

i'm at ICLR this week

presenting at the morning poster session on thursday

excited to catch up with friends and collaborators, old and new

let's chat

thanks to @yaringal.bsky.social, John P. Cunningham, and David Blei for their help!

13.12.2024 17:26

fun @bleilab.bsky.social x oatml collab

come chat with Nicolas, @swetakar.bsky.social, Quentin, Jannik, and me today

thank you to my co-authors @velezbeltran.bsky.social and @bleilab.bsky.social

13.12.2024 16:10

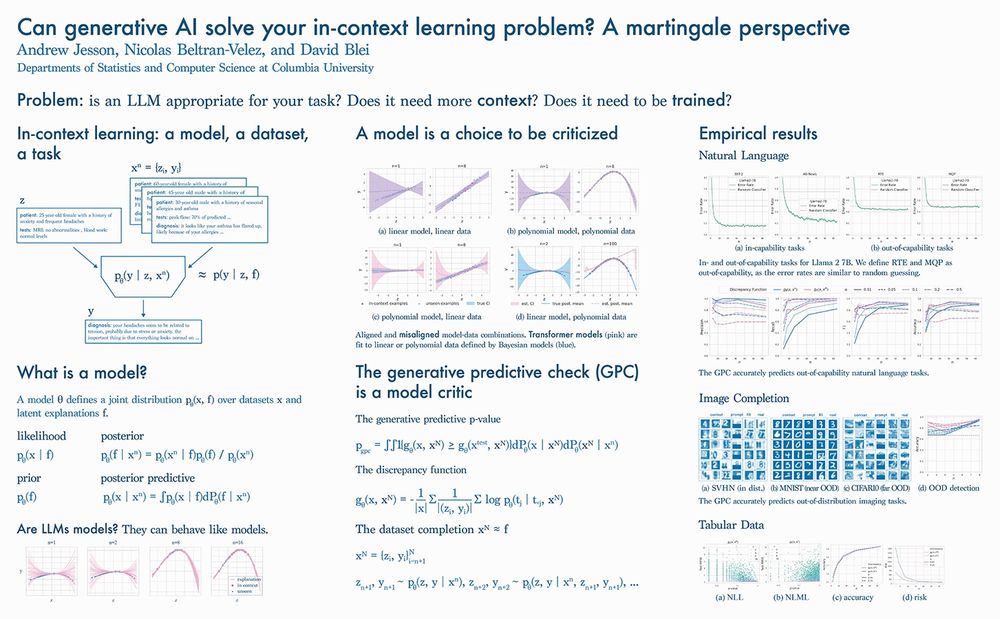

we explore two different discrepancies: the negative log likelihood (NLL), and the negative log marginal likelihood (NLML)
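for intuition, here's a toy sketch (not the paper's code) of the two discrepancies under an assumed Beta-Bernoulli model on binary data: the NLL scores data under one fixed explanation f, while the NLML integrates f out under the prior. `log_beta`, `nll`, and `nlml` are hypothetical names chosen for illustration.

```python
import math


def log_beta(a, b):
    """log B(a, b) computed with log-gamma for numerical stability."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def nll(data, f):
    """NLL of binary data under one fixed explanation f = P(x_i = 1)."""
    return -sum(math.log(f if x == 1 else 1.0 - f) for x in data)


def nlml(data, a=1.0, b=1.0):
    """NLML: -log of the likelihood averaged over a Beta(a, b) prior on f.

    For a specific sequence with k ones out of n, the marginal likelihood
    is B(a + k, b + n - k) / B(a, b).
    """
    k, n = sum(data), len(data)
    return -(log_beta(a + k, b + n - k) - log_beta(a, b))
```

e.g. with a uniform Beta(1, 1) prior, `nlml([1, 0])` is log 6 ≈ 1.79, while `nll([1, 0], 0.5)` is 2 log 2 ≈ 1.39. the NLL needs a specific f (such as a posterior draw); the NLML does not.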

the NLL gives p-values that are informative about whether there are enough in-context examples

this can reduce risk in safety critical settings

we show that the GPC is an effective OOD predictor on generative image completion tasks using a modified Llama-2 model trained from scratch

13.12.2024 16:10

we show that the GPC is an effective predictor of out-of-capability natural language tasks using pre-trained LLMs

13.12.2024 16:10

we show that the GPC is an effective OOD predictor for tabular data using synthetic data and a modified Llama-2 model trained from scratch

13.12.2024 16:10

the result is the generative predictive p-value

pre-selecting a significance level α to threshold the p-value gives us a predictor of model capability: the generative predictive check (GPC)
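a minimal sketch of the thresholding step, assuming we already have one (observed, replicated) discrepancy pair per simulated draw: the p-value is the fraction of draws where the replicated discrepancy is at least the observed one. treating p > α as "passes" follows the usual PPC convention and is an assumption here; `predictive_p_value` and `predictive_check` are hypothetical names.

```python
def predictive_p_value(pairs):
    """Monte Carlo p-value: fraction of draws where D(x_rep) >= D(x_obs).

    `pairs` holds (d_obs, d_rep) tuples, one per simulated draw, so the
    discrepancy may depend on the sampled explanation (as the NLL does).
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one simulated draw")
    return sum(d_rep >= d_obs for d_obs, d_rep in pairs) / len(pairs)


def predictive_check(pairs, alpha=0.05):
    """Threshold the p-value at a pre-selected significance level alpha.

    Returns True when the check passes (p > alpha), i.e. the observed data
    look typical of what the model itself would generate.
    """
    return predictive_p_value(pairs) > alpha
```

a small p-value means the observed data look unusually bad to the model compared with data it generates itself.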

problem:

not all generative models (e.g., LLMs) provide access to the likelihood and posterior

solution:

we can sample dataset completions from the predictive to simulate sampling from the posterior

and we can estimate the likelihood by conditioning on the completions
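a toy sketch of the sampling-only recipe, assuming a Beta(1, 1)-Bernoulli predictive (a Pólya urn) as a hypothetical stand-in for an LLM that only exposes sampling and probabilities. for an exchangeable model like this, the frequency of ones in a long completion behaves like a posterior draw of the latent parameter, so conditioning on the completion gives a likelihood estimate. this illustrates the idea under those assumptions and is not the paper's implementation; all names are made up.

```python
import math
import random

# Hypothetical stand-in for a generative model that only exposes sampling and
# probabilities: a Beta(1, 1)-Bernoulli predictive, i.e. a Polya urn.
PRIOR_A, PRIOR_B = 1.0, 1.0


def prob_one(context):
    """Predictive probability that the next point is 1, given `context`."""
    return (PRIOR_A + sum(context)) / (PRIOR_A + PRIOR_B + len(context))


def sample_completion(context, length, rng):
    """Autoregressively sample `length` new points from the predictive.

    Running counts are tracked so each step is O(1).
    """
    ones, n = sum(context), len(context)
    completion = []
    for _ in range(length):
        x = 1 if rng.random() < (PRIOR_A + ones) / (PRIOR_A + PRIOR_B + n) else 0
        completion.append(x)
        ones += x
        n += 1
    return completion


def nll_given(data, conditioning):
    """Estimate the NLL of `data` by conditioning the predictive on
    `conditioning` and scoring each point without updating on `data`,
    so the conditioning set plays the role of a sampled explanation."""
    p = prob_one(conditioning)
    return -sum(math.log(p if x == 1 else 1.0 - p) for x in data)


def discrepancy_pairs(x_obs, num_draws=200, completion_len=500, seed=0):
    """One (observed NLL, replicated NLL) pair per sampled completion."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(num_draws):
        completion = sample_completion(x_obs, completion_len, rng)
        p = prob_one(completion)
        # Replicate a dataset the size of x_obs from the conditioned predictive.
        x_rep = [1 if rng.random() < p else 0 for _ in x_obs]
        pairs.append((nll_given(x_obs, completion), nll_given(x_rep, completion)))
    return pairs
```

each pair can then be fed to an ordinary Monte Carlo p-value, exactly as in a standard predictive check, without ever touching a likelihood or posterior in closed form.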

understanding these nuances is the domain of Bayesian model criticism

posterior predictive checks form a family of model criticism techniques

but for discrepancy functions like the negative log likelihood, PPCs require the likelihood and posterior
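for contrast, here's a minimal classic PPC with the NLL discrepancy, assuming a conjugate Beta-Bernoulli model where both the posterior (to draw f) and the likelihood (to score data) are available in closed form. these are exactly the two ingredients an LLM doesn't hand you. the model choice, names, and defaults are illustrative assumptions.

```python
import math
import random


def bernoulli_nll(data, f):
    """NLL discrepancy D(x, f) = -sum_i log p(x_i | f) for binary data."""
    return -sum(math.log(f if x == 1 else 1.0 - f) for x in data)


def ppc_p_value(x_obs, a=1.0, b=1.0, num_draws=1000, seed=0):
    """Posterior predictive p-value with the NLL discrepancy.

    Needs both ingredients: draws from the posterior (here the conjugate
    Beta posterior) and the likelihood p(x | f) to score datasets.
    """
    rng = random.Random(seed)
    k, n = sum(x_obs), len(x_obs)
    exceed = 0
    for _ in range(num_draws):
        f = rng.betavariate(a + k, b + n - k)  # f_s ~ p(f | x_obs)
        f = min(max(f, 1e-12), 1.0 - 1e-12)  # guard against log(0) at the edges
        x_rep = [1 if rng.random() < f else 0 for _ in range(n)]  # x_rep ~ p(x | f_s)
        if bernoulli_nll(x_rep, f) >= bernoulli_nll(x_obs, f):
            exceed += 1
    return exceed / num_draws
```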

the posterior is informative about whether there are enough in-context examples

but such inferences are made by any model, even misaligned ones

if a model is too flexible, more examples may be needed to specify the task

if it is too specialized, the inferences may be unreliable

a model θ defines a joint distribution over datasets x and explanations f

the joint comprises the likelihood over datasets and the prior over explanations

the posterior is a distribution over explanations given a dataset

the posterior predictive gives the model a voice
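the objects above, written out in standard Bayesian notation (a sketch; writing x' for a new dataset, and assuming x and x' are conditionally independent given f, are our choices):

```latex
\begin{aligned}
p_\theta(x, f) &= p_\theta(x \mid f)\, p_\theta(f) && \text{joint = likelihood} \times \text{prior} \\
p_\theta(f \mid x) &= \frac{p_\theta(x \mid f)\, p_\theta(f)}{p_\theta(x)}, \quad p_\theta(x) = \int p_\theta(x \mid f)\, p_\theta(f)\, df && \text{posterior} \\
p_\theta(x' \mid x) &= \int p_\theta(x' \mid f)\, p_\theta(f \mid x)\, df && \text{posterior predictive}
\end{aligned}
```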

an in-context learning problem comprises a model, a dataset, and a task

knowing when an LLM provides reliable responses is challenging in this setting

there may not be enough in-context examples to specify the task

or the model may just not have the capability to solve it

can generative ai solve your in-context learning problem?

we develop a predictor that only requires sampling and log probs

we show it works for tabular, natural language, and imaging problems

come chat at the safe generative ai workshop at NeurIPS

📄 arxiv.org/abs/2412.06033

Hello!

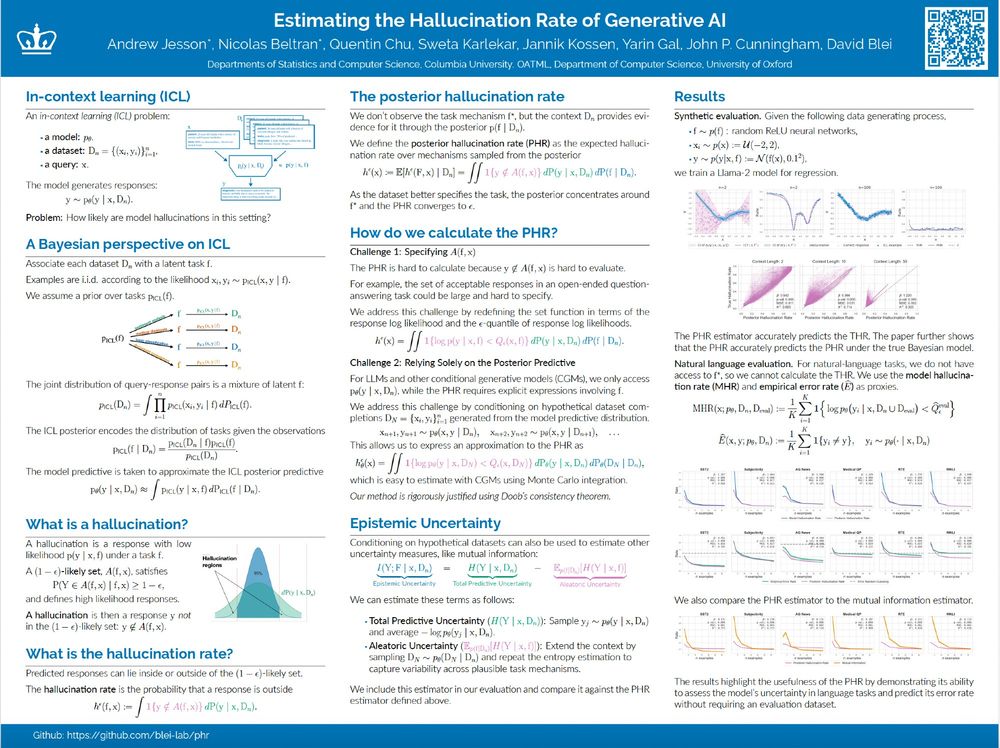

We will be presenting "Estimating the Hallucination Rate of Generative AI" at NeurIPS. Come if you'd like to chat about epistemic uncertainty for In-Context Learning, or uncertainty more generally. :)

Location: East Exhibit Hall A-C #2703

Time: Friday @ 4:30

Paper: arxiv.org/abs/2406.07457

does your circuit preserve model behavior?

does removing it disable the task?

does it contain redundant parts?

don't know?

then come chat about hypothesis testing for mechanistic interpretability

poster 2803 East 4:30-7:30pm at NeurIPS

The circuit hypothesis proposes that LLM capabilities emerge from small subnetworks within the model. But how can we actually test this? 🤔

joint work with @velezbeltran.bsky.social, @maggiemakar.bsky.social, @anndvision.bsky.social, @bleilab.bsky.social, Adria @far.ai, Achille, and Caro

ofc

i love openreview

but damn

that's a lot of tabs

(Shameless) plug for David Blei's lab at Columbia University! People in the lab currently work on a variety of topics, including probabilistic machine learning, Bayesian stats, mechanistic interpretability, causal inference and NLP.

Please give us a follow! @bleilab.bsky.social

youtu.be/X-Rgc7VzIp0?...

17.02.2024 22:02