Link to Poster & Paper --> neurips.cc/virtual/2025...

01.12.2025 13:23 — 👍 2 🔁 0 💬 0 📌 0

🎤Come see the poster at #NeurIPS2025: 📍 Wed Dec 3, 4:30-7:30 PM PST 📍 Exhibit Hall C,D,E #5000

This started as exploring how to predict neuronal responses to videos! First preprint here:

arxiv.org/abs/2505.07620 (soon to be updated)

Let's chat about bio-inspired vision! 🚀

Using Representational Similarity Analysis, we found HoCNNs learn fundamentally different geometries than standard CNNs.
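For readers unfamiliar with Representational Similarity Analysis, here is a minimal sketch of the general technique, not the paper's actual pipeline. It assumes activations are stored as a `(n_stimuli, n_features)` array and uses `1 - Pearson r` as the dissimilarity. The function names `rdm` and `rdm_similarity` are hypothetical. RDMs are often compared with a rank correlation; plain Pearson correlation is used here only to keep the example NumPy-only.

```python
import numpy as np

def rdm(activations):
    """Representational dissimilarity matrix.

    activations: array of shape (n_stimuli, n_features).
    Entry (i, j) is 1 - Pearson correlation between the feature
    vectors evoked by stimulus i and stimulus j.
    """
    return 1.0 - np.corrcoef(activations)

def rdm_similarity(rdm_a, rdm_b):
    """Pearson correlation between the upper triangles of two RDMs."""
    iu = np.triu_indices_from(rdm_a, k=1)  # off-diagonal entries, each pair once
    return np.corrcoef(rdm_a[iu], rdm_b[iu])[0, 1]

# Toy data standing in for layer activations of two networks (assumption).
rng = np.random.default_rng(0)
acts_a = rng.normal(size=(6, 32))
acts_b = rng.normal(size=(6, 32))

print(rdm_similarity(rdm(acts_a), rdm(acts_b)))
```

A low similarity between two networks' RDMs indicates that they arrange the same stimuli differently in representation space.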

Their representations are more dispersed in high-dimensional space → better class separation and more discriminative features!

We then systematically perturbed images with controlled higher-order statistics.

HoCNNs showed increased sensitivity to these perturbations—proving they genuinely rely on higher-order correlations! Yet they're more robust to common corruptions (CIFAR-10-C).

We use our higher-order convolutional layer in different network architectures, from vanilla CNNs to ResNet-18, and validate it across different datasets.

01.12.2025 13:23 — 👍 1 🔁 0 💬 1 📌 0

Key finding: Optimal performance at 3rd/4th order!

This remarkably aligns with Koenderink & van Doorn's analysis: natural images have ~63% quadratic, ~35% cubic, and ~2% quartic correlations. Our model learns to match the statistical structure of the natural world.

Our solution: Higher-order convolutions!

We extend standard convolution to include learnable 2nd, 3rd, and 4th order terms with independent weights. Each order captures different aspects of visual structure—from edges (1st) to complex textures (3rd/4th).
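To make the idea concrete, here is a minimal 1-D sketch of a convolution with independent 1st, 2nd, and 3rd order kernels. It is an illustration under stated assumptions, not the paper's layer: the function name `higher_order_conv1d`, the 1-D setting, the cutoff at 3rd order, and the toy kernels are all hypothetical choices made for brevity.

```python
import numpy as np

def higher_order_conv1d(x, w1, w2, w3, bias=0.0):
    """Sliding-window polynomial filter with independent weights per order.

    For each window p of length k the output is
      bias + sum_i w1[i] p[i]
           + sum_ij w2[i, j] p[i] p[j]
           + sum_ijk w3[i, j, k] p[i] p[j] p[k]
    """
    k = w1.shape[0]
    x = np.asarray(x, dtype=float)
    # All length-k windows: shape (len(x) - k + 1, k)
    patches = np.lib.stride_tricks.sliding_window_view(x, k)
    first = patches @ w1
    second = np.einsum("pi,pj,ij->p", patches, patches, w2)
    third = np.einsum("pi,pj,pk,ijk->p", patches, patches, patches, w3)
    return bias + first + second + third

# Toy kernels (assumptions): pick out p[0], p[0]*p[1], and nothing at 3rd order.
k = 2
w1 = np.array([1.0, 0.0])
w2 = np.zeros((k, k)); w2[0, 1] = 1.0
w3 = np.zeros((k, k, k))

print(higher_order_conv1d([1, 2, 3, 4], w1, w2, w3))  # [ 3.  8. 15.]
```

Because `w1`, `w2`, and `w3` are separate arrays, each order can be learned independently instead of being tied together through a single linear filter.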

The issue: Standard CNNs use pointwise nonlinearities with tied weights. When you expand σ(w₁x₁ + w₂x₂), different orders share the same weights—limiting expressivity!
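A short worked expansion may help here. It is a sketch that assumes σ is smooth enough to Taylor-expand around 0; the coefficients a_n and the free weights w_11, w_12, w_22 are notation introduced for illustration, not taken from the paper.

```latex
\sigma(u) = \sum_{n \ge 0} a_n u^n, \qquad u = w_1 x_1 + w_2 x_2, \qquad a_n = \frac{\sigma^{(n)}(0)}{n!}

a_2 u^2 = a_2 \left( w_1^2 x_1^2 + 2 w_1 w_2 \, x_1 x_2 + w_2^2 x_2^2 \right)

\text{free second-order term: } \quad w_{11} x_1^2 + w_{12} \, x_1 x_2 + w_{22} x_2^2
```

In the tied case, the three second-order coefficients are all fixed by the same two numbers w_1 and w_2. A free second-order term has three independent coefficients.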

Natural images have rich higher-order correlations that basic convolutions struggle to capture.

🧠🔬 Excited to share our #NeurIPS2025 paper: "Convolution Goes Higher-Order"!

We asked: Can shallow networks be as expressive as deep ones? Inspired by biological vision, we introduce higher-order convolutions that capture complex image patterns standard CNNs miss.

🧵👇

This project was done jointly with @slneuro.bsky.social, building on deep intuitions and under the supervision of Matthew Chalk! 🙏

Come discuss at #NeurIPS2025! 🎪

📍 Fri Dec 5, 11 AM-2 PM PST

📍 Exhibit Hall C,D,E #2005

Scales to natural images too! 🐦🐱

Tested with realistic retinal ganglion cell models. Again, I_local peaks at high-contrast regions & object boundaries—the regions contributing most to encoded information.

Shows the method works beyond toy examples!

Applied to visual neurons responding to MNIST digits: I_local(x) reveals information is concentrated along object EDGES—exactly where the decoded images are most sensitive to stimulus changes! 🎯

Compare to Fisher info which just shows "blobs" at receptive field locations.

The magic: our decomposition can be efficiently computed using diffusion models!

Train conditional & unconditional models to predict X from noisy observations. This makes the method scalable to high-dimensional naturalistic stimuli—something previous methods couldn't handle.

We derive a new decomposition "I_local(x)" that satisfies ALL axioms! 🎉

Key insight: integrate Fisher information over noise-corrupted stimuli. This generalizes Fisher info to finite perturbations while remaining a valid mutual information decomposition.

Our approach: introduce 4 core axioms any meaningful decomposition should satisfy:

✓ Completeness: recovers total mutual information

✓ Locality: local changes have local effects

✓ Positivity: information ≥ 0

✓ Additivity: combines measurements properly

The challenge: decomposing mutual information I(R;X) into stimulus-specific contributions I(x) is fundamentally ill-posed - many solutions exist!

Previous methods (Fisher info, stimulus-specific info) violate key properties, making them hard to interpret as sensitivity measures.

🧠 How do neurons encode information? We know HOW MUCH, but what about WHAT information they encode?

Our new work uses diffusion models to decompose neural information down to individual stimuli & features!

🎯Spotlight at #NeurIPS2025 🌟📄

arxiv.org/abs/2505.11309

Happy to share my first work with a connection to myopia, a collaboration with EssilorLuxottica

www.science.org/doi/10.1126/...

🕳️🐇Into the Rabbit Hull – Part II

Continuing our interpretation of DINOv2, the second part of our study concerns the *geometry of concepts* and the synthesis of our findings toward a new representational *phenomenology*:

the Minkowski Representation Hypothesis

Figuring out how the brain uses information from visual neurons may require new tools, writes @neurograce.bsky.social. Hear from 10 experts in the field.

#neuroskyence

www.thetransmitter.org/the-big-pict...

🚨 New preprint out from our lab!

📄 The Rod Bipolar Cell Pathway Contributes to Surround Responses in OFF Retinal Ganglion Cells

👉 www.biorxiv.org/content/10.1...

Images (or image patches) are secretly multi-channel signals over groups. Below, the dihedral group of order 8: reflecting/rotating the image permutes the values in the magenta vector. So we can reshape the image into 8-tuples that all permute according to the dihedral group (edge case diagonals).
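The claim can be checked numerically with a small sketch. It assumes a square image and builds the 8 symmetries from `np.rot90` and `np.fliplr`; the helper names `d4_elements` and `orbit_tuple` are hypothetical.

```python
import numpy as np

def d4_elements():
    """The 8 symmetries of a square, as functions on 2-D arrays."""
    rotations = [lambda a, k=k: np.rot90(a, k) for k in range(4)]
    reflections = [lambda a, k=k: np.rot90(np.fliplr(a), k) for k in range(4)]
    return rotations + reflections

def orbit_tuple(img, r, c):
    """Values seen at position (r, c) under each of the 8 symmetries."""
    return np.array([g(img)[r, c] for g in d4_elements()])

img = np.arange(16).reshape(4, 4)               # distinct pixel values
before = orbit_tuple(img, 0, 1)                 # 8-tuple for an off-diagonal pixel
after = orbit_tuple(np.rot90(img), 0, 1)        # same tuple after rotating the image

print(before, after)
print(sorted(before.tolist()) == sorted(after.tolist()))  # True: same values, reordered
print(len(set(orbit_tuple(img, 0, 0).tolist())))          # 4: diagonal pixels have smaller orbits
```

Rotating the image does not change which values appear in the tuple, only their order. This is what it means for the 8-tuple to transform as a signal over the group. Pixels on a diagonal are the edge case, since several symmetries map them to the same position.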

03.10.2025 09:13 — 👍 9 🔁 1 💬 2 📌 0

Thrilled to see this work accepted at NeurIPS!

Kudos to @hafezghm.bsky.social for the heroic effort in demonstrating the efficacy of seq-JEPA in representation learning from multiple angles.

#MLSky 🧠🤖

We present our preprint on ViV1T, a transformer for dynamic mouse V1 response prediction. We reveal novel response properties and confirm them in vivo.

With @wulfdewolf.bsky.social, Danai Katsanevaki, @arnoonken.bsky.social, @rochefortlab.bsky.social.

Paper and code at the end of the thread!

🧵1/7

Our illustrated guide to non-Euclidean ML is finally published!

Check it out for

⭐️ gorgeous figures (with new additions!) on topology, algebra, and geometry in the field

⭐️ broken down tables for easy reading

⭐️ accessible text, additional refs, and more

iopscience.iop.org/article/10.1...

🚨New paper🚨

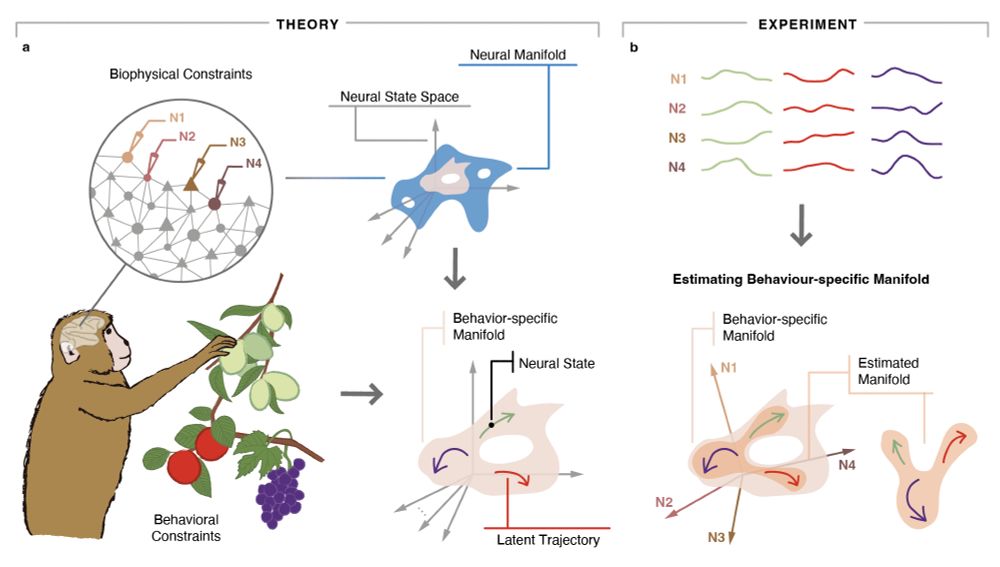

Neural manifolds went from a niche term to a ubiquitous one in systems neuro thanks to many interesting findings across fields. But as with any emerging term, people use it very differently.

Here, we clarify our take on the term, and review key findings & challenges rdcu.be/ex8hW

Do you study neural systems with feedback at different temporal, spatial, hierarchical, data, or computational scales? Have you submitted your abstract to the "Neurocybernetics at Scale" symposium?

Due to multiple requests, the new abstract submission deadline is 18 July! #AI4Science #cybernetics

NeurReps is back for its 4th edition at NeurIPS 2025! Stay tuned for updates!

10.07.2025 13:16 — 👍 9 🔁 4 💬 0 📌 0

Exciting new preprint from the lab: “Adopting a human developmental visual diet yields robust, shape-based AI vision”. A most wonderful case where brain inspiration massively improved AI solutions.

Work with @zejinlu.bsky.social @sushrutthorat.bsky.social and Radek Cichy

arxiv.org/abs/2507.03168

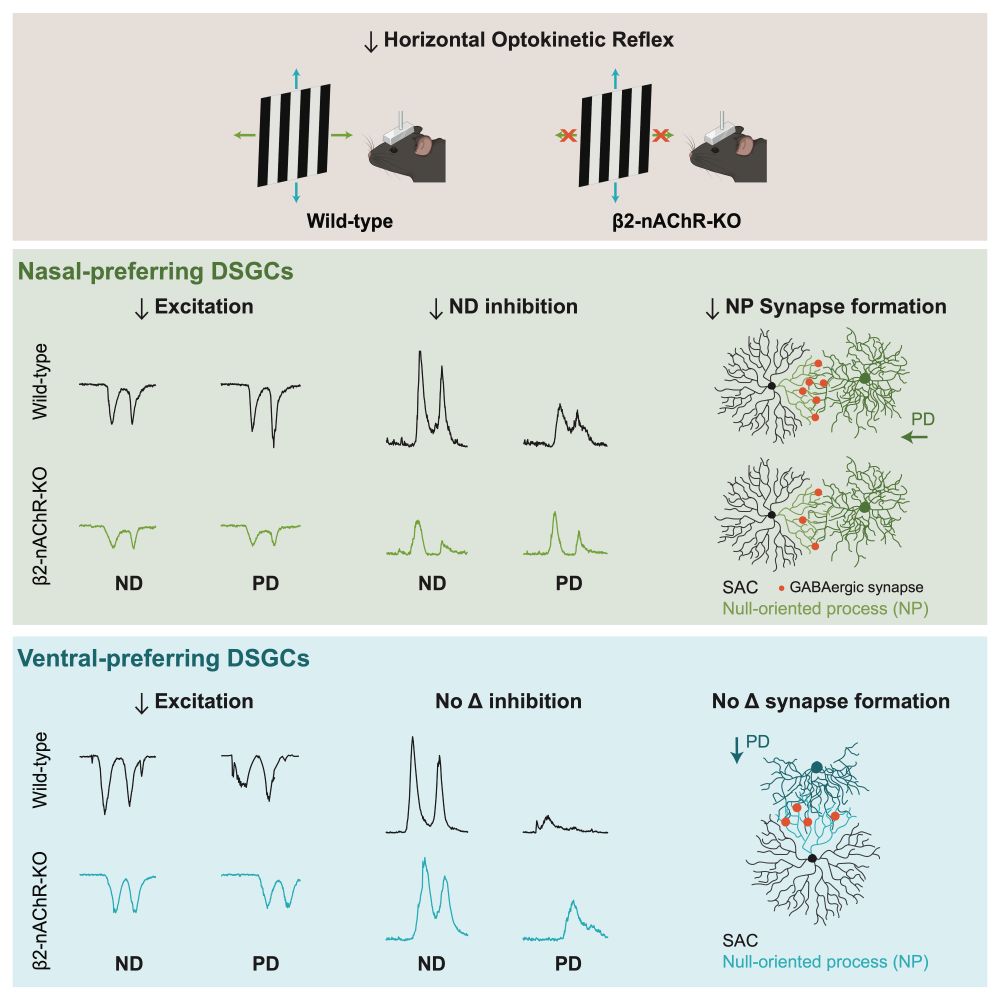

Lack of spontaneous activity (#retinalwaves) in early development prevents the formation of retinal circuits critical for stabilizing images as we move through the world. doi.org/10.1016/j.ce....

30.06.2025 16:21 — 👍 20 🔁 5 💬 1 📌 0