Thank you!

22.02.2026 18:15 — 👍 0 🔁 0 💬 0 📌 0

Thank you!

22.02.2026 18:15 — 👍 0 🔁 0 💬 0 📌 0

Here's my previous post for summary and preprint link: bsky.app/profile/yong...

22.02.2026 01:31 — 👍 0 🔁 0 💬 0 📌 0

New paper with @timbrady.bsky.social and @violastoermer.bsky.social now out in JoCN! "Real-world Objects Scaffold Visual Working Memory for Features: Increased Neural Engagement When Colors Are Remembered as Part of Meaningful Objects" doi.org/10.1162/JOCN...

22.02.2026 01:29 — 👍 39 🔁 11 💬 2 📌 0

This highlights the role of semantics in VWM, challenging models that treat WM as primarily perceptual with fixed limits. Using naturalistic stimuli with careful evaluations may enable us to probe the rich structure of VWM, offering a deeper understanding of how VWM is used in the real world. 11/

09.02.2026 21:14 — 👍 1 🔁 0 💬 0 📌 0

How can real objects enhance VWM? We hypothesize that semantic distinctiveness is the key: beyond visual features, real objects engage conceptual knowledge, yielding more distinctive and potentially stable memory representations, ultimately aiding performance. 10/

09.02.2026 21:13 — 👍 3 🔁 0 💬 1 📌 0

Lastly, a large-sample (n=300) correlation analysis showed that subjective familiarity ratings predicted memory performance for real objects, while colourfulness scaled with memory performance for counterfeit objects, even though both stimulus sets were matched in colourfulness scores. 9/

09.02.2026 21:12 — 👍 0 🔁 0 💬 1 📌 0

ERP pattern similarity analysis showed that encoding and remembering real objects resulted in earlier and more robust pattern stability than counterfeit objects. This may suggest that real objects result in quicker and more stable memory representations due to available visual-semantic templates. 8/

09.02.2026 21:10 — 👍 1 🔁 0 💬 1 📌 0

CDA is one way to look at neural engagement, but it only captures an average amplitude over predefined time windows. Another way to examine neural engagement is to look at how the patterns of neural activity change dynamically over time. 7/

09.02.2026 21:09 — 👍 1 🔁 0 💬 1 📌 0

We also looked at the underlying neural activity using EEG, specifically contralateral delay activity (CDA). The results showed increased neural engagement when remembering real objects compared with counterfeit objects, seen in both heightened and more spread-out lateralized ERP activity. 6/

09.02.2026 21:09 — 👍 0 🔁 0 💬 1 📌 0

Strikingly, despite this match, VWM performance improved only for real objects, not counterfeit ones. Counterfeit objects resulted in similar performance to fully scrambled shapes. 5/

09.02.2026 21:08 — 👍 0 🔁 0 💬 1 📌 0

First, we tested whether these counterfeit objects are well matched in perceptual similarity with real objects. Using CNNs, we extracted visual features of each object and quantified similarities among them. This confirmed that counterfeit objects are indeed visually matched to real objects. 4/

09.02.2026 21:08 — 👍 0 🔁 0 💬 1 📌 0

Here, we tackled this using “counterfeit objects” (Cooper et al., 2023): GAN-generated images that match real objects in visual properties but are novel and unrecognizable. 3/

09.02.2026 21:07 — 👍 1 🔁 0 💬 1 📌 0

Memory performance is often compared across drastically different-looking stimulus types, such as real objects versus colored circles or scrambled shapes. In particular, real objects are visually more unique and discernible. Can these perceptual differences explain the memory benefit? 2/

09.02.2026 21:07 — 👍 0 🔁 0 💬 1 📌 0

New preprint with @SamJung @timbrady.bsky.social and @violastoermer.bsky.social: osf.io/preprints/ps.... Here we uncover what might be driving the “meaningfulness benefit” in visual working memory. Studies show that real objects are remembered better in VWM tasks than abstract stimuli. But why? 1/

09.02.2026 21:06 — 👍 41 🔁 24 💬 1 📌 0

🚨New paper alert🚨

As a synthesis of my PhD research, we revisited the prevailing assumptions about the mechanisms underlying repetition learning, and re-evaluated these assumptions in light of recent findings.

Now out in Perspectives on Psychological Science:

doi.org/10.1177/1745...

Make it your New Year resolution to add a #workingmemory dataset to OpenWMData so that we can curate our field's precious data, start testing theories and benchmarking models across datasets, conduct secondary analyses and meta-research using the data itself, and help me feel like I'm, like, alive.

02.01.2026 04:37 — 👍 28 🔁 15 💬 1 📌 0

Has anyone attended any pre-data-collection poster sessions (i.e., poster sessions where people present their plans for experiments before data collection in order to get feedback when it's most useful) at conferences other than VSS?

20.12.2025 00:55 — 👍 6 🔁 3 💬 2 📌 0

Broadly, this suggests that the FFDE is relatively location-specific. Fun and quite shocking illusion to look at!

14.11.2025 18:24 — 👍 0 🔁 0 💬 0 📌 0

Our results consistently showed that the illusion was significantly disrupted by location shifts of the faces. We also looked at how the illusion develops over time, something that hasn't been looked at closely before.

14.11.2025 18:24 — 👍 0 🔁 0 💬 1 📌 0

FFDE is a fun illusion where peripherally presented faces start looking monstrous. Here we test whether this illusion transfers to new locations during the face streams. Importantly, we use joysticks so that people can continuously rate how weird the faces get, capturing the full dynamics.

14.11.2025 18:22 — 👍 0 🔁 0 💬 1 📌 0

New paper with NicoleAnayaSosa and @violastoermer.bsky.social now out in Perception! "Testing location invariance of the flashed face distortion effect" journals.sagepub.com/eprint/WVUFW...

14.11.2025 16:48 — 👍 9 🔁 3 💬 1 📌 0

Tomorrow afternoon I'll be presenting my symposium talk at #ESCoP2025 titled "Meaningful and familiar stimuli support visual working memory for simple features"! See you there!

02.09.2025 20:53 — 👍 21 🔁 7 💬 0 📌 0

We are now recruiting STEM mentors for the 2025-2026 graduate school application cycle!

⏰ Mentor applications close on July 31st, 2025 ⏰

✨ APPLY HERE: dashboard.project-short.com ✨

Questions? Email us: contact@project-short.com

#gradschool #phd #phdapplication #gradadmissions

And here is a preprint link: osf.io/preprints/ps...

10.07.2025 00:57 — 👍 1 🔁 0 💬 0 📌 0

New paper with LaurenWilliams, @timbrady.bsky.social, and @violastoermer.bsky.social now out in JEP: General! "Limits of verbal labels in cognition: Category labels do not improve visual working memory performance for obfuscated objects" psycnet.apa.org/record/2026-...

10.07.2025 00:53 — 👍 15 🔁 4 💬 1 📌 0

This afternoon I’ll be presenting my poster at #vss2025 titled “Perceptual and conceptual contributions of the real-world object benefit in visual working memory: Is looking like an object good enough to enhance memory?” See you at the Pavilion!

18.05.2025 13:45 — 👍 3 🔁 1 💬 0 📌 0

Overall, our results add to the emerging evidence that meaningful and familiar stimuli can enhance visual working memory processes and also show that remembering meaningful stimuli and simple features share core active cognitive processes.

17.05.2025 20:37 — 👍 0 🔁 0 💬 0 📌 0

Additionally, the meaningfulness benefit showed up even in the first five trials of the task, showing how robust the effect is in visual working memory.

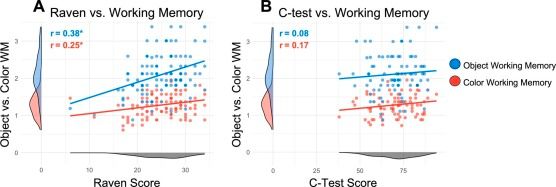

17.05.2025 20:35 — 👍 0 🔁 0 💬 1 📌 0

We also find that the size of the working memory boost individuals get from meaningful stimuli correlates with fluid intelligence scores, suggesting a link between the meaningfulness benefit and fluid intelligence.

17.05.2025 20:33 — 👍 0 🔁 0 💬 1 📌 0

In this paper we show that working memory performance for both real-world objects and colored circles reliably correlates with individual differences in fluid intelligence, but not with crystallized intelligence measures.

17.05.2025 20:33 — 👍 0 🔁 0 💬 1 📌 0