New work from the team on identifying memorized training samples for free

26.06.2025 16:27 —

👍 0

🔁 0

💬 0

📌 0

To properly defend LLM agents against prompt injection, we need 1️⃣ better defenses which are robust against informed adversaries, and 2️⃣ account for these vulnerabilities even in “aligned” LLMs when deploying them as agents.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

💬 Does this mean the existing alignment-based defenses 🛡️ are not useful? No! But they are likely more brittle than previously believed.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

More specifically, it uses intermediate training checkpoints as “stepping stones” 👣🪨 to craft attacks against the final aligned model. This is hugely successful with the suffixes found by Checkpoint-GCG, bypassing SOTA defenses such as SecAlign 90%+ of the time 🎯.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

We propose Checkpoint-GCG, an attack method that assumes an informed adversary with some knowledge of the alignment mechanism 🧭.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

🤔 How would we know this though? We propose to use informed adversaries – attackers with more knowledge than currently seems “realistic”, to evaluate the robustness of defenses against future, yet-unknown attacks like we do in privacy.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

With LLMs being integrated into systems everywhere and deployed as agents, we however argue that this is not enough ⚠️. We cannot constantly pen-and-patch, patching LLMs every time a new attack is discovered. We need to ensure our defenses are robust and future-proof 🦾.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

Recent methods claim near-perfect protection against existing red teaming attacks, including GCG, which automatically finds adversarial suffixes to manipulate model behaviour.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

🛡️ Today’s defenses against prompt injection typically rely on alignment-based training, teaching LLMs to ignore injected instructions 💉.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

Sophisticated prompt injection attacks are often done by pairing instructions with adversarial suffixes 💣 that trick models into following the injected instructions.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

This is known as prompt injection 💉, where malicious actors hide instructions in files or web pages (like invisible white text) that manipulate the LLM’s behaviour.

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

Have you ever uploaded a PDF 📄 to ChatGPT 🤖 and asked for a summary? There is a chance the model followed hidden instructions inside the file instead of your prompt 😈

A thread 🧵

20.06.2025 10:50 —

👍 0

🔁 0

💬 1

📌 0

This is an exciting opportunity for technically strong and curious candidates who want to do meaningful research that influences both academia and industry. If you’re weighing the next step in your career, we offer a path to impactful, high-quality research with freedom to explore

20.05.2025 10:33 —

👍 0

🔁 0

💬 1

📌 0

To see more of our work and get to know the team, check here (cpg.doc.ic.ac.uk)!

20.05.2025 10:33 —

👍 0

🔁 0

💬 1

📌 0

✅Can individuals be re-identified even from aggregated statistics? (arxiv.org/abs/2504.18497)

✅How can we efficiently identify training samples at risk of leaking in ML models? (arxiv.org/abs/2411.05743)

20.05.2025 10:33 —

👍 0

🔁 0

💬 1

📌 0

✅How can we rigorously measure what LLMs memorize? (arxiv.org/abs/2406.17975)

✅How can we automatically discover privacy vulnerabilities in query-based systems at scale and in practice? (arxiv.org/abs/2409.01992)

20.05.2025 10:33 —

👍 0

🔁 0

💬 1

📌 0

Happy to share that we are offering one additional fully-funded PhD position starting in Fall 2025! Our research group at Imperial College London works on machine learning and data privacy and security.

Recently, we tackled questions such as:

20.05.2025 10:33 —

👍 2

🔁 0

💬 1

📌 0

🚨One (more!) fully-funded PhD position in our group at Imperial College London – Privacy & Machine Learning 🔐🤖 starting Oct 2025

Plz RT 🔄

20.05.2025 10:33 —

👍 1

🔁 1

💬 1

📌 0

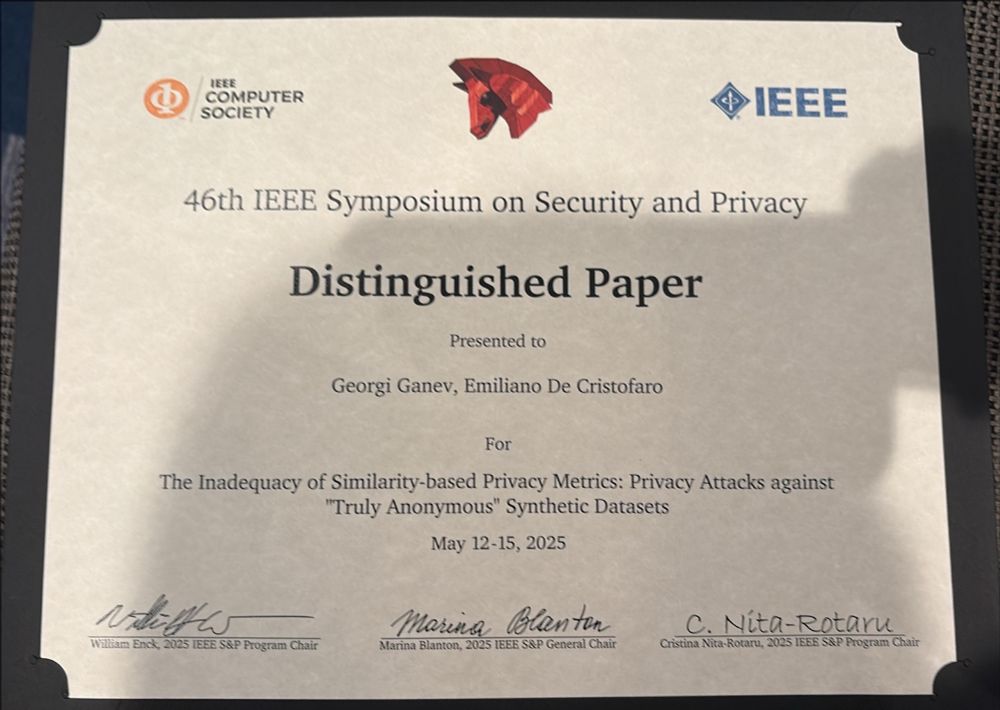

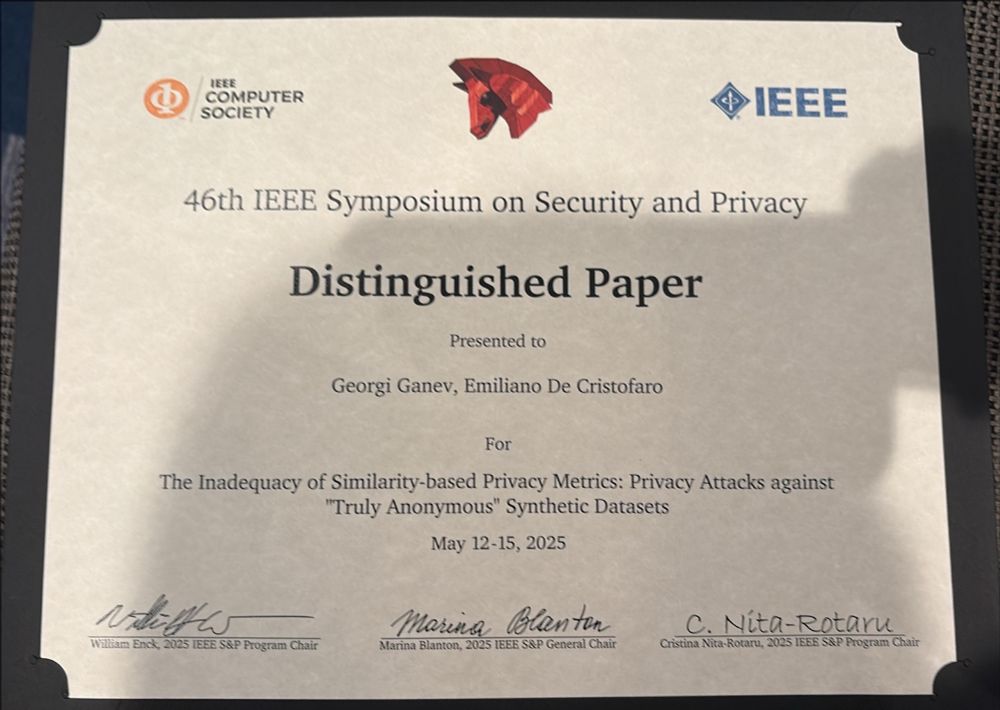

Huge congrats to @spalab.cs.ucr.edu's Georgi Ganev for receiving the Distinguished Paper Award at IEEE S&P for his work "The Inadequacy of Similarity-based Privacy Metrics: Privacy Attacks against “Truly Anonymous” Synthetic Datasets."

Paper: arxiv.org/pdf/2312.051...

14.05.2025 17:51 —

👍 18

🔁 4

💬 1

📌 0

What should I do then? Use MIAs. They are the rigorous and comprehensive standard for evaluating the privacy of synthetic data, including making legal anonymity claims, and when comparing models.

09.05.2025 12:21 —

👍 0

🔁 0

💬 1

📌 0

DCR indeed only appears to catch the most obvious privacy failures, like synthetic datasets that contain large numbers of exact copies from the training data.

09.05.2025 12:21 —

👍 0

🔁 0

💬 1

📌 0

📏 DCR fails to detect privacy leakage, but could it still work as an inexpensive, directional signal for privacy risk? In our experiments, DCR shows no correlation with how vulnerable a dataset is to membership inference attacks.

09.05.2025 12:21 —

👍 0

🔁 0

💬 1

📌 0

😨 The same holds for classical synthetic data generators (IndHist, Baynet, CTGAN): even when DCR marks their output as “private,” membership inference attacks can still correctly correctly infer the membership of up to 20% of the training records used to generate the synthetic data.

09.05.2025 12:21 —

👍 0

🔁 0

💬 1

📌 0

😶🌫️ Datasets generated by state-of-the-art tabular diffusion models (TabDDPM, ClavaDDPM) declared “private” by DCR are highly vulnerable to membership inference attacks (MIAs) – reaching up to 0.35 true positive rate (TPR) at a low false positive rate (FPR).

09.05.2025 12:21 —

👍 0

🔁 0

💬 1

📌 0

How do you know your synthetic data is anonymous 🥸?

If your answer is “we checked Distance to Closest Record (DCR),” then… we might have bad news for you.

Our latest work shows DCR and other proxy metrics to be inadequate measures of the privacy risk of synthetic data.

09.05.2025 12:21 —

👍 2

🔁 1

💬 1

📌 0

We hope DeSIA will now help do the same for what is arguably the most common data release in practice: aggregate statistics.

07.05.2025 11:15 —

👍 0

🔁 0

💬 0

📌 0