Hello all! 👋 🚨 New Preprint Alert! 🚨

Code World Models for General Game-Playing. ♟️🎲 ♣️♥️♠️♦️

I am pleased to announce our new paper, which provides an extremely sample-efficient way to create an agent that can perform well in multi-agent, partially-observed, symbolic environments!

🧵 1/N

09.10.2025 19:27 —

👍 54

🔁 9

💬 2

📌 4

This is the most interesting contribution to the discussion about consciousness I've read in years. For me, the discussion is pretty much resolved.

17.06.2025 15:32 —

👍 4

🔁 1

💬 0

📌 0

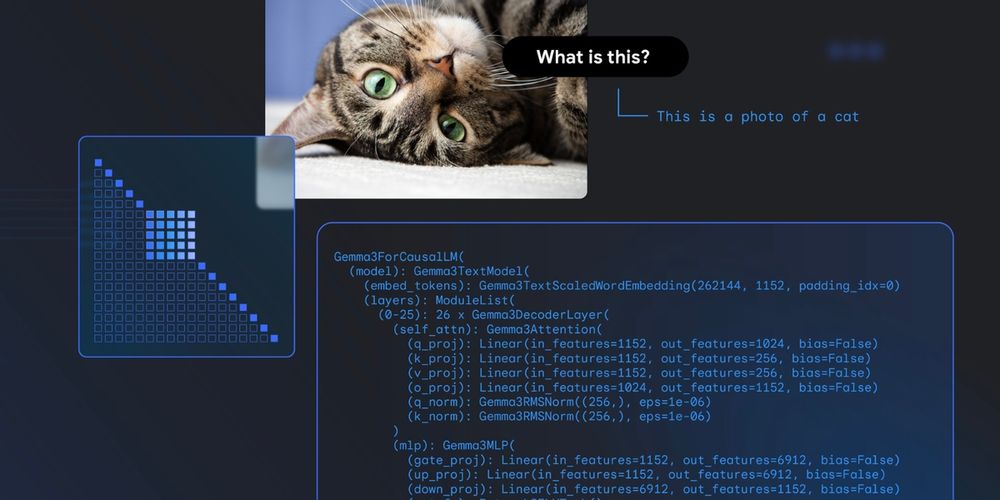

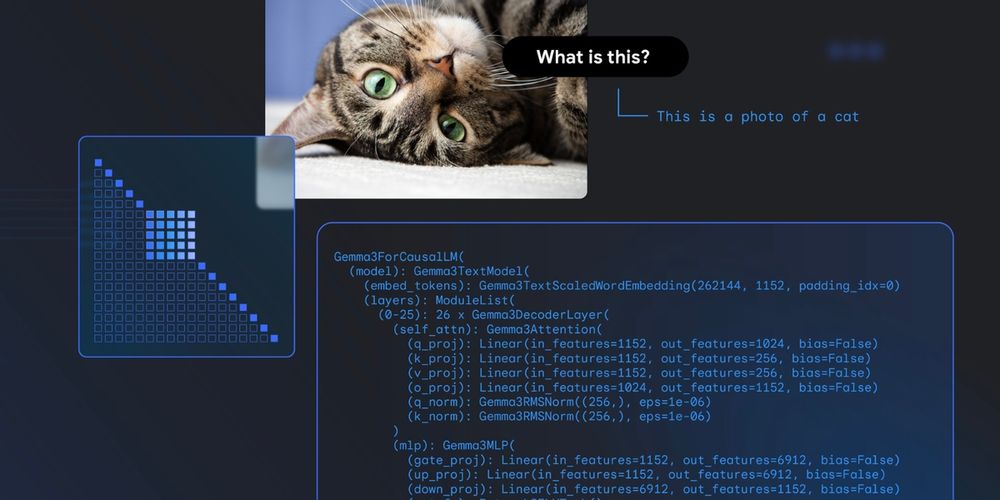

Gemma explained: What’s new in Gemma 3 - Google Developers Blog

Google's Gemma 3 model includes vision-language support and architectural changes for resource-friendly multimodal language models.

Gemma 3 explained: Longer context, image support, and a new 1B model. → goo.gle/4lV8iaw

Other key enhancements:

🔸 Best model that fits in a single consumer GPU or TPU host

🔸 KV-cache memory reduction with 5-to-1 interleaved attention

🔸 And more!

Read the blog for the full details on Gemma 3.
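As a rough illustration of why the 5-to-1 interleaving mentioned above shrinks the KV cache, here is a minimal sketch. It assumes that "5-to-1" means five local (sliding-window) attention layers for every global layer, and that a local layer only caches the most recent `window` tokens. The layer count, context length and window size are hypothetical numbers chosen for the arithmetic, not figures taken from the post, and `kv_cache_slots` is a made-up helper name.

```python
def kv_cache_slots(num_layers: int, context_len: int, window: int,
                   local_per_global: int) -> int:
    """Count cached token positions summed over all layers.

    Assumption: every (local_per_global + 1)-th layer is global and caches
    the full context; the other layers are local and cache at most
    `window` tokens.
    """
    total = 0
    for layer in range(num_layers):
        is_global = (layer + 1) % (local_per_global + 1) == 0
        total += context_len if is_global else min(window, context_len)
    return total


# Hypothetical configuration, for illustration only.
layers, ctx, win = 48, 32_768, 1_024

interleaved = kv_cache_slots(layers, ctx, win, local_per_global=5)
all_global = layers * ctx  # baseline: every layer caches the full context

print(f"interleaved: {interleaved} slots")
print(f"all-global:  {all_global} slots")
print(f"ratio:       {interleaved / all_global:.3f}")
```

Under these assumptions the saving grows with context length, because only one layer in six pays the full per-token cost.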

30.04.2025 21:46 —

👍 22

🔁 8

💬 1

📌 0

Today we are releasing the dataset of table tennis ball trajectories used to train the Google DeepMind robot that can play amateur table tennis with humans (sites.google.com/corp/view/co...). This work was accepted for presentation at #ICRA2025 and we hope to see you there!

30.04.2025 16:15 —

👍 20

🔁 3

💬 1

📌 0

YouTube video by Google for Developers

Inside Gemma 3: Modifying the output through activation hacking

The Gemma 3 models are just amazing!

But what if you want to manipulate their internal activations to understand how they generate text?

Sascha Rothe is here to teach you how!

Great insights for anyone curious about the inner workings of LLMs!

www.youtube.com/watch?v=JTUs...

28.04.2025 13:57 —

👍 10

🔁 4

💬 1

📌 0

“Wanting to be Understood” - Could a deep human need to be understood be the crucial evolutionary 'gadget' bootstrapping cooperation, culture, and language? We explore this idea using AI simulations in our new paper: arxiv.org/abs/2504.06611 🧠

#Evolution #Cognition #AI #GoogleDeepMind

11.04.2025 23:33 —

👍 15

🔁 3

💬 1

📌 0

New paper from our team @GoogleDeepMind!

🚨 We've put LLMs to the test as writing co-pilots – how good are they really at helping us write? LLMs are increasingly used for open-ended tasks like writing assistance, but how do we assess their effectiveness? 🤔

arxiv.org/pdf/2503.19711

02.04.2025 09:51 —

👍 20

🔁 8

💬 1

📌 1

🚨 I’m hosting a Student Researcher @GoogleDeepMind!

Join us on the Autonomous Assistants team (led by @egrefen.bsky.social) to explore multi-agent communication: how agents learn to interact, coordinate, and solve tasks together.

DM me for details!

02.04.2025 09:57 —

👍 14

🔁 3

💬 1

📌 0

🥁Introducing Gemini 2.5, our most intelligent model with impressive capabilities in advanced reasoning and coding.

Now integrating thinking capabilities, 2.5 Pro Experimental is our most performant Gemini model yet. It’s #1 on the LM Arena leaderboard. 🥇

25.03.2025 17:25 —

👍 215

🔁 65

💬 34

📌 11

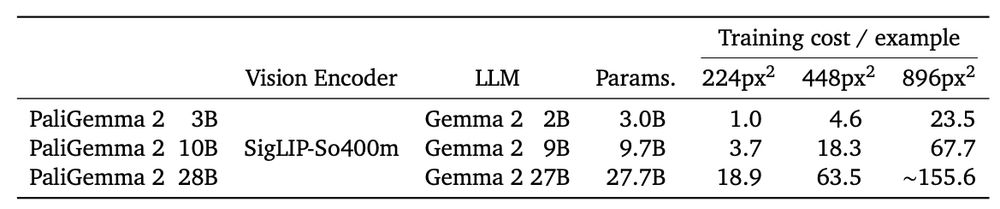

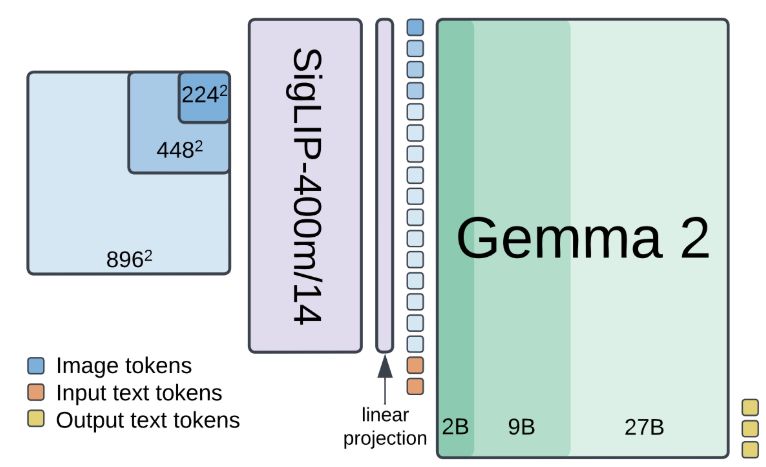

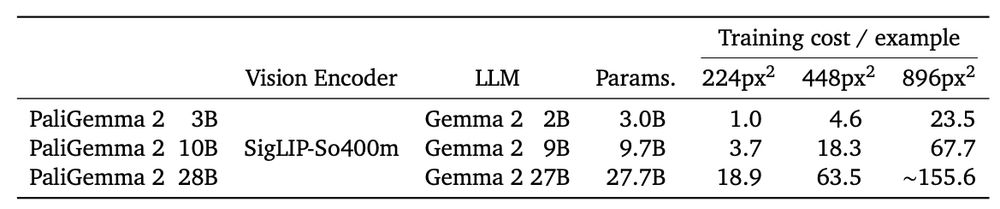

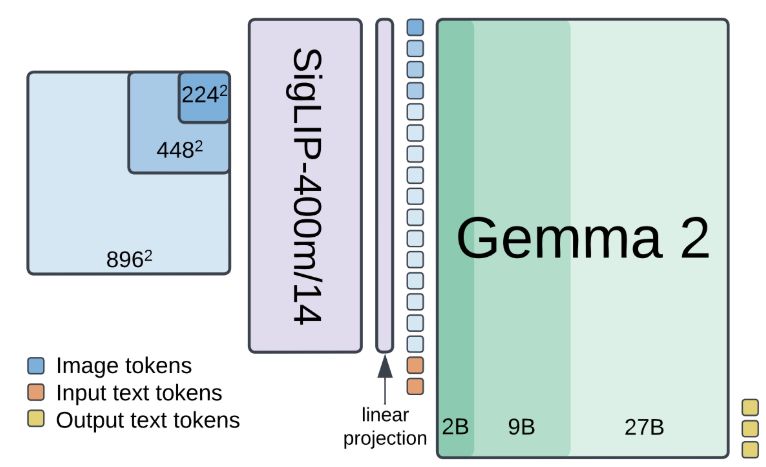

Looking for a small or medium sized VLM? PaliGemma 2 spans more than 150x of compute!

Not sure yet if you want to invest the time 🪄finetuning🪄 on your data? Give it a try with our ready-to-use "mix" checkpoints:

🤗 huggingface.co/blog/paligem...

🎤 developers.googleblog.com/en/introduci...

19.02.2025 17:47 —

👍 19

🔁 7

💬 0

📌 0

How do we ensure humans can still effectively oversee increasingly powerful AI systems? In our blog, we argue that achieving Human-AI complementarity is an underexplored yet vital piece of this puzzle! It’s hard, but we achieved it.

🧵(1/10)

24.12.2024 00:00 —

👍 1

🔁 1

💬 1

📌 1

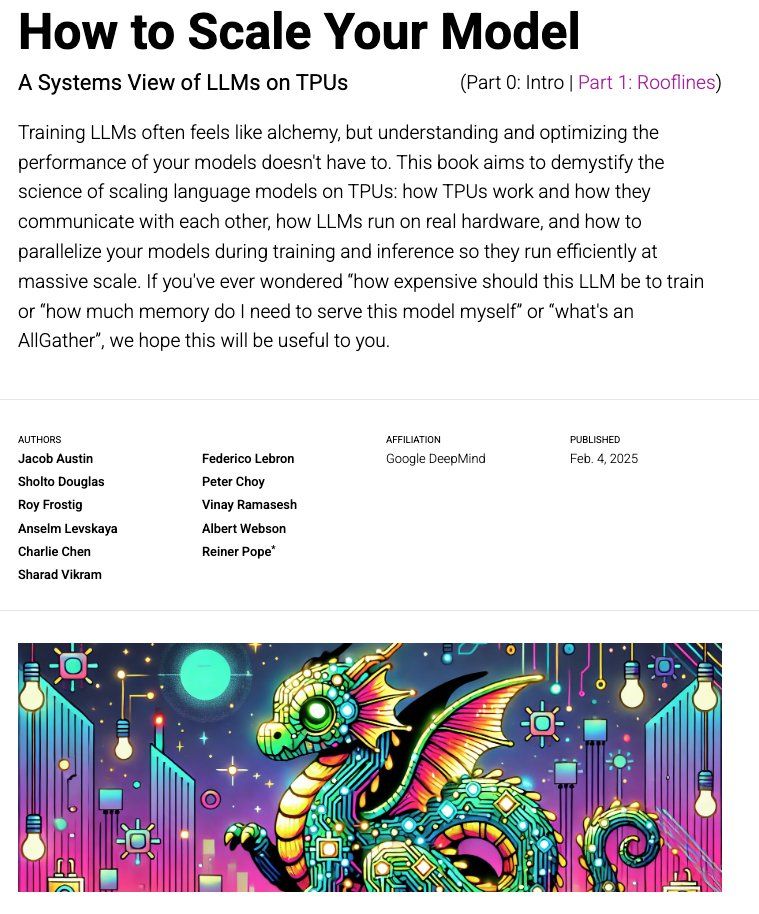

Making LLMs run efficiently can feel scary, but scaling isn’t magic, it’s math! We wanted to demystify the “systems view” of LLMs and wrote a little textbook called “How To Scale Your Model” which we’re releasing today. 1/n

04.02.2025 18:54 —

👍 94

🔁 28

💬 3

📌 8

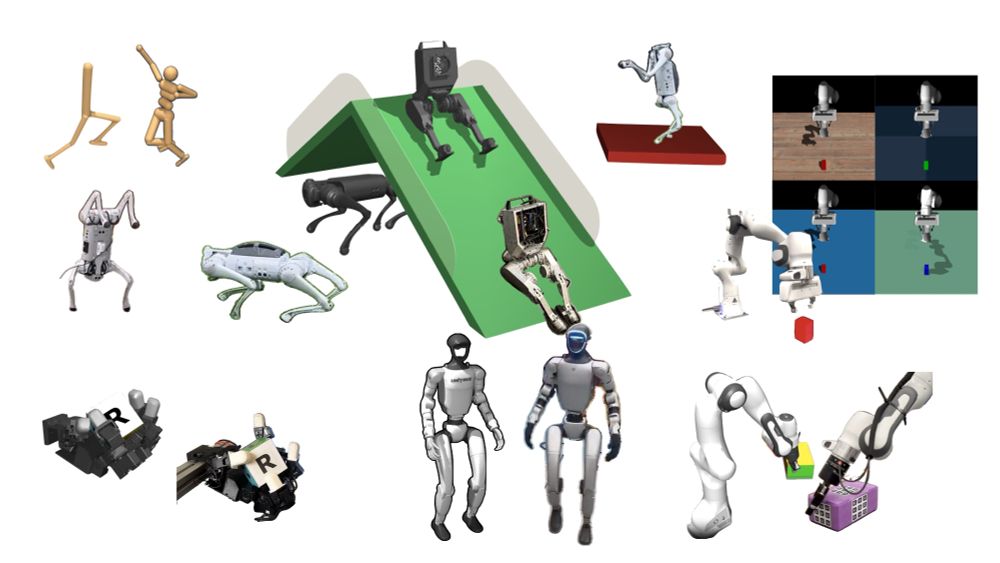

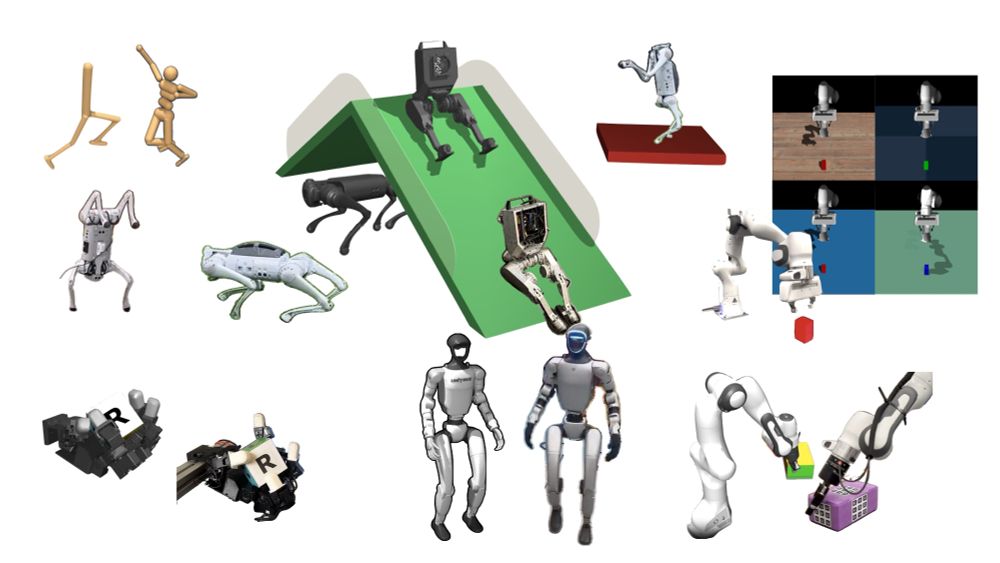

MuJoCo Playground

An open-source framework for GPU-accelerated robot learning and sim-to-real transfer

Introducing playground.mujoco.org

Combining MuJoCo’s rich and thriving ecosystem, massively parallel GPU-accelerated simulation, and real-world results across a diverse range of robot platforms: quadrupeds, humanoids, dexterous hands, and arms.

Get started today: pip install playground

16.01.2025 20:48 —

👍 74

🔁 20

💬 1

📌 3

Apptronik

Apptronik Partners with Google DeepMind.

apptronik.com/news-collect...

21.12.2024 12:33 —

👍 6

🔁 0

💬 0

📌 0

Would it make sense to add this account (for MuJoCo announcements etc.)?

19.12.2024 09:12 —

👍 3

🔁 0

💬 0

📌 0

I'd be happy to be included (MuJoCo news etc.)

17.12.2024 13:57 —

👍 1

🔁 0

💬 0

📌 0

New paper! We show that by using keypoint-based image representation, robot policies become robust to different object types and background changes.

We call this method Prescriptive Point Priors for robot Policies, or P3-PO for short. The full project is here: point-priors.github.io

10.12.2024 20:32 —

👍 37

🔁 7

💬 1

📌 2

HOT 🔥 fastest, most precise, and most capable hand control setup ever...

Less than $450 and fully open-source 🤯

by @huggingface, @therobotstudio, @NepYope

This tendon-driven technology will disrupt robotics! Retweet to accelerate its democratization 🚀

A thread 🧵

15.12.2024 08:22 —

👍 73

🔁 27

💬 3

📌 2

Check out Motivo, a behavioral foundation model for humanoid control by FAIR.

It's a one-of-its-kind unsupervised RL project, and it comes with a demo that is SO fun to play with!

metamotivo.metademolab.com

(for the record, they use compile and cudagraphs -> github.com/facebookrese...)

14.12.2024 00:44 —

👍 30

🔁 5

💬 1

📌 0

🚀🚀PaliGemma 2 is our updated and improved PaliGemma release using the Gemma 2 models and providing new pre-trained checkpoints for the full cross product of {224px,448px,896px} resolutions and {3B,10B,28B} model sizes.

1/7
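To make the "full cross product" concrete, the sketch below enumerates the nine size and resolution combinations the post describes. The label format is purely illustrative and is not the actual checkpoint naming scheme.

```python
from itertools import product

# Values taken from the post; the label format below is hypothetical.
resolutions = [224, 448, 896]
sizes = ["3B", "10B", "28B"]

variants = [f"{size}-{res}px" for size, res in product(sizes, resolutions)]

for name in variants:
    print(name)
print(f"{len(variants)} combinations in total")
```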

05.12.2024 18:16 —

👍 69

🔁 21

💬 1

📌 5

AI for science could be more impactful than chatbots. It is already helping win Nobel prizes and accelerating drug development and materials discovery.

Today we published an essay about it: why it matters, how it’s happening and its implications. Here is a summary from an econ / social sci lens.

26.11.2024 10:39 —

👍 79

🔁 30

💬 2

📌 7

I'm in a starter pack!

go.bsky.app/GZ4hZzu

28.10.2024 12:20 —

👍 7

🔁 1

💬 1

📌 0

I want to take care of everybody and I'm furious at the people who don't want to take care of everybody but I need to not step off the everybody plate just because they suck

30.09.2024 17:41 —

👍 18

🔁 4

💬 1

📌 3

"A plausible explanation of this is that persons who have achieved or enjoy high social status are less willing to entertain the possibility that a disaster could occur which would spoil everything."

11.09.2024 14:09 —

👍 34

🔁 8

💬 2

📌 0