A review of the proceedings from four major computer-science conferences showed that none from 2021, and all from 2025, had fake citations.

arxiv.org/abs/2602.058...

#AI #LLMs #Hallucinations #Misconduct #ScholComm

Listen to this. Not a penny more for this. Abolish and prosecute anyone who had anything to do with it.

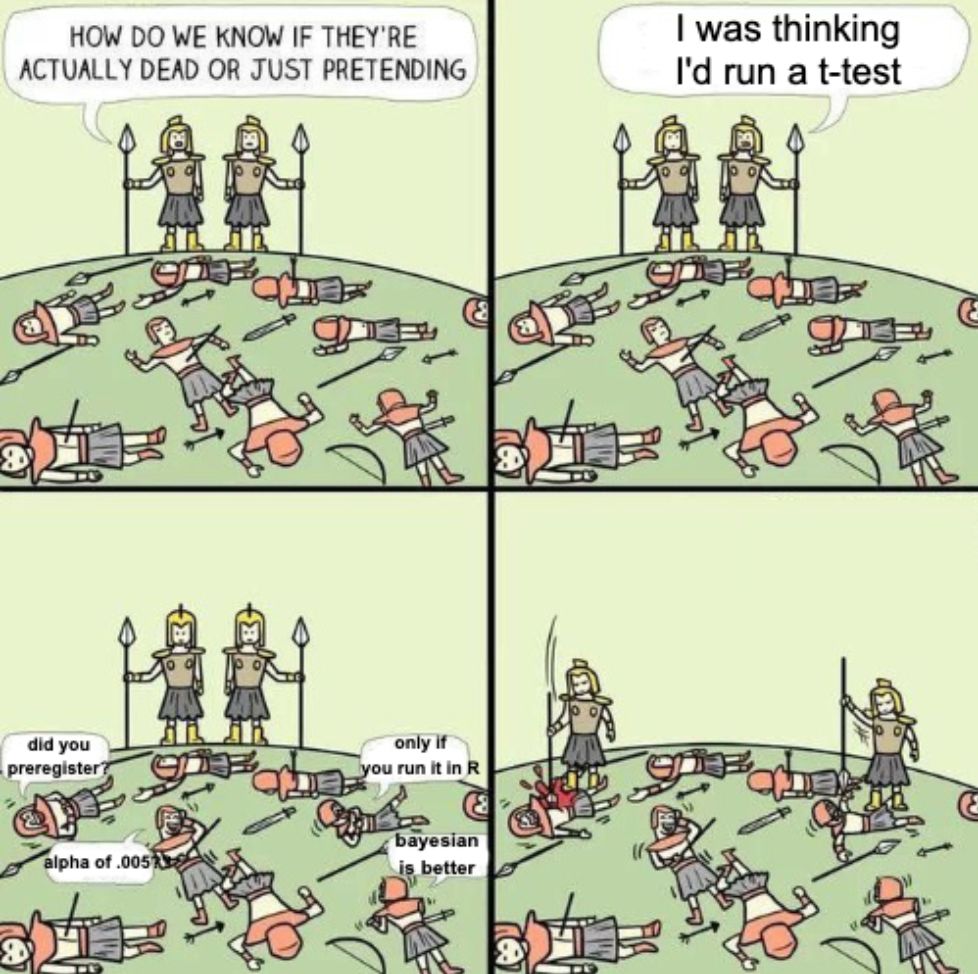

10.02.2026 15:41 — 👍 15402 🔁 5890 💬 237 📌 161

Wow, this scoring chaos seems to be an extreme case of what I say about many ad hoc analyses: no derivation of method from a clear scientific theory, no assessment of statistical properties, and decades pass before someone notices. This happens in biology too, so let’s not pick on psychology only.

02.02.2026 11:55 — 👍 36 🔁 12 💬 2 📌 0

who did this

05.11.2023 07:29 — 👍 284 🔁 78 💬 4 📌 3

Associate or full position in energy economics at AU!

Link in comment

Thanks! I'll give it another go.

14.01.2026 19:47 — 👍 2 🔁 0 💬 0 📌 0

This looks very cool. I downloaded it, but then I needed something called Azul Zulu JRE with JavaFX, which I had never heard of before. I downloaded that, and then it wouldn't install.

If you want more people to use this, make a browser app so anyone can just click a link, upload their thing and then go.

Multiple entities over multiple time periods, e.g., same people across years. So at least two periods as long as the same people are observed in both periods.

06.01.2026 17:30 — 👍 1 🔁 0 💬 0 📌 0

(Also, icyi, here are the 42 papers citing our non-existent paper, including a "meta-analysis" - often called the evidence "gold standard" - of "LLM effects" in education 🤮

scholar.google.com.vn/scholar?star...)

Keeping this at hand in case I need to point to it and tap

12.12.2025 16:39 — 👍 105 🔁 34 💬 5 📌 1

Will you incorporate LLMs and AI prompting into the course in the future? No. Why won’t you incorporate LLMs and AI prompting into the course? These tools are useful for coding (see this for my personal take on this). However, they’re only useful if you know what you’re doing first. If you skip the learning-the-process-of-writing-code step and just copy/paste output from ChatGPT, you will not learn. You cannot learn. You cannot improve. You will not understand the code.

In that post, it warns that you cannot use it as a beginner: …to use Databot effectively and safely, you still need the skills of a data scientist: background and domain knowledge, data analysis expertise, and coding ability. There is no LLM-based shortcut to those skills. You cannot LLM your way into domain knowledge, data analysis expertise, or coding ability. The only way to gain domain knowledge, data analysis expertise, and coding ability is to struggle. To get errors. To google those errors. To look over the documentation. To copy/paste your own code and adapt it for different purposes. To explore messy datasets. To struggle to clean those datasets. To spend an hour looking for a missing comma. This isn’t a form of programming hazing, like “I had to walk to school uphill both ways in the snow and now you must too.” It’s the actual process of learning and growing and developing and improving. You’ve gotta struggle.

This Tumblr post puts it well (it’s about art specifically, but it applies to coding and data analysis too): Contrary to popular belief the biggest beginner’s roadblock to art isn’t even technical skill it’s frustration tolerance, especially in the age of social media. It hurts and the frustration is endless but you must build the frustration tolerance equivalent to a roach’s capacity to survive a nuclear explosion. That’s how you build on the technical skill. Throw that “won’t even start because I’m afraid it won’t be perfect” shit out the window. Just do it. Just start. Good luck. (The original post has disappeared, but here’s a reblog.) It’s hard, but struggling is the only way to learn anything.

You might not enjoy code as much as Williams does (or I do), but there’s still value in maintaining coding skills as you improve and learn more. You don’t want your skills to atrophy. As I discuss here, when I do use LLMs for coding-related tasks, I purposely throw as much friction into the process as possible: To avoid falling into over-reliance on LLM-assisted code help, I add as much friction into my workflow as possible. I only use GitHub Copilot and Claude in the browser, not through the chat sidebar in Positron or Visual Studio Code. I treat the code it generates like random answers from StackOverflow or blog posts and generally rewrite it completely. I disable the inline LLM-based autocomplete in text editors. For routine tasks like generating {roxygen2} documentation scaffolding for functions, I use the {chores} package, which requires a bunch of pointing and clicking to use. Even though I use Positron, I purposely do not use either Positron Assistant or Databot. I have them disabled. So in the end, for pedagogical reasons, I don’t foresee incorporating LLMs into this class. I’m pedagogically opposed to it. I’m facing all sorts of external pressure to do it, but I’m resisting. You’ve got to learn first.

Some closing thoughts for my students this semester on LLMs and learning #rstats datavizf25.classes.andrewheiss.com/news/2025-12...

09.12.2025 20:17 — 👍 331 🔁 99 💬 14 📌 31

🎯

statsepi.substack.com/p/the-review...

“No, we’ve all accepted that the goal is to publish anything and everything and see what sticks. More students. More “collaborations”. So many papers that no human can possibly be paying very much attention to any of it. And we all know where the incentives lie.”

07.12.2025 07:12 — 👍 24 🔁 5 💬 0 📌 0

Some comments on PubPeer: pubpeer.com/publications...

27.11.2025 20:09 — 👍 65 🔁 3 💬 2 📌 0

Infographic with AI slop published in Nature Scientific Reports

"Runctitiononal features"? "Medical fymblal"? "1 Tol Line storee"? This gets worse the longer you look at it. But it's got to be good, because it was published in Nature Scientific Reports last week: www.nature.com/articles/s41... h/t @asa.tsbalans.se

27.11.2025 09:30 — 👍 2308 🔁 744 💬 206 📌 474

A Quarto document in RStudio with the author field set to "Andrew Heiss"

hahahaha just got an email from someone who was using Claude to generate a boilerplate #QuartoPub document and the LLM *used my name* as the author. The computers are literally trying to be me now 😂🤣🙃🫠

21.11.2025 13:38 — 👍 339 🔁 29 💬 15 📌 2

Which tools are you using for making them?

20.11.2025 18:23 — 👍 2 🔁 0 💬 1 📌 0

I know that at this point it's a subplot in the Epstein files drama, but I feel compelled to point out, once again, that Larry Summers HAS NO BUSINESS teaching students at ANY university ever again!

My latest cries into the abyss, in @thenation.com

www.thenation.com/article/soci...

A paper critiquing post-publication peer review has numerous made-up references, including a @nature.com article falsely attributed to our Ivan Oransky.

link.springer.com/article/10.1...

I've used your book several times for a graduate course on Bayesian statistics for business and data science students, with great success. If you divide it into beginner and advanced parts, I imagine the first half would fit well in a bachelor's-level course.

17.11.2025 07:23 — 👍 2 🔁 0 💬 0 📌 0

NEW: Epstein survivors release the most powerful PSA I have ever seen.

Make this go viral so every member of the House of Representatives sees it.

TU/e has gained a new research centre: META/e. Daniël Lakens and Krist Vaesen were among the founders of this knowledge hub for metascience—research aimed at improving the practice of science itself. “We want to be a home for every researcher who occasionally wonders: what are we even doing?”

13.11.2025 14:50 — 👍 26 🔁 13 💬 0 📌 1

Yes!!

01.11.2025 07:04 — 👍 4 🔁 0 💬 1 📌 0

New blog post on Gelman's recent claim that Type S and M errors are intended as a 'rhetorical tool', and on whether I was wrong to believe they were recommended for more routine use, as assumed in our recent preprint criticizing the idea of Type S and M errors. daniellakens.blogspot.com/2025/09/type...

28.09.2025 05:22 — 👍 10 🔁 7 💬 0 📌 1
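For readers unfamiliar with the terms: a Type S (sign) error is a statistically significant estimate with the wrong sign, and a Type M (magnitude) error is the factor by which significant estimates exaggerate the true effect. The sketch below estimates both by simulation. It assumes a normally distributed estimate, a two-sided z-test at alpha = 0.05, and a hypothetical true effect and standard error. It illustrates the definitions only and is not the procedure used in the preprint or the blog post.

```python
import random
import statistics
from statistics import NormalDist


def type_s_m(true_effect, se, alpha=0.05, n_sims=100_000, seed=1):
    """Simulate power, Type S rate, and Type M (exaggeration) ratio.

    Assumes (hypothetically) that the estimate is normal around
    true_effect with standard deviation se, tested with a two-sided z-test.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    # Keep only the estimates that would be declared significant
    significant = [
        est
        for est in (rng.gauss(true_effect, se) for _ in range(n_sims))
        if abs(est / se) > z_crit
    ]
    if not significant:
        raise ValueError("no significant estimates; increase n_sims")
    power = len(significant) / n_sims
    # Type S: share of significant estimates whose sign is wrong
    type_s = sum(1 for e in significant if e * true_effect < 0) / len(significant)
    # Type M: mean absolute significant estimate relative to the true effect
    type_m = statistics.mean(abs(e) for e in significant) / abs(true_effect)
    return power, type_s, type_m


power, type_s, type_m = type_s_m(true_effect=0.1, se=0.1)
print(f"power={power:.3f}  type S={type_s:.4f}  type M={type_m:.2f}")
```

Consider a hypothetical true effect of 0.1 with a standard error of 0.1. Power comes out near 17%, and the significant estimates overstate the effect by roughly 2.5 times on average.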

We present our new preprint titled "Large Language Model Hacking: Quantifying the Hidden Risks of Using LLMs for Text Annotation". We quantify LLM hacking risk through systematic replication of 37 diverse computational social science annotation tasks. For these tasks, we use a combined set of 2,361 realistic hypotheses that researchers might test using these annotations. Then, we collect 13 million LLM annotations across plausible LLM configurations. These annotations feed into 1.4 million regressions testing the hypotheses. For a hypothesis with no true effect (ground truth $p > 0.05$), different LLM configurations yield conflicting conclusions. Checkmarks indicate correct statistical conclusions matching ground truth; crosses indicate LLM hacking, i.e., incorrect conclusions due to annotation errors. Across all experiments, LLM hacking occurs in 31-50% of cases, even with highly capable models. Since minor configuration changes can flip scientific conclusions from correct to incorrect, LLM hacking can be exploited to present anything as statistically significant.

🚨 New paper alert 🚨 Using LLMs as data annotators, you can produce any scientific result you want. We call this **LLM Hacking**.

Paper: arxiv.org/pdf/2509.08825
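The basic mechanism can be shown with a toy example. If annotation errors are correlated with the variable being tested, a test on the annotations can come out significant even when the ground truth shows no difference. This is a minimal sketch under stated assumptions: a hypothetical annotator that is accurate for group A but adds false positives for group B, evaluated with a two-proportion z-test. It does not reproduce the paper's tasks, LLM configurations, or regressions.

```python
import math
import random
from statistics import NormalDist


def two_prop_z_test(x1, n1, x2, n2):
    """Two-sided two-proportion z-test with a pooled standard error."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p2 - p1) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate(n=5000, base_rate=0.3, fp_rate_b=0.10, seed=7):
    """Return (p-value on ground truth, p-value on noisy annotations)."""
    rng = random.Random(seed)
    k = round(n * base_rate)
    # Ground truth: the same share of positives in both groups (no true effect)
    truth = [1] * k + [0] * (n - k)
    # Hypothetical annotator: exact for group A, but in group B it labels
    # true negatives as positive with probability fp_rate_b
    annot_a = list(truth)
    annot_b = [1 if (y == 1 or rng.random() < fp_rate_b) else 0 for y in truth]
    p_truth = two_prop_z_test(sum(truth), n, sum(truth), n)
    p_annot = two_prop_z_test(sum(annot_a), n, sum(annot_b), n)
    return p_truth, p_annot


p_truth, p_annot = simulate()
print(f"ground truth p = {p_truth:.3f}, annotated p = {p_annot:.2e}")
```

The ground-truth comparison finds nothing (p = 1), while the annotated data show a highly significant group difference that comes entirely from the annotator's group-dependent errors.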

What corresponds to the Z-test in this analogy? If the P-curve is the W-test, then what is the Z-test?

25.09.2025 13:30 — 👍 0 🔁 0 💬 1 📌 0

More examples of faked institutional email addresses from @deevybee.bsky.social here deevybee.blogspot.com/2022/10/what...

23.09.2025 14:24 — 👍 12 🔁 2 💬 2 📌 1

9 Equivalence Testing and Interval Hypotheses – Improving Your Statistical Inferences share.google/tZRu9HekIBdY...

16.09.2025 21:02 — 👍 3 🔁 0 💬 0 📌 0

Absolutely! I'm planning on getting MET into the stats curriculum in our undergrad business administration program. My favorite resource is Lakens' online book.

16.09.2025 21:01 — 👍 1 🔁 0 💬 1 📌 0

If it makes sense to test a hypothesis, do minimum effect testing and/or set alpha as a function of sample size.

16.09.2025 16:51 — 👍 1 🔁 0 💬 1 📌 0
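Equivalence testing and minimum effect testing both replace the point null of zero with an interval bounded by a smallest effect size of interest. Below is a minimal sketch of both. It assumes a normally distributed estimate with a known standard error and uses a z-approximation, whereas t-tests are more common in practice. The bound `sesoi` is a hypothetical, user-chosen value.

```python
from statistics import NormalDist


def tost(estimate, se, sesoi, alpha=0.05):
    """Equivalence test via two one-sided z-tests against -sesoi and +sesoi.

    Returns the larger one-sided p-value and whether equivalence is
    declared (both one-sided tests significant at alpha).
    """
    z_lower = (estimate + sesoi) / se  # H0: effect <= -sesoi
    z_upper = (estimate - sesoi) / se  # H0: effect >= +sesoi
    p_lower = 1 - NormalDist().cdf(z_lower)
    p_upper = NormalDist().cdf(z_upper)
    p = max(p_lower, p_upper)
    return p, p < alpha


def minimum_effect_test(estimate, se, sesoi, alpha=0.05):
    """One-sided z-test of H0: effect <= sesoi against H1: effect > sesoi."""
    z = (estimate - sesoi) / se
    p = 1 - NormalDist().cdf(z)
    return p, p < alpha


print(tost(0.02, 0.05, 0.2))
print(minimum_effect_test(0.25, 0.1, 0.2))
```

Take an estimate of 0.25 with a standard error of 0.1. It is significantly different from zero (z = 2.5). However, `minimum_effect_test(0.25, 0.1, 0.2)` gives p ≈ 0.31, so it does not show an effect larger than the hypothetical minimum of 0.2.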