Using feature importance to interpret your models?

This paper might be of interest to you. Papers by @gunnark.bsky.social are always worth checking out.

Read more in my latest blog post: mindfulmodeler.substack.com/p/whos-reall...

08.04.2025 12:56

My stock portfolio is deep in the red, and tariffs by the Trump admin might be the cause. Could an LLM have been used to calculate them? It made me rethink how LLMs shape decisions, from big, global-economy-wrecking ones to everyday ones.

08.04.2025 12:56

SHAP interpretations depend on background data — change the data, change the explanation. A critical but often overlooked issue in model interpretability.
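To see the effect concretely, here is a minimal sketch: exact interventional Shapley values, computed by brute force for a hypothetical linear model f(x) = 2·x0 + 3·x1. The value function averages predictions over the background rows, so swapping the background dataset changes the attributions for the very same instance. The model, data, and helper names are illustrative assumptions; the shap package uses its own (faster) estimators.

```python
from itertools import combinations
from math import factorial

def model(row):
    # Hypothetical linear model: f(x) = 2*x0 + 3*x1
    return 2.0 * row[0] + 3.0 * row[1]

def value(x, subset, background):
    # Interventional value function: features in `subset` come from x,
    # all others from each background row; predictions are averaged.
    total = 0.0
    for z in background:
        mixed = [x[i] if i in subset else z[i] for i in range(len(x))]
        total += model(mixed)
    return total / len(background)

def shapley_values(x, background):
    # Exact Shapley values by enumerating every coalition of the other features
    n = len(x)
    phi = [0.0] * n
    for j in range(n):
        others = [i for i in range(n) if i != j]
        for size in range(n):
            for subset in combinations(others, size):
                s = set(subset)
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                phi[j] += weight * (value(x, s | {j}, background) - value(x, s, background))
    return phi

x = [1.0, 1.0]
phi_a = shapley_values(x, background=[[0.0, 0.0], [0.0, 0.0]])
phi_b = shapley_values(x, background=[[1.0, 0.0], [1.0, 2.0]])
```

For a linear model this reduces to w_j · (x_j − mean of background column j). The second background has column means equal to the explained instance, so both attributions drop to zero, although the model and the instance are unchanged.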

Read more:

🎧 Listen here: www.buzzsprout.com/2186686/epis...

Part 2 drops soon—stay tuned!

I recently joined The AI Fundamentalists with my co-author Timo Freiesleben to discuss our book Supervised Machine Learning for Science. We explored how scientists can leverage ML while maintaining rigor and embedding domain knowledge.

28.03.2025 09:23

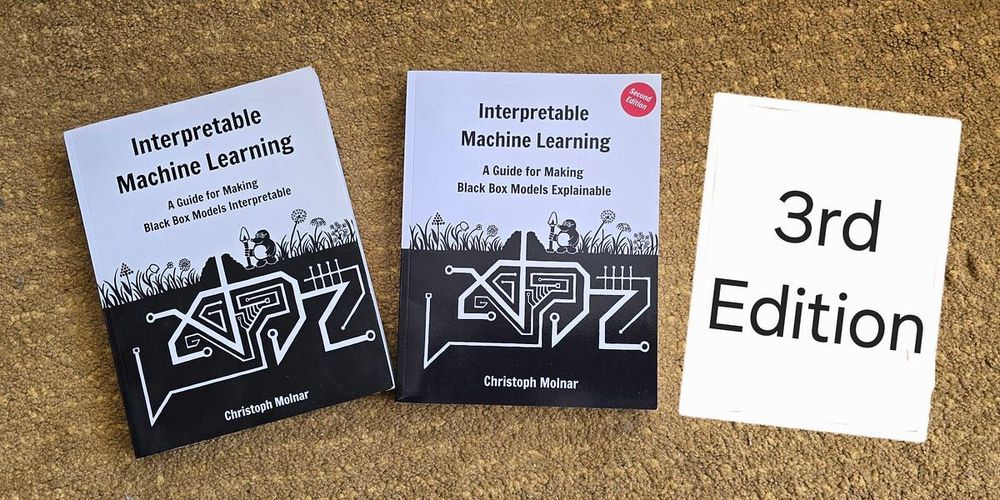

The 3rd edition of Interpretable Machine Learning is out! 🎉 Major cleanup, better examples, and new chapters on Data & Models, Interpretability Goals, Ceteris Paribus, and LOFO Importance.

The book remains free to read for everyone. But you can also buy the ebook or paperback.
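LOFO (leave one feature out) importance retrains the model without each feature in turn and measures how much the held-out loss increases. A minimal sketch, assuming a hypothetical `fit_and_score` callback that would retrain your model on the given features and return a held-out loss; a toy function stands in for it so the example runs.

```python
from typing import Callable, Dict, List

def lofo_importance(features: List[str],
                    fit_and_score: Callable[[List[str]], float]) -> Dict[str, float]:
    """Loss increase when each feature is dropped and the model is refit."""
    baseline = fit_and_score(features)
    return {f: fit_and_score([g for g in features if g != f]) - baseline
            for f in features}

# Hypothetical stand-in for "retrain on these features, return held-out loss"
def toy_fit_and_score(used: List[str]) -> float:
    return 10.0 - 3.0 * ("a" in used) - 1.0 * ("b" in used)

importance = lofo_importance(["a", "b", "c"], toy_fit_and_score)
```

Because the model is refit, it can compensate with correlated features, so LOFO measures what a feature adds given all the others, at the cost of one retraining per feature.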

Has anyone seen Counterfactual Explanations for machine learning models somewhere in the wild?

They are often discussed in research papers, but I have yet to see them used in an actual process or product.

I've found the Quarto documentation to be really good, especially the search: quarto.org

12.03.2025 10:11

It's still hard for me to predict when it fails. For example, I told it to simply check the placement of citations in a Markdown file, which should be doable with a regex. Claude failed. But a similar task worked out the other day.

12.03.2025 10:10
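For a check like the citation one, a regex really can be enough. A minimal sketch, assuming Pandoc/Quarto citation syntax (`[@key]`) and assuming the rule to enforce is "citation before the period, not after it". The rule, the function name, and the example text are hypothetical.

```python
import re
from typing import List, Tuple

# A period followed (optionally after spaces) by a citation such as
# "[@key]" or "[@key1; @key2]", i.e. a citation placed after the sentence ends
MISPLACED = re.compile(r"\.\s*(\[@[^\]]+\])")

def find_misplaced_citations(markdown: str) -> List[Tuple[int, str]]:
    """Return (line number, citation) for each citation that follows a period."""
    problems = []
    for lineno, line in enumerate(markdown.splitlines(), start=1):
        for match in MISPLACED.finditer(line):
            problems.append((lineno, match.group(1)))
    return problems

text = "Trees are interpretable [@breiman2001].\nSHAP is popular. [@lundberg2017]\n"
issues = find_misplaced_citations(text)
```

This is a heuristic: abbreviations such as "e.g. [@key]" would be flagged too, so a real check might need an allow-list.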

Trying Claude Code for some tasks. Paradoxically, it's most expensive when it doesn't work: it fails, then tries again a couple of times, burning through tokens.

So sometimes it's 20 cents for saving you 20 minutes of work.

Other times it's $1 for wasting 10 minutes.

Only waiting for the print proof, but if it looks good, I'll publish the third edition of Interpretable Machine Learning next week.

As always, it was more work than anticipated, especially moving the entire book project from Bookdown to Quarto.

Can an office game outperform machine learning?

My most recent post on Mindful Modeler dives into the wisdom of the crowds and prediction markets.
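The simplest form of the wisdom of the crowd is to average everyone's guesses. By the triangle inequality, the absolute error of the averaged guess is never larger than the average individual absolute error. A small sketch with made-up guesses:

```python
from typing import List, Tuple

def crowd_vs_individuals(guesses: List[float], truth: float) -> Tuple[float, float]:
    """Absolute error of the averaged guess vs. the average individual absolute error."""
    crowd_guess = sum(guesses) / len(guesses)
    crowd_error = abs(crowd_guess - truth)
    mean_individual_error = sum(abs(g - truth) for g in guesses) / len(guesses)
    return crowd_error, mean_individual_error

# Hypothetical office guesses for a quantity whose true value is 100
crowd_err, indiv_err = crowd_vs_individuals([80.0, 120.0, 95.0, 110.0], truth=100.0)
```

Here the crowd is off by 1.25 while the typical individual is off by 13.75, because the over- and under-estimates largely cancel.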

Read the full story here:

5/ It was stressful, but I don’t regret it. I learned a lot and definitely feel validated in my skills again.

Full story & solution details: https://buff.ly/4gHZYHD

4/ Writing Supervised ML for Science at the same time was a huge plus—competition & book writing fed into each other (e.g., uncertainty quantification).

04.02.2025 13:18

3/ One key insight: SHAP’s reference data matters! I used historical forecasts as the reference data for interpretability. I also combined SHAP with ceteris paribus profiles for sensitivity analysis.

04.02.2025 13:18
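A ceteris paribus profile varies one feature of a single instance over a grid while holding all other features fixed, then records the predictions. A minimal sketch, assuming a hypothetical prediction function and made-up feature names (`snowpack`, `temp`); this is not the code from the competition solution.

```python
from typing import Callable, Dict, List, Tuple

Row = Dict[str, float]

def ceteris_paribus(predict: Callable[[Row], float], instance: Row,
                    feature: str, grid: List[float]) -> List[Tuple[float, float]]:
    """Return (feature value, prediction) pairs, changing only `feature`."""
    profile = []
    for value in grid:
        modified = dict(instance)   # copy so the original instance stays untouched
        modified[feature] = value   # every other feature keeps its value
        profile.append((value, predict(modified)))
    return profile

# Hypothetical model, for illustration only
def toy_model(row: Row) -> float:
    return 10 + 2 * row["snowpack"] - row["temp"]

profile = ceteris_paribus(toy_model, {"snowpack": 5.0, "temp": 3.0},
                          "snowpack", [0.0, 5.0, 10.0])
```

Plotted next to a SHAP waterfall for the same instance, the profile shows not only how much a feature contributed but also how the prediction would react if that feature changed.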

2/ My approach:

✅ XGBoost ensemble, quantile loss

✅ SHAP for explainability + custom waterfall plots + ceteris paribus plots

✅ Conformal prediction to fix interval coverage

✅ Auto-generated reports with Quarto
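One common way to fix interval coverage with conformal prediction is conformalized quantile regression (CQR); whether the solution used exactly this variant is an assumption here. The sketch takes lower and upper quantile predictions (for example, from quantile-loss models) on a held-out calibration set and computes a margin Q by which to widen every interval.

```python
import math
from typing import Sequence

def cqr_adjustment(lower: Sequence[float], upper: Sequence[float],
                   y: Sequence[float], alpha: float = 0.1) -> float:
    """Split conformalized quantile regression: return the margin Q.

    lower/upper: predicted quantiles on a held-out calibration set.
    y: observed targets on that set. Target coverage is 1 - alpha.
    """
    # Conformity score: how far y falls outside its interval (negative if inside)
    scores = sorted(max(lo - yi, yi - hi) for lo, hi, yi in zip(lower, upper, y))
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))  # rank of the finite-sample-corrected quantile
    if k > n:
        return math.inf  # too few calibration points for this alpha
    return scores[k - 1]

# Made-up calibration data; new intervals become [lower - q, upper + q]
q = cqr_adjustment(lower=[0.0] * 6, upper=[1.0] * 6,
                   y=[0.5, 1.2, -0.3, 0.9, 2.0, 0.1], alpha=0.3)
```

If the raw quantile intervals were too wide, Q comes out negative and the intervals shrink; if they undercover, Q is positive and they widen.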

1/ Years ago, I went full-time into writing & cut ML practice. At some point, I felt like an impostor writing about ML but no longer practicing. This competition about water supply forecasting on DrivenData (500k prize pool) was a way back in.

04.02.2025 13:18

A year ago, I took a risk & spent quite some time on an ML competition. It paid off: I won 4th place overall & 1st in explainability!

Here's a summary of the journey, challenges, & key insights from my winning solution (water supply forecasting)

Double button meme. A guy (labeled OpenAI) presses two buttons simultaneously. The first says "Training on others' work is okay"; the other says it's not okay.

OpenAI right now

29.01.2025 09:51

"Deprecated" was maybe the wrong word. It's no longer the default in the shap package. There are faster alternatives.

22.01.2025 12:39

SHAP and LIME are only connected when we represent features differently for LIME and use a different weight function.

My take: while interesting, this connection can be misleading, because SHAP and the original LIME are very different, as you also say.
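The "different weight function" is the Shapley kernel from the original SHAP paper: KernelSHAP fits a weighted linear model on coalitions, like LIME, but weights each coalition by this kernel instead of by distance to the explained instance. A short sketch of the weight for a coalition with s of M features present; the function name is illustrative.

```python
from math import comb

def shapley_kernel_weight(num_features: int, coalition_size: int) -> float:
    """Shapley kernel weight for a coalition with `coalition_size` of `num_features` present."""
    M, s = num_features, coalition_size
    if s == 0 or s == M:
        # Infinite weight: in practice enforced as constraints
        # (base value for the empty coalition, full prediction for the full one)
        return float("inf")
    return (M - 1) / (comb(M, s) * s * (M - s))

weights = [shapley_kernel_weight(4, s) for s in range(5)]
```

The weights form a U shape: very small and very large coalitions get the most weight, mid-sized ones the least.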

The original SHAP paper has been cited over 30k times.

The paper showed that attribution methods, like LIME and LRP, compute Shapley values (with some adaptations).

The paper also introduces estimation methods for Shapley values, like KernelSHAP, which today is deprecated.

Not planned so far

21.01.2025 16:20

To this day, the Interpretable Machine Learning book is still my most impactful project. But as time went on, I dreaded working on it. Fortunately, I found the motivation again and I'm working on the 3rd edition. 😁

Read more here:

The "They don't know" meme. At a party, an awkward guy in the corner thinks: "They don't know I work on the 3rd edition of Interpretable Machine Learning." Everyone else is having fun, labeled "Everyone else talking about generative AI".

How I sometimes feel working on "traditional" machine learning topics instead of generative AI stuff 😂

Okay, that makes a lot of sense. Thanks!

09.01.2025 10:05

I love that visualization. At the same time, I'm confused about why souping works.

09.01.2025 08:23

While the results on ARC are impressive, the ARC problems are abstract games in little grid worlds. And it remains to be seen whether this translates into "general" intelligence.

09.01.2025 07:23

Great way to frame it

17.12.2024 11:14