Our new work on continuous chain of thought.

10.12.2024 16:51 —

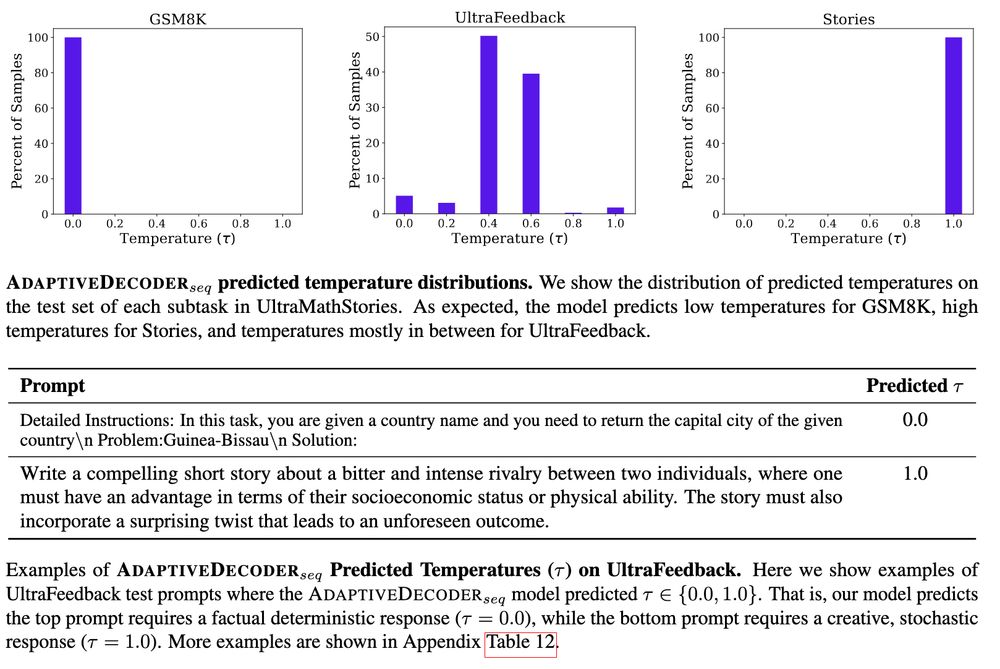

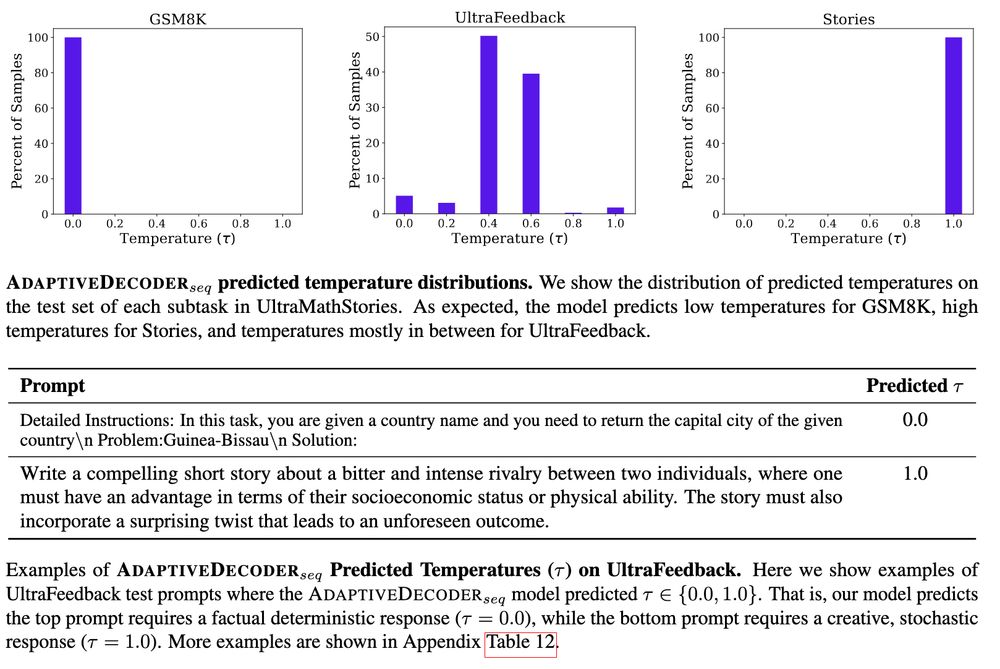

Analysis: AD picks a high temp for creative prompts & a low temp for fact-seeking prompts. This behavior is learned automatically during training, not hand-set.

Our methods AD & Latent Pref Optimization are general & can be applied to train other hyperparams or latent features.

Excited to see how people *adapt* this research!

🧵4/4

22.11.2024 13:06 —

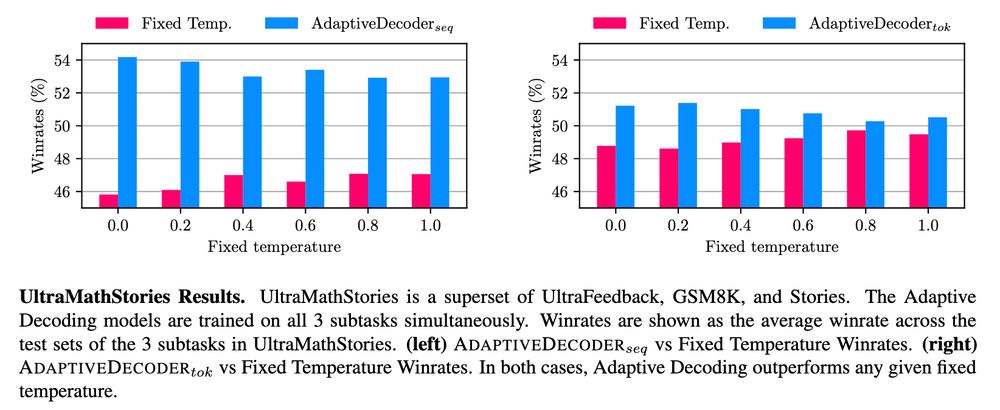

We train on a mix of tasks:

GSM8K - requires factuality (low temp)

Stories - requires creativity (high temp)

UltraFeedback - general instruction following, requires mix

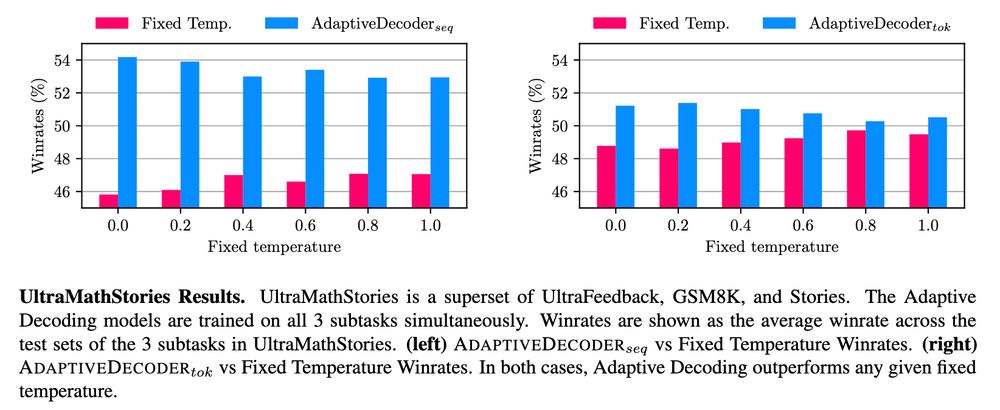

Results: Adaptive Decoding outperforms any fixed temperature, with the AD layer choosing the temperature automatically.

🧵3/4

22.11.2024 13:06 —

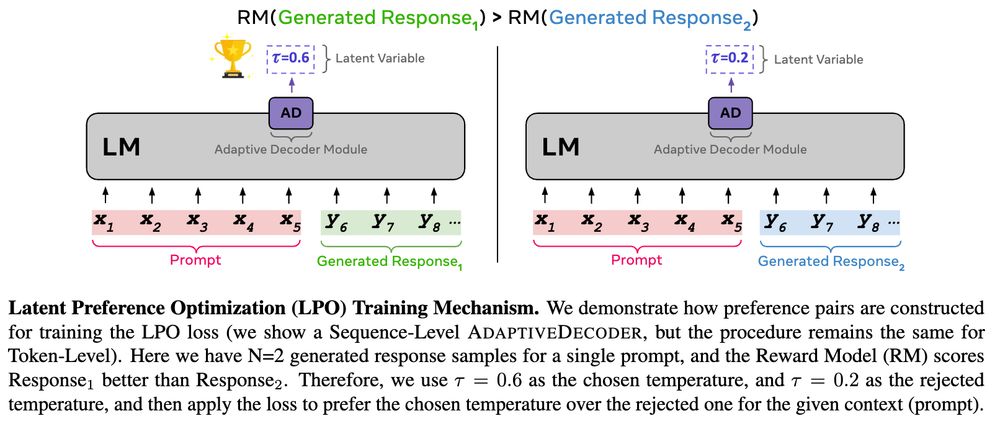

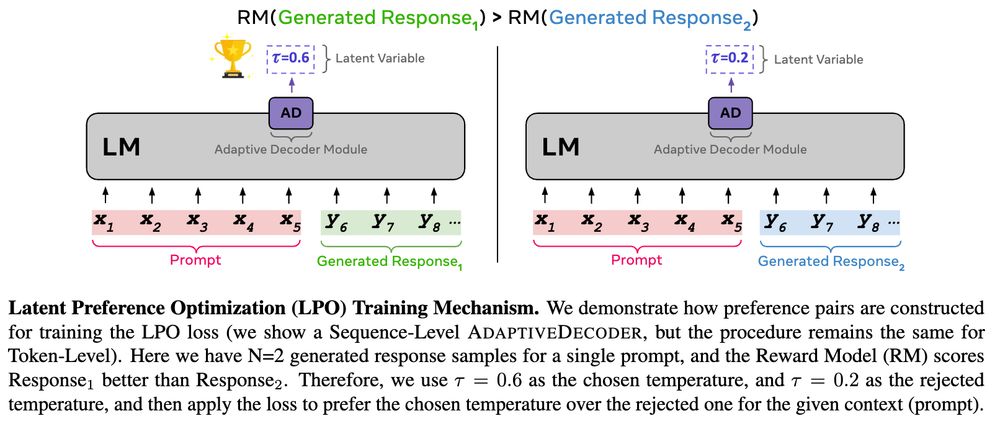

Recipe 👩‍🍳:

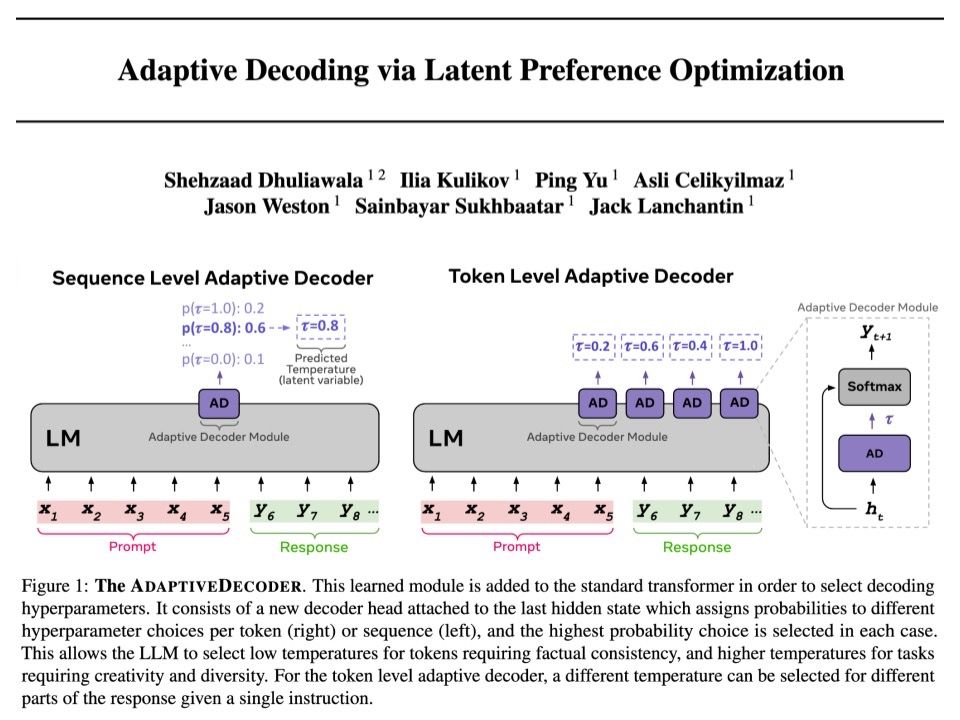

Adaptive Decoder (AD) Layer:

- Assigns a probability to each hyperparam choice (decoding temp) given the hidden state. A temp is picked from that distribution, then a token is sampled at that temp.
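To make the AD layer concrete, here is a minimal sketch of one decoding step. It is an illustration under assumptions, not the paper's implementation: the temperature set `TEMPS`, the single linear head `weights`, and the function name `ad_step` are all hypothetical.

```python
import math
import random

# Hypothetical discrete temperature choices; the thread does not list them.
TEMPS = [0.2, 0.6, 1.0]

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def ad_step(hidden, token_logits, weights, rng):
    """One decoding step: choose a temperature from the hidden state, then sample a token."""
    # Assumed linear head: one score per temperature choice.
    scores = [sum(w * h for w, h in zip(row, hidden)) for row in weights]
    temp_probs = softmax(scores)  # P(temp | hidden state)
    k = rng.choices(range(len(TEMPS)), weights=temp_probs)[0]
    # Sample the next token from the logits scaled by the chosen temperature.
    token_probs = softmax([logit / TEMPS[k] for logit in token_logits])
    token = rng.choices(range(len(token_logits)), weights=token_probs)[0]
    return k, token, temp_probs

rng = random.Random(0)
hidden = [0.5, -1.0, 2.0]
weights = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
k, token, temp_probs = ad_step(hidden, [2.0, 0.1, -1.0, 0.3], weights, rng)
```

Because the temperature is sampled at every step, different tokens in the same response can be decoded at different temperatures.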

Training (Latent PO):

- Train AD by sampling params+tokens, then use a reward model to build chosen & rejected hyperparam preference pairs
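A rough sketch of that training signal, assuming a DPO-style objective over the sampled temperature choices. The thread does not spell out the loss, so `build_pair`, `dpo_style_loss`, `beta`, and the toy reward function are hypothetical stand-ins.

```python
import math

def build_pair(samples, reward_fn):
    """samples: list of (temps, response). Returns (chosen, rejected) ranked by reward."""
    ranked = sorted(samples, key=lambda s: reward_fn(s[1]))
    return ranked[-1], ranked[0]

def dpo_style_loss(logp_chosen, logp_rejected, ref_chosen, ref_rejected, beta=0.1):
    """-log sigmoid(margin), where each log-prob is that of the sampled temperature choices."""
    margin = beta * ((logp_chosen - ref_chosen) - (logp_rejected - ref_rejected))
    # Stable form of log(1 + exp(-margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))

# Toy reward for illustration only: longer responses score higher.
samples = [([0.2], "ab"), ([1.0], "abcd"), ([0.6], "abc")]
chosen, rejected = build_pair(samples, reward_fn=len)
loss_at_start = dpo_style_loss(-1.0, -1.0, -1.0, -1.0)
loss_after = dpo_style_loss(-0.5, -2.0, -1.0, -1.0)
```

The loss falls as the AD layer puts more probability on the temperature choices behind the chosen response than on those behind the rejected one.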

🧵2/4

22.11.2024 13:06 —

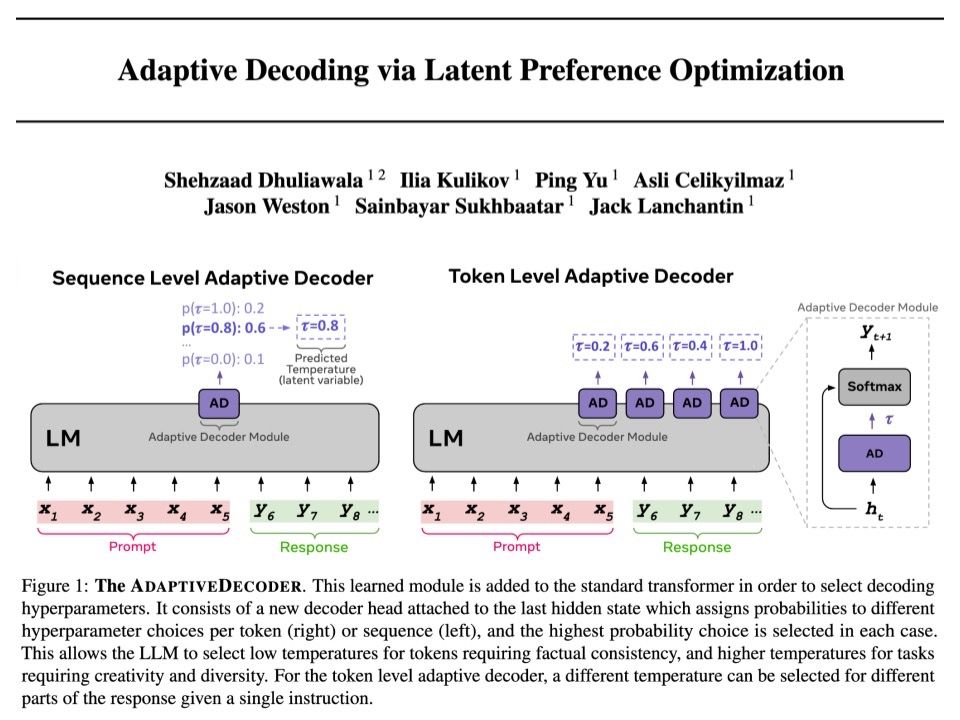

🚨 Adaptive Decoding via Latent Preference Optimization 🚨

- New layer for Transformer, selects decoding params automatically *per token*

- Learnt via new method Latent Preference Optimization

- Outperforms any fixed-temperature decoding, choosing between creativity and factuality as needed

arxiv.org/abs/2411.09661

🧵1/4

22.11.2024 13:06 —
