We already know prompt repetition is a handy hack to improve a decoder-only LM’s performance as it allows the model to “see” bidirectionally, an ability otherwise suppressed by the causal mask.

But what happens if we increase the number of repetitions? 🤔🧵 @eaclmeeting.bsky.social #EACL2026

02.02.2026 12:04 — 👍 5 🔁 4 💬 1 📌 1

👋🌊🇭🇷

18.08.2025 10:51 — 👍 0 🔁 0 💬 0 📌 0

So, news becomes more positive as the years go by. Or does it? We trained sentiment classifiers on STONE & 24sata, then analyzed sentiment over the 5 periods of the TakeLab Retriever corpus. We find that positivity rises at the expense of neutrality. But negativity in news headlines also increases.

15.07.2025 12:14 — 👍 0 🔁 0 💬 1 📌 0

We detect sentiment shift by swapping embeddings across periods. Using later-period embeddings in earlier periods results in increased positive sentiment. Using earlier-period embeddings in later periods results in decreased positive sentiment.

15.07.2025 12:14 — 👍 0 🔁 0 💬 1 📌 0

We wondered if the trained embeddings could tell us something about the shift in sentiment. Can we detect changes in positivity and negativity just using the trained embeddings? The answer is yes!

15.07.2025 12:14 — 👍 0 🔁 0 💬 1 📌 0

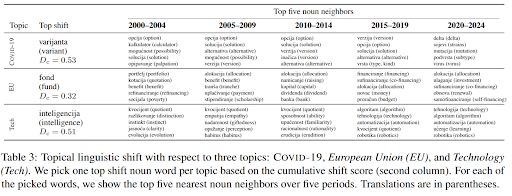

We identify words that change the most by their cumulative cosine distance scores within the last 25 years. For these words, we unveil the change in meaning by picking five nearest neighbors per period. We group the words into three major topics: EU, technology, and COVID.

15.07.2025 12:14 — 👍 0 🔁 0 💬 1 📌 0
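The neighbor lookup described above can be sketched as follows. This is only an illustration, assuming each period's embeddings are held as a NumPy matrix with one row per vocabulary word; the function name `nearest_neighbors` and its arguments are hypothetical, not taken from the paper's code.

```python
import numpy as np


def nearest_neighbors(word, vocab, matrix, k=5):
    """Return the k words closest to `word` by cosine similarity.

    vocab  -- list of words, one per row of `matrix` (assumed layout)
    matrix -- float array of shape (len(vocab), dim) for a single period
    """
    index = vocab.index(word)
    # Normalize rows so that a dot product equals cosine similarity.
    unit = matrix / np.linalg.norm(matrix, axis=1, keepdims=True)
    sims = unit @ unit[index]
    # Exclude the query word itself from the ranking.
    sims[index] = -np.inf
    top = np.argsort(-sims)[:k]
    return [vocab[i] for i in top]
```

Running this once per period with the same query word gives one neighbor list per period, which can then be compared side by side.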

We train embeddings using the skip-gram with negative sampling (SGNS) method from Word2Vec. We align embeddings between different periods using Procrustes alignment. We validate the quality of embeddings on two word similarity datasets.

15.07.2025 12:14 — 👍 1 🔁 0 💬 1 📌 0
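A minimal sketch of the alignment step, assuming the two periods' embeddings are NumPy matrices restricted to a shared vocabulary with rows in the same word order. The name `procrustes_align` is hypothetical, and this is the standard orthogonal Procrustes solution via SVD, not necessarily the exact procedure used in the paper.

```python
import numpy as np


def procrustes_align(source, target):
    """Rotate `source` onto `target` with an orthogonal map.

    Both arrays have shape (n_words, dim) and share row order (assumption).
    Returns the aligned source matrix and the rotation that was applied.
    """
    # The orthogonal R minimizing ||source @ R - target||_F is U @ Vt,
    # where U, S, Vt is the SVD of source.T @ target.
    u, _, vt = np.linalg.svd(source.T @ target)
    rotation = u @ vt
    return source @ rotation, rotation
```

Because the map is orthogonal, it preserves cosine similarities within a period; only the orientation relative to the other period changes.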

TakeLab Retriever

We leverage the TakeLab Retriever 🐕 (retriever.takelab.fer.hr) corpus of 10 million articles from Croatian news outlets, which we split into five equal periods (2000–2024).

Semantic change is measured using the cumulative cosine distance between embeddings in neighboring periods.

15.07.2025 12:14 — 👍 0 🔁 0 💬 1 📌 0
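One way to read the measure above is as the sum of cosine distances between a word's vectors in consecutive periods. A small sketch under that reading, assuming the vectors have already been aligned to a common space; the function names are hypothetical.

```python
import math


def cosine_distance(u, v):
    """Cosine distance (1 - cosine similarity) between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def cumulative_cosine_distance(vectors_by_period):
    """Sum the distances between each pair of neighboring periods.

    vectors_by_period -- one vector per period for the same word,
                         in chronological order (assumed already aligned)
    """
    pairs = zip(vectors_by_period, vectors_by_period[1:])
    return sum(cosine_distance(a, b) for a, b in pairs)
```

With five periods this sums four distances per word, so words can be ranked by the total to surface the ones that changed most.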

Traditional diachronic studies use corpora spanning centuries, but we find interesting results even when training diachronic embeddings on only 25 years of news data. We detect words from 3 turbulent topics (EU, technology, and COVID) whose semantics were strongly affected.

15.07.2025 12:14 — 👍 0 🔁 0 💬 1 📌 0

📣📣 New preprint alert!!

Despite events in the world becoming bleaker, the news is… more positive?

We conduct a diachronic study of word embeddings trained on 10M Croatian news articles spanning 25 years and find some surprising results!

arxiv.org/abs/2506.13569

15.07.2025 12:14 — 👍 2 🔁 2 💬 1 📌 1
