Compositionality is a central desideratum for intelligent systems...but it's a fuzzy concept and difficult to quantify. In this blog post, lab member @ericelmoznino.bsky.social outlines ideas toward formalizing it & surveys recent work. A must-read for interested researchers in AI and Neuro

19.08.2025 13:50 — 👍 21 🔁 5 💬 0 📌 0

This work wouldn’t exist without my amazing co-authors:

@mnoukhov.bsky.social & @AaronCourville🙏

22.07.2025 14:41 — 👍 0 🔁 0 💬 0 📌 0

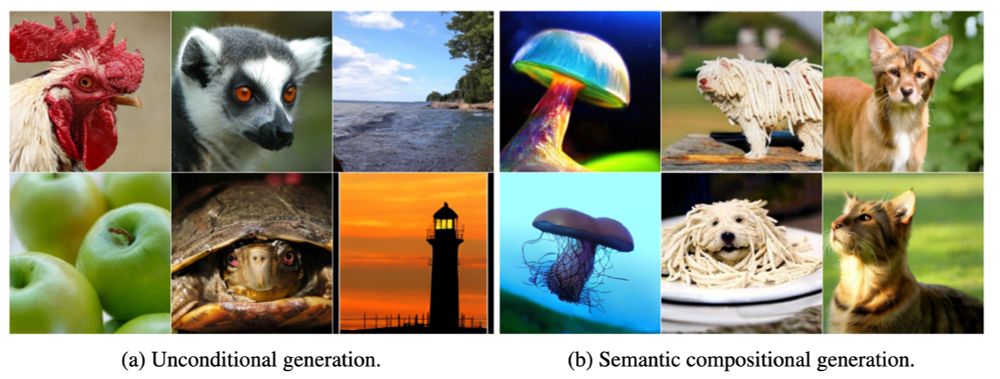

Example: There are no “teapots on mountains” in ImageNet.

We verify this via nearest-neighbor search in DinoV2 space.

But our model can still create them—by composing concepts it learned separately.

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

LLMs can speak in DLC!

We fine-tune a language model to sample DLC tokens from text, giving us a pipeline:

Text → DLC → Image

This also enables generation beyond ImageNet.

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

DLCs are compositional.

Swap tokens between two images (🐕 Komodor + 🍝 Carbonara) → the model produces coherent hybrids never seen during training.

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

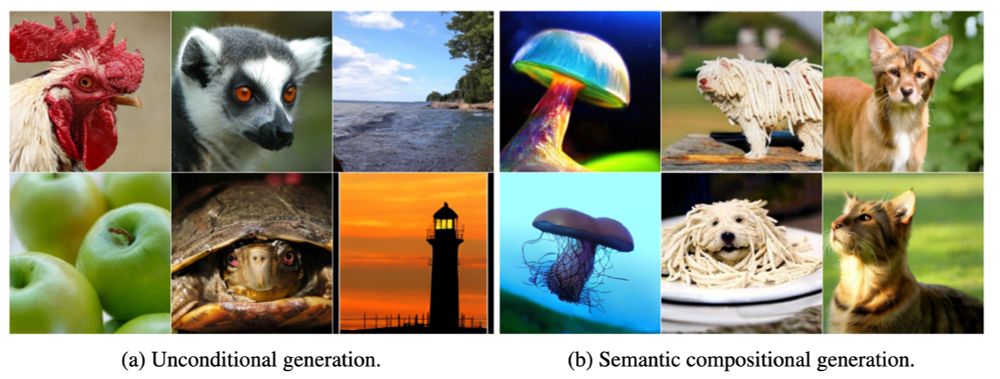

🚀 Results:

DiT-XL/2 + DLC → FID 1.59 on unconditional ImageNet

Works well with and without classifier-free guidance

Learns faster and better than prior works using pre-trained encoders

🤯

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

Unconditional generation pipeline:

Sample a DLC (e.g., with SEDD)

Decode it into an image (e.g., with DiT)

This ancestral sampling approach is simple but powerful.

22.07.2025 14:41 — 👍 1 🔁 0 💬 1 📌 0

DLCs enables exactly this.

Images → sequences of discrete tokens via a Simplicial Embedding (SEM) encoder

We take the argmax over token distributions → get the DLC sequence

Think of it as “tokenizing” images—like words for LLMs.

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

Text models don’t have this problem! LLMs can model internet scale corpus.

So… can we improve image generation of highly-modal distributions by decomposing it into:

1. Generating discrete tokens - p(c)

2. Decoding tokens into images - p(x|c)

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

Modeling highly multimodal distributions in continuous space is hard.

Even a simple 2D Gaussian mixture with a large number of modes may be tricky to model directly. Good conditioning solves this!

Could this be why large image generative models are almost always conditional? 🤔

22.07.2025 14:41 — 👍 0 🔁 0 💬 1 📌 0

🧵 Everyone is chasing new diffusion models—but what about the representations they model from?

We introduce Discrete Latent Codes (DLCs):

- Discrete representation for diffusion models

- Uncond. gen. SOTA FID (1.59 on ImageNet)

- Compositional generation

- Integrates with LLM

🧱

22.07.2025 14:41 — 👍 5 🔁 3 💬 1 📌 0

Congrats Lucas! Looking forward to see what will come out of your lab in Zurich!

05.12.2024 12:55 — 👍 1 🔁 0 💬 0 📌 0

Committed to the daily re-imagining of what a university press can be since 1962.

Website: https://mitpress.mit.edu // The Reader (our home for excerpts, essays, & interviews): https://thereader.mitpress.mit.edu

CTO of Technology & Society at Google, working on fundamental AI research and exploring the nature and origins of intelligence.

Mathematician/informatician thinking probabilistically, expecting the same of you.

Edinburgh 🏴 / they≥she>he≥0 🏳️⚧️

It is the categories in the mind and the guns in their hands which keep us enslaved.

Researching the dark arts of deep learning at Meta's FAIR (Fundamental AI Research) Lab https://markibrahim.me/

AI4science research, density functional theory @ Microsoft Research Amsterdam. PhD on generative modeling, flows, diffusion @ Mila Montreal

Research Scientist @Apple MLR

Mathematician at UCLA. My primary social media account is https://mathstodon.xyz/@tao . I also have a blog at https://terrytao.wordpress.com/ and a home page at https://www.math.ucla.edu/~tao/

Research Scientist at ServiceNow

Gradient-descent enthusiast building LLM agents.

Formerly Mila, Deepmind, Amazon, ElemenAI, Spotify

Research Scientist Meta/FAIR, Prof. University of Geneva, co-founder Neural Concept SA. I like reality.

https://fleuret.org

messing up with gaussians

Ph.D. Student at Mila

Visiting Researcher at Meta FAIR

Causality, Trustworthy ML

Former: Microsoft Research, IIT Kanpur

divyat09.github.io

PhD student @mila-quebec.bsky.social, visiting researcher at FAIR. Responsible AI and generative models.

Ph.D. Student studying AI & decision making at Mila / McGill University. Currently at FAIR @ Meta. Previously Google DeepMind & Google Brain.

https://brosa.ca

Advocate for tech that makes humans better | Spatial Computing, Holodeck, and AI Futurist | Ex-Microsoft, Rackspace | Co-author, "The Infinite Retina."

AIxBio Research Scientist 👩🔬 | PhD Computational Biology 👩💻 | BSc Biomedicine 🧫 | "Singlecellologist" 🦠 into biologically meaningful representation learning 🧬 | Decoding life in London 🇬🇧

Staff ML Scientist @valenceai.bsky.social Labs/Recursion Pharma, Mila

GFlowNets, molecules & stuff

https://folinoid.com

Researcher (OpenAI. Ex: DeepMind, Brain, RWTH Aachen), Gamer, Hacker, Belgian.

Anon feedback: https://admonymous.co/giffmana

📍 Zürich, Suisse 🔗 http://lucasb.eyer.be

AI @ OpenAI, Tesla, Stanford

PhD researcher at Mila Quebec