Now out in @cp-trendscognsci.bsky.social: our short response to @neurosteven.bsky.social & Edward de Haan's recent paper on the binding problem. We argue that the binding problem arises because of tradeoffs faced by any information processing system, including the brain and DNNs. shorturl.at/RGXzt

16.01.2026 10:38


Any plans to post an expanded version on biorxiv.org?

10.09.2025 12:52

That is, deal with the statistical structure of the world on the basis of learning experience (sampling + evolution). Jumping to human behaviour would, I think, mix up two, or potentially three, levels of observation.

10.09.2025 12:50

DCNNs here confirm our ideas about vision at the neuroscience level and potentially expand them with a broader view of what these filters are and how they can emerge. Beyond that, they suggest a theory of what vision does at this stage.

10.09.2025 12:50

I guess the theory you would abstract from results such as these is that feedforward vision is dominated by features that naturally emerge through hierarchical processing, and the results show that these features can emerge in a convolutional tree.

10.09.2025 12:50

A substantial amount of the neural activity that can be explained relates to these processes, and this part is (my 5 cents, based on these data) the bulk of what DCNNs explain. It is, however, a necessary part of processing for vision outside the lab.

09.09.2025 08:02

For the model to classify objects, it does a lot of 'stuff'. For instance, without a background a shallow network suffices (Seijdel et al., 2020, scholar.google.com/citations?vi...). Natural images force these networks to do a lot more than what we would label object recognition.

09.09.2025 08:02

Over the last 7 years, it has surprised me how easy it is to find signatures of texture processing (Loke et al., 2024) and scene segmentation (Seijdel et al., 2020, 2021) in DCNNs, and how little of it seems to relate to subsequent steps. We have really been trying.

08.09.2025 20:13

DNNs still capture an impressive amount of variance. The most parsimonious account, I think, is that DNNs model the initial encoding well but miss the subsequent steps of object recognition, or perform them differently.

08.09.2025 20:13

Great work from my PhD student Jessica Loke, together with @lynnkasorensen.bsky.social, @irisgroen.bsky.social and Nathalie Cappaert.

08.09.2025 18:32

Trajectories make it visual:

🔵 Texture path → high alignment, low object info (upper-left quadrant)

🔴/🟢 Natural & object-only paths → more object info but no extra alignment.

This explains why better object recognition ≠ better brain prediction.

08.09.2025 18:32

Cross-prediction: texture features predict brain responses to natural scenes almost as well as features from the originals themselves.

Local image statistics = the common representational currency between artificial and biological vision.

08.09.2025 18:32

The key dissociation:

• EEG encodes object category across all conditions.

• But object info does not drive DNN–brain alignment.

• Peak alignment occurs when object info is minimal (texture condition).

08.09.2025 18:32

Three versions of each image:

🔴 Natural scenes

🔵 Texture-synthesized (global summaries of local stats only; no recognizable objects)

🟢 Object-only (objects without backgrounds)

Counterintuitive result: strongest DNN–brain alignment for texture-only images!

08.09.2025 18:32

🧠 New preprint: Why do deep neural networks predict brain responses so well?

We find a striking dissociation: it’s not shared object recognition. Alignment is driven by sensitivity to texture-like local statistics.

📊 Study: n=57, 624k trials, 5 models doi.org/10.1101/2025...

08.09.2025 18:32

Our response is due in 3 weeks. Pondering.

07.09.2025 17:18

Thank you!!!!

17.08.2025 15:06

Lynn Flannery, Kerry Miller, Jeff Wilson, Kevin Koenrades, Brenda Klappe and our volunteers: Nina Fitzmaurice, Ole Jürgensen, Denise Kittelmann, Elif Ayten,

Maithe van Noort, Mohanna Hoveyda, Caroline Harbison, Yamil Vidal, Sotirios Panagiotou, Sofie Wahlberg, Danting Meng, Mobina Tousian.

17.08.2025 15:06


@claires012345.bsky.social @mheilbron.bsky.social Angela Radulescu @neuroprinciplist.bsky.social @dotadotadota.bsky.social Tyler Bonnen Sneha Aenugu @hannesmehrer.bsky.social @debyee.bsky.social Julian Kosciessa @anne-urai.bsky.social @mdhk.net @tdado.bsky.social Shauney Wilson, Shawna Lampkin,

17.08.2025 15:06

But could not have run without @lauragwilliams.bsky.social @jaspervdb.bsky.social @achterbrain.bsky.social @niklasmuller.bsky.social @eringrant.me @pebenjamters.bsky.social @shahabbakht.bsky.social @judithfan.bsky.social @jfeather.bsky.social Jiahui Guo @tknapen.bsky.social @lampinen.bsky.social

17.08.2025 15:06


a reception in Hotel Arena, an epic party in Ijver (with about 40% of attendees on the dance floor), and above all 929 community members who brought a lot of energy and hopefully had, on multiple dimensions, a fantastic conference.

So proud to have been chair, together with @irisgroen.bsky.social.

17.08.2025 15:06

#CCN2025 is over. Over 5 days there were 6 fantastic keynotes, 550 posters, 3 community events, 3 keynote & tutorials, 3 generative adversarial collaborations, 8 satellite events, 1 community lunch meeting, 1 cross-conference hackathon, 1 competition, coffee all day, stroopwafels on day 1,

17.08.2025 15:06

That's a wrap for CCN2025, and so planning for CCN2026 in New York starts today! Safe travels to all participants, and remember to fill out the feedback survey sent via email!

15.08.2025 16:29

The moment we've all been waiting for: #CCN2025 is HERE! Today we start with satellite events, and tomorrow the main conference begins in Amsterdam! We'll be sharing daily updates about each day's program, so follow the CCN account to stay in the loop. Can't wait to see everyone!

11.08.2025 08:29

After preparing for a full year together with @neurosteven.bsky.social and all the other amazing organizers of @cogcompneuro.bsky.social, #CCN2025 is finally here!

While I'm proud of the entire program we put together, I'd now like to highlight my own lab's contributions, 6 posters total:

10.08.2025 15:20

CCN2025 kicks off this Tuesday in Amsterdam (with satellite events Monday)! Fun fact: We might be getting the best conference weather Amsterdam has ever seen ☀️

Can't wait to meet everyone and dive into the exciting program ahead. See you there!

06.08.2025 14:47

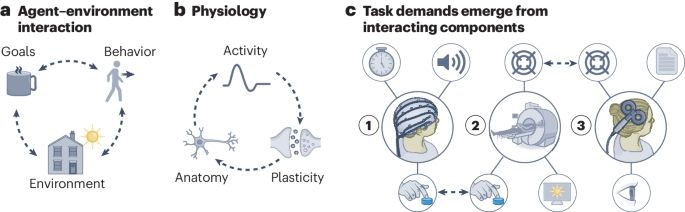

Representation of locomotive action affordances in human behavior, brains, and deep neural networks | PNAS

To decide how to move around the world, we must determine which locomotive actions (e.g., walking, swimming, or climbing) are afforded by the immed...

In these tumultuous times, still happy to report a scientific achievement: our preprint on affordance perception was just published in PNAS!

www.pnas.org/doi/10.1073/...

Using behavior, fMRI and deep network analyses, we report two key findings. To recap (the preprint 🧵 was lost on the other platform):

16.06.2025 11:33