ACL 2026 Workshop CoNLL

Welcome to the OpenReview homepage for ACL 2026 Workshop CoNLL

🚨 CoNLL 2026: Call for Papers 🚨

📍San Diego | July 3–4, 2026 (co-located w/ ACL).

🙋‍♀️ Focus: theoretically/cognitively motivated CL & NLP

NEW Areas: Computational Usage-Based Grammars + Language & the Brain.

(Online presentation option available)

📅 Deadline Feb 19, 2026 (AoE): bit.ly/4kgRyKF

05.02.2026 14:05 —

👍 6

🔁 3

💬 0

📌 0

Questionnaire | page 1

A short survey on research practices with eye-tracking data, especially in reading studies, as part of the OpenEye Project

soscisurvey.de/OpenEye/

Deadline for participation: December 20

12.12.2025 08:04 —

👍 1

🔁 1

💬 0

📌 0

CoNLL 2026 | CoNLL

Super excited to co-chair (with Claire Bonial) my favorite NLP conference - CoNLL!

If you think your work will matter not just now, but also in 5 or 10 years, you should submit it to CoNLL 2026!

CFP: conll.org

12.12.2025 05:57 —

👍 2

🔁 0

💬 0

📌 0

fun pre-print for your start of week reading:

"People Make Graded Judgments About The Inconceivable"

(by Hu, Sosa, and me)

doi.org/10.31234/osf...

08.12.2025 14:18 —

👍 38

🔁 12

💬 1

📌 0

#NeurIPS2025 Check out EyeBench 👀, a mega-project which provides much-needed infrastructure for loading & preprocessing eye-tracking-for-reading datasets, and for addressing super exciting modeling challenges: decoding linguistic knowledge 👩 and reading interactions 👩+📖 from gaze!

eyebench.github.io

02.12.2025 10:21 —

👍 9

🔁 2

💬 0

📌 1

It's officially been 75 years since the proposal of the Turing Test, a good time to bring up 'The Minimal Turing Test':

www.sciencedirect.com/science/arti...

02.10.2025 15:37 —

👍 27

🔁 6

💬 1

📌 0

if any

11.09.2025 05:23 —

👍 5

🔁 0

💬 0

📌 0

my friend/colleague Frank Jäkel wrote a book on AI. I sadly don't know German but I happily know Frank, and I've heard him talking about this for a while now, and just on that basis I'd recommend the German speakers in the audience check it out

24.08.2025 15:50 —

👍 15

🔁 3

💬 0

📌 2

ACL 2025 Tutorial: Eye Tracking and NLP

We had a lot of fun delivering the Eye Tracking and NLP tutorial at ACL! The slides are available on the tutorial website acl2025-eyetracking-and-nlp.github.io

20.08.2025 07:28 —

👍 3

🔁 0

💬 0

📌 0

Help us record firefly flashes! 👇🙏

25.06.2025 21:32 —

👍 65

🔁 54

💬 5

📌 0

OECS thematic collections.

If you haven't been looking recently at the Open Encyclopedia of Cognitive Science (oecs.mit.edu), here's your reminder that we are a free, open access resource for learning about the science of mind.

Today we are launching our new Thematic Collections to organize our growing set of articles!

30.05.2025 00:18 —

👍 414

🔁 184

💬 10

📌 7

Data and documentation: github.com/lacclab/OneS...

Preprint: osf.io/preprints/ps...

Exciting recent work with OneStop from our lab (more on this soon!!): github.com/lacclab/OneS...

29.05.2025 11:12 —

👍 2

🔁 0

💬 0

📌 0

👁️🗨️ 4 sub-corpora: 📖 reading for comprehension, 🔎📖 information seeking, 📖📖 repeated reading, 🔎📖📖 information seeking in repeated reading.

🏋🏽 Text difficulty level manipulation: reading original and simplified texts.

👌 High quality recordings with an EyeLink 1000 Plus eye tracker.

29.05.2025 11:12 —

👍 2

🔁 0

💬 1

📌 0

👥 360 participants (English L1) & 152 hours of eye movement recordings - more data than all the publicly available English L1 eye tracking corpora combined!

🗞️ 30 newswire articles in English (162 paragraphs) with reading comprehension questions and auxiliary text annotations.

29.05.2025 11:12 —

👍 0

🔁 0

💬 1

📌 0

👀 📖 Big news! 📖 👀

Happy to announce the release of the OneStop Eye Movements dataset! 🎉 🎉

OneStop is the product of over 6 years of experimental design, data collection and data curation.

github.com/lacclab/OneS...

29.05.2025 11:12 —

👍 9

🔁 3

💬 1

📌 0

Sentence processing workshop, May 27, 2025

In-person (no streaming/Zoom) sentence processing workshop at Potsdam with Tal Linzen, Brian Dillon, Titus von der Malsburg, Oezge Bakay, William Timkey, Pia Schoknecht, Michael Vrazitulis, and Johan Hennert:

vasishth.github.io/sentproc-wor...

22.05.2025 07:06 —

👍 5

🔁 2

💬 0

📌 0

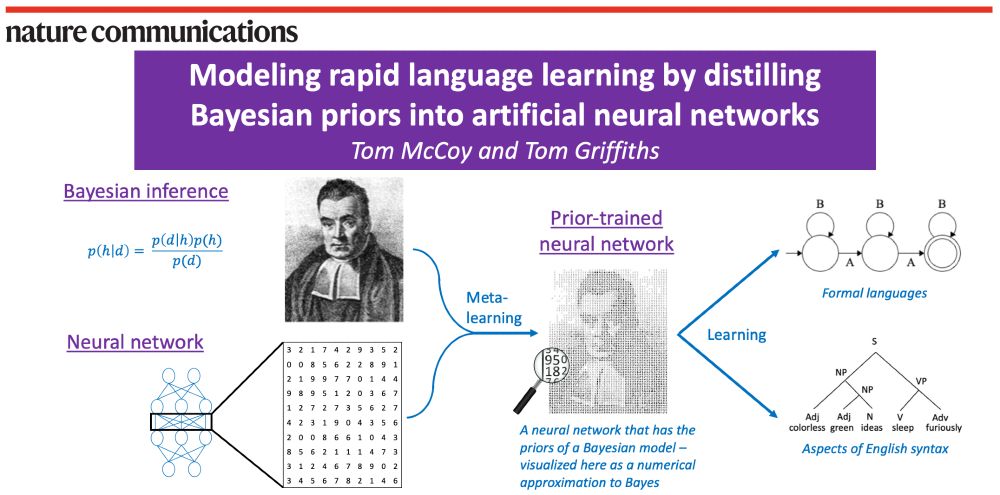

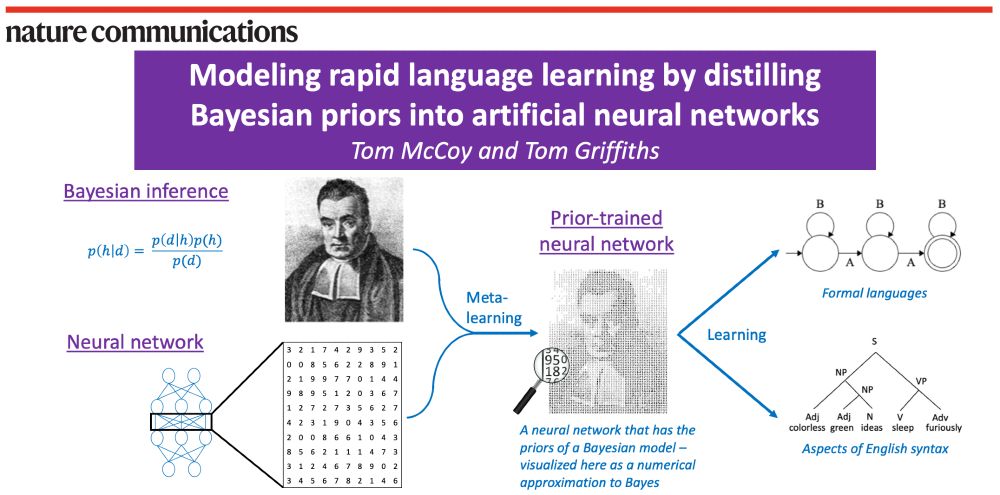

A schematic of our method. On the left are shown Bayesian inference (visualized using Bayes’ rule and a portrait of the Reverend Bayes) and neural networks (visualized as a weight matrix). Then, an arrow labeled “meta-learning” combines Bayesian inference and neural networks into a “prior-trained neural network”, described as a neural network that has the priors of a Bayesian model – visualized as the same portrait of Reverend Bayes but made out of numbers. Finally, an arrow labeled “learning” goes from the prior-trained neural network to two examples of what it can learn: formal languages (visualized with a finite-state automaton) and aspects of English syntax (visualized with a parse tree for the sentence “colorless green ideas sleep furiously”).

🤖🧠 Paper out in Nature Communications! 🧠🤖

Bayesian models can learn rapidly. Neural networks can handle messy, naturalistic data. How can we combine these strengths?

Our answer: Use meta-learning to distill Bayesian priors into a neural network!

www.nature.com/articles/s41...

1/n

20.05.2025 19:04 —

👍 155

🔁 43

💬 4

📌 1

On the left is a probabilistic context-free grammar (PCFG). On the right is an image of the Transformer architecture. There are arrows going back and forth between the PCFG and the Transformer, showing how the assignment goes back and forth between them.

Made a new assignment for a class on Computational Psycholinguistics:

- I trained a Transformer language model on sentences sampled from a PCFG

- The students' task: Given the Transformer, try to infer the PCFG (w/ a leaderboard for who got closest)

Would recommend!

1/n
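For anyone curious about the first step of the assignment described above, here is a minimal sketch of sampling sentences from a PCFG. The toy grammar, the names `TOY_PCFG`, `sample`, and `sample_corpus`, and the seed are all illustrative assumptions, not the grammar or code used in the class; training the Transformer on the sampled sentences is not shown.

```python
import random

# Hypothetical toy grammar (an assumption for illustration only).
# Each nonterminal maps to a list of (right-hand side, probability) pairs.
TOY_PCFG = {
    "S": [(("NP", "VP"), 1.0)],
    "NP": [(("Det", "N"), 0.7), (("Det", "Adj", "N"), 0.3)],
    "VP": [(("V",), 0.4), (("V", "NP"), 0.6)],
    "Det": [(("the",), 0.6), (("a",), 0.4)],
    "Adj": [(("green",), 0.5), (("colorless",), 0.5)],
    "N": [(("idea",), 0.5), (("linguist",), 0.5)],
    "V": [(("sleeps",), 0.5), (("sees",), 0.5)],
}


def sample(symbol, grammar, rng):
    """Expand `symbol` top-down, picking each rule with its probability."""
    if symbol not in grammar:
        # Anything without a rule is treated as a terminal (a word).
        return [symbol]
    rules = grammar[symbol]
    options = [rhs for rhs, _ in rules]
    weights = [prob for _, prob in rules]
    rhs = rng.choices(options, weights=weights, k=1)[0]
    words = []
    for child in rhs:
        words.extend(sample(child, grammar, rng))
    return words


def sample_corpus(n, grammar=TOY_PCFG, start="S", seed=0):
    """Return n sentences sampled independently from the grammar."""
    rng = random.Random(seed)
    return [" ".join(sample(start, grammar, rng)) for _ in range(n)]


if __name__ == "__main__":
    for sentence in sample_corpus(5):
        print(sentence)
```

This toy grammar has no recursive rules, so sampling always terminates; a grammar with recursion would need rule probabilities that keep the expected sentence length finite.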

02.05.2025 15:30 —

👍 21

🔁 3

💬 1

📌 0

Check out our new work on introspection in LLMs! 🔍

TL;DR we find no evidence that LLMs have privileged access to their own knowledge.

Beyond the study of LLM introspection, our findings inform an ongoing debate in linguistics research: prompting (eg grammaticality judgments) =/= prob measurement!

12.03.2025 17:43 —

👍 50

🔁 7

💬 0

📌 1

it's only Consciousness if it comes from the Consciousness region of the brain, otherwise it's just sparkling attention

11.03.2025 12:36 —

👍 30

🔁 3

💬 3

📌 1

new preprint on Theory of Mind in LLMs, a topic I know a lot of people care about (I care. I'm part of people):

"Re-evaluating Theory of Mind evaluation in large language models"

(by Hu* @jennhu.bsky.social , Sosa, and me)

link: arxiv.org/pdf/2502.21098

06.03.2025 13:33 —

👍 92

🔁 27

💬 4

📌 6

Out today in Nature Machine Intelligence!

From childhood on, people can create novel, playful, and creative goals. Models have yet to capture this ability. We propose a new way to represent goals and report a model that can generate human-like goals in a playful setting... 1/N

21.02.2025 16:29 —

👍 135

🔁 40

💬 5

📌 4

Hello! I'm looking to hire a post-doc, to start this Summer or Fall.

It'd be great if you could share this widely with people you think might be interested.

More details on the position & how to apply: bit.ly/cocodev_post...

Official posting here: academicpositions.harvard.edu/postings/14723

13.02.2025 14:07 —

👍 109

🔁 87

💬 3

📌 3

EvLab

Our research aims to understand how the language system works and how it fits into the broader landscape of the human mind and brain.

Our language neuroscience lab (evlab.mit.edu) is looking for a new lab manager/FT RA to start in the summer. Apply here: tinyurl.com/3r346k66 We'll start reviewing apps in early Mar. (Unfortunately, MIT does not sponsor visas for these positions, but OPT works.)

05.02.2025 14:43 —

👍 30

🔁 20

💬 0

📌 0

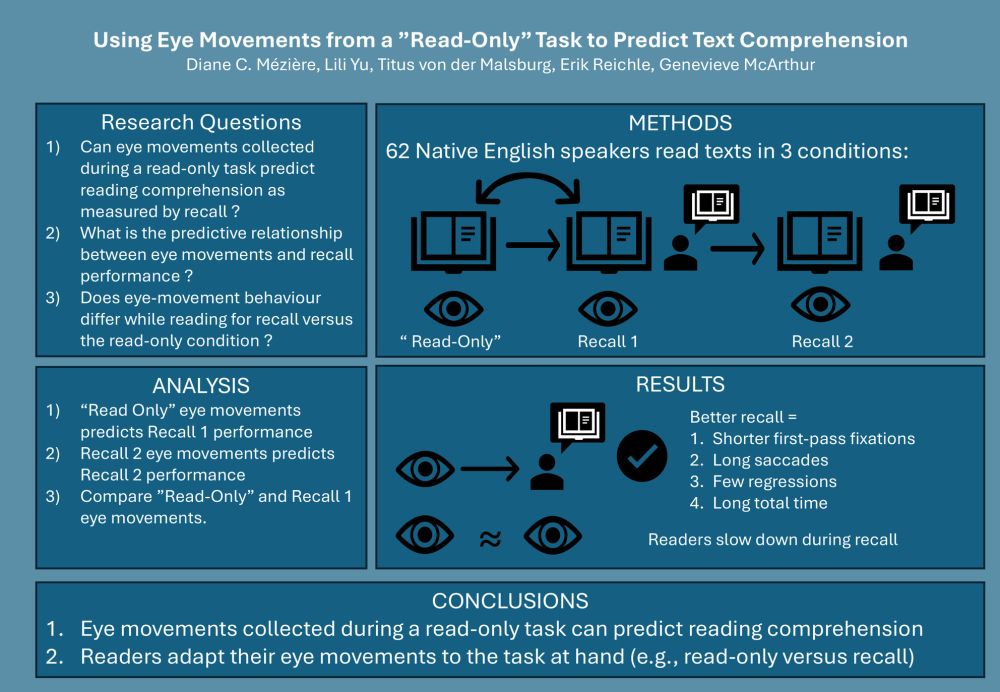

The 3rd Workshop on Eye Movements and the Assessment of Reading Comprehension, June 5–7, 2025, University of Stuttgart

The 3rd Workshop on Eye Movements and the Assessment of Reading Comprehension will take place on June 5–7, 2025 at the University of Stuttgart!

Submit an abstract by March 1st and join us!

tmalsburg.github.io/Comprehensio...

03.02.2025 12:15 —

👍 4

🔁 1

💬 0

📌 0

Troland Research Award – NAS

Two Troland Research Awards of $75,000 are given annually to recognize unusual achievement by early-career researchers (preferably 45 years of age or younger) and to further empirical research within ...

So excited to receive the Troland Award!! Huge congrats to the other winner—Nick Turk-Browne! And TY, as always, to my mentors&nominators, to my amazing labbies past&present, and to all the wonderful and supportive colleagues in our broader scientific community. <3 www.nasonline.org/award/trolan...

23.01.2025 17:50 —

👍 220

🔁 21

💬 34

📌 0