So happy to have presented our project on **Individual signatures of spillover** at CPL2025. Grateful to my supervisor Lena Jaeger for her great ideas and kind support! Thanks to my coauthors and the audience at CPL2025. The award was decided by a democratic audience vote!

20.12.2025 20:39 — 👍 1 🔁 0 💬 0 📌 0

A distinct set of brain areas process prosody--the melody of speech

Human speech carries information beyond the words themselves: pitch, loudness, duration, and pauses--jointly referred to as 'prosody'--emphasize critical words, help group words into phrases, and conv...

New preprint on prosody in the brain!

tinyurl.com/2ndswjwu

HeeSoKim NiharikaJhingan SaraSwords @hopekean.bsky.social @coltoncasto.bsky.social JenniferCole @evfedorenko.bsky.social

Prosody areas are distinct from pitch, speech, and multiple-demand areas, and partly overlap with lang+social areas→🧵

15.12.2025 19:27 — 👍 35 🔁 13 💬 1 📌 3

Take a look at how we challenge state-of-the-art NLP systems to recognize token-level semantic differences across languages with our new SwissGov-RSD dataset! @vamvas.bsky.social @ricosennrich.bsky.social

Paper: arxiv.org/pdf/2512.075...

Dataset: huggingface.co/datasets/Zur...

#NLProc

09.12.2025 08:48 — 👍 6 🔁 3 💬 0 📌 0

#NeurIPS2025 Check out EyeBench 👀, a mega-project which provides a much needed infrastructure for loading & preprocessing eye-tracking for reading datasets, and addressing super exciting modeling challenges: decoding linguistic knowledge 👩 and reading interactions 👩+📖 from gaze!

eyebench.github.io

02.12.2025 10:21 — 👍 9 🔁 2 💬 0 📌 1

Actually, we are also trying to work on a solution, but mainly for Chinese.

01.12.2025 23:03 — 👍 1 🔁 0 💬 0 📌 0

Wow! Thank you so much!!

26.11.2025 22:55 — 👍 3 🔁 0 💬 1 📌 0

UZH group picture at #EMNLP2025!

If you're here, catch us for a chat!

06.11.2025 12:27 — 👍 4 🔁 1 💬 0 📌 0

Take-home Message

🔹 We formalize input quality in reading as mutual information.

🔹 We link it to measurable human behavior.

🔹 We show multimodal LLMs can model this effect quantitatively.

Bottom-up information matters — and now we can measure how much it matters.

02.11.2025 11:06 — 👍 0 🔁 0 💬 0 📌 0

Key Result 2: Information from Models

Using fine-tuned Qwen2.5-VL and TransOCR, we estimated the MI between images and word identity.

MI systematically drops: Full > Upper > Lower — perfectly mirroring human reading patterns! 🤯

02.11.2025 11:06 — 👍 0 🔁 0 💬 1 📌 0

Key Result 1: Human Reading

Reading times show a clear pattern:

Full visible < Upper visible < Lower visible in both English & Chinese.

👉 Upper halves are more informative (and easier to read).

02.11.2025 11:06 — 👍 0 🔁 0 💬 1 📌 0

We model reading time as proportional to the number of visual “samples” needed to reduce uncertainty below a threshold ϕ.

Higher mutual information → fewer samples → faster reading.

02.11.2025 11:06 — 👍 0 🔁 0 💬 1 📌 0
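To make the sampling idea in the post above concrete, here is a rough sketch, not the paper's formal model. It assumes each visual sample removes a fixed average number of bits of uncertainty (the mutual information per sample), which is a simplification. The function name `samples_needed` and all numbers are hypothetical.

```python
import math


def samples_needed(prior_entropy_bits, mi_per_sample_bits, phi_bits):
    """Samples required to push uncertainty down to the threshold phi.

    Simplifying assumption (not from the paper): every sample removes
    exactly `mi_per_sample_bits` bits of uncertainty about the word.
    """
    if mi_per_sample_bits <= 0:
        raise ValueError("each sample must carry some information")
    remaining = prior_entropy_bits - phi_bits
    if remaining <= 0:
        return 0  # already below the threshold, no sampling needed
    return math.ceil(remaining / mi_per_sample_bits)


# Hypothetical values: 4 bits of initial uncertainty, threshold phi = 0.5 bits.
full = samples_needed(4.0, 1.0, 0.5)    # high-quality input
upper = samples_needed(4.0, 0.5, 0.5)   # partially occluded input
lower = samples_needed(4.0, 0.25, 0.5)  # more heavily degraded input
print(full, upper, lower)
```

If reading time is proportional to the sample count, lower mutual information per sample translates directly into longer reading times under this sketch.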

📊 We quantify this using mutual information (MI) between visual input and word identity.

To test the theory, we created a reading experiment using the MoTR (Mouse-Tracking-for-Reading) paradigm 🖱️📖

We ran the study in both English and Chinese.

02.11.2025 11:06 — 👍 1 🔁 0 💬 1 📌 0
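As a toy illustration of the quantity named in the post above, the sketch below computes mutual information from a small joint probability table over visual inputs and word identities. The tables are made up, and the paper estimates MI with fine-tuned models rather than from an explicit table, so treat this only as a reminder of the definition.

```python
import math


def mutual_information_bits(joint):
    """I(V; W) in bits, where joint[v][w] = P(V = v, W = w) and sums to 1."""
    p_v = [sum(row) for row in joint]          # marginal over visual inputs
    p_w = [sum(col) for col in zip(*joint)]    # marginal over word identities
    mi = 0.0
    for v, row in enumerate(joint):
        for w, p in enumerate(row):
            if p > 0:
                mi += p * math.log2(p / (p_v[v] * p_w[w]))
    return mi


# Hypothetical two-word vocabulary, both words equally likely.
full_view = [[0.5, 0.0],
             [0.0, 0.5]]      # the image always identifies the word
occluded_view = [[0.4, 0.1],
                 [0.1, 0.4]]  # the image is misleading 20% of the time

mi_full = mutual_information_bits(full_view)
mi_occluded = mutual_information_bits(occluded_view)
print(mi_full, mi_occluded)
```

In this toy case the fully visible input carries 1 bit about word identity, and the degraded input carries strictly less.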

We propose a formal model where reading is a Bayesian update integrating top-down expectations and bottom-up evidence.

When bottom-up input is noisy (e.g., words are partially occluded), comprehension becomes harder and slower.

02.11.2025 11:06 — 👍 1 🔁 0 💬 1 📌 0
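A minimal sketch of the kind of Bayesian update the post above describes, using a three-word toy vocabulary. The prior, the likelihood values, and the function names are all hypothetical; the paper's actual model may differ in its details.

```python
import math


def bayes_update(prior, likelihood):
    """Posterior over words: prior(w) * P(input | w), renormalized."""
    unnormalized = {w: prior[w] * likelihood[w] for w in prior}
    total = sum(unnormalized.values())
    return {w: p / total for w, p in unnormalized.items()}


def entropy_bits(dist):
    """Shannon entropy of a distribution, in bits."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


# Hypothetical top-down expectations from the preceding context.
prior = {"cat": 0.5, "car": 0.3, "cap": 0.2}

# Hypothetical bottom-up evidence P(visual input | word).
clear_input = {"cat": 0.90, "car": 0.05, "cap": 0.05}
occluded_input = {"cat": 0.40, "car": 0.30, "cap": 0.30}

post_clear = bayes_update(prior, clear_input)
post_occluded = bayes_update(prior, occluded_input)
print(entropy_bits(post_clear), entropy_bits(post_occluded))
```

With the same prior, the occluded input leaves more posterior uncertainty about the word, which is the sense in which noisy bottom-up input makes comprehension harder.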

👀Ever wondered how visual information quality affects reading and language processing?

Our new #EMNLP2025 paper with @wegotlieb.bsky.social, Lena Jäger -- “Modeling Bottom-up Information Quality during Language Processing”, bridges psycholinguistics and multimodal LLMs.

🧠💡👇

arxiv.org/pdf/2509.17047

02.11.2025 11:06 — 👍 4 🔁 0 💬 1 📌 0

Let's meet at #EMNLP and talk about multilingual knowledge benchmarks!

⚠️MLAMA is full of disfluent sentences

❓Reason: templated translation

💡Simple full-sentence translation improves factual retrieval up to 25%

🙌Remember to check your benchmarks with speakers!

Link: arxiv.org/pdf/2510.15115

28.10.2025 21:09 — 👍 1 🔁 1 💬 0 📌 0

💥Introducing new paper: arxiv.org/pdf/2510.17715, QueST — train specialized generators to create challenging coding problems.

From Qwen3-8B-Base

✅ 100K synthetic problems: better than Qwen3-8B

✅ Combining with human written problems: matches DeepSeek-R1-671B

🧵(1/5)

21.10.2025 14:01 — 👍 4 🔁 3 💬 1 📌 0

![R code and output showing the new functionality](https://cdn.bsky.app/img/feed_thumbnail/plain/did:plc:lgxrkw556dtxkplwrj5rzlli/bafkreicf6zdyyjmaxrdfyq2sekokprym3c2o5atca6i2cczcx5zec4q4hm@jpeg)

R code and output showing the new functionality:

``` r
## pak::pkg_install("quentingronau/bridgesampling#44")
## see: https://cran.r-project.org/web/packages/bridgesampling/vignettes/bridgesampling_example_stan.html
library(bridgesampling)

### generate data ###
set.seed(12345)

mu <- 0
tau2 <- 0.5
sigma2 <- 1

n <- 20
theta <- rnorm(n, mu, sqrt(tau2))
y <- rnorm(n, theta, sqrt(sigma2))

### set prior parameters ###
mu0 <- 0
tau20 <- 1
alpha <- 1
beta <- 1

stancodeH0 <- 'data {
  int<lower=1> n; // number of observations
  vector[n] y; // observations
  real<lower=0> alpha;
  real<lower=0> beta;
  real<lower=0> sigma2;
}
parameters {
  real<lower=0> tau2; // group-level variance
  vector[n] theta; // participant effects
}
model {
  target += inv_gamma_lpdf(tau2 | alpha, beta);
  target += normal_lpdf(theta | 0, sqrt(tau2));
  target += normal_lpdf(y | theta, sqrt(sigma2));
}
'

tf <- withr::local_tempfile(fileext = ".stan")
writeLines(stancodeH0, tf)
mod <- cmdstanr::cmdstan_model(tf, quiet = TRUE, force_recompile = TRUE)

fitH0 <- mod$sample(
  data = list(y = y, n = n,
              alpha = alpha,
              beta = beta,
              sigma2 = sigma2),
  seed = 202,
  chains = 4,
  parallel_chains = 4,
  iter_warmup = 1000,
  iter_sampling = 50000,
  refresh = 0
)
#> Running MCMC with 4 parallel chains...
#>
#> Chain 3 finished in 0.8 seconds.
#> Chain 2 finished in 0.8 seconds.
#> Chain 4 finished in 0.8 seconds.
#> Chain 1 finished in 1.1 seconds.
#>
#> All 4 chains finished successfully.
#> Mean chain execution time: 0.9 seconds.
#> Total execution time: 1.2 seconds.

H0.bridge <- bridge_sampler(fitH0, silent = TRUE)
print(H0.bridge)
#> Bridge sampling estimate of the log marginal likelihood: -37.73301
#> Estimate obtained in 8 iteration(s) via method "normal".

#### Expected output:
## Bridge sampling estimate of the log marginal likelihood: -37.53183
## Estimate obtained in 5 iteration(s) via method "normal".
```

Exciting #rstats news for Bayesian model comparison: bridgesampling is finally ready to support cmdstanr, see screenshot. Help us by installing the development version of bridgesampling and letting us know if it works for your model(s): pak::pkg_install("quentingronau/bridgesampling#44")

02.09.2025 09:16 — 👍 28 🔁 9 💬 2 📌 1

We are done with the ninth Statistical Methods for Linguistics and Psychology (SMLP) summer school, Potsdam, Germany. The tenth edition is planned for 24-28 August 2026.

31.08.2025 08:00 — 👍 16 🔁 3 💬 0 📌 0

Honoured to receive two (!!) SAC highlights awards at #ACL2025 😁 (Conveniently placed on the same slide!)

With the amazing: @philipwitti.bsky.social, @gregorbachmann.bsky.social and @wegotlieb.bsky.social,

@cuiding.bsky.social, Giovanni Acampa, @alexwarstadt.bsky.social, @tamaregev.bsky.social

31.07.2025 07:41 — 👍 22 🔁 3 💬 0 📌 0

Sina Ahmadi receiving award.

Congratulations to @sinaahmadi.bsky.social and co-authors for receiving an ACL 2025 Outstanding Paper Award for PARME: Parallel Corpora for Low-Resourced Middle Eastern Languages!

aclanthology.org/2025.acl-lon...

30.07.2025 15:10 — 👍 14 🔁 6 💬 0 📌 0

Shravan Vasishth's Intro Bayes course home page

Next week onwards, I'm teaching a five-day introductory course on Bayesian Data Analysis in Gent. Newly recorded video lectures to accompany the course are now online: vasishth.github.io/LecturesIntr...

10.07.2025 19:32 — 👍 13 🔁 5 💬 0 📌 0

Terminology Translation Task

📣Take part in the 3rd Terminology shared task @WMT!📣

This year:

👉5 language pairs: EN->{ES, RU, DE, ZH},

👉2 tracks - sentence-level and doc-level translation,

👉authentic data from 2 domains: finance and IT!

www2.statmt.org/wmt25/termin...

Don't miss the opportunity: we only do this once every two years😏

06.06.2025 15:54 — 👍 3 🔁 2 💬 0 📌 2

Some of my colleagues are already very excited about this work!

04.06.2025 17:58 — 👍 2 🔁 0 💬 0 📌 0

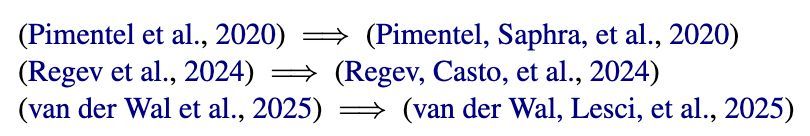

Inline citations with only first author name, or first two co-first author names.

If you're finishing your camera-ready for ACL or ICML and want to cite co-first authors more fairly, I just made a simple fix to do this! Just add $^*$ to the authors' names in your bibtex, and the citations should change :)

github.com/tpimentelms/...

29.05.2025 08:53 — 👍 85 🔁 23 💬 4 📌 0

👀 📖 Big news! 📖 👀

Happy to announce the release of the OneStop Eye Movements dataset! 🎉 🎉

OneStop is the product of over 6 years of experimental design, data collection and data curation.

github.com/lacclab/OneS...

29.05.2025 11:12 — 👍 9 🔁 3 💬 1 📌 0

I am so proud of this work. My first NLP experience. I learned a lot from this amazing team!!!!

14.05.2025 16:56 — 👍 3 🔁 0 💬 0 📌 0

The biggest advantage of MoTR is that it is much cheaper and faster than the alternatives while still providing very sensitive and accurate measurements. Our online data collection from 60 Russian speakers took less than 24 hours!!

07.03.2025 22:26 — 👍 1 🔁 0 💬 0 📌 0

Participants must move their mouse over the text to reveal the words, while their cursor movements are recorded (similar to how eye movements are recorded in eye tracking). See below for an example MoTR trial.

07.03.2025 22:26 — 👍 0 🔁 0 💬 1 📌 0

2- We use MoTR (Mouse Tracking for Reading) as a cheaper but reliable alternative to in-person eye tracking. MoTR is a new experimental tool in which the participant's screen is blurred except for a small region around the tip of the mouse pointer.

07.03.2025 22:22 — 👍 2 🔁 0 💬 1 📌 0

Computational sentence processing modeling | Computational psycholinguistics | PhD student at LLF, CNRS, Université Paris Cité | Currently visiting COLT, Universitat Pompeu Fabra, Barcelona, Spain

https://ninanusb.github.io/

PhD student in NLP and Cognitive Science. Interested in human-LM alignment, accessibility, model reliability, and drag race. He/him 🏳️🌈

Asst Prof UZH Psychology - quant methods, dynamic systems, human development, psychology. @CharlesDriverAU

Anthropologist - Bayesian modeling - science reform - cat and cooking content too - Director @ MPI for evolutionary anthropology https://www.eva.mpg.de/ecology/staff/richard-mcelreath/

Postdoc at Harvard and MIT.

psycholinguist, based in Prague, head of the ERCEL Lab (https://ercel.ff.cuni.cz/)

Research group @Ghent University.

Our research focuses on the cognitive science of learning and language.

Assoc prof @DeptEdYork. Language processing & acquisition, open research @irisdatabase.bsky.social | @oasisdatabase.bsky.social

Associate Professor at UCL Experimental Psychology; math psych & cognitive psychology; statistical and cognitive modelling in R; German migrant worker in UK

psycholinguistics @ Potsdam, Germany

https://d-paape.github.io

Assistant Professor at Bar-Ilan University

https://yanaiela.github.io/

PhD @ ETH Zürich | working on (multilingual) evaluation of NLP | on the academic job market | go #vegan | https://vilda.net

Lecturer@Queen's Uni Belfast; postdoc&PhD@Edinburgh Uni. I work on LLM post-training, multilingualism, machine translation, and financial AI.

PhD student at the University of Zurich. Trying to get to know what LLMs know🤔

PhD student at Cambridge University. Causality & language models. Passionate musician, professional debugger.

pietrolesci.github.io

DiLi lab at the Department of Computational Linguistics, University of Zurich. 👀🤖📖🧠💬 https://www.cl.uzh.ch/en/research-groups/digital-linguistics.html

The Association for Computational Linguistics (ACL) is a scientific and professional organization for people working on Natural Language Processing/Computational Linguistics.

Hash tags: #NLProc #ACL2026NLP

Studying language in biological brains and artificial ones at the Kempner Institute at Harvard University.

www.tuckute.com

Computational cognitive scientist, developing integrative models of language, perception, and action. Assistant Prof at NYU.

More info: https://www.nogsky.com/
