Really enjoyed chatting with @oxfordmathematics.bsky.social about AI in mathematics, where it can genuinely help, and what some of the limitations are.

13.02.2026 07:56 — 👍 0 🔁 0 💬 0 📌 0

Alternative Title: ‘A Timely Series of Talks on Time Series.’

Was too proud of this one so had to post it somewhere!

03.03.2025 17:54 — 👍 2 🔁 0 💬 0 📌 0

Just wrapped up my short course ‘Time Series Modelling: From Foundations to Frontiers’ at the Oxford Internet Institute @oii.ox.ac.uk

Huge thanks to @ammaox.bsky.social for the invitation—had some really engaging discussions!

Looking forward to being back at the OII soon.

03.03.2025 17:54 — 👍 1 🔁 0 💬 1 📌 0

Sonnet-3.7 has me vibe coding for the first time 🎧💻

Never written HTML, CSS, or JavaScript before, but I’ve created the website I’ve always wanted, featuring an optional command line interface ✨

BenWalker.co.uk

25.02.2025 13:57 — 👍 1 🔁 0 💬 0 📌 0

Looking forward to presenting this work at #NeurIPS2024!

Come find us on Thursday from 11-2 @ West Ballroom A-D #6907

09.12.2024 23:19 — 👍 3 🔁 0 💬 0 📌 0

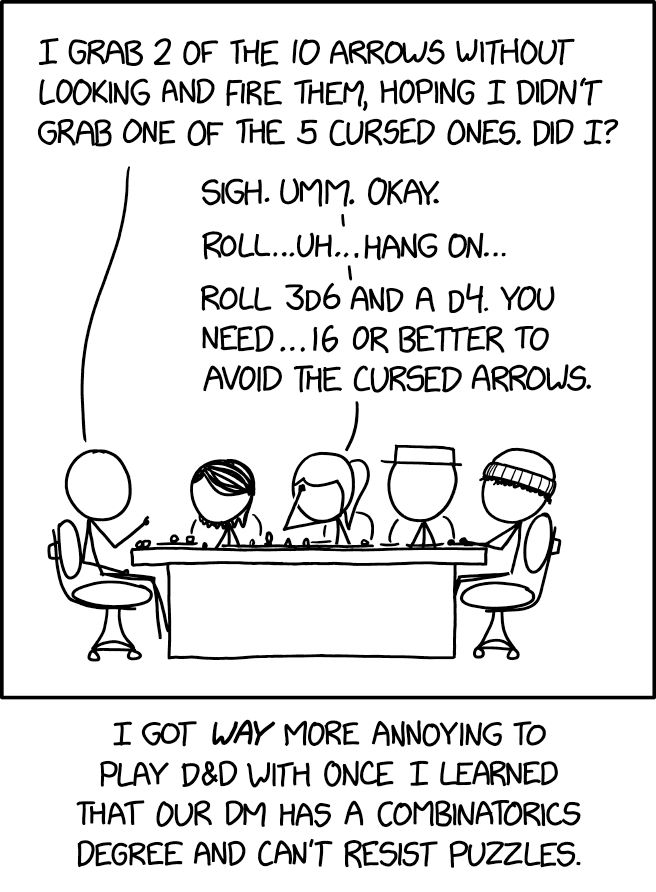

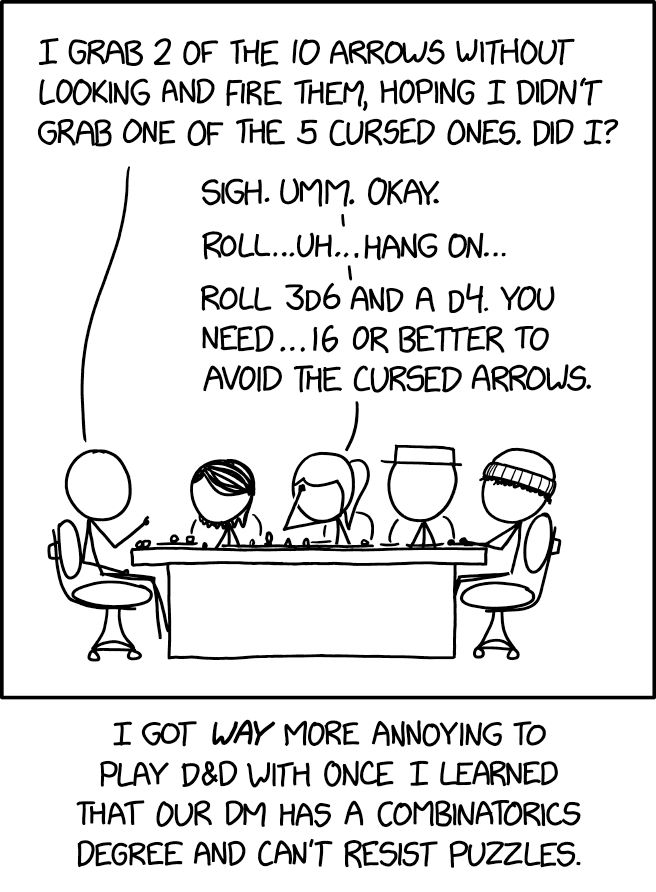

D&D Combinatorics xkcd.com/3015

23.11.2024 00:59 — 👍 26879 🔁 2156 💬 201 📌 109

Huge thanks to my incredible co-authors Nicola Cirone, Antonio Orvieto, Cristopher Salvi, and Terry Lyons!

#NeurIPS2024 #MachineLearning #DeepLearning #StateSpaceModels

🧵6/6

23.11.2024 09:04 — 👍 2 🔁 0 💬 0 📌 0

S4, Mamba, and Transformers need 4 blocks just to compose 12 permutations!

In contrast, using a dense state-transition matrix (IDS4/Linear CDE) or a non-linear state-transition (RNN) allows for state-tracking with only 1 layer.

🧵5/6

23.11.2024 09:04 — 👍 1 🔁 0 💬 1 📌 0
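For intuition on the one-layer claim, here is a minimal sketch (not the paper's code, and not its proof). It assumes a toy linear recurrence `h_t = A(x_t) h_{t-1}` in which each token selects a dense permutation matrix; the helper names `perm_matrix`, `matvec`, and `track` are made up for illustration. A diagonal matrix cannot be a non-identity permutation matrix, so this particular construction is only available with dense transitions.

```python
def perm_matrix(p):
    """Dense 0/1 matrix M with M[p[i]][i] = 1, so the entry at position i moves to position p[i]."""
    n = len(p)
    return [[1 if p[col] == row else 0 for col in range(n)] for row in range(n)]


def matvec(m, v):
    """Plain matrix-vector product on nested lists."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def track(tokens, n):
    """Run the single linear recurrence h_t = A(x_t) h_{t-1}.

    The state starts as [0, 1, ..., n-1]; after each token it records
    which original item currently sits in each position.
    """
    h = list(range(n))
    for p in tokens:
        h = matvec(perm_matrix(p), h)
    return h


swap_first_two = (1, 0, 2)
swap_last_two = (0, 2, 1)

forward = track([swap_first_two, swap_last_two], 3)
backward = track([swap_last_two, swap_first_two], 3)
print(forward, backward)  # the two orders give different final states
```

The final state depends on the order of the tokens, which is exactly what a state-tracking task requires the model to capture.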

An excellent empirical example of this limited capacity is the A5 benchmark, from “The Illusion of State in State-Space Models” by Merrill et al.

The benchmark tests state-tracking, a crucial ability for tasks that involve permutation composition, such as chess.

The results? 👇

🧵4/6

23.11.2024 09:04 — 👍 1 🔁 0 💬 1 📌 0
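To make "state-tracking" concrete, here is a rough sketch of the kind of task involved, assuming each token is an element of A5 (the even permutations of five items) and the target at each step is the running composition. The function names and the sequence length of 12 are illustrative assumptions, not the benchmark's actual data format.

```python
import random
from itertools import permutations


def is_even(p):
    """A permutation is even when it has an even number of inversions."""
    inversions = sum(
        1 for i in range(len(p)) for j in range(i + 1, len(p)) if p[i] > p[j]
    )
    return inversions % 2 == 0


# A5: the 60 even permutations of 5 items.
A5 = [p for p in permutations(range(5)) if is_even(p)]


def compose(p, q):
    """Return the permutation that applies q first, then p."""
    return tuple(p[q[i]] for i in range(len(q)))


def make_example(length, rng):
    """Sample a token sequence and the running product after each token."""
    tokens = [rng.choice(A5) for _ in range(length)]
    state = tuple(range(5))  # identity permutation
    targets = []
    for g in tokens:
        state = compose(g, state)
        targets.append(state)
    return tokens, targets


tokens, targets = make_example(12, random.Random(0))
print(len(A5), targets[-1])
```

A model solves the task if, after reading each token, it can output the corresponding entry of `targets`.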

We rigorously show that Mamba’s selectivity mechanism boosts expressiveness.

However, we also show that using a diagonal state-transition matrix, while drastically reducing computational cost, significantly limits the model's capacity.

🧵3/6

23.11.2024 09:04 — 👍 1 🔁 0 💬 1 📌 0

In this paper, we introduce a unified framework for state-space models using Rough Path Theory, providing a rigorous theoretical foundation for why the Mamba recurrence outperforms other SSMs, and precisely where the expressiveness of these models may be limited.

🧵2/6

23.11.2024 09:04 — 👍 1 🔁 0 💬 1 📌 0

Theoretical Foundations of Deep Selective State-Space Models

Structured state-space models (SSMs) such as S4, stemming from the seminal work of Gu et al., are gaining popularity as effective approaches for modeling sequential data. Deep SSMs demonstrate outstan...

Want to know why Mamba beats other state-space models—and where it falls short?

Then check out our #NeurIPS 2024 paper: "Theoretical Foundations of Deep Selective State-Space Models."

🔗 Read the paper: arxiv.org/abs/2402.19047

💻 Access the code: github.com/Benjamin-Walke…

🧵1/6

23.11.2024 09:04 — 👍 21 🔁 2 💬 2 📌 1

CS/ML PhD research in high-dimensional time-series @helsinki.fi

Before: 7y of C++/JS/VR/AR/ML at Varjo, Yle, Reaktor, Automattic ...

After dark: synthesizers & 3D gfx

Exploring how technology is transforming the way we live, work and govern.

Postdoc @ UCLA StarAI Lab, PhD in CS from Oxford. Probabilistic ML, Tractable Models, Causality

Assistant Professor (Presidential Young Professor, PYP) at the National University of Singapore (NUS).

https://liuanji.github.io/

jon

https://www.patreon.com/c/SecretBase

https://www.youtube.com/SecretBaseSBN

LLMs and ratings at lmarena.ai

Esports stuff for fun:

https://cthorrez.github.io/riix/riix.html

https://huggingface.co/datasets/EsportsBench/EsportsBench

I do SciML + open source!

🧪 ML+proteins @ http://Cradle.bio

📚 Neural ODEs: http://arxiv.org/abs/2202.02435

🤖 JAX ecosystem: http://github.com/patrick-kidger

🧑💻 Prev. Google, Oxford

📍 Zürich, Switzerland

Senior Lecturer, Visual Computing, University of Bath

🔗 https://vinaypn.github.io/

Lecturer in Maths & Stats at Bristol. Interested in probabilistic + numerical computation, statistical modelling + inference. (he / him).

Homepage: https://sites.google.com/view/sp-monte-carlo

Seminar: https://sites.google.com/view/monte-carlo-semina

Director Data Science Institute @UWMadison, Professor of Physics,

EiC @MLSTjournal. Physics, stats/ML/AI, open science.

DeepMind Professor of AI @Oxford

Scientific Director @Aithyra

Chief Scientist @VantAI

ML Lead @ProjectCETI

geometric deep learning, graph neural networks, generative models, molecular design, proteins, bio AI, 🐎 🎶

Python, Boston, mathy fun, juggling, autism parenting. https://nedbat.com

Senior Researcher in Health Futures at Microsoft Research. Previously PhD in deep generative models at Durham University.

Chief Researcher at Araya, Tokyo. #ALife, #AI, embodied and enactive #cognition. Information, control and applied category theory for cognitive science.

https://manuelbaltieri.com/

Science, data, random useless knowledge

Interested in AI developments and climate change research

A business analyst at heart who enjoys delving into AI, ML, data engineering, data science, data analytics, and modeling. My views are my own.

You can also find me on Threads: @sung.kim.mw

Machine Learning, {Org, Med, Comp} Chem, RL/Planning, AI-assisted Scientific Discovery & Creativity, Music. ELLIS Scholar. Team Lead at Microsoft Research AI for Science. 2xDad