Can we improve the road signs near border accesses? People make a wrong turn too often, and now there's a steeper price to pay:

www.wgrz.com/article/news...

@rpchaves.bsky.social

"Words, words, words." - Hamlet, Act II, scene ii. (he/him)


All code and more plots and regressions at: github.com/RuiPChaves/L...

01.01.2026 13:53 — 👍 0 🔁 0 💬 0 📌 0

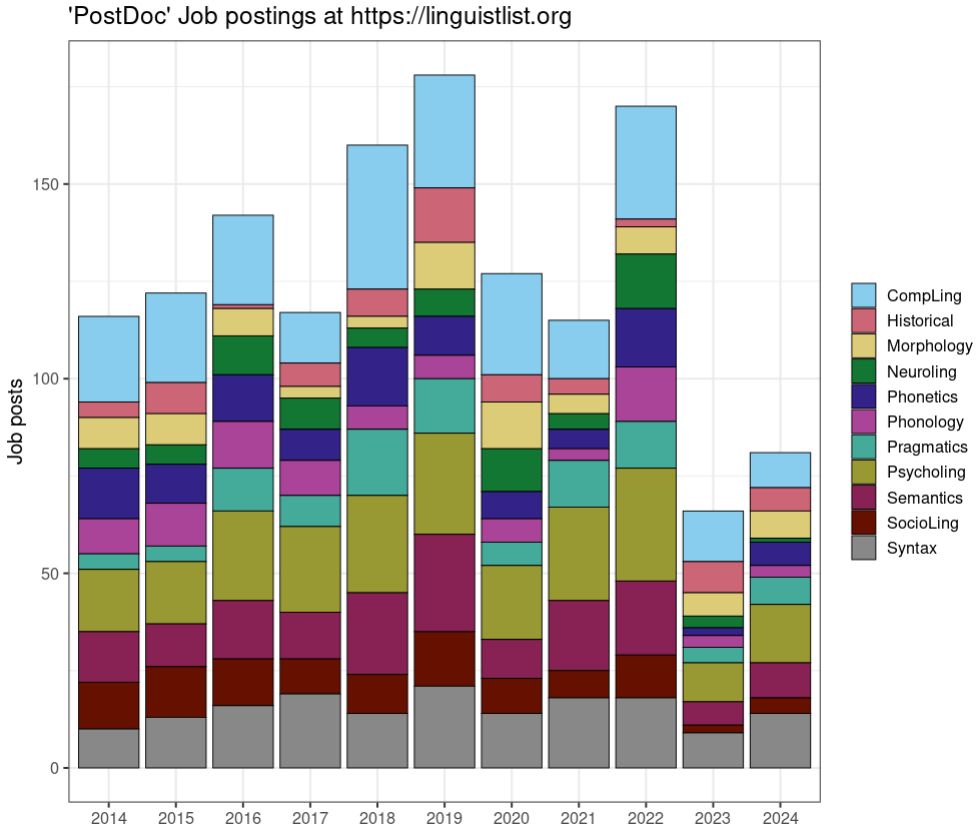

The number of Linguistics job postings on the Linguist List has dropped significantly. Please note that the numbers reported below are inflated because some job postings mention multiple areas of study (e.g. phonology + phonetics, or computational linguistics + semantics).

01.01.2026 13:53 — 👍 2 🔁 0 💬 1 📌 0

Putting my Asimov hat on, maybe one day tutor systems will be very good, at least for some people. But I can’t imagine being a kid and enjoying learning from a screen rather than from a person, in a spontaneous classroom environment. Most of us are social creatures.

30.10.2025 12:55 — 👍 3 🔁 0 💬 1 📌 0

The deeper the model goes into a topic the worse it gets (plain wrong or hallucinates refs), and I suspect it depends a lot on the field. Including when it is asked to create exercises. For basic things probably fine, but how can a student tell when the model strays from the truth?

30.10.2025 10:47 — 👍 15 🔁 0 💬 1 📌 0

420k malicious tokens (0.00016% of the total training tokens) were enough to ruin the model, even after fine-tuning. This means the public can attack these models by creating and uploading bad data to GitHub, Stack Overflow, blogs, etc.

14.10.2025 19:14 — 👍 1 🔁 0 💬 0 📌 0

Yet another example of how LLMs are not robust (a key argument against symbolic AI): a small fraction of 'bad' data can compromise models regardless of dataset or model size. Data poisoning does not scale with model size. arxiv.org/pdf/2510.07192

14.10.2025 19:05 — 👍 1 🔁 0 💬 1 📌 0

At Starbucks I order a Short coffee. I suspect most people don’t even know that exists…

09.07.2025 00:15 — 👍 1 🔁 0 💬 0 📌 0

There is mounting evidence that LLMs rely heavily on brute-force and shallow heuristics to achieve high accuracy, despite some studies (though not all) finding evidence for sophisticated latent representations. Add another to the bunch:

www.arxiv.org/abs/2506.16678

Coolest? It's a tie between Braitenberg's "Vehicles" and Hofstadter's "GEB".

He has never read a book in his life.

16.06.2025 00:31 — 👍 2 🔁 0 💬 0 📌 0

I feel sick.

11.06.2025 23:50 — 👍 3 🔁 0 💬 0 📌 0

We even got real close to curing HIV: www.iavi.org/press-releas.... But this uses mRNA, and that has come to be a dirty word for these people. It shouldn't. It's a triumph of modern molecular biology.

10.06.2025 00:06 — 👍 1 🔁 0 💬 1 📌 0

Wow, yes. An amazing glimpse of the LLM Lie Machine at work. For anyone whose students are being tempted by LLMs, sharing this could be very helpful in showing how it lies, and how it fails.

03.06.2025 16:00 — 👍 271 🔁 137 💬 12 📌 4

I'm so sorry. Beyond words.

02.06.2025 10:28 — 👍 1 🔁 0 💬 0 📌 0

"So here’s one place my compass is pointing me to: pushing back against bullshit. Frequently. Publicly. This frightens me because I have enough to deal with...I’m a people pleaser. I love being loved. But we are in terrifying times and all I have is my compass." - @jimmycoan.bsky.social

17.05.2025 15:07 — 👍 49 🔁 9 💬 1 📌 1

Bresnan (1991) journals.linguisticsociety.org/proceedings/...

08.05.2025 22:36 — 👍 2 🔁 2 💬 0 📌 0

Register now for the LSA Linguistic Institute in Eugene, OR: over 80 courses over two 2.5-week terms (July 7-22 and July 24-August 8). I'm teaching Constructions & the Grammar of Context with E Francis in session 1! center.uoregon.edu/LSA/2025/

11.03.2025 19:22 — 👍 3 🔁 2 💬 0 📌 0

Interesting! I've no problem with that. This is crafted as a cartoon, so it is read top to bottom.

05.03.2025 22:32 — 👍 1 🔁 0 💬 0 📌 0

Thank you for doing this work, it is very interesting! Challenge: I suspect if you asked o1 to create an X-bar grammar sufficiently large to parse a couple of pages of English it would not do a good job.

I've annotated the o1 trees here: drive.google.com/file/d/1we0S... I think these systems *are* amazing, and getting better and better, but have a long way to go. Major issues: inconsistent analyses (suggesting that no single grammar exists), wrong analyses, partial trees, and gibberish trees (only 2).

06.02.2025 16:55 — 👍 0 🔁 0 💬 2 📌 0

O1's results are impressive, but they are not as good as those of a professional human annotator, at least for these sentences.

06.02.2025 15:09 — 👍 1 🔁 0 💬 1 📌 0

This is a good one, but the other outputs from o1 are not good, and often mutually inconsistent. E.g. in the "This town's only road's potholes' depths are driving me insane" example the VP is too flat, and the "only road" NP is wrong and inconsistent with the tree for "a medium-sized sturdy lamp" in the next S.

06.02.2025 15:08 — 👍 1 🔁 0 💬 1 📌 0

Maybe they are assuming AI will bring about those breakthroughs? What happens when that bubble eventually pops?

06.02.2025 00:37 — 👍 1 🔁 0 💬 0 📌 0

ps - Should have used NDL instead of HOL.

07.01.2025 13:22 — 👍 0 🔁 0 💬 0 📌 0

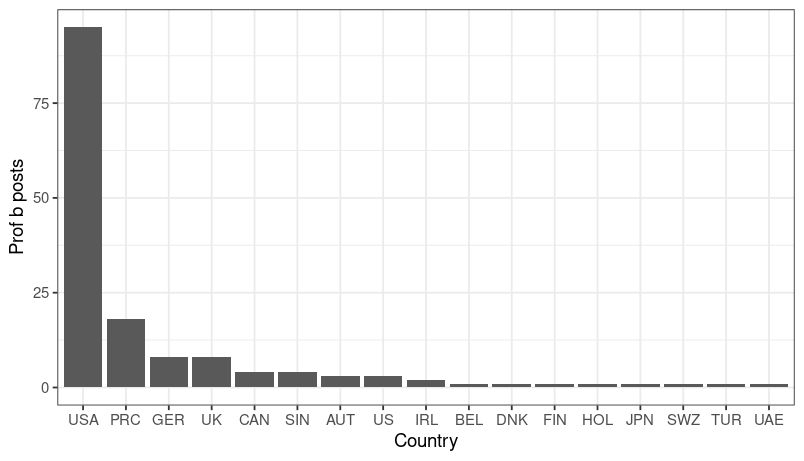

The LL mostly consists of US posts. Here are the 2024 posts for "Professor":

06.01.2025 22:15 — 👍 2 🔁 0 💬 1 📌 0

That's a great idea. Maybe mine ProQuest, LingBuzz, and similar venues?

05.01.2025 15:41 — 👍 2 🔁 0 💬 1 📌 0

Keep in mind that there are hardly any CL posts that don't mention another discipline, so that blue bar mostly consists of posts from a few of the other bars. Similarly, some posts mention both phonetics and phonology, or syntax and semantics, etc. The raw post count is even smaller.

05.01.2025 13:17 — 👍 2 🔁 0 💬 1 📌 0

01.01.2025 15:02 — 👍 2 🔁 0 💬 2 📌 0