In this amazing multidisciplinary collaboration, we report our early experience with the @openclaw-x.bsky.social ->

23.02.2026 23:32 — 👍 40 🔁 21 💬 1 📌 9

📋 Why it matters: interpretable, controllable compression, with students that learn how the teacher thinks. We also see faster, cleaner training dynamics than the baselines. Preprint + details: arxiv.org/abs/2509.25002 (4/4)

30.09.2025 23:32 — 👍 1 🔁 0 💬 0 📌 0

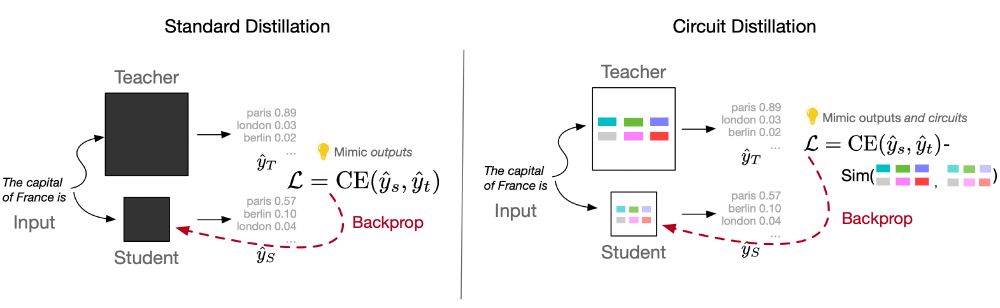

📘 How it works (high level): identify the teacher’s task circuit --> find functionally analogous student components via ablation --> align their internals during training. Outcome: the student learns the same computation, not just the outputs. (3/4)

30.09.2025 23:32 — 👍 0 🔁 0 💬 1 📌 0
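
A toy sketch of the "find functionally analogous student components via ablation" step from the post above. It is not the paper's procedure. The head names, the additive scoring model, and the greedy matching rule are all assumptions made for illustration. The idea shown is only this: measure how much the task score drops when each head is removed, then pair each teacher circuit head with the student head whose relative drop is closest.

```python
def make_score(contrib):
    """Hypothetical task score: sum of the contributions of the heads left intact."""
    return lambda ablated: sum(v for h, v in contrib.items() if h not in ablated)


def ablation_impact(score_fn, heads):
    """Score drop caused by ablating each head on its own."""
    baseline = score_fn(frozenset())
    return {h: baseline - score_fn(frozenset([h])) for h in heads}


def match_heads(teacher_impact, student_impact):
    """Greedily pair teacher heads with the unused student head of closest normalized impact."""
    def normalize(impact):
        top = max(abs(v) for v in impact.values()) or 1.0
        return {h: v / top for h, v in impact.items()}

    t, s = normalize(teacher_impact), normalize(student_impact)
    pairs, free = {}, set(s)
    # Visit the most important teacher heads first so they get first pick.
    for th in sorted(t, key=t.get, reverse=True):
        if not free:
            break
        best = min(sorted(free), key=lambda sh: abs(s[sh] - t[th]))
        pairs[th] = best
        free.remove(best)
    return pairs


# Made-up per-head contributions (assumed values, not results from the paper).
teacher_contrib = {"T0.1": 0.6, "T2.3": 0.3}
student_contrib = {"S0.0": 0.05, "S1.2": 0.5, "S1.3": 0.2, "S2.0": 0.02}

teacher_impact = ablation_impact(make_score(teacher_contrib), teacher_contrib)
student_impact = ablation_impact(make_score(student_contrib), student_contrib)
pairs = match_heads(teacher_impact, student_impact)
print(pairs)
```

In a real setting the score function would be a task metric computed with the chosen head masked out, and the matched pairs would then be the components whose internals are aligned during training.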

🍎 On Entity Tracking and Theory of Mind, a student that updates only ~11–15% of attention heads inherits the teacher’s capability and closes much of the gap; targeted transfer over brute-force fine-tuning. (2/4)

30.09.2025 23:32 — 👍 0 🔁 0 💬 1 📌 0

🔊 New work w/ @silvioamir.bsky.social & @byron.bsky.social! We show you can distill a model’s mechanism, not just its answers -- teaching a small LM to run the same circuit as a larger teacher model. We call it Circuit Distillation. (1/4)

30.09.2025 23:32 — 👍 5 🔁 0 💬 1 📌 1

5️⃣ Attribution techniques could help trace distillation practices, ensuring compliance with model usage policies & improving transparency in AI systems. [6/6]

🔗 Dive into the full details: arxiv.org/abs/2502.06659

4️⃣ Our analysis spans summarization, question answering, and instruction following, using models like Llama, Mistral, and Gemma as teachers. Across tasks, PoS templates consistently outperformed n-grams in distinguishing teachers 📊 [5/6]

11.02.2025 17:16 — 👍 0 🔁 0 💬 1 📌 0

3️⃣ But here’s the twist: Syntactic patterns (like Part-of-Speech templates) do retain strong teacher signals! Students unconsciously mimic structural patterns from their teacher, leaving behind an identifiable trace 🧩 [4/6]

11.02.2025 17:16 — 👍 0 🔁 0 💬 1 📌 0
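
To make the Part-of-Speech template idea concrete, here is a minimal sketch. It assumes the texts are already PoS-tagged and uses a simple frequency-overlap heuristic. The tag sequences, teacher names, and scoring rule are hypothetical, and this is not the method or data from the paper. It only illustrates how PoS n-gram templates can act as a structural fingerprint.

```python
from collections import Counter


def pos_templates(tags, n=3):
    """All contiguous PoS n-grams (templates) in one tagged sentence."""
    return [tuple(tags[i:i + n]) for i in range(len(tags) - n + 1)]


def template_profile(tagged_sentences, n=3):
    """Relative frequency of each PoS template over a set of sentences."""
    counts = Counter()
    for tags in tagged_sentences:
        counts.update(pos_templates(tags, n))
    total = sum(counts.values())
    return {t: c / total for t, c in counts.items()} if total else {}


def overlap(profile_a, profile_b):
    """Histogram intersection: 1.0 for identical profiles, 0.0 for disjoint ones."""
    return sum(min(p, profile_b.get(t, 0.0)) for t, p in profile_a.items())


def attribute(student_sents, teacher_sents, n=3):
    """Return the candidate teacher whose template profile overlaps most with the student's."""
    student = template_profile(student_sents, n)
    scores = {name: overlap(student, template_profile(sents, n))
              for name, sents in teacher_sents.items()}
    return max(scores, key=scores.get), scores


# Hypothetical pre-tagged outputs (Universal PoS tags), invented for this example.
student_outputs = [["DET", "NOUN", "VERB", "DET", "NOUN"]]
teacher_outputs = {
    "teacher_a": [["DET", "NOUN", "VERB", "DET", "ADJ", "NOUN"]],
    "teacher_b": [["PRON", "VERB", "ADV", "ADJ", "PUNCT"]],
}
best, scores = attribute(student_outputs, teacher_outputs)
print(best, scores)
```

With real data the tags would come from a PoS tagger, and the template counts could be used as features for a classifier instead of the overlap score used here.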

2️⃣ Simple similarity metrics like BERTScore fail to attribute a student to its teacher. Even perplexity under the teacher model isn’t enough to reliably identify the original teacher. Shallow lexical overlap is just not a strong fingerprint 🔍 [3/6]

11.02.2025 17:16 — 👍 0 🔁 0 💬 1 📌 0

1️⃣ Model distillation transfers knowledge from a large teacher model to a smaller student model. But does the fine-tuned student reveal clues in its outputs about its origins? [2/6]

11.02.2025 17:16 — 👍 0 🔁 0 💬 1 📌 0

📢 Can we trace a small distilled model back to its teacher? 🤔 New work (w/ @chantalsh.bsky.social, @silvioamir.bsky.social & @byron.bsky.social) finds some footprints left by LLMs in distillation! [1/6]

🔗 Full paper: arxiv.org/abs/2502.06659